Bad Romance – the memento edition

Posted by Paul in code, events, remix, The Echo Nest on March 18, 2010

At SXSW I gave a talk about how computers can help make remixing music easier. For the talk I created a few fun remixes. Here’s one of my favorites. It’s a beat-reversed version of Lady Gaga’s Bad Romance. The code to create it is here: vreverse.py
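A beat-reversed remix keeps each beat playing forwards but plays the beats in reverse order, so the song runs backwards while still sounding musical. The sketch below is not vreverse.py. It is a minimal illustration that assumes the audio is a flat list of mono samples and that the beat start positions (as sample indices) are already known, for example from a beat analysis. The function name `reverse_beats` is hypothetical.

```python
def reverse_beats(samples, beat_starts):
    """Return samples with the beats played in reverse order.

    samples: flat list of mono audio samples (assumed representation)
    beat_starts: sorted sample indices where each beat begins (hypothetical
                 input, e.g. produced by a beat tracker)
    """
    if not beat_starts:
        return list(samples)  # no beats found: nothing to reorder
    # Each beat runs until the next one starts; the last runs to the end.
    ends = list(beat_starts[1:]) + [len(samples)]
    beats = [samples[s:e] for s, e in zip(beat_starts, ends)]
    # Audio before the first beat (a pickup or silence) stays at the front.
    out = list(samples[:beat_starts[0]])
    for beat in reversed(beats):
        out.extend(beat)  # the beat itself still plays forwards
    return out
```

For instance, `reverse_beats(list(range(6)), [0, 2, 4])` returns `[4, 5, 2, 3, 0, 1]`: the three two-sample beats swap order, but each beat is left intact.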

How Music Information Retrieval can help you get the girl

Posted by Paul in music information retrieval, startup on March 17, 2010

Parag Chordia from Georgia Tech and his colleagues have spun out a music-tech company called khush. Khush makes cutting-edge artificial intelligence music applications. Their first app is LaDiDa, an auto-accompaniment application: you sing a cappella into your iPhone and LaDiDa plays it back with a full musical accompaniment, something like Songsmith (but with good music).

I had a chance to chat with Parag, along with Khush CEO Prerna Gupta (she’s the dream girl in the video, btw), and Alex Rae (programmer+music geek). These folks are fired up about khush and LaDiDa. It’s great to see another innovative company come out of the MIR world. I think they will be going places.

Unofficial Artist Guide to SXSW

Posted by Paul in events, Music, recommendation, The Echo Nest on March 4, 2010

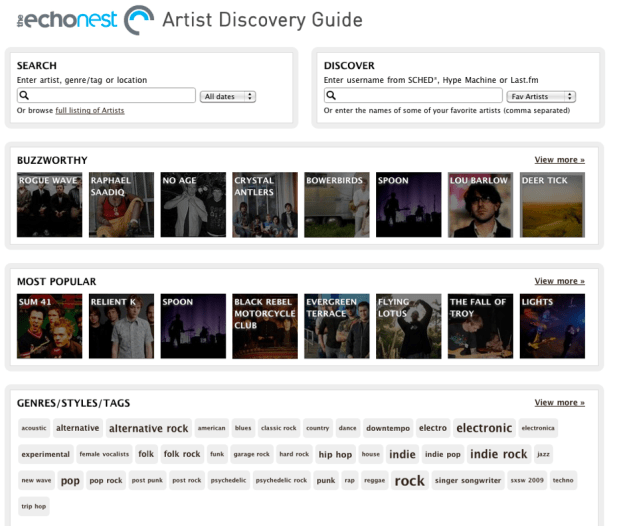

I’m excited! Next week I travel to Austin for a week-long computer+music geek-fest at SXSW. A big part of SXSW is the music – there are nearly 2,000 different artists playing at SXSW this year. But that presents a problem – there are so many bands going to SXSW (many I’ve never heard of) that I find it very hard to figure out which bands I should go and see. I need a tool to help me sift through all of the artists – a tool that will help me decide which artists I should add to my schedule and which ones I should skip. I’m not the only one who was daunted by the large artist list. Taylor McKnight, founder of SCHED*, was thinking the same thing. He wanted to give his users a better way to plan their time at SXSW. And so over a couple of weekends Taylor built (with a little backend support from us) The Unofficial Artist Discovery Guide to SXSW.

The Unofficial Artist Discovery Guide to SXSW is a tool that allows you to explore the many artists attending this year’s SXSW. It lets you search for artists, browse by popularity, music style, ‘buzzworthiness’, or similarity to your favorite artists – and it will make recommendations for you based on your music taste (using your Last.fm, SCHED* or Hype Machine accounts). The Artist Guide supplies enough context (bios, images, music, tag clouds, links) to help you decide if you might like an artist.

Here’s the guide:

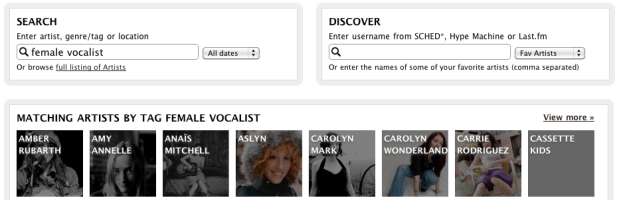

Here’s a quick tour of some of the things you can do with the guide. First off, you can Search for artists by name, genre/tag or location. This helps you find music when you know what you are looking for.

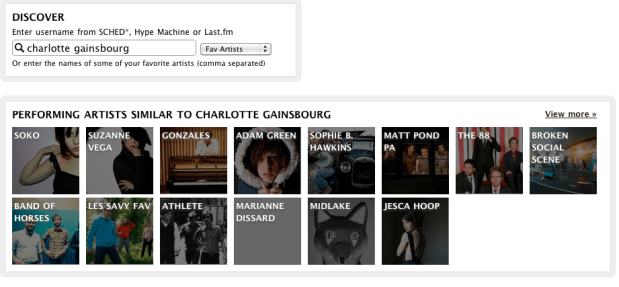

However, you may not always be sure what you are looking for – that’s where you use Discover. This gives you recommendations based on the music you already like. Type in the name of a few artists (even artists that are not playing at SXSW) or your SCHED*, Hype Machine or Last.fm user name, and ‘Discover’ will give you a set of recommendations for SXSW artists based on your music taste. For example, I’ve been listening to Charlotte Gainsbourg lately so I can use the artist guide to help me find SXSW artists that I might like:
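One way to picture what Discover does: score every SXSW artist by how close it is to the artists you typed in, then show the best matches. The post doesn’t describe the Echo Nest’s actual similarity data, so the sketch below swaps in a simple stand-in, Jaccard overlap between tag sets. The tag data, the artist names and the `discover` function are all hypothetical.

```python
def jaccard(a, b):
    """Overlap between two tag sets, from 0.0 (nothing shared) to 1.0 (identical)."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def discover(seed_tags, lineup_tags, n=3):
    """Rank lineup artists by their best tag overlap with any seed artist.

    seed_tags: {artist: set of tags} for the listener's favorites; they do not
               have to be playing the festival
    lineup_tags: {artist: set of tags} for artists on the festival lineup
    """
    scores = {}
    for artist, tags in lineup_tags.items():
        if artist in seed_tags:
            continue  # don't recommend an artist the listener already named
        scores[artist] = max((jaccard(tags, s) for s in seed_tags.values()),
                             default=0.0)
    # Highest score first; ties broken alphabetically so output is stable.
    ranked = sorted(scores, key=lambda a: (-scores[a], a))
    return ranked[:n]
```

With one seed tagged `{"french", "pop", "indie"}` and lineup artists tagged `{"french", "pop"}`, `{"metal"}` and `{"indie", "folk"}`, the scores are 2/3, 0 and 1/4, so the first and third artists come back first.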

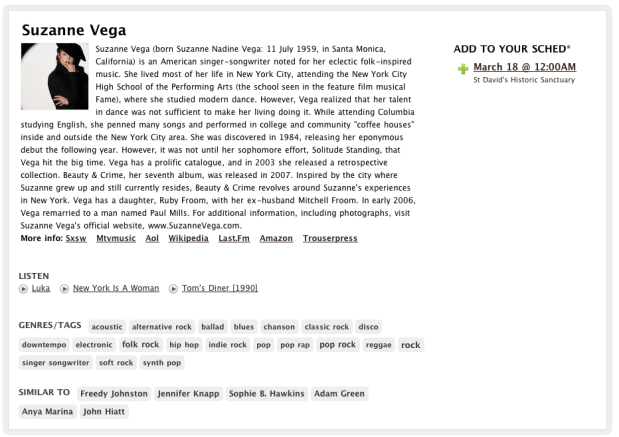

If I see an artist that looks interesting I can drill down and get more info about the artist:

From here I can read the artist bio, listen to some audio, explore other similar SXSW artists or add the event to my SCHED* schedule.

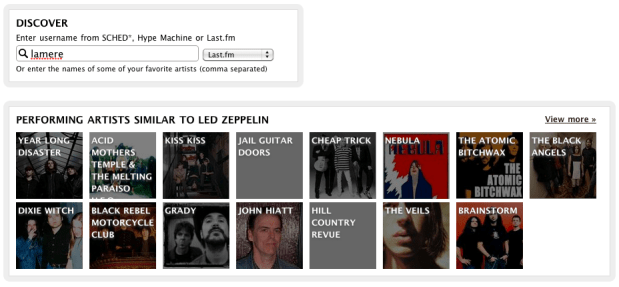

I use Last.fm quite a bit, so I can enter my Last.fm name and get SXSW recommendations based upon my Last.fm top artists. The artist guide tries to mix things up a little bit so if I don’t like the recommendations I see, I can just ask again and I can get a different set. Here are some recommendations based on my recent listening at Last.fm:
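Getting “a different set” when you ask again can be done by sampling from the top of a ranked list rather than always returning its first few entries. This is a guess at the behavior, not a description of how the guide actually does it. `mixed_recommendations` and its `pool` parameter are hypothetical.

```python
import random


def mixed_recommendations(ranked, n=3, pool=10, rng=None):
    """Pick n distinct artists at random from the top `pool` of a ranked list.

    Asking again (with a different random state) gives a different, but still
    relevant, set. `pool` is a hypothetical knob: larger means more variety.
    """
    rng = rng or random.Random()
    candidates = ranked[:pool]
    # sample() never repeats an item and needs n <= len(candidates).
    return rng.sample(candidates, min(n, len(candidates)))
```

Drawing only from the top `pool` candidates keeps every pick reasonably relevant, while the random draw supplies the variety. Passing a seeded `random.Random` makes the output repeatable for testing.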

If you’ve been using the wonderful SCHED* to keep track of your SXSW calendar you can use the guide to get recommendations based on artists that you’ve already added to your SXSW calendar.

In addition to search and discovery, the guide gives you a number of different ways to browse the SXSW Artist space. You can browse by ‘buzzworthy’ artists – these are artists that are getting the most buzz on the web:

Or the most well-known artists:

You can browse by the style of music via a tag cloud:

And by venue:

Building the guide was pretty straightforward. Taylor used the Echo Nest APIs to get the detailed artist data such as familiarity, popularity, artist bios, links, images, tags and audio. The only data that was not available at the Echo Nest was the venue and schedule info, which was provided by Arkadiy (one of Taylor’s colleagues). Even though SXSW artists can be extremely long tail (some don’t even have Myspace pages), the Echo Nest was able to provide really good coverage for these sets (there was coverage for over 95% of the artists). Still, there are a few gaps and I suspect there may be a few errors in the data (my favorite wrong image is for the band Abe Vigoda). If you are in a band that is going to SXSW and you see that we have some of your info wrong, send me an email (paul@echonest.com) and I’ll make it right.
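The guide combines two data sources: per-artist data from the Echo Nest and venue/schedule data from a separate source. The sketch below joins two such tables by artist name and reports the fraction of scheduled shows that found artist data, which is the kind of coverage figure quoted above. The dictionaries and field names are hypothetical, and matching on a trimmed, lowercased name is a simplifying assumption. Real artist-name matching is much messier.

```python
def merge_lineup(schedule, artist_data):
    """Join schedule entries with artist metadata and measure coverage.

    schedule: list of dicts with hypothetical keys "artist", "venue", "time"
    artist_data: {lowercased artist name: metadata dict}
    Returns (merged entries, fraction of entries that found metadata).
    """
    merged, hits = [], 0
    for show in schedule:
        info = artist_data.get(show["artist"].strip().lower())
        if info is not None:
            hits += 1
        # Keep every show; missing metadata stays None (one of the "gaps").
        merged.append({**show, "info": info})
    coverage = hits / len(schedule) if schedule else 0.0
    return merged, coverage
```

Keeping unmatched shows in the output, rather than dropping them, means a band with no metadata still appears on the schedule and the gaps are easy to find and fix.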

We are excited to see this Artist Discovery Guide built on top of the Echo Nest. It’s a great showcase for the Echo Nest developer platform and working with Taylor was great. He’s one of these hyper-creative, energetic types – smart, gets things done and full of new ideas. Taylor may be adding a few more features to the guide before SXSW, so stay tuned and we’ll keep you posted on new developments.

NodeJS and DonkDJ

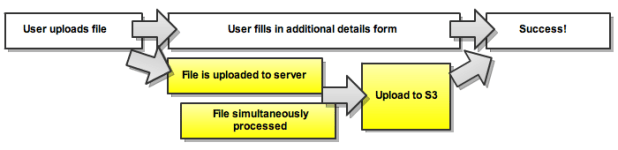

Brian points me to an interesting post by RF Watson (creator of DonkDJ) about how he’s using NodeJS to solve concurrency problems in his audio-uploading web apps. Worth a read.

How much is a song play worth?

Posted by Paul in Music, research, The Echo Nest on March 1, 2010

Over the last 15 years or so, music listening has moved online. Now instead of putting a record on the turntable or a CD in the player, we fire up a music application like iTunes, Pandora or Spotify to listen to music. One interesting side-effect of this transition to online music is that there is now a lot of data about music listening behavior. Sites like Last.fm can keep track of every song that you listen to and offer you all sorts of statistics about your play history. Applications like iTunes can phone home your detailed play history. Listeners leave footprints on P2P networks, in search logs and every time they hit the play button on hundreds of music sites. People are blogging, tweeting and IMing about the concerts they attend, and the songs that they love (and hate). Every day, gigabytes of data about our listening habits are generated on the web.

With this new data come the entrepreneurs who sample the data, churn through it and offer it to those who are trying to figure out how best to market new music. Companies like Big Champagne, Bandmetrics, Musicmetric, Next Big Sound and The Echo Nest, among others, offer windows into this vast set of music data. However, there’s still a gap in our understanding of how to interpret this data. Yes, we have vast amounts of data about music listening on the web, but that doesn’t mean we know how to interpret this data, or how to tie it to the music marketplace. How closely is a track play on a computer in London related to a sale of that track in a traditional record store in Iowa? How do searches on a P2P network for a new album relate to its chart position? Is a track illegally made available for free on a music blog hurting or helping music sales? How much does a Twitter mention of my song matter? There are many unanswered questions about how online music activity correlates with the more traditional ways of measuring artist success, such as music sales and chart position. These are important questions to ask, yet they have been impossible to answer because the people who have the new data (data from the online music world) generally don’t talk to the people who own the old data, and vice versa.

We think that understanding this relationship is key, and so we are working to answer these questions via a research consortium between The Echo Nest, Yahoo! Research and UMG unit Island Def Jam. The consortium brings together three key elements. Island Def Jam is contributing deep and detailed sales data for its music properties, sales data that is not usually released to the public. Yahoo! Research brings detailed search data (with millions and millions of queries for music) along with deep expertise in analyzing and understanding what search can predict. The Echo Nest brings our understanding of Internet music activity such as playcount data, friend and fan counts, blog mentions, reviews, mp3 posts and p2p activity, as well as second-generation metrics such as sentiment analysis, audio feature analysis and listener demographics. By combining the traditional sales data with the online music activity and search data, the consortium hopes to develop a predictive model for music by discovering correlations between Internet music activity and market reaction. With this model, we would be able to quantify the relative importance of a good review on a popular music website in terms of its predicted effect on sales or popularity. We would be able to pinpoint and rank the influential areas and platforms on the music web where artists should spend more of their time and energy to reach a bigger fanbase. Combining anonymously observable metrics with internal sales and trend data will give keen insight into the true effects of the Internet music world.
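At its simplest, discovering correlations between Internet music activity and market reaction means comparing two time series, such as weekly plays and weekly sales for one track. The sketch below computes the Pearson correlation coefficient, with an optional lag that pairs activity in one week with sales some weeks later, to ask whether online activity leads sales. It is only an illustration; the consortium’s actual model isn’t described here, and `pearson` and `lagged` are hypothetical helper names.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of the same length (at least 2 points)")
    mx = sum(xs) / len(xs)
    my = sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    if sx == 0 or sy == 0:
        raise ValueError("a constant series has no defined correlation")
    return cov / (sx * sy)


def lagged(activity, sales, lag):
    """Pair activity in week t with sales in week t + lag (lag >= 0)."""
    if lag < 0 or lag >= len(activity):
        raise ValueError("lag must be between 0 and len(activity) - 1")
    return activity[: len(activity) - lag], sales[lag:]
```

Calling `pearson(*lagged(plays, sales, 1))` on two weekly series asks whether last week’s plays track this week’s sales. A strong correlation on its own doesn’t show that plays cause sales, since both may be driven by a third factor, which is one reason to bring several independent data sources together.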

There are some big brains working on building this model: Echo Nest co-founder Brian Whitman (He’s a Doctor!) and the team from Yahoo! Research that authored the paper “What Can Search Predict”, which looks closely at how to use query volume to forecast opening box-office revenue for feature films. The Yahoo! Research team includes a stellar lineup: Yahoo! Principal Research Scientist Duncan Watts, whose research on the early-rater effect is a must-read for anyone interested in recommendation and discovery; Yahoo! Principal Research Scientist David Pennock, who focuses on algorithmic economics (be sure to read Greg Linden’s take on Seung-Taek Park and David’s paper Applying Collaborative Filtering Techniques to Movie Search for Better Ranking and Browsing); Jake Hofman, an expert in machine learning and data-driven modeling of complex systems; Research Scientist Sharad Goel (see his interesting paper Anatomy of the Long Tail: Ordinary People with Extraordinary Tastes); and Research Scientist Sébastien Lahaie, an expert in marketplace design and reputation systems (I’ve just added his paper Applying Learning Algorithms to Preference Elicitation to my reading list). This is a top-notch team.

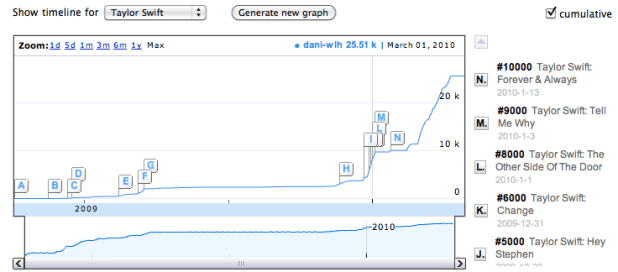

I look forward to the day when we have a predictive model for music that will help us understand how this:

affects this:

LyricWiki + Musicbrainz == ‘awesome’

Two of my favorite public resources for music data, LyricWiki and MusicBrainz, are now working together: LyricWiki and MusicBrainz integration! Congrats Sean and Robert!

Here comes the antiphon

Posted by Paul in Music, remix, The Echo Nest on February 25, 2010

I’m gearing up for the SXSW panel on remix I’m giving in a couple of weeks. I thought I should veer away from ‘science experiments’ and try to create some remixes that sound musical. Here’s one where I’ve used remix to apply a little bit of a pre-echo to ‘Here Comes the Sun’. It gives it a little bit of a call and answer feel:

The core (choir?) code is thus:

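```python
# Excerpt from the remix: self.input is the loaded track, self.bar holds
# its bars, out collects the output audio, and audio is the Remix audio module.
last = None
for bar in self.bar:
    cur_data = self.input[bar]
    if last:
        # Blend the previous bar into the current one for the echo effect
        last_data = self.input[last]
        mixed_data = audio.mix(cur_data, last_data, mix=.3)
        out.append(mixed_data)
    else:
        # The first bar has no predecessor, so it plays unchanged
        out.append(cur_data)
    last = bar
```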
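The same idea can be tried without the Remix library. The sketch below treats each bar as a plain list of samples of equal length and blends each bar with the bar before it using a simple linear weight. The `mix` and `echo_previous_bar` helpers and the weighting scheme are assumptions for illustration; they are not necessarily how Remix’s `audio.mix` weights its two inputs.

```python
def mix(a, b, weight):
    """Linear blend: `weight` of a plus (1 - weight) of b.

    Assumes equal-length sample lists; zip stops at the shorter one.
    """
    return [weight * x + (1 - weight) * y for x, y in zip(a, b)]


def echo_previous_bar(bars, weight=0.7):
    """Return new bars where each bar after the first has the previous bar mixed in.

    bars: list of bars, each a list of samples (hypothetical representation)
    weight: share of the current bar in the blend (0.7 keeps it dominant)
    """
    out = []
    last = None
    for bar in bars:
        if last is not None:
            out.append(mix(bar, last, weight))
        else:
            out.append(list(bar))  # the first bar has nothing to echo
        last = bar  # echo the original bar, not the already-mixed one
    return out
```

Echoing the original previous bar, as the Remix excerpt also does, keeps the effect to a single repeat instead of letting echoes pile up bar after bar.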

Echo Nest Client Library for the Android Platform

Posted by Paul in code, Music, The Echo Nest on February 24, 2010

The Echo Nest is participating in the annual mobdev contest for the Mobile Application Development (mobdev) course at Olin College, offered by Mark L. Chang. Already, our participation is bearing fruit. Ilari Shafer, one of our course assistants, created a version of the Echo Nest Java client library that runs on Android. You can fetch it here: echo-nest-android-java-api [zip].

I spent a few hours yesterday talking to the mobdev class. The students had lots of great questions and lots of really interesting ideas on how to use the Echo Nest APIs to build interesting mobile apps. I can’t wait to see what they build in 10 days.

Organize your online music with ExtensionFM

On Friday I installed ExtensionFM, a Chrome extension that helps you manage your online music listening. Dan Kantor, the creator, has a little video that shows you how it works:

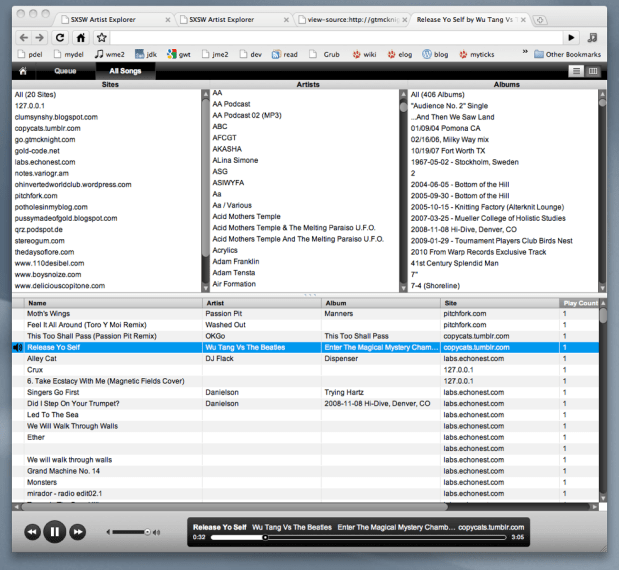

The idea behind ExtensionFM is very simple. When I visit a site that has music, ExtensionFM notices and squirrels away all of the links to the music into an iTunes-like player:

It does all of this work in the background without me having to do anything. After a weekend of browsing, ExtensionFM found music on 20 sites from over 300 artists and over 400 albums, for a total of over 1,000 tracks. ExtensionFM remembers the sites where the music was found and keeps track of when the links die. Note that it doesn’t actually copy music onto your computer; ExtensionFM just makes it easier to play music that is already out there.

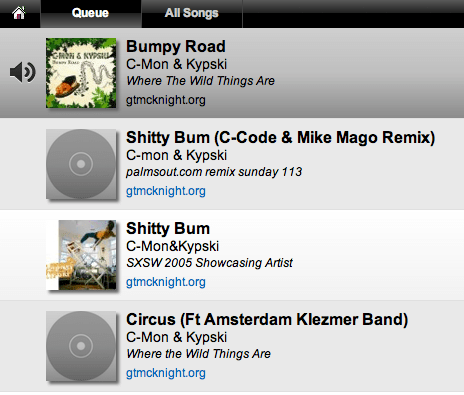

There are many nice touches in ExtensionFM. It keeps a play queue, and when you visit a music site you can easily add music to the queue.

You can easily edit the play queue, adding and removing tracks.

ExtensionFM also augments a music-laden site with music player buttons. So a site that looks like this:

is transformed into something like this:

Dan Kantor says he’ll soon be adding an option to disable this reformatting for those who don’t like their web pages tampered with.

Unfortunately, ExtensionFM doesn’t always find music on a web page. Certain sites (Hype Machine, for example) don’t expose MP3 links, so ExtensionFM can’t find the music. Dan says that right now ExtensionFM only grabs links that end in .mp3 or .ogg. It also works on Tumblr, since Tumblr offers a very easy API to get a user’s audio posts. Support for SoundCloud embeds is coming soon as well, since SoundCloud also offers an easy API. So the best way for developers to make sure their songs work with ExtensionFM is to expose the audio links in the HTML, or to use Tumblr or SoundCloud.
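ExtensionFM itself is a Chrome extension and its code isn’t described here. The sketch below only illustrates the rule Dan describes (keep links that end in .mp3 or .ogg), using Python’s standard `html.parser`. It also shows why a site that never puts the audio URL in a plain link is invisible to this approach. `AudioLinkFinder` and `find_audio_links` are hypothetical names.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin, urlparse

AUDIO_EXTENSIONS = (".mp3", ".ogg")


class AudioLinkFinder(HTMLParser):
    """Collect <a href> targets whose path ends in a playable audio extension."""

    def __init__(self, base_url):
        super().__init__()
        self.base_url = base_url
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        if not href:
            return
        url = urljoin(self.base_url, href)  # resolve relative links
        # Check only the path, so "song.mp3?dl=1" still counts.
        if urlparse(url).path.lower().endswith(AUDIO_EXTENSIONS):
            self.links.append(url)


def find_audio_links(html, base_url):
    """Return absolute URLs of .mp3/.ogg links found in an HTML page."""
    finder = AudioLinkFinder(base_url)
    finder.feed(html)
    finder.close()
    return finder.links
```

A page whose player loads its audio through a script, rather than an `<a href="....mp3">` link, yields an empty list here, which matches the limitation described above.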

ExtensionFM is still in pre-release mode, but if you are lucky enough to get a release code, get the app, install it (it’s very easy to install), and start organizing your online music listening.