MUSIC EMOTION RECOGNITION: A STATE OF THE ART REVIEW

Youngmoo E. Kim, Erik M. Schmidt, Raymond Migneco, Brandon G. Morton, Patrick Richardson, Jeffrey Scott, Jacquelin A. Speck, and Douglas Turnbull

From the paper: Recognizing musical mood remains a challenging problem primarily due to the inherent ambiguities of human emotions. Though research on this topic is not as mature as some other Music-IR tasks, it is clear that rapid progress is being made. In the past 5 years, the performance of automated systems for music emotion recognition using a wide range of annotated and content-based features (and multi-modal feature combinations) have advanced significantly. As with many Music-IR tasks open problems remain at all levels, from emotional representations and annotation methods to feature selection and machine learning.

While significant advances have been made, the most accurate systems thus far achieve predictions through large-scale machine learning algorithms operating on vast feature sets, sometimes spanning multiple domains, applied to relatively short musical selections. Oftentimes, this approach reveals little in terms of the underlying forces driving the perception of musical emotion (e.g., varying contributions of features) and, in particular, how emotions in music change over time. In the future, we anticipate further collaborations between Music-IR researchers, psychologists, and neuroscientists, which may lead to a greater understanding of not only mood within music, but human emotions in general. Furthermore, it is clear that individuals perceive emotions within music differently. Given the multiple existing approaches for modeling the ambiguities of musical mood, a truly personalized system would likely need to incorporate some level of individual profiling to adjust its predictions.

This paper has provided a broad survey of the state of the art, highlighting many promising directions for further research. As attention to this problem increases, it is our hope that the progress of this research will continue to accelerate in the near future.

My notes:

MIREX (Music Information Retrieval Evaluation eXchange) performance on mood classification has held steady for the last few years. Most mood classification systems in MIREX are just adapted genre classifiers.

Categorical vs. dimensional

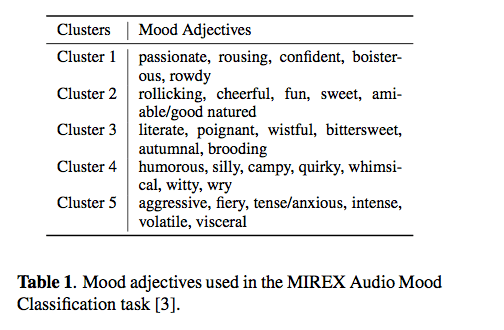

Categorical: MIREX groups moods into five clusters:

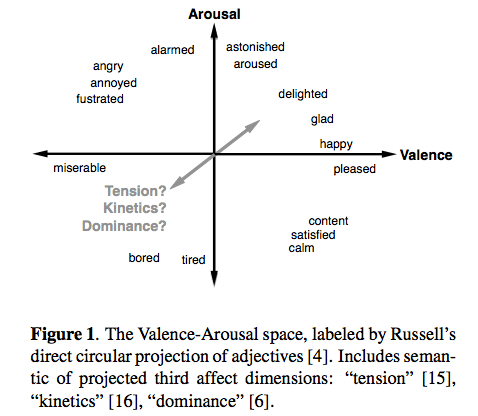

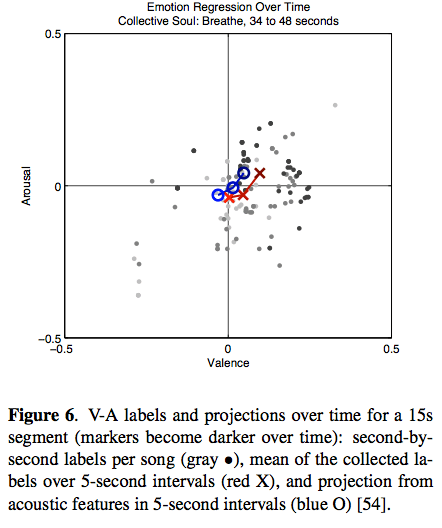
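To make the categorical approach concrete, here is a minimal sketch that maps free-text mood tags onto the five MIREX clusters by counting matching adjectives. The adjective lists are the ones commonly cited for the MIREX Audio Mood Classification task, with slash-joined entries such as "tense/anxious" split into separate words; check them against the official task description before relying on them. `MIREX_CLUSTERS` and `cluster_for_tags` are hypothetical names used only for illustration.

```python
from collections import Counter
from typing import Iterable, Optional

# Adjectives for the five MIREX mood clusters, as commonly cited
# (assumption: verify against the MIREX task page).
MIREX_CLUSTERS = {
    1: {"passionate", "rousing", "confident", "boisterous", "rowdy"},
    2: {"rollicking", "cheerful", "fun", "sweet", "amiable", "good natured"},
    3: {"literate", "poignant", "wistful", "bittersweet", "autumnal", "brooding"},
    4: {"humorous", "silly", "campy", "quirky", "whimsical", "witty", "wry"},
    5: {"aggressive", "fiery", "tense", "anxious", "intense", "volatile", "visceral"},
}


def cluster_for_tags(tags: Iterable[str]) -> Optional[int]:
    """Return the cluster whose adjectives match the most tags, or None if none match."""
    votes = Counter()
    for tag in tags:
        tag = tag.strip().lower()
        for cluster, words in MIREX_CLUSTERS.items():
            if tag in words:
                votes[cluster] += 1
    if not votes:
        return None
    # Most votes wins; on a tie, prefer the lower cluster number.
    return min(votes, key=lambda c: (-votes[c], c))


print(cluster_for_tags(["Cheerful", "fun", "brooding"]))  # 2
```

Hard cluster assignment like this is one reason the categorical view struggles: a song tagged both "wistful" and "fiery" is forced into a single bucket.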

Dimensional: the ever-popular valence-arousal space, sometimes called the Thayer mood model:

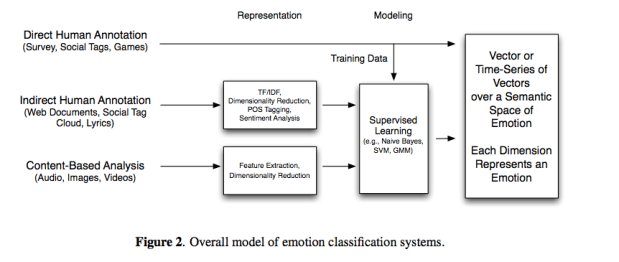
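The dimensional model replaces discrete labels with a point in a continuous plane. The sketch below assumes valence and arousal are each scaled to [-1, 1] and reduces a point to one of the four quadrants. The quadrant names are illustrative and not taken from the paper, and `va_quadrant` is a hypothetical helper.

```python
def va_quadrant(valence: float, arousal: float) -> str:
    """Map a valence-arousal point (each assumed in [-1, 1]) to an illustrative quadrant label."""
    if valence >= 0 and arousal >= 0:
        return "happy/excited"   # positive valence, high arousal
    if valence < 0 and arousal >= 0:
        return "angry/anxious"   # negative valence, high arousal
    if valence < 0:
        return "sad/depressed"   # negative valence, low arousal
    return "calm/content"        # positive valence, low arousal


print(va_quadrant(0.7, 0.5))    # happy/excited
print(va_quadrant(0.4, -0.6))   # calm/content
```

Reducing a point to a quadrant discards the fine-grained position, which is exactly the information the dimensional approach is meant to preserve. A dimensional system would usually regress valence and arousal directly.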

Typical emotion classification system

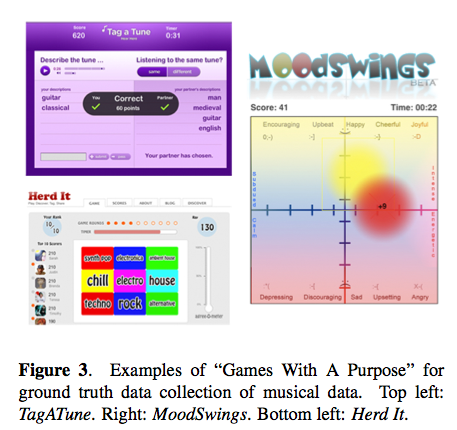
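A typical system reduces to three steps: extract a feature vector per clip, train a model on labeled clips, then predict labels for new clips. The sketch below uses a deliberately simple nearest-centroid classifier with z-score standardization as a stand-in for whatever learner a real system would use. The two features and their values are made up for illustration, and `NearestCentroidMood` is a hypothetical class.

```python
import numpy as np


class NearestCentroidMood:
    """Toy mood classifier: standardize features, then pick the label whose
    class mean (centroid) is closest in Euclidean distance."""

    def fit(self, X, y):
        X = np.asarray(X, dtype=float)
        y = np.asarray(y)
        # Standardize each feature so large-valued features don't dominate distances.
        self.mean_ = X.mean(axis=0)
        self.std_ = X.std(axis=0)
        self.std_[self.std_ == 0] = 1.0
        Z = (X - self.mean_) / self.std_
        self.labels_ = np.unique(y)
        self.centroids_ = np.stack([Z[y == label].mean(axis=0) for label in self.labels_])
        return self

    def predict(self, X):
        Z = (np.atleast_2d(np.asarray(X, dtype=float)) - self.mean_) / self.std_
        # Distance from every sample to every centroid: shape (n_samples, n_labels).
        dists = np.linalg.norm(Z[:, None, :] - self.centroids_[None, :, :], axis=2)
        return self.labels_[dists.argmin(axis=1)]


# Hypothetical per-clip features: [mean RMS energy, tempo in BPM].
X_train = [[0.1, 70], [0.2, 80], [0.8, 140], [0.9, 150]]
y_train = ["calm", "calm", "energetic", "energetic"]
model = NearestCentroidMood().fit(X_train, y_train)
print(model.predict([[0.15, 75], [0.85, 145]]))  # ['calm' 'energetic']
```

Swapping in a stronger learner changes only the model step. The surrounding pipeline stays the same, which helps explain why genre classifiers adapt so easily to mood.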

Ground Truth

A big challenge is obtaining ground truth for training a recognition system. Common sources include Last.fm tags, games with a purpose (GWAP), All Music Guide (AMG) labels, and web documents.
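Whatever the source, labels from several people or several tags usually have to be merged into a single ground-truth label per clip. One common approach is a majority vote that discards clips lacking a clear majority. The sketch below assumes each clip comes with a list of categorical labels. `aggregate_labels` and its `min_agreement` threshold are hypothetical.

```python
from collections import Counter
from typing import Dict, List, Optional


def aggregate_labels(annotations: Dict[str, List[str]],
                     min_agreement: float = 0.5) -> Dict[str, Optional[str]]:
    """Keep each clip's most common label only if its share of votes exceeds
    min_agreement; otherwise mark the clip as ambiguous (None)."""
    truth: Dict[str, Optional[str]] = {}
    for clip, labels in annotations.items():
        if not labels:
            truth[clip] = None
            continue
        label, count = Counter(labels).most_common(1)[0]
        truth[clip] = label if count / len(labels) > min_agreement else None
    return truth


print(aggregate_labels({"a": ["happy", "happy", "sad"], "b": ["sad", "happy"]}))
# {'a': 'happy', 'b': None}
```

Dropping ambiguous clips yields cleaner training labels, but it also discards the disagreement that makes mood hard in the first place.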

Lyrics – lyrics alone have not been very successful for mood classification.

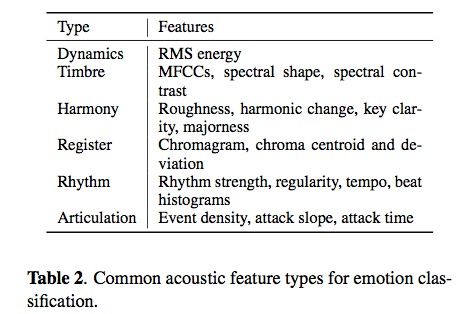
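A minimal lyrics-only approach scores each word of a song against a valence lexicon and averages the matches. The toy lexicon below is invented for illustration, since real work uses much larger curated word lists. `lyric_valence` is a hypothetical helper.

```python
import re

# Hypothetical toy lexicon: word -> valence score in [-1, 1].
VALENCE_LEXICON = {"love": 0.8, "happy": 0.9, "sun": 0.5,
                   "cry": -0.7, "alone": -0.6, "dark": -0.5}


def lyric_valence(lyrics: str) -> float:
    """Average lexicon valence of the words in the lyrics (0.0 if none match)."""
    words = re.findall(r"[a-z']+", lyrics.lower())
    scores = [VALENCE_LEXICON[w] for w in words if w in VALENCE_LEXICON]
    return sum(scores) / len(scores) if scores else 0.0


print(round(lyric_valence("I cry alone in the dark"), 2))  # -0.6
```

A word-level score like this ignores negation ("not happy"), irony, and the music itself. That limitation is one plausible reason lyrics alone underperform.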

Content-based methods – typical features for mood:
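As a rough illustration of content-based features, the sketch below uses NumPy to compute three common low-level audio descriptors per frame: RMS energy, zero-crossing rate, and spectral centroid. It then summarizes a clip by each descriptor's mean and standard deviation. These descriptors were chosen for brevity and are not necessarily the features surveyed in the paper. The frame and hop sizes are assumptions, and `frame_features` and `clip_vector` are hypothetical names.

```python
import numpy as np


def frame_features(samples, sr, frame_len=2048, hop=1024):
    """Return an array of shape (n_frames, 3): RMS energy, zero-crossing rate,
    and spectral centroid in Hz for each analysis frame."""
    x = np.asarray(samples, dtype=float)
    window = np.hanning(frame_len)
    freqs = np.fft.rfftfreq(frame_len, d=1.0 / sr)
    rows = []
    for start in range(0, len(x) - frame_len + 1, hop):
        frame = x[start:start + frame_len]
        # Loudness proxy: root-mean-square amplitude of the frame.
        rms = np.sqrt(np.mean(frame ** 2))
        # Noisiness proxy: fraction of adjacent sample pairs whose sign changes.
        zcr = np.mean(np.abs(np.diff(np.sign(frame))) > 0)
        # Brightness proxy: magnitude-weighted mean frequency of the windowed spectrum.
        mag = np.abs(np.fft.rfft(frame * window))
        total = mag.sum()
        centroid = float(np.sum(freqs * mag) / total) if total > 0 else 0.0
        rows.append([rms, zcr, centroid])
    return np.array(rows).reshape(-1, 3)


def clip_vector(samples, sr):
    """Summarize a clip as the per-feature mean and standard deviation over frames."""
    feats = frame_features(samples, sr)
    return np.concatenate([feats.mean(axis=0), feats.std(axis=0)])


sr = 22050
t = np.arange(sr) / sr
tone = 0.5 * np.sin(2 * np.pi * 440 * t)  # one second of a 440 Hz test tone
print(clip_vector(tone, sr).shape)  # (6,)
```

A six-value vector like this is the kind of input the classification step consumes. Practical systems typically add many more descriptors, such as timbral, harmonic, and rhythmic features.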

Youngmoo’s latest work (with Erik Schmidt) models emotion as a distribution and tracks how that distribution changes over time.
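One simple way to describe emotion as a time-varying distribution uses valence-arousal ratings from several annotators at each time step. Each step is summarized by a mean and a 2×2 covariance, in effect a Gaussian in V-A space. This sketch only summarizes annotations. It is not a reproduction of Schmidt and Kim's prediction method, and `va_distribution_over_time` is a hypothetical helper.

```python
import numpy as np


def va_distribution_over_time(ratings):
    """Summarize V-A ratings as a Gaussian per time step.

    ratings: array of shape (T, N, 2) holding N annotators' (valence, arousal)
    ratings at each of T time steps; N must be at least 2.
    Returns (means, covs) with shapes (T, 2) and (T, 2, 2).
    """
    r = np.asarray(ratings, dtype=float)
    if r.ndim != 3 or r.shape[2] != 2 or r.shape[1] < 2:
        raise ValueError("expected shape (T, N, 2) with N >= 2")
    means = r.mean(axis=1)
    centered = r - means[:, None, :]
    # Unbiased sample covariance for each time step (divides by N - 1).
    covs = np.einsum("tni,tnj->tij", centered, centered) / (r.shape[1] - 1)
    return means, covs


ratings = [[[0, 0], [1, 1], [2, 2]],           # t = 0: annotators disagree
           [[0.5, -0.5], [0.5, -0.5], [0.5, -0.5]]]  # t = 1: full agreement
means, covs = va_distribution_over_time(ratings)
print(means)  # per-step mean (valence, arousal)
```

A drifting mean traces the song's emotional trajectory, while the covariance shows how much listeners disagree at each moment.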

Hybrid systems

- Audio + Lyrics – modest to large improvement

- Audio + Tags – good improvement

- Audio + Images – using album art to derive associations with mood
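Hybrid systems can combine modalities in two ways. Early fusion concatenates the feature vectors before training. Late fusion combines the outputs of separate per-modality classifiers. The sketch below shows both in their simplest form. The equal default weight and the function names `early_fusion` and `late_fusion` are assumptions, and the notes don't say which strategy the surveyed systems used.

```python
import numpy as np


def early_fusion(audio_features, other_features):
    """Concatenate per-clip feature vectors from two modalities into one vector."""
    return np.concatenate([np.ravel(audio_features), np.ravel(other_features)])


def late_fusion(prob_audio, prob_other, weight_audio=0.5):
    """Weighted average of two class-probability vectors, renormalized to sum to 1."""
    pa = np.asarray(prob_audio, dtype=float)
    po = np.asarray(prob_other, dtype=float)
    if pa.shape != po.shape:
        raise ValueError("probability vectors must have the same shape")
    if not 0.0 <= weight_audio <= 1.0:
        raise ValueError("weight_audio must be in [0, 1]")
    fused = weight_audio * pa + (1.0 - weight_audio) * po
    return fused / fused.sum(axis=-1, keepdims=True)


# Audio favors class 0, lyrics favor class 1; the fused result leans to class 1.
fused = late_fusion([0.6, 0.3, 0.1], [0.2, 0.7, 0.1])
print(int(np.argmax(fused)))  # 1
```

Late fusion lets each modality keep its own model and lets the weight be tuned per dataset. Early fusion lets a single model learn cross-modal interactions, at the cost of a larger feature space.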

Conclusions – Mood recognition hasn’t improved much in recent years, probably because most systems are not designed specifically for mood.

This was a great overview of the state-of-the-art. I’d be interested in hearing a much longer version of this talk. The paper and the references will be a great resource for anyone who’s interested in pursuing mood classification.