Posts Tagged The Echo Nest

The Echo Nest Is Joining Spotify: What It Means To Me, and To Developers

Posted by Paul in Music, The Echo Nest on March 6, 2014

I love writing music apps and playing with music data. How much? Every weekend I wake up at 6am and spend a long morning writing a new music app or playing with music data. That’s after a work week at The Echo Nest, where I spend much of my time writing music apps and playing with music data. The holiday break is even more fun, because I get to spend a whole week working on a longer project like Six Degrees of Black Sabbath or the 3D Music Maze.

That’s why I love working for The Echo Nest. We have built an amazing open API, which anyone can use, for free, to create music apps. With this API, you can create phenomenal music discovery apps like Boil the Frog that take you on a journey between any two artists, or Music Popcorn, which guides you through hundreds of music genres, from the arcane to the mainstream. It also powers apps that create new music, like Girl Talk in a Box, which lets you play with your favorite song in the browser, or The Swinger, which creates a Swing version of any song:

One of my roles at The Echo Nest is to be the developer evangelist for our API — to teach developers what our API can do, and get them excited about building things with it. This is the best job ever. I get to go to all parts of the world to attend events like Music Hack Day and show a new crop of developers what they can do with The Echo Nest API. In that regard, it’s also the easiest job ever, because when I show apps that developers have built with The Echo Nest API, like The Bonhamizer and The Infinite Jukebox, or show how to create a Pandora-like radio experience with just a few lines of Python, developers can’t help but get excited about our API.

At The Echo Nest we’ve built what I think is the best, most complete music API — and now our API is about to get so much better.

Today, we’ve announced that The Echo Nest has been acquired by Spotify, the award-winning digital music service. As part of Spotify, The Echo Nest will use our deep understanding of music to give Spotify listeners the best possible personalized music listening experience.

Spotify has long been committed to fostering a music app developer ecosystem. They have a number of APIs for creating apps on the web, on mobile devices, and within the Spotify application. They’ve been a sponsor and active participant in Music Hack Days for years now. Developers love Spotify, because it makes it easy to add music to an app without any licensing fuss. It has an incredibly huge music catalog that is available in countries around the world.

Spotify and The Echo Nest APIs already work well together. The Echo Nest already knows Spotify’s music catalog. All of our artist, song, and playlisting APIs can return Spotify IDs, making it easy to build a smart app that plays music from Spotify. Developers have been building Spotify / Echo Nest apps for years. If you go to a Music Hack Day, one of the most common phrases you’ll hear is, “This Spotify app uses The Echo Nest API”.

I am incredibly excited about becoming part of Spotify, especially because of what it means for The Echo Nest API. First, to be clear, The Echo Nest API isn’t going to go away. We are committed to continuing to support our open API. Second, although we haven’t sorted through all the details, you can imagine that there’s a whole lot of data that Spotify has that we can potentially use to enhance our API. Perhaps the first visible change you’ll see in The Echo Nest API as a result of our becoming part of Spotify is that we will be able to keep our view of the Spotify ID space in our Project Rosetta Stone ID mapping layer incredibly fresh. No more lag between when an item appears in Spotify and when its ID appears in The Echo Nest.

The Echo Nest and the Spotify APIs are complementary. Spotify’s API provides everything a developer needs to play music and facilitate interaction with the listener, while The Echo Nest provides deep data on the music itself — what it sounds like, what people are saying about it, similar music its fans should listen to, and too much more to mention here. These two APIs together provide everything you need to create just about any music app you can think of.

I am a longtime fan of Spotify. I’ve been following them now for over seven years. I first blogged about Spotify way back in January of 2007 while they were still in stealth mode. I blogged about the Spotify haircuts, and their serious demeanor:

I blogged about the Spotify application when it was released to private beta (“Woah – Spotify is pretty cool”), and continued to blog about them every time they added another cool feature.

Last month, on a very cold and snowy day in Boston, I sat across a conference table from a bunch of really smart folks with Swedish accents. As they described their vision of a music platform, it became clear to me that they share the same obsession that we do with trying to find the best way to bring fans and music together.

Together, we can create the best music listening experience in history.

I’m totally excited to be working for Spotify now. Still, as with any new beginning, there’s an ending as well. We’ve worked hard, for many years, to build The Echo Nest — and as anyone who’s spent time here knows, we have a very special culture of people totally obsessed with building the best music platform. Of course this will continue, but as we become part of Spotify things will necessarily change, and the time when The Echo Nest was a scrappy music startup when no one in their right mind wanted to be a music startup will be just a sweet memory. To me, this is a bit like graduation day in high school — it is exciting to be moving on to bigger things, but also sad to say goodbye to that crazy time in life.

There is a big difference: When I graduated from high school and went off to college, I had to say goodbye to all my friends, but now as I go off to the college of Spotify, all my friends and classmates from high school are coming with me. How exciting is that!

Learn about a new genre every day

Posted by Paul in code, genre, playlist, The Echo Nest on January 16, 2014

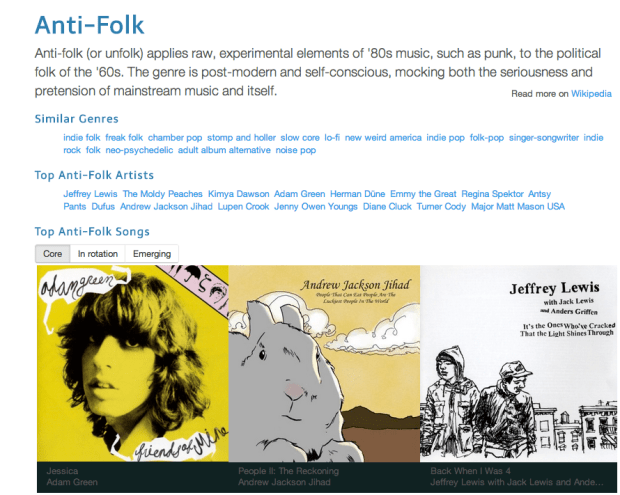

The Echo Nest knows about 800 genres of music (and that number is growing all the time). Among those 800 genres are ones that you already know about, like ‘jazz’, ‘rock’ and ‘classical’. But there are also hundreds of genres that you’ve probably never heard of, like Filthstep, Dangdut or Skweee. Perhaps the best way to explore the genre space is via Every Noise at Once (built by Echo Nest genre-master Glenn McDonald). Every Noise at Once shows you the whole genre space, allowing you to explore the rich and varied universe of music. However, Every Noise at Once can be like drinking Champagne from a firehose – there’s just too much to take in all at once (it is, after all, every noise – at once). If you’d like to take a slower and more measured approach to learning about new music genres, you may be interested in Genre-A-Day.

Genre-A-Day is a web app that presents a new genre every day. Genre-A-Day tells you about the genre, shows you some representative artists for the genre, lets you explore similar genres, and lets you listen to music in the genre.

If you spend a few minutes every day reading about and listening to a new genre, after a few months you’ll be a much more well-rounded music listener, and after a few years your knowledge of genres will rival most musicologists’.

An easy way to make Genre-A-Day part of your daily routine is to follow @GenreADay on twitter. GenreADay will post a single tweet, once a day like so:

Under the hood – Genre-A-Day was built using the just released genre methods of The Echo Nest API. These methods allow you to get detailed info on the set of genres, the top artists for the genres, similar genres and so on. It also uses the super nifty genre presets in the playlist API that allow you to craft the genre-radio listener for someone who is new to the genre (core), for someone who is a long time listener of the genre (in rotation), or for someone looking for the newest music in that genre (emerging). The source code for Genre-A-Day is on github.

MIDEM Hack Day recap

What better way to spend a weekend on the French Riviera than in a conference room filled with food, soda, coffee and fellow coders and designers hacking on music! That’s what I and 26 other hackers did at the MIDEM Music Hack Day. Hackers came from all over the world to attend and participate in this 3rd annual hacking event to show what kind of creative output can flow from those that are passionate about music and technology.

Unlike a typical Music Hack Day, this hack day has very limited space, so only those with hardcore hacking cred were invited to attend. Hackers were from a wide range of companies and organizations including SoundCloud, Songkick, Gracenote, Musecore, We Make Awesome Sh.t, 7Digital, Reactify, Seevl, Webdoc, REEA, MTG-UPF, Mint Digital and The Echo Nest. Several of the hackers were independent. The event was organized by Martyn Davies of Hacks & Bants along with help from the MIDEM organizers.

The hacker space provided for us is at the top of the Palais – which is the heart of MIDEM and Cannes. The hacking space has a terrace that overlooks the city, giving an excellent place to unwind and meditate while trying to figure out how to make something work.

The hack day started off with a presentation by Martyn explaining what a Music Hack Day is for the general MIDEM crowd. Afterwards, members of the audience (the Emilys and Amelies) offered some hacking ideas in case any of the hyper-creative hackers attending the Hack Day were not able to come up with their own ideas.

After that, hacking started in force. Coders and designers paired up, hacking designs were sketched and github repositories were pulled and pushed.

The MIDEM Hack Day is longer than your usual Music Hack Day. Instead of the usual 24 hours, hackers have 45 hours to create their stuff. The extra time really makes a difference (especially if you hack like you only have 24 hours).

We had a few visitors during the course of the weekend. Perhaps the most notable was Robert Scoble. We gave him a few demos. My impression (based upon 3 minutes of interaction, so it is quite solid) is that Robert doesn’t care too much about music. (While I was giving him a demo of a very early version of Girl Talk in a Box, Robert reached out and hit the volume down key a few times on my computer. The effrontery of it all!) A number of developers gave Robert demos of their in-progress hacks, including Ben Fields, who, at the time, didn’t know he was talking to someone who was Internet-famous.

As day turned to evening, the view from our terrace got more exciting. The NRJ Awards show takes place in the Palais and we had an awesome view of the red carpet. For 5 hours, the sounds of screaming teenagers lining the red carpet added to our hacking soundtrack. Carly Rae, Taylor Swift, One Direction and the great one (Psy) all came down the red carpet below us, adding excitement (and quite a bit of distraction) to the hack.

[youtube http://www.youtube.com/watch?v=OUkLAsphZDM]

Yes, Psy did the horsey dance for us. Life is complete.

Finally, after 45 hours of hacking, we were ready to give our demos to the MIDEM audience.

There were 18 hacks created during the weekend. Check out the full list of hacks. Some of my favorites were:

- VidSwapper by Ben Fields – swaps the audio from one video into another, synchronizing with video hit points along the way.

- RockStar by Pierre-loic Doulcet – RockStar lets you direct a rock star band using gestures.

- Miri by Aaron Randal – Miri is a personal assistant, controlled by voice, that specialises in answering music-related questions

- Ephemeral Playback by Alastair Porter – Ephemeral Playback takes the idea of slow music and slows it down even further. Only one song is active at a time. After you have listened to it you must share it to another user via twitter. Once you have shared it you can no longer listen to it.

- Music Collective by the Reactify team – A collaborative music game focussing on the phenomenon of how many people, when working together, form a collective ‘hive mind’.

- Leap Mix by Adam Howard – Control audio tracks with your hands.

It was fun demoing my own hack: Girl Talk in a Box – it is not every day that a 50-something guy gets to pretend he’s Skrillex in front of a room full of music industry insiders.

All in all, it was a great event. Thanks to Martyn and MIDEM for making us hackers feel welcome at this event. MIDEM is an interesting place, where lots of music business happens. It is rather interesting for us hacker-types to see how this other world lives. No doubt, thanks to MIDEM Music Hack Day, synergies were leveraged, silos were toppled, and ARPUs were maximized. Looking forward to next year!

Hear Here update

Posted by Paul in code, The Echo Nest on July 22, 2012

A bit more coding this weekend on ‘Hear Here’, my iPhone app that plays music by nearby artists. It is now feature complete. The list of features is rather small – it really is a ‘do one thing well’ kind of app. It plays music by the nearest artists that match your filter. Currently you can filter by the popularity of the artist. If you are adventurous, you can listen to music by all nearby artists, but if you are not so brave you can just listen to music by mainstream or popular artists. The app shows you how far away the ‘now playing’ artist is and shows you how many artists are within a 25 mile radius. All music is streamed from Rdio and of course you’ll need an Rdio subscription to hear full streams. I made my own icon – it is pretty ugly – if you have design skills and want to contribute a logo I’d be very pleased to use it. Here’s a video of the app in action for a user who happens to be in Cupertino:

[youtube http://www.youtube.com/watch?v=Lfb5P8_8Dpk&hd=1]

Next steps for the app are lots of testing, especially with poor network connectivity. After that, I’ll make sure I’m following all the rules for Rdio and Apple – and once I’m conforming to all the TOS’s and UI guidelines I’ll submit it to the App Store (as a free app).

Roadtrip Mixtape

Posted by Paul in code, data, Music, The Echo Nest on June 17, 2012

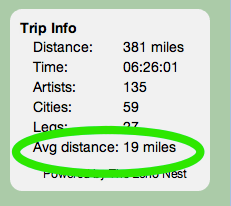

Over the last few years I’ve made a number of 1,000+ mile road trips as I shuttle kids to colleges in far away places. Listening to music has always been a big part of these trips. I thought it’d be nice to be able to listen to music by local artists when driving through a particular region, so I spent a few weekends creating an app called Roadtrip Mixtape that populates a roadtrip playlist with artists that are from the region you are driving through.

To create a playlist, type your starting and ending cities for your roadtrip. The app will use Google’s directions to plan the best route between the two cities. The route will then be broken into 15 minute playlist legs. Each playlist leg is populated by 15 minutes worth of music by nearby artists.
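The leg-splitting step can be sketched in a few lines of Python. This is only an illustration of the idea, not the app's actual code: the `route_points` format (elapsed driving time plus coordinates) and the function name are assumptions, and turning a Google directions response into such a list is left out.

```python
LEG_SECONDS = 15 * 60  # one playlist leg covers 15 minutes of driving


def split_into_legs(route_points, leg_seconds=LEG_SECONDS):
    """Pick the starting point of each playlist leg.

    route_points is a hypothetical list of (elapsed_seconds, lat, lng)
    tuples sorted by elapsed time. The first point at or after each
    15-minute boundary becomes the start of a leg.
    """
    legs = []
    next_boundary = 0
    for elapsed, lat, lng in route_points:
        if elapsed >= next_boundary:
            legs.append((elapsed, lat, lng))
            # Skip past this point so sparse routes do not emit duplicate legs.
            while next_boundary <= elapsed:
                next_boundary += leg_seconds
    return legs
```

Each returned point would then be used as the centre for a nearby-artist lookup that fills roughly 15 minutes of music.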

The beginning of each leg is represented by a green ball. You can click on the ball to see what artists will be played during that leg. The app plays music via Rdio using their nifty Web Player API. If you are an Rdio subscriber you can listen to full streams, and if not you get to hear 30 second samples. One bit of interesting info that I show for a route is the ‘Avg distance’. This shows the average distance to each artist on the roadtrip. If this number is low, you are traveling through a musically dense part of the world, and if it is high, you are traveling in a sparse musical region. For instance, for a roadtrip from Boston to New York the average artist distance is 3 miles (about as low as it goes). However, if you are traveling from Omaha to Denver, the average artist distance is 81 miles.
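One way to compute an ‘Avg distance’ figure like this is the great-circle (haversine) distance between each point on the route and the artist chosen for it. The sketch below assumes that approach and a hypothetical list of (route position, artist location) pairs; the post does not say which distance formula the app uses.

```python
from math import asin, cos, radians, sin, sqrt

EARTH_RADIUS_MILES = 3958.8


def haversine_miles(a, b):
    """Great-circle distance in miles between two (lat, lng) pairs in degrees."""
    lat1, lon1, lat2, lon2 = map(radians, (a[0], a[1], b[0], b[1]))
    h = (sin((lat2 - lat1) / 2) ** 2
         + cos(lat1) * cos(lat2) * sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_MILES * asin(sqrt(h))


def average_artist_distance(pairs):
    """Mean distance over (route_position, artist_location) pairs."""
    if not pairs:
        return 0.0
    return sum(haversine_miles(p, a) for p, a in pairs) / len(pairs)
```

A low average means the route passes close to many artists' home towns; a high one means the nearest artists are far from the road.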

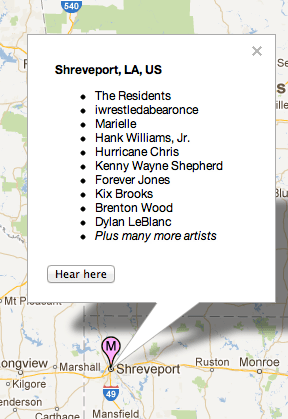

You can also click anywhere on the map to see and listen to nearby artists. For example, if you click on Shreveport you’ll see something like this:

When you click the ‘Hear here’ button, you’ll get a playlist of the hotttest artists from Shreveport.

Listening to nearby artists is quite fun. There’s potential for some extreme sonic whiplash as you drive near a brutal death metal band and then a pop vocalist from the 1950s.

The Technical Bits

To build the app I used the new artist location data from The Echo Nest. This (still in beta) feature, allows you to retrieve the location of any artist. Here’s an example API call that retrieves the artist location for Radiohead:
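A request of roughly this shape would do it. The base URL, the `artist/profile` method, the `artist_location` bucket name and the `YOUR_API_KEY` placeholder are all assumptions about the Echo Nest API of the time, not the exact call from the original post, so treat this as a sketch only:

```python
from urllib.parse import urlencode

# Assumed base URL for the Echo Nest API; not stated in this post.
ECHO_NEST = "http://developer.echonest.com/api/v4"


def artist_location_url(name, api_key="YOUR_API_KEY"):
    """Build a URL asking for an artist profile with location data attached."""
    params = [
        ("api_key", api_key),
        ("name", name),
        ("bucket", "artist_location"),  # assumed bucket name
        ("format", "json"),
    ]
    return ECHO_NEST + "/artist/profile?" + urlencode(params)


print(artist_location_url("Radiohead"))
```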

For this app, I collected the locations for the top 100,000 or so most popular artists in the Rdio catalog. These artists were from about 15,000 different cities. I used geopy along with the Yahoo Placefinder geocoder to find the latitude and longitude for each of these cities. For the mapping and route finding, I used version 3.9 of the Google maps API. For music playback I used the Rdio Web Playback API. With the tight integration between the Echo Nest and Rdio ID spaces it was easy to go from a geolocated Echo Nest artist to a list of Rdio track IDs for songs by that artist.

The Bad Bits

As a web app that relies on the Flash-based Rdio web player, Roadtrip Mixtape is not really a mobile app. It won’t play music on an iPhone or iPad, so the best way to actually use this app on the road is probably to bring along your tethered laptop. Not the best user experience. Thus, my next weekend project will be to learn a little bit of iOS programming and make a version of this app that runs on an iPhone and an iPad. Stay tuned for the next version.

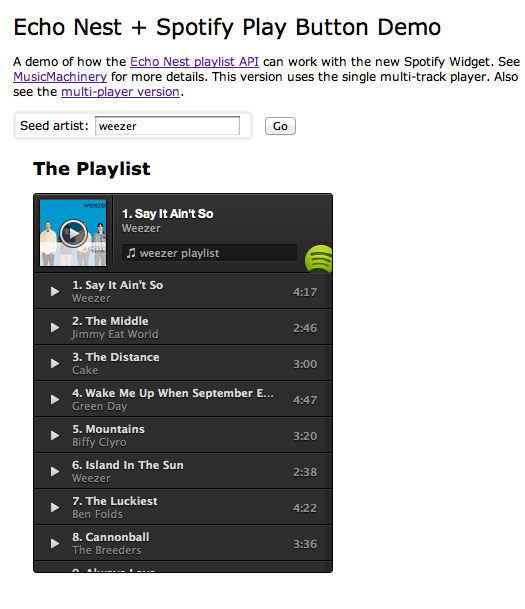

The Spotify Play Button – a lightning demo

Spotify just released a nifty embeddable play button. With the play button you can easily embed Spotify tracks in any web page or blog. Since there’s really tight integration between the Spotify and Echo Nest IDs, I thought I’d make a quick demo that shows how we can use the Echo Nest playlist API and the new Spotify Play button to make playlists.

The demo took about 5 minutes to write (shorter than it is taking to write the blog post). It is simple artist radio on a web page. Give it a go at: Echo Nest + Spotify Play Button Demo

Here’s what it looks like.

Update: Charlie and Samuel pointed out that there is a multi-track player too. I made a demo that uses that too:

The Spotify Play button is really easy to use and looks great. Well done Spotify.

Syncing Echo Nest analysis to Spotify Playback

Posted by Paul in code, Music, The Echo Nest on April 9, 2012

With the recently announced Spotify integration into Rosetta Stone, The Echo Nest now makes available a detailed audio analysis for millions of Spotify tracks. This audio analysis includes summary features such as tempo, loudness, energy, danceability, key and mode, as well as a set of fine-grained segment features that describe details such as where each bar, beat and tatum fall and the detailed pitch, timbral and loudness content of each audio event in the song. These features can be very useful for driving Spotify applications that need to react to what the music sounds like – from advanced dynamic music visualizations like the MIDEM music machine to synchronized music games like Guitar Hero.

I put together a little Spotify App that demonstrates how to synchronize Spotify Playback with the Echo Nest analysis. There’s a short video here of the synchronization:

video on youtube: http://youtu.be/TqhZ2x86RXs

In this video you can see the audio summary for the currently playing song, as well as a display of synchronized ‘bar’ and ‘beat’ labels and detailed loudness, timbre and pitch values for the current segment.

How it works:

To get the detailed audio analysis, call the track/profile API with the Spotify Track ID for the track of interest. For example, here’s how to get the track for Radiohead’s Karma Police using the Spotify track ID:

This returns audio summary info for the track, including the tempo, energy and danceability. It also includes a field called the analysis_url which contains an expiring URL to the detailed analysis data. (A very abbreviated excerpt of an analysis is contained in this gist).

To synchronize Spotify playback with the Echo Nest analysis we need to first get the detailed analysis for the now playing track. We can do this by calling the aforementioned track/profile call to get the analysis_url for the detailed analysis, and then retrieve the analysis (it is stored in JSON format, so no reformatting is necessary). There is one technical glitch though. There is no way to make a JSONP call to retrieve the analysis. This prevents you from retrieving the analysis directly into a web app or a Spotify app. To get around this issue, I built a little proxy at labs.echonest.com that supports a JSONP style call to retrieve the contents of the analysis URL. For example, the call:

http://labs.echonest.com/3dServer/analysis?callback=foo&url=http://url_to_the_analysis_json

will return the analysis json wrapped in the foo() callback function. The Echo Nest does plan to add JSONP support to retrieving analysis data, but until then feel free to use my proxy. No guarantees on support or uptime since it is not supported by engineering. Use at your own risk.
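Putting the two steps together, here is a minimal Python sketch of the URLs involved and of unwrapping the JSONP response. The proxy address and the `callback` and `url` parameters come from the text above; the base URL, the `spotify-WW:track:` ID prefix, the `audio_summary` bucket and the API key placeholder are assumptions about the API of the time. No network request is made here.

```python
import json
from urllib.parse import urlencode

ECHO_NEST = "http://developer.echonest.com/api/v4"    # assumed base URL
PROXY = "http://labs.echonest.com/3dServer/analysis"  # proxy named in the post


def track_profile_url(spotify_track_id, api_key="YOUR_API_KEY"):
    """URL for track/profile, addressing the track by its Spotify ID."""
    # Assumption: Spotify tracks use a Rosetta-style ID with this prefix.
    rosetta_id = "spotify-WW:track:" + spotify_track_id
    params = [("api_key", api_key), ("id", rosetta_id), ("bucket", "audio_summary")]
    return ECHO_NEST + "/track/profile?" + urlencode(params)


def proxy_url(analysis_url, callback="foo"):
    """URL that asks the proxy to wrap the analysis JSON in callback(...)."""
    return PROXY + "?" + urlencode([("callback", callback), ("url", analysis_url)])


def unwrap_jsonp(body, callback="foo"):
    """Strip the callback(...) wrapper from a JSONP body and parse the JSON."""
    body = body.strip()
    prefix = callback + "("
    if not body.startswith(prefix):
        raise ValueError("not a JSONP response for callback %r" % callback)
    return json.loads(body[len(prefix):body.rindex(")")])
```

In a browser or Spotify app the callback function would receive the parsed object directly; `unwrap_jsonp` is only needed when fetching the same URL from a script.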

Once you have retrieved the analysis you can get the current bar, beat, tatum and segment info based upon the current track position, which you can retrieve from Spotify with: sp.getTrackPlayer().getNowPlayingTrack().position. Since all the events in the analysis are timestamped, it is straightforward to find the corresponding bar, beat, tatum and segment given any song timestamp. I’ve posted a bit of code on gist that shows how I pull out the current bar, beat and segment based on the current track position along with some code that shows how to retrieve the analysis data from the Echo Nest. Feel free to use the code to build your own synchronized Echo Nest/Spotify app.
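The timestamp lookup itself is a small binary search. The sketch below is not the code from the gist; it assumes each bar, beat, tatum or segment is a dict with `start` and `duration` in seconds, sorted by `start`, and that the caller has already converted the player position to seconds.

```python
from bisect import bisect_right


def event_starts(events):
    """Precompute the sorted start times once per track."""
    return [e["start"] for e in events]


def find_event(events, starts, position):
    """Return the event that contains `position` (seconds), or None.

    `starts` is the list returned by event_starts(events).
    """
    i = bisect_right(starts, position) - 1  # last event starting at or before position
    if i < 0:
        return None  # position is before the first event
    event = events[i]
    if position < event["start"] + event["duration"]:
        return event
    return None  # position falls in a gap or after the last event


beats = [{"start": 0.5, "duration": 0.5}, {"start": 1.0, "duration": 0.5}]
starts = event_starts(beats)
current = find_event(beats, starts, 1.2)
```

Calling `find_event` on each playback tick for the bars, beats and segments lists gives the three "current" items to display; keeping the `starts` lists around avoids rebuilding them on every tick.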

The Spotify App platform is an awesome platform for building music apps. Now, with the ability to use Echo Nest analysis from within Spotify apps, it is a lot easier to build Spotify apps that synchronize to the music. This opens the door to a whole range of new apps. I’m really looking forward to seeing what developers will build on top of this combined Echo Nest and Spotify platform.

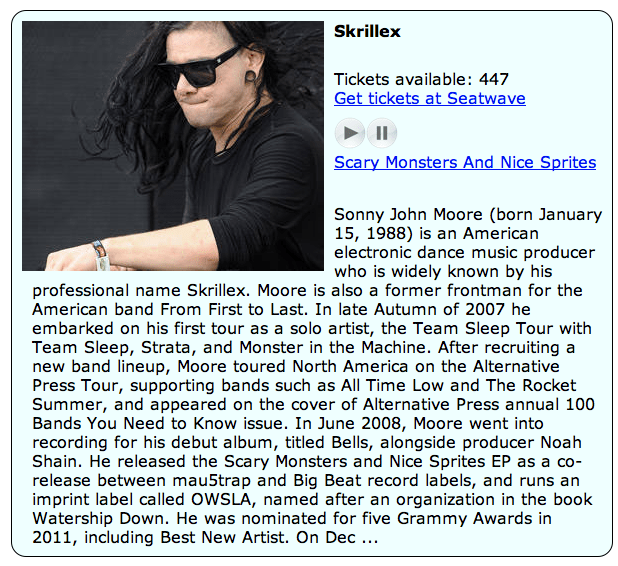

Building a Seatwave + Echo Nest App

Posted by Paul in code, data, Music, The Echo Nest on February 8, 2012

This weekend at Music Hack Day SF, Seatwave is launching their Ticketing and Event API. This API will make it easy for developers to add event discovery and ticket-buying functionality to their apps. At the Echo Nest we’ve incorporated Seatwave artist IDs into our Rosetta ID mapping layer, making it possible to use Seatwave IDs directly with the Echo Nest API. This makes it easier for you to use the Seatwave and the Echo Nest APIs together. For instance, you can call the Seatwave API, get artist event IDs in response and use those IDs with the Echo Nest API to get more context about the artist.

For example, we can make a call to the Seatwave API to get the set of Featured Contest with an API call:

The results include blocks of events like this:

    {
      "CategoryId": 12,
      "Currency": "GBP",
      "Id": 934,
      "ImageURL": "http://cdn2.seatwave.com/filestore/season/image/thestoneroses_934_1_1_20111018165906.jpg",
      "MinPrice": 95,
      "Name": "The Stone Roses",
      "SwURL": "http://www.seatwave.com/the-stone-roses-tickets/season",
      "TicketCount": 1810
    },
    {
      "CategoryId": 10,
      "Currency": "GBP",
      "Id": 702,
      "ImageURL": "http://cdn2.seatwave.com/filestore/season/image/redhotchilipeppers_702_1_1_20110617124457.jpg",
      "MinPrice": 45,
      "Name": "Red Hot Chili Peppers",
      "SwURL": "http://www.seatwave.com/red-hot-chilli-peppers-tickets/season",
      "TicketCount": 1134
    },

We see events for the Stone Roses and for RHCP. The Seatwave ID for RHCP is 702. We can use this ID directly in Echo Nest calls. For instance, to get lots of Echo Nest info on the RHCP using the Seatwave ID, we can make an artist/profile call like so:
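The mapping from a Seatwave result to an ID the Echo Nest can accept might look like the following sketch. The `seatwave:artist:` prefix is an assumption about how Rosetta IDs for Seatwave are written; the `Id` and `Name` fields are taken from the response shown above.

```python
import json

# Two events trimmed down from the Seatwave response shown above.
events = json.loads("""[
  {"Id": 934, "Name": "The Stone Roses"},
  {"Id": 702, "Name": "Red Hot Chili Peppers"}
]""")


def rosetta_ids(seatwave_events):
    """Map each artist name to an assumed Rosetta-style Seatwave ID."""
    return {e["Name"]: "seatwave:artist:%d" % e["Id"] for e in seatwave_events}


ids = rosetta_ids(events)
print(ids["Red Hot Chili Peppers"])
```

The resulting string would be passed as the `id` parameter of an artist/profile call in place of an Echo Nest artist ID.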

To show off the integration of Seatwave and Echo Nest, I’ve built a little web app that shows a list of top Seatwave concerts (generated via the Seatwave API). For each artist, the app shows the number of tickets available, the artist’s biography, along with a play button that will let you listen to a sample of the artist (via 7Digital).

The application is live here: Listen to Top Seatwave Artists. The code is on github: plamere/SWDemo

The Seatwave API is quite easy to work with. They support JSON, JSONP, XML and SOAP (bleh). Lots of good data, very nice artist images, a generous affiliate program, and an easy to understand TOS. Highly recommended. See the Seatwave page in The Echo Nest Developer Center for more info on the Seatwave / Echo Nest integration.

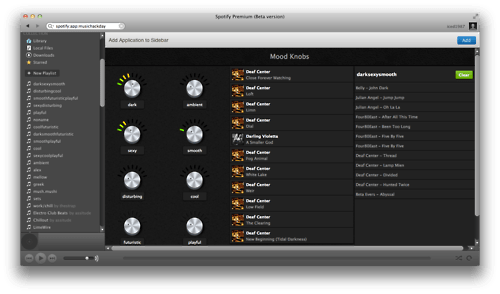

The Future of Mood in Music

Posted by Paul in The Echo Nest on December 6, 2011

One of my favorite hacks from Music Hack Day London is Mood Knobs. It is a Spotify App that generates Echo Nest playlists by mood. Turn some cool virtual analog knobs to generate playlists.

The developers have put the source in github. W00t. Check it all out here: The Future of Mood in Music.

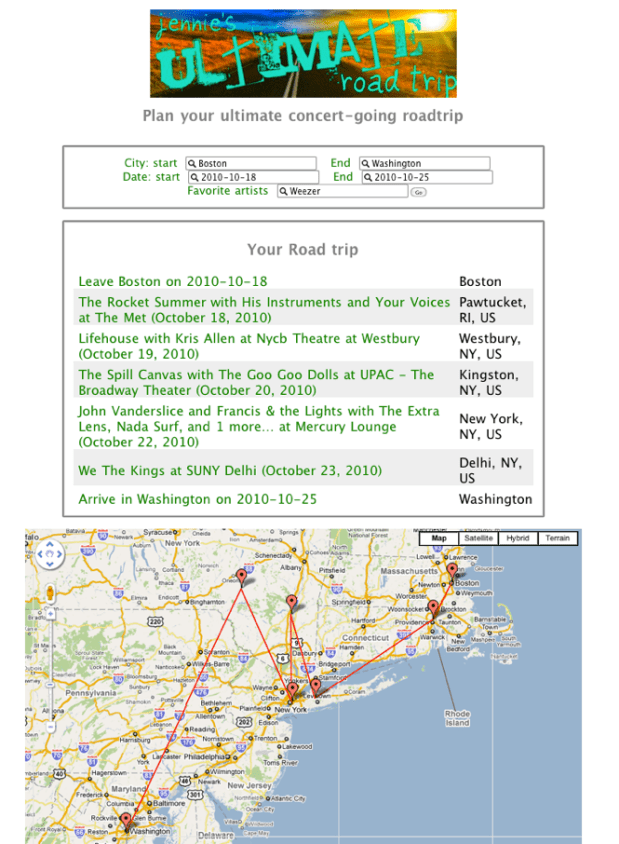

Jennie’s ultimate road trip

Posted by Paul in code, fun, Music, The Echo Nest on October 20, 2010

Last weekend at Music Hack Day Boston, I teamed up with Jennie, my 15-year-old daughter, to build her idea for a music hack which we’ve called Jennie’s Ultimate Road Trip. The hack helps you plan a road trip so that you’ll maximize the number of great concerts you can attend along the way. You give the app your starting and ending city, your starting and ending dates, and the names of some of your favorite artists, and Jennie’s Ultimate Road Trip will search through the many events to find the ones you’d like to see that fit your route and schedule, and give you an itinerary and map.

We used the wonderful SongKick API to grab events for all the nearby cities. I was quite surprised at how many events SongKick would find. For just a single week, in the geographic area between Boston and New York City, SongKick found 1,161 events with 2,168 different artists. More events and more artists make it easier to find a route that will give a satisfying set of concerts – but they can also make finding a route a bit more computationally challenging (more on that later). Once we had the set of possible artists that we could visit, we needed to narrow down the list of artists to the ones that would be of most interest to the user. To do this we used the new Personal Catalogs feature of the Echo Nest API. We created a personal catalog containing all of the potential artists (so for our trip to NYC from Boston, we’d create a catalog of 2,168 artists). We then used the Echo Nest artist similarity APIs to get recommendations for artists within this catalog. This yielded a set of 200 artists playing in the area that best match the user’s taste.

The next bit was the tricky bit – first, we subsetted the events to just include events for the recommended set of artists. Then we had to build the optimal route through the events, considering the date and time of the event, the preference the user has for the artist, whether or not we’ve already been to an event for this artist on the trip, how far out of our way the venue is from our ultimate destination and how far the event is from our previous destination. For anyone who saw me looking grouchy on Sunday morning during the hack day, it was because it was hard trying to figure out a good cost function that would weigh all of these factors: artist preference, travel time and distance between shows, event history. The computer science folks who read this blog will recognize that this route finding is similar to the ‘travelling salesman problem’ – but with a twist: instead of finding a route between cities, which don’t tend to move around too much, we have to find a path through a set of artist concerts where every night, the artists are in different places. I call this the ‘travelling rock star’ problem. Ultimately I was pretty happy with how the routing algorithm turned out: it can find a decent route through a thousand events in less than 30 seconds.
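To make the trade-offs concrete, here is a small Python sketch of a cost function that weighs those factors, driven by a simple greedy search that picks the cheapest event each night. It is not the algorithm from the hack: the weights, the flat x/y coordinates in miles, the `Event` fields and the greedy strategy are all assumptions chosen to keep the example short.

```python
from dataclasses import dataclass
from math import hypot


@dataclass(frozen=True)
class Event:
    artist: str
    day: int           # day index within the trip
    x: float           # simplified planar position, in miles
    y: float
    preference: float  # 0..1, how much the user should like this artist


def cost(prev_xy, event, dest_xy, seen,
         w_pref=100.0, w_travel=1.0, w_detour=0.5, repeat_penalty=200.0):
    """Lower is better. All weights are hypothetical tuning knobs."""
    travel = hypot(event.x - prev_xy[0], event.y - prev_xy[1])  # from last stop
    detour = hypot(dest_xy[0] - event.x, dest_xy[1] - event.y)  # left to destination
    c = w_travel * travel + w_detour * detour - w_pref * event.preference
    if event.artist in seen:
        c += repeat_penalty  # discourage seeing the same artist twice
    return c


def greedy_route(start_xy, dest_xy, events, days):
    """Pick the lowest-cost event for each night, one day at a time."""
    route, seen, here = [], set(), start_xy
    for day in range(days):
        tonight = [e for e in events if e.day == day]
        if not tonight:
            continue
        best = min(tonight, key=lambda e: cost(here, e, dest_xy, seen))
        route.append(best)
        seen.add(best.artist)
        here = (best.x, best.y)
    return route
```

A greedy pass like this is fast but can paint itself into a corner, since tonight's cheapest show may make tomorrow's best show unreachable; a search over several nights at once would trade speed for better routes.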

Jennie joined me for a few hours at the Music Hack Day – she coded up the HTML for the webform and made the top banner – (it was pretty weird to look over on her computer and see her typing in raw HTML tags with attached CSS attributes – kids these days). We got the demo done in time – and with the power of caching it will generate routes and plot them on a map using the Google API. Unfortunately, if your route doesn’t happen to be in the cache, it can take quite a bit of time to get a route out of the app – gathering events from SongKick, getting the recommendations from the Echo Nest, and finding the optimal route all add up to an app that can take 5 minutes before you get your answer. When I get a bit of time, I’ll take another pass to speed things up. When it is fast enough, I’ll put it online.

It was a fun demo to write. I especially enjoyed working on it with my daughter. And we won the SongKick prize, which was pretty fantastic.