Posts Tagged recommendation

Why streaming recommendations are different than DVD recommendations at Netflix

From Why Netflix Never Implemented The Algorithm That Won The Netflix $1 Million Challenge

An interesting insight:

when people rent a movie that won’t arrive for a few days, they’re making a bet on what they want at some future point. And, people tend to have a more… optimistic viewpoint of their future selves. That is, they may be willing to rent, say, an “artsy” movie that won’t show up for a few days, feeling that they’ll be in the mood to watch it a few days (weeks?) in the future, knowing they’re not in the mood immediately. But when the choice is immediate, they deal with their present selves, and that choice can be quite different.

When I was a Netflix DVD subscriber the Seven Samurai sat on top of my TV for months. My present self never matched the optimistic view I had of my future self.

Xavier’s blog post on Netflix recommendation is worth the read. It covers dealing with a household with widely different tastes and the importance of the order of presentation of recommendations.

What is so special about music?

Posted by Paul in Music, recommendation on October 23, 2011

In the Recommender Systems world there is a school of thought that says that it doesn’t matter what type of items you are recommending. For these folks, a recommender is a black box that takes in user behavior data and outputs recommendations. It doesn’t matter what you are recommending – books, music, movies, Disney vacations, or deodorant. According to this school of thought you can take the system that you use for recommending books and easily repurpose it to recommend music. This is wrong. If you try to build a recommender by taking your collaborative filtering book recommender and applying it to music, you will fail. Music is different. Music is special.

Here are 10 reasons why music is special and why your off-the-shelf collaborative filtering system won’t work so well with music.

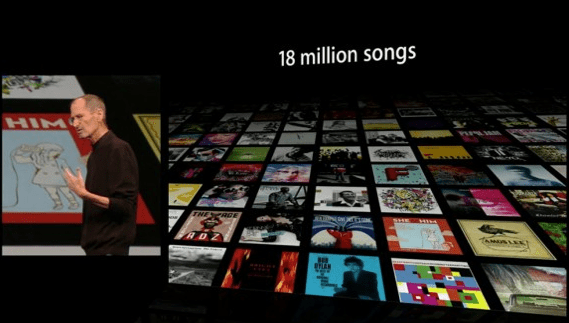

Huge item space – There is a whole lot of music out there. Industrial sized music collections typically have 10 million songs or more. The iTunes music store boasts 18 million songs. The algorithms that worked so wonderfully on the Netflix dataset (one of the largest CF datasets released, containing user data for 17,770 movies) will not work so well when having to deal with a dataset that is three orders of magnitude larger.

Very low cost per item – When the cost per item is low, the risk of a bad recommendation is low. If you recommend to me a bad Disney Vacation I am out $10,000 and a week of my time. If you recommend a bad song, I hit the skip button and move on to the next.

Many item types – In the music world, there are many things to recommend: tracks, albums, artists, genres, covers, remixes, concerts, labels, playlists, radio stations, other listeners, etc.

Low consumption time – A book can take a week to read, a movie may take a few hours to watch, a song may take 3 minutes to listen to. Since I can consume music so quickly, I need lots of recommendations (perhaps 30 an hour) to keep my queue filled, whereas 30 book recommendations may keep me reading for a whole year. This has implications for scaling of a recommender. It also ties in with the low cost per item issue. Because music is so cheap and so quick to consume, the risk of a bad recommendation is very low. A music recommender can afford to be more adventurous than other types of recommenders.

Very high per-item reuse – I’ve read my favorite book perhaps half-a-dozen times, I’ve seen my favorite movie 3 times and I’ve probably listened to my favorite song thousands of times. We listen to music over and over again. We like familiar music. A music recommender has to understand the tension between familiarity and novelty. The Netflix movie recommender will never recommend The Bourne Identity to me because it knows that I already watched it, but a good music playlist recommender had better include a good mix of my old favorites along with new music.

Highly passionate users – There’s no more passionate fan than a music fan. This is a two-edged sword. If your recommender introduces a music fan to new music that they like, they will transfer some of their passion to your music service. This is why Pandora has such a vocal and passionate user base. On the other hand, if your recommender adds a Nickelback track to a Led Zeppelin playlist you will have to endure the wrath of the slighted fan.

Highly contextual usage – We listen to music differently in different contexts. I may have an exercising playlist, a working playlist, a driving playlist etc. I may make a playlist to show my friends how cool I am when I have them over for a social gathering. Not too many people go to Amazon looking for a list of books that they can read while jogging. A successful music recommender needs to take context into account.

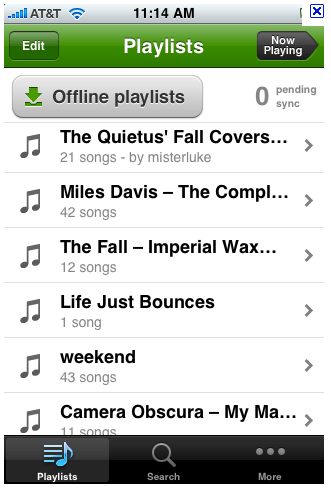

Consumed in sequences – Listening to songs in order has always been a big part of the music experience. We love playlists, mixtapes, DJ mixes, albums. Some people make their living putting songs into interesting order. Your collaborative filtering algorithm doesn’t have the ability to create coherent, interesting playlists with a mix of new music and old favorites.

Large Personal Collections – Music fans often have extremely large personal collections – making it easier for recommendation and discovery tools to understand the detailed music taste of a listener. A personalized movie recommender may start with a list of a dozen rated movies, while a music recommender may be able to recommend music based upon many thousands of plays, ratings, skips and bans.

Highly Social – Music is social. People love to share music. They express their identity to others by the music they listen to. They give each other playlists and mixtapes. Music is a big part of who we are.

Music is special – but of course, so are books, movies and Disney vacations – every type of item has its own special characteristics that should be taken into account when building recommendation and discovery tools. There’s no one-size-fits-all recommendation algorithm.
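To make the familiarity-versus-novelty point from the list above a little more concrete, here is a minimal Python sketch of a playlist builder that slots a new track in among old favorites at a fixed interval. The function name `mix_playlist`, the `new_every` parameter and the track names are all made up for illustration; a real playlist recommender would also have to deal with ordering, context and taste, none of which is attempted here.

```python
def mix_playlist(favorites, discoveries, length, new_every=3):
    """Build a playlist where every `new_every`-th slot is a new track.

    `favorites` and `discoveries` are ordered lists of track names
    (hypothetical inputs). If one pool runs out, the other pool fills in.
    """
    fav_iter = iter(favorites)
    new_iter = iter(discoveries)
    playlist = []
    for slot in range(1, length + 1):
        # Pick the preferred pool for this slot.
        preferred, fallback = (
            (new_iter, fav_iter) if slot % new_every == 0 else (fav_iter, new_iter)
        )
        track = next(preferred, None)
        if track is None:
            track = next(fallback, None)
        if track is None:
            break  # both pools are exhausted
        playlist.append(track)
    return playlist


example = mix_playlist(
    favorites=["fav-1", "fav-2", "fav-3", "fav-4"],
    discoveries=["new-1", "new-2"],
    length=6,
)
```

With these inputs, the third and sixth slots go to new tracks and the rest to favorites. Changing `new_every` is the crude knob for how adventurous the playlist is.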

Unofficial Artist Guide to SXSW

Posted by Paul in events, Music, recommendation, The Echo Nest on March 4, 2010

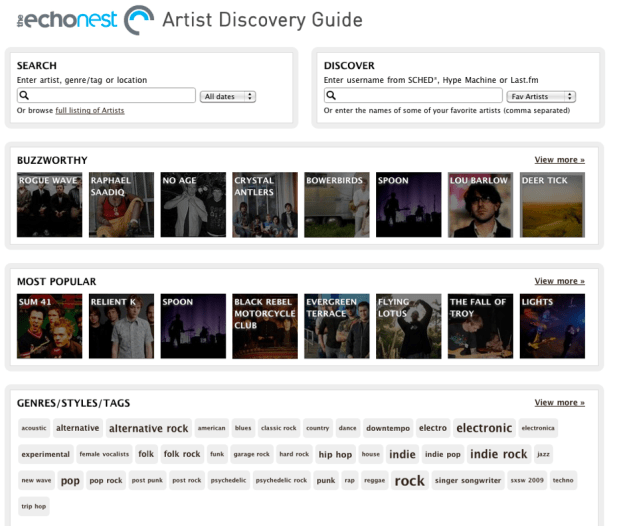

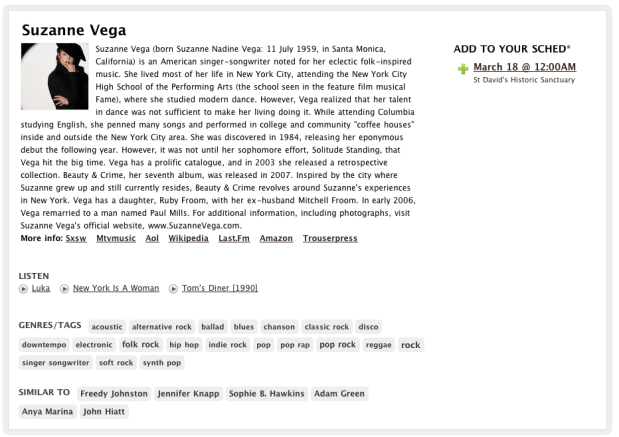

I’m excited! Next week I travel to Austin for a week long computer+music geek-fest at SXSW. A big part of SXSW is the music – there are nearly 2,000 different artists playing at SXSW this year. But that presents a problem – there are so many bands going to SXSW (many I’ve never heard of) that I find it very hard to figure out which bands I should go and see. I need a tool to help me sift through all of the artists – a tool that will help me decide which artists I should add to my schedule and which ones I should skip. I’m not the only one who was daunted by the large artist list. Taylor McKnight, founder of SCHED*, was thinking the same thing. He wanted to give his users a better way to plan their time at SXSW. And so over a couple of weekends Taylor built (with a little backend support from us) The Unofficial Artist Discovery Guide to SXSW.

The Unofficial Artist Discovery Guide to SXSW is a tool that allows you to explore the many artists attending this year’s SXSW. It lets you search for artists, browse by popularity, music style, ‘buzzworthiness’, or similarity to your favorite artists – and it will make recommendations for you based on your music taste (using your Last.fm, SCHED* or Hype Machine accounts). The Artist Guide supplies enough context (bios, images, music, tag clouds, links) to help you decide if you might like an artist.

Here’s the guide:

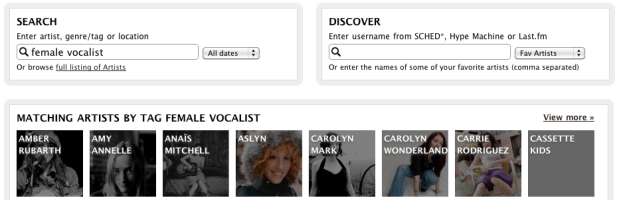

Here’s a quick tour of some of the things you can do with the guide. First off, you can Search for artists by name, genre/tag or location. This helps you find music when you know what you are looking for.

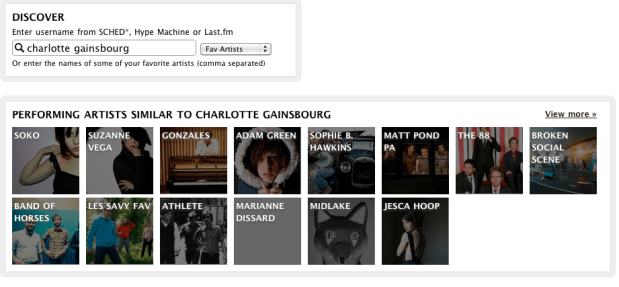

However, you may not always be sure what you are looking for – that’s where you use Discover. This gives you recommendations based on the music you already like. Type in the name of a few artists (even artists that are not playing at SXSW) or your SCHED*, Hype Machine or Last.fm user name, and ‘Discover’ will give you a set of recommendations for SXSW artists based on your music taste. For example, I’ve been listening to Charlotte Gainsbourg lately so I can use the artist guide to help me find SXSW artists that I might like:

If I see an artist that looks interesting I can drill down and get more info about the artist:

From here I can read the artist bio, listen to some audio, explore other similar SXSW artists or add the event to my SCHED* schedule.

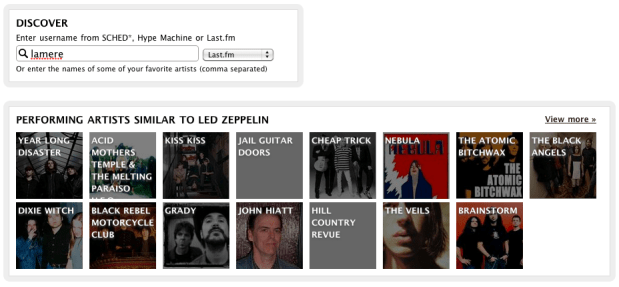

I use Last.fm quite a bit, so I can enter my Last.fm name and get SXSW recommendations based upon my Last.fm top artists. The artist guide tries to mix things up a little bit so if I don’t like the recommendations I see, I can just ask again and I can get a different set. Here are some recommendations based on my recent listening at Last.fm:

If you’ve been using the wonderful SCHED* to keep track of your SXSW calendar you can use the guide to get recommendations based on artists that you’ve already added to your SXSW calendar.

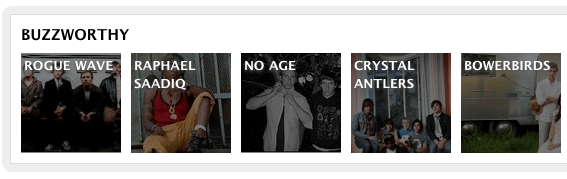

In addition to search and discovery, the guide gives you a number of different ways to browse the SXSW Artist space. You can browse by ‘buzzworthy’ artists – these are artists that are getting the most buzz on the web:

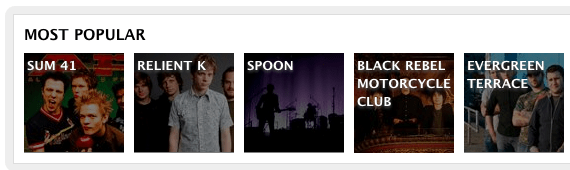

Or the most well-known artists:

You can browse by the style of music via a tag cloud:

And by venue:

Building the guide was pretty straightforward. Taylor used the Echo Nest APIs to get the detailed artist data such as familiarity, popularity, artist bios, links, images, tags and audio. The only data that was not available at the Echo Nest was the venue and schedule info which was provided by Arkadiy (one of Taylor’s colleagues). Even though SXSW artists can be extremely long tail (some don’t even have Myspace pages), the Echo Nest was able to provide really good coverage for these sets (There was coverage for over 95% of the artists). Still there are a few gaps and I suspect there may be a few errors in the data (my favorite wrong image is for the band Abe Vigoda). If you are in a band that is going to SXSW and you see that we have some of your info wrong, send me an email (paul@echonest.com) and I’ll make it right.

We are excited to see this Artist Discovery guide built on top of the Echo Nest. It’s a great showcase for the Echo Nest developer platform and working with Taylor was great. He’s one of these hyper-creative, energetic types – smart, gets things done and full of new ideas. Taylor may be adding a few more features to the guide before SXSW, so stay tuned and we’ll keep you posted on new developments.

The 6th Beatle

Posted by Paul in Music, recommendation, The Echo Nest on February 12, 2010

When I test-drive a new music recommender I usually start by getting recommendations based upon ‘The Beatles’ (If you like the Beatles, you may like XX). Most recommenders give results that include artists like John Lennon, Paul McCartney, George Harrison, The Who, The Rolling Stones, Queen, Pink Floyd, Bob Dylan, Wings, The Kinks and Beach Boys. These recommendations are reasonable, but they probably won’t help you find any new music. The problem is that these recommenders rely on the wisdom of the crowds and so an extremely popular artist like The Beatles tends to get paired up with other popular artists – the result being that the recommender doesn’t tell you anything that you don’t already know. If you are trying to use a recommender to discover music that sounds like The Beatles, these recommenders won’t really help you – Queen may be an OK recommendation, but chances are good that you already know about them (and The Rolling Stones and Bob Dylan, etc.) so you are not finding any new music.
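The popularity effect described above can be seen in a toy example. The Python sketch below ranks artists similar to a seed artist in two ways: by raw co-occurrence counts (how many listeners share both artists), and by a count normalized by each artist's overall listener total (cosine similarity on binary listen vectors). The listening data is invented purely for illustration, and the normalization is just one common way to damp popularity; it is not a description of how any particular recommender, including the Echo Nest's, actually works.

```python
from collections import Counter
from math import sqrt

# Invented toy data: user -> set of artists they listen to.
LISTENS = {
    "u1": {"The Beatles", "The Rolling Stones", "Emitt Rhodes"},
    "u2": {"The Beatles", "The Rolling Stones"},
    "u3": {"The Beatles", "The Rolling Stones", "Emitt Rhodes"},
    "u4": {"The Rolling Stones", "Metallica"},
    "u5": {"The Rolling Stones", "Metallica"},
    "u6": {"The Rolling Stones"},
    "u7": {"The Beatles", "The Rolling Stones"},
    "u8": {"The Rolling Stones", "Metallica"},
    "u9": {"The Rolling Stones"},
    "u10": {"The Rolling Stones"},
}


def similar_artists(listens, seed):
    """Return (raw_counts, normalized_scores) for artists co-listened with seed."""
    listeners = Counter()  # artist -> number of listeners
    together = Counter()   # artist -> listeners shared with the seed
    for artists in listens.values():
        listeners.update(artists)
        if seed in artists:
            together.update(a for a in artists if a != seed)
    normalized = {
        artist: shared / sqrt(listeners[seed] * listeners[artist])
        for artist, shared in together.items()
    }
    return dict(together), normalized


raw, normalized = similar_artists(LISTENS, "The Beatles")
raw_ranking = sorted(raw, key=raw.get, reverse=True)
normalized_ranking = sorted(normalized, key=normalized.get, reverse=True)
```

In this toy data the raw counts put the hugely popular artist first simply because everyone listens to them, while the normalized score moves the lesser-known artist, whose listeners are all Beatles fans, to the top.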

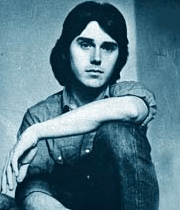

At The Echo Nest we don’t base our artist recommendations solely on the wisdom of crowds; instead we draw upon a number of different sources (including a broad and deep crawl of the music web). This helps us avoid the popularity biases that lead to ineffectual recommendations. For example, looking at some of the Echo Nest recommendations based upon the Beatles we find some artists that you may not see with a wisdom of the crowds recommender – artists that actually sound like the Beatles – not just artists that happened to be popular at the same time as the Beatles. Echo Nest recommendations include artists such as The Beau Brummels, The Dukes of Stratosphear, Flamin’ Groovies and an artist named Emitt Rhodes. I had never ever seen Emitt Rhodes occur in any recommendation based on the Beatles, so I was a bit skeptical, but I took a listen and this is what I found:

Update: Don Tillman points to this Beatle-esque track:

Emitt could be the sixth Beatle. I think it’s a pretty cool recommendation.

ISMIR 2009 – The Future of MIR

Posted by Paul in ismir, Music, recommendation on October 29, 2009

This year ISMIR concludes with the 1st Workshop on the Future of MIR. The workshop is organized by students who are indeed the future of MIR.

09:00-10:00 Special Session: 1st Workshop on the Future of MIR

MIR, where we are, where we are going

Session Chair: Amélie Anglade, Program Chair of f(MIR)

Meaningful Music Retrieval

Frans Wiering – [pdf]

Notes

- Some unfortunate tendencies: anatomical view of music – a dead body that we do autopsies on; time is the loser. Traditional production-oriented/

- Measure of similarity: relevance, surprise

- Few interesting applications for end-users

- bad fit to present-day musicological themes

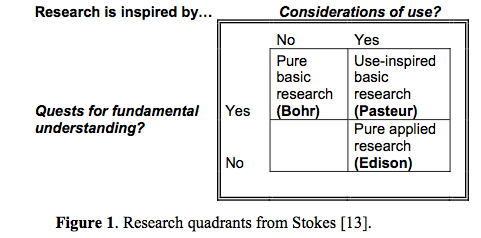

- We are in the world of ‘pure applied research’ – no true interdisciplinarity between music domain knowledge and computer science.

- Music is meaningful (and the underlying personal motivation of most MIR researchers).

- Meaning in musicology – traditionally a taboo subject

- Subjectivity: an individual’s disposition to engage in social and cultural interactions

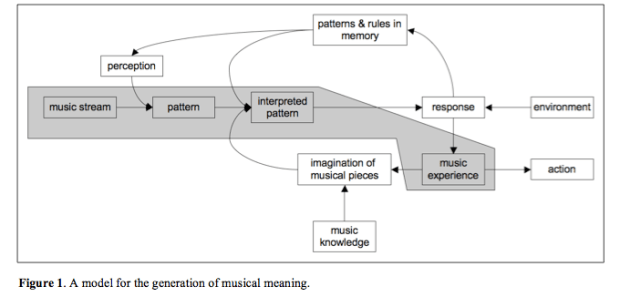

- Meaning generation process – we have a long-term memory for music –

- Can musical meaning provide the ‘big story line’ for MIR?

The Discipline Formerly Known As MIR

Perfecto Herrera, Joan Serrà, Cyril Laurier, Enric Guaus, Emilia Gómez and Xavier Serra

Intro: Our exploration is not a science-fiction essay. We do not try to imagine how music will be conceptualized, experienced and mediated by our yet-to-come research, technological achievements and music gizmos. Alternatively, we reflect on how the discipline should evolve to become consolidated as such, in order it may get an effective future instead of becoming, after a promising start, just a “would-be” discipline. Our vision addresses different aspects: the discipline’s object of study, the employed methodologies, social and cultural impacts (which are out of this long abstract because of space restrictions), and we finish with some (maybe) disturbing issues that could be taken as partial and biased guidelines for future research.

Notes: One motivation for advancing MIR – more banquets!

- MIR is no more about retrieval than computer science is about computers

- Music Information Retrieval – it’s too narrow

- Music Information or Information about Music?

- Interested in the interaction with music information

- We should be asking more profound questions

- music

- content treasures in short musical excerpts, tracks, performances etc.

- context

- music understanding systems

- Most metadata will be generated in the creation / production phase (hmm.. don’t agree necessarily, all the good metadata (tags, who likes what) is based on context and use which is post-hoc)

- Instead of automatic analysis – build systems to help humans help humans

- Music like water? or Music as dog!!! – a friend – companion –

- Personalization, Findability

- Music Turing test

Good, provocative talk

Oral Session 2: Potential future MIR applications

Session Chair: Jason Hockman (McGill University), Program Chair of f(MIR)

Machine Listening to Percussion: Current Approaches and Future Directions – [pdf]

Michael Ward

Abstract: approaches have been taken to detect and classify percussive events within music signals for a variety of purposes with differing and converging aims. In this paper an overview of those technologies is presented and a discussion of the issues still to overcome and future possibilities in the field are presented. Finally a system capable of monitoring a student drummer is envisaged which draws together current approaches and future work in the field.

Notes:

- Challenges: Onset detection of isolated drum strokes

- Onset detection and classification of overlapping drum sounds

- Onset detection and classification in the presence of other instruments

- Variability in percussive sounds. Dozens of criteria affect the sounds produced (strike velocity, angle, position etc.)

- Future Research Areas

- Extension of recognition to include the wide variety of strokes. (open hh, half-open hh, hh foot splash etc)

MIR When All Recordings Are Gone: Recommending Live Music in Real-Time – [pdf]

Marco Lüthy and Jean-Julien Aucouturier

Recommending live and short lived events. Bandsintown, Songkick, gigulate … pay attention to this paper.

Notes:

- Recommendation for live music in real-time

- Coldplay -> free album when you get a ticket to a Coldplay concert – give away the music

- NIN -> USB keys in the toilet – which had a strange recording on the file – strange sounds – an FFT of the sounds showed a phone number and GPS coordinates – turned into a treasure hunt to a NIN concert.

- Komuso Tokugawa – an avatar for a musician in Second Life. Plays in Second Life, twitters concert announcements (playing wake for Les Paul in 3 minutes)

- ‘How do we get there in time?’

- JJ walked through how to implement a recommender system in Second Life

- Implicit preference inferred from how long your avatar listens to a concert (Nicole Yankelovich at Sun Labs should look at this stuff)

- Great talk by JJ – full of energy – neat ideas. Good work.

Poster Session

- Global Access to Ethnic Music: The Next Big Challenge?

Olmo Cornelis, Dirk Moelants and Marc Leman

- The Future of Music IR: How Do You Know When a Problem Is Solved?

Eric Nichols and Donald Byrd

The Echo Nest Fanalytics

Posted by Paul in Music, recommendation, The Echo Nest on June 25, 2009

At the core of just about everything we do here at the Echo Nest is what we call “The Knowledge”. This is a big pile of data that represents everything we know about music. To build ‘The Knowledge’ we crawl the web looking for every bit of info about music. We find music blogs, artist news, album reviews, biographies, audio, images, videos, fan activity and on and on. This gives us a huge set of raw data that represents the global conversation about music. Next, we apply a set of statistical and natural language processing algorithms to this raw data to give us a deeper understanding of what all this data means. For instance, one fundamental algorithm tells us whether a particular web document is about a particular artist. This might be easy for an artist with a distinctive name like Metallica, but may not be so easy for The Rolling Stones (is it the band or the magazine?), and can be hard for bands with ambiguous names like Air and Yes, and can be extremely difficult for artists such as Torsten Pröfrock who tragically has chosen the stage name ‘Various Artists’ (what was he thinking?). Another algorithm that we apply to music reviews is sentiment analysis. This helps us decide whether or not a reviewer has a positive opinion about the music being reviewed. We can take a review like this one written by Jennie, my 14 year old daughter, and learn whether or not she likes the new album by Beyoncé and whether or not she tends to like R&B and pop music.

In addition to analyzing what people are writing about music, we also try to extract as much meaning as we can from the music itself. We apply digital signal processing and machine learning algorithms to audio allowing us to extract information such as tempo, key, song structure, loudness, energy, harmonic content and timbre from every song.

Traditionally, “The Knowledge” has helped us build tools to help music fans explore and discover music – using all this data helps us predict what type of music a listener might like. For the last year, we’ve offered artist similarity and music recommendation web services around this data. But now we are going to turn this all upside down. Instead of using this data to help listeners find new music, we are going to use this data to help artists find new fans. That is what Fanalytics is all about.

For example, music blogs and review sites are becoming an increasingly important way for an artist to build buzz around a new release. However, there are thousands of music blogs – each with its own specialty. This becomes a problem for the artist. How can she decide which blogs she should target for promoting her new album? This is one of the problems that Fanalytics tries to solve. With ‘The Knowledge’ we know quite a bit about thousands of music blogs. We know the reputation and the reach of a blog. We know what types of music a particular author tends to write about, and we know what kinds of music they tend to like. With this knowledge we can make what is essentially a recommendation engine for music promotion. For any artist we can recommend a set of blogs and writers that would most likely be interested in writing about the artist.

In addition to this recommendation engine tailored to music promotion, Fanalytics also provides a set of analytics tools that use ‘The Knowledge’ to help artists better understand their audience. For instance, an artist can track everything that is being said online about them – every blog post, news item, music review, video, as well as their online ‘buzz’ – a quantitative measure of how much attention the artist is receiving from reviewers, bloggers, fans, etc.

We have just launched Fanalytics, but apparently we are already seeing strong interest from the labels. (According to the press release, Interscope, Independent Label Group (WMG), RCA Music Group (Sony) and The Orchard are already on board). That’s not too surprising; the labels are looking for new ways to reach out to fans. As we continue to grow “The Knowledge” here at the Echo Nest I’m sure we will be creating more interesting tools like Fanalytics that are built around the data.

The Passion Index

Posted by Paul in data, fun, Music, recommendation, research, The Echo Nest on June 18, 2009

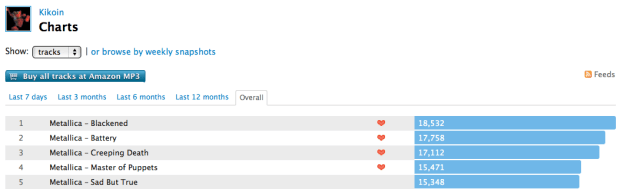

One of the ways that Music 2.0 has changed how we think about music is that there is so much interesting data available about how people are listening to music. Sites like Last.fm automatically track all sorts of interesting data that just was not available before. Forty years ago, a music label like Capitol would know how many copies the album Abbey Road sold in the U.S., but the label wouldn’t know how many times people actually listened to the album. Today, however, our iPods and desktop music players keep careful track of how many times we play each song, album and artist – giving us a whole new way to look at artist popularity. It’s not just sales figures anymore; it’s how often people are actually listening to an artist. If you go to Last.fm you can see that The Beatles have over 1.75 million listeners and 168 million plays. It makes it easy for us to see how popular the Beatles are compared to another band (The Monkees, for instance, have 2.5 million plays and 285K listeners).

With all of this new data available, there are some new ways we can look at artists. Instead of just looking at artists in terms of popularity and sales rank, I think it is interesting to see which artists generate the most passionate listeners. These are artists that dominate the playlists of their fans. I think this ‘passion index’ may be an interesting metric to use to help people explore and discover music. Artists that attract passionate fans may be longer lived and worth a listener’s investment in time and money.

How can we calculate a passion index? There are probably a number of indicators: the number of edits to the band’s Wikipedia page, the average distance a fan travels to attend a show by the artist, the number of fan sites for an artist. All of these may be a bit difficult to collect, especially for a large set of artists. One simple passion metric is just the average number of artist plays per listener. Presumably if an artist’s listeners are playing an artist’s songs more than average, they are more passionate about the artist. One thing that I like about this approach to the passion index is that it is extremely easy to calculate – just divide the total artist plays by the total number of artist listeners and you have the passion index. Yes, there are many confounding factors – for instance, artists with longer songs are penalized – still I think it is a pretty good measure.
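The calculation is simple enough to write down directly. Here is a minimal Python sketch; the function name `passion_index` is just a label for this example, and the Monkees figures are the rounded numbers quoted earlier in the post, so the result is approximate.

```python
def passion_index(plays: int, listeners: int) -> float:
    """Average number of plays per listener for one artist."""
    if listeners <= 0:
        raise ValueError("an artist needs at least one listener")
    return plays / listeners


# Play and listener counts as reported in this post.
beatles = passion_index(167_765_187, 1_748_159)
monkees = passion_index(2_500_000, 285_000)  # rounded figures

print(f"The Beatles: {beatles:.1f} plays per listener")
print(f"The Monkees: {monkees:.1f} plays per listener")
```

The tables in this post list the index as a whole number, which looks like the fractional part has simply been dropped (the Beatles come out just under 96 here and are listed as 95).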

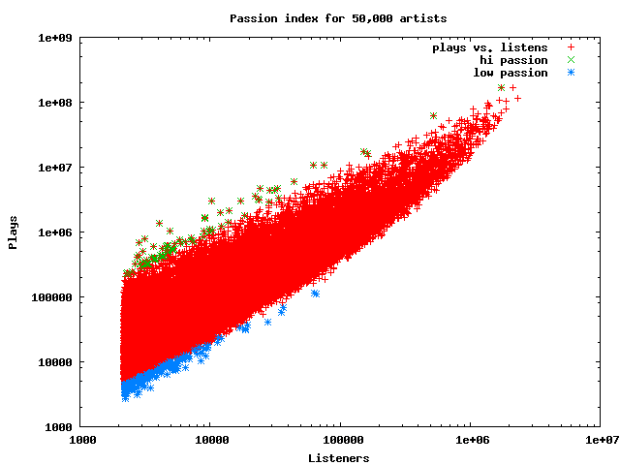

I calculated the passion index for a large collection of artists. I started with about a million artists (it is really nice to have all this data at the Echo Nest;), and filtered these down to the 50K most popular artists. I plotted the number of artist plays vs. the number of artist listeners for each of the 50K artists. The plot shows that most artists fall into the central band (normal passion), but some (the green points) are high passion artists and some (the blue points) are low passion artists.

For the 50K artists, the average number of plays per listener for an artist is just 11 (with a standard deviation of about 11.5). Considering that there are a substantial number of artists in my iTunes collection that I’ve played only once, this seems pretty reasonable.
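One way to put an individual artist in context is to express the passion index as a number of standard deviations from that average. The Python sketch below does this using the mean (11) and standard deviation (11.5) quoted above; treating the spread this way is my own simplification for illustration (the distribution is clearly skewed), and it is not necessarily how the bands in the plot were drawn.

```python
MEAN_PASSION = 11.0   # average plays per listener reported above
STD_PASSION = 11.5    # standard deviation reported above


def passion_zscore(plays: int, listeners: int) -> float:
    """Standard deviations above (+) or below (-) the average passion."""
    passion = plays / listeners
    return (passion - MEAN_PASSION) / STD_PASSION


beatles_z = passion_zscore(167_765_187, 1_748_159)   # The Beatles
soft_cell_z = passion_zscore(2_016_898, 332_072)     # Soft Cell

print(f"The Beatles: {beatles_z:+.2f}")
print(f"Soft Cell:   {soft_cell_z:+.2f}")
```

Because the index cannot go below zero, no artist can be even one standard deviation below the average, while high-passion artists can sit many deviations above it.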

So who are the artists with the highest passion index? Here are the top ten:

| Passion | Listeners | Plays | Artist |
|---|---|---|---|
| 332 | 4065 | 1352719 | 上海アリス幻樂団 |
| 292 | 10374 | 3032373 | Belo |
| 245 | 3147 | 773959 | Petos |
| 241 | 2829 | 683191 | Reilukerho |
| 208 | 4887 | 1020538 | Sound Horizon |
| 190 | 24422 | 4652968 | 동방신기 |
| 185 | 9133 | 1691866 | 岡崎律子 |
| 175 | 9171 | 1611106 | Kollegah |
| 173 | 17279 | 3004410 | Super Junior |
| 170 | 62592 | 10662940 | Böhse Onkelz |

I didn’t recognize any of these artists (and I’m not even sure if 上海アリス幻樂団 is really an artist – according to the Japanese Wikipedia it is a fan club in Japan to produce a music game coterie – whatever that means). Belo is a Brazilian pop artist that does indeed seem to have some rather passionate fans.

It is not surprising that it is hard for popular artists to rank at the very top of the passion index. Popular artists are exposed to many, many listeners which can easily reduce the passion index. Here are the top passion-ranked artists drawn from the top-1000 most popular artists:

| Passion | Listeners | Plays | Artist |
|---|---|---|---|
| 115 | 527653 | 60978053 | In Flames |
| 95 | 1748159 | 167765187 | The Beatles |
| 79 | 2140659 | 170106143 | Radiohead |
| 78 | 282308 | 22071498 | Die Ärzte |
| 75 | 269052 | 20293399 | Mindless Self Indulgence |
| 75 | 691100 | 52217023 | Nightwish |
| 74 | 332658 | 24645786 | Porcupine Tree |
| 74 | 1056834 | 79135038 | Nine Inch Nails |
| 72 | 384574 | 27901385 | Opeth |
| 70 | 601587 | 42563097 | Rise Against |
| 69 | 357317 | 24911669 | Sonata Arctica |
| 69 | 1364096 | 95399150 | Metallica |
| 66 | 460518 | 30625121 | Children of Bodom |
| 66 | 619396 | 41440369 | Paramore |
| 65 | 504464 | 33271871 | Dream Theater |
| 65 | 1391809 | 90888046 | Pink Floyd |
| 64 | 540184 | 34635084 | Brand New |
| 62 | 862468 | 54094977 | Iron Maiden |
| 62 | 1681914 | 105935202 | Muse |
| 61 | 381942 | 23478290 | Beirut |

I find it interesting to see all of the heavy metal bands in the top 20. Metal fans are indeed true fans.
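A list like the one above comes from two steps: first restrict to the most popular artists, then rank that subset by passion. Here is a rough Python sketch of those two steps. It assumes that "most popular" means "most listeners", which the post does not actually spell out, and the function name and the five-artist sample are only for illustration (the counts are taken from the tables in this post).

```python
# (artist, listeners, plays), with counts taken from the tables in this post.
ARTISTS = [
    ("Belo", 10_374, 3_032_373),
    ("In Flames", 527_653, 60_978_053),
    ("The Beatles", 1_748_159, 167_765_187),
    ("Radiohead", 2_140_659, 170_106_143),
    ("Coldplay", 2_313_815, 115_653_456),
]


def top_passion_among_popular(artists, popular_n, top_k):
    """Rank the `popular_n` most-listened-to artists by plays per listener."""
    # Step 1: keep only the most popular artists (assumed: by listener count).
    popular = sorted(artists, key=lambda a: a[1], reverse=True)[:popular_n]
    # Step 2: rank that subset by passion index (plays / listeners).
    ranked = sorted(popular, key=lambda a: a[2] / a[1], reverse=True)
    return [name for name, _, _ in ranked[:top_k]]


top = top_passion_among_popular(ARTISTS, popular_n=4, top_k=3)
print(top)
```

In this small sample Belo has by far the highest passion index, but with `popular_n=4` it is filtered out in the first step because it has the fewest listeners, which is the effect the popularity cut-off is meant to have.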

Going to the other end of passion, we find the 20 popular artists that have the least passionate fans:

| Passion | Listeners | Plays | Artist |
|---|---|---|---|
| 6 | 270692 | 1767977 | Julie London |
| 6 | 284087 | 1964292 | Smoke City |
| 6 | 294100 | 1784358 | Dinah Washington |
| 6 | 295200 | 1799303 | The Bangles |
| 6 | 295990 | 1832771 | Donna Summer |
| 6 | 306018 | 1905285 | Bonnie Tyler |
| 6 | 307407 | 2123599 | Buffalo Springfield |
| 6 | 311543 | 2085085 | Franz Schubert |
| 6 | 312078 | 1909769 | The Hollies |
| 6 | 313732 | 2190008 | Tom Jones |
| 6 | 325454 | 2025366 | Eric Prydz |
| 6 | 331837 | 2259892 | Sarah Vaughan |
| 6 | 332072 | 2016898 | Soft Cell |
| 6 | 407622 | 2622570 | Steppenwolf |
| 5 | 275770 | 1605268 | Diana Ross |
| 5 | 281037 | 1615125 | Isaac Hayes |
| 5 | 282095 | 1685959 | The Isley Brothers |
| 5 | 283467 | 1666824 | Survivor |
| 5 | 311867 | 1694947 | Peggy Lee |
| 5 | 333437 | 1925611 | Wham! |
| 5 | 388183 | 2244878 | Kool & The Gang |

I guess people are not too passionate about Soft Cell.

Here’s a passion chart for the top 100 most popular artists. Even the artists at the bottom of this chart are way above average on the passion index.

| Passion | Listeners | Plays | Artist |

|---|---|---|---|

| 95 | 1748159 | 167765187 | The Beatles |

| 79 | 2140659 | 170106143 | Radiohead |

| 74 | 1056834 | 79135038 | Nine Inch Nails |

| 69 | 1364096 | 95399150 | Metallica |

| 65 | 1391809 | 90888046 | Pink Floyd |

| 62 | 1681914 | 105935202 | Muse |

| 61 | 1397442 | 85685015 | System of a Down |

| 61 | 1403951 | 86849524 | Linkin Park |

| 60 | 1346298 | 81762621 | Death Cab for Cutie |

| 57 | 1060269 | 61127025 | Fall Out Boy |

| 56 | 1155877 | 65324424 | Arctic Monkeys |

| 55 | 1897332 | 104932225 | Red Hot Chili Peppers |

| 54 | 950416 | 52019102 | My Chemical Romance |

| 50 | 1131952 | 56622835 | blink-182 |

| 49 | 2313815 | 115653456 | Coldplay |

| 48 | 964970 | 47102550 | Sigur Rós |

| 48 | 1108397 | 53260614 | Modest Mouse |

| 48 | 1350931 | 65865988 | Placebo |

| 47 | 1129004 | 53771343 | Jack Johnson |

| 44 | 1297020 | 57111763 | Led Zeppelin |

| 43 | 1011131 | 43930085 | Kings of Leon |

| 42 | 947904 | 39970477 | Marilyn Manson |

| 42 | 1065375 | 45459226 | Britney Spears |

| 42 | 1246213 | 52656343 | Incubus |

| 42 | 1256717 | 53610102 | Bob Dylan |

| 41 | 1527721 | 62654675 | Green Day |

| 41 | 1881718 | 78473290 | The Killers |

| 40 | 1023666 | 41288978 | Queens of the Stone Age |

| 40 | 1057539 | 42472755 | Kanye West |

| 40 | 1108044 | 44845176 | Interpol |

| 40 | 1247838 | 49914554 | Depeche Mode |

| 40 | 1318140 | 53594021 | Bloc Party |

| 39 | 1266502 | 49492511 | The White Stripes |

| 38 | 1048025 | 40174997 | Evanescence |

| 38 | 1091324 | 42195854 | Pearl Jam |

| 38 | 1734180 | 67541885 | Nirvana |

| 37 | 978342 | 36561552 | The Kooks |

| 37 | 1097968 | 41046538 | The Shins |

| 37 | 1114190 | 42051787 | The Offspring |

| 37 | 1379096 | 51313607 | The Cure |

| 37 | 1566660 | 58923515 | Foo Fighters |

| 36 | 1326946 | 48738588 | The Smashing Pumpkins |

| 35 | 1091278 | 39194471 | Björk |

| 35 | 1271334 | 45619688 | The Strokes |

| 34 | 955876 | 33376744 | Jimmy Eat World |

| 34 | 1251461 | 42949597 | Daft Punk |

| 33 | 989230 | 33257150 | Pixies |

| 33 | 1012060 | 34225186 | Eminem |

| 33 | 1051836 | 35529878 | Avril Lavigne |

| 33 | 1110087 | 36785736 | Johnny Cash |

| 33 | 1121138 | 37645208 | AC/DC |

| 33 | 1161536 | 38615571 | Air |

| 32 | 961327 | 31286528 | The Prodigy |

| 32 | 1038491 | 33270172 | Amy Winehouse |

| 32 | 1410438 | 45614720 | David Bowie |

| 32 | 1641475 | 52612972 | Oasis |

| 32 | 1693023 | 54971351 | U2 |

| 31 | 1258854 | 39598249 | Madonna |

| 31 | 1622198 | 51669720 | Queen |

| 30 | 1032223 | 31750683 | Portishead |

| 30 | 1178755 | 35600916 | Rage Against the Machine |

| 30 | 1249417 | 38284572 | The Doors |

| 30 | 1393406 | 42717325 | Beck |

| 29 | 1030982 | 30044419 | Yeah Yeah Yeahs |

| 29 | 1187160 | 34712193 | Massive Attack |

| 29 | 1348662 | 39131095 | Weezer |

| 29 | 1361510 | 39753640 | Snow Patrol |

| 28 | 985715 | 28485679 | The Postal Service |

| 28 | 1045205 | 30105531 | The Clash |

| 28 | 1305984 | 37807059 | Guns N’ Roses |

| 28 | 1532003 | 43998517 | Franz Ferdinand |

| 27 | 1000950 | 27262441 | Nickelback |

| 27 | 1395278 | 37856776 | Gorillaz |

| 26 | 1503035 | 40161219 | The Rolling Stones |

| 25 | 1345571 | 33741254 | R.E.M. |

| 24 | 1311410 | 32588864 | Moby |

| 23 | 973319 | 22962953 | Audioslave |

| 23 | 976745 | 22557111 | 3 Doors Down |

| 23 | 1123549 | 26696878 | Keane |

| 22 | 998933 | 21995497 | Justin Timberlake |

| 22 | 1025990 | 23145062 | Rihanna |

| 22 | 1109529 | 24687603 | Maroon 5 |

| 22 | 1120968 | 24796436 | Jimi Hendrix |

| 22 | 1160410 | 26641513 | [unknown] |

| 21 | 1151225 | 25081110 | The Who |

| 20 | 1057288 | 22084785 | The Chemical Brothers |

| 20 | 1105159 | 22925198 | Kaiser Chiefs |

| 20 | 1117306 | 22390847 | Nelly Furtado |

| 20 | 1201937 | 25019675 | Aerosmith |

| 20 | 1253613 | 25582503 | Blur |

| 19 | 968885 | 19219364 | Simon & Garfunkel |

| 19 | 974687 | 18528890 | Christina Aguilera |

| 19 | 1025305 | 20157209 | The Cranberries |

| 19 | 1144816 | 22252304 | Michael Jackson |

| 16 | 996649 | 16234996 | Black Eyed Peas |

| 16 | 1019886 | 16618386 | Eric Clapton |

| 15 | 980141 | 15317182 | The Police |

| 15 | 981451 | 15289554 | Dido |

| 14 | 973520 | 13781896 | Elton John |

| 13 | 949742 | 12624027 | The Verve |

I think it would be really interesting to incorporate the passion index into a recommender. Instead of just recommending artists that are similar to artists that a listener already likes, the recommender could run the similar artists through a passion filter and offer up the ones that listeners are most passionate about. I think these recommendations would be more valuable to the listener.
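Here is a rough sketch of what such a passion filter might look like. The similar-artist list and its similarity scores are invented for the example, as are the function name and the `min_passion` threshold; the listener and play counts come from the tables above.

```python
def passion_filtered_recs(similar, stats, min_passion=30, limit=5):
    """Keep similar artists whose passion index clears a threshold.

    similar: list of (artist_name, similarity) pairs from any recommender.
    stats:   dict mapping artist_name -> (listeners, plays).
    Returns up to `limit` artist names, most passionate first.
    """
    scored = []
    for name, _similarity in similar:
        if name not in stats:
            continue  # no listening data, so no passion score
        listeners, plays = stats[name]
        passion = plays // listeners  # assumed definition of the index
        if passion >= min_passion:
            scored.append((passion, name))
    scored.sort(reverse=True)
    return [name for _passion, name in scored[:limit]]


# Listener and play counts taken from the tables in this post.
STATS = {
    "Iron Maiden": (862468, 54094977),
    "Opeth": (384574, 27901385),
    "Nickelback": (1000950, 27262441),
    "Audioslave": (973319, 22962953),
    "Guns N' Roses": (1305984, 37807059),
}

# Hypothetical similar-artist output for a Metallica fan (scores are made up).
SIMILAR_TO_METALLICA = [
    ("Iron Maiden", 0.91),
    ("Guns N' Roses", 0.84),
    ("Audioslave", 0.80),
    ("Nickelback", 0.62),
    ("Opeth", 0.58),
]

print(passion_filtered_recs(SIMILAR_TO_METALLICA, STATS))
```

In this sketch similarity only decides who is a candidate and passion decides the order. A real system would probably blend the two scores rather than drop similarity entirely.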

Music Explorer FX

Posted by Paul in java, Music, recommendation, The Echo Nest, visualization on June 11, 2009

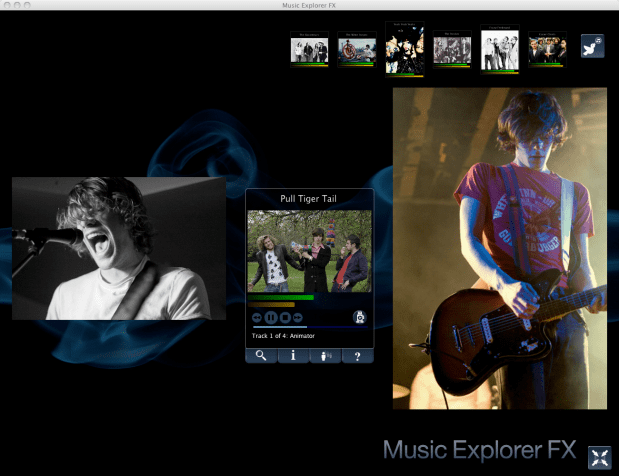

Sten has posted a link to his super nifty Music Explorer FX. Music Explorer FX is a JavaFX application for exploring and discovering music. In some ways, the application is like a much slicker version of Music Plasma or Musicovery. You can explore a particular neighborhood in the music world – looking at artist photos and videos, listening to music, reading reviews and blog posts, and following paths to similar artists. It’s a very engaging application that makes it easy to learn about new bands. I especially like the image gallery mode – when I find a band that I think might be interesting, I hit the play button to listen to their music, and then enter the image gallery to get a slide show of the band playing. Here’s an example of ‘Pull Tiger Tail’ – a band that I just learned about today while exploring with MEFX.

Sten uses a number of APIs to make MEFX happen. He uses the Echo Nest for artist search and to get all sorts of info including artist familiarity, hotness, artist similarity, blogs, news, reviews and audio. He gets artist images from Flickr and Last.fm – and just to make sure he’s relevant in this Twitter-centric world, he uses the Twitter API to let you tweet about any interesting paths you’ve taken through the music space.

We are living in a remarkable world now – there’s such an incredible amount of music available. There are millions of artists creating music in all styles. The challenge for today’s music listener is to find a way to navigate through this music space to find music that they will like. Traditional music recommenders can help, but I really think that applications like MEFX that enable exploration of the music space are going to be important tools for the adventurous music listener.

Spotify + Echo Nest == w00t!

Posted by Paul in Music, The Echo Nest, web services on May 19, 2009

Yesterday, at the SanFran MusicTech Summit, I gave a sneak preview that showed how Spotify is tapping into the Echo Nest platform to help their listeners explore for and discover new music. I must say that I am pretty excited about this. Anyone who has read this blog and its previous incarnation as ‘Duke Listens!’ knows that I am a long time enthusiast of Spotify (both the application and the team). I first blogged about Spotify way back in January of 2007 while they were still in stealth mode. I blogged about the Spotify haircuts, and their serious demeanor:

I blogged about the Spotify application when it was released to private beta: Woah – Spotify is pretty cool, and continued to blog about them every time they added another cool feature.

I’ve been a daily user of Spotify for 18 months now. It is one of my favorite ways to listen to music on my computer. It gives me access to just about any song that I’d like to hear (with a few notable exceptions – still no Beatles for instance).

It is clear to anyone who uses Spotify for a few hours that having access to millions and millions of songs can be a bit daunting. With so many artists and songs to choose from, it can be hard to decide what to listen to. Barry Schwartz calls this the Paradox of Choice: he says too many options can be confusing and can create anxiety in a consumer. The folks at Spotify understand this. From the start they’ve been building tools to help make it easier for listeners to find music. For instance, they allow you to easily share playlists with your friends. I can create a music inbox playlist that any Spotify user can add music to. If I give the URL to my friends (or to my blog readers) they can add music that they think I should listen to.

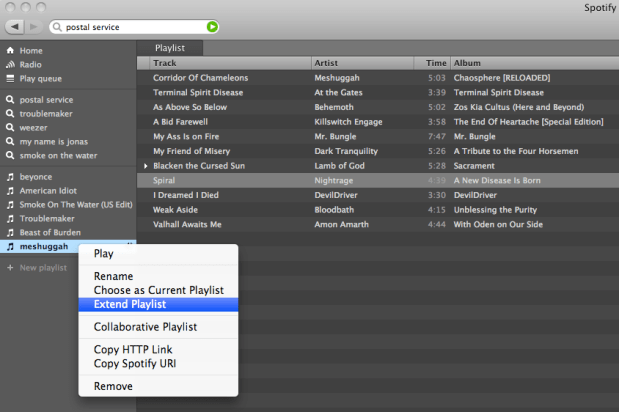

Now with the Spotify / Echo Nest connection, Spotify is going one step further in helping their listeners deal with the paradox of choice. They are providing tools to make it easier for people to explore for and discover new music. The first way that Spotify is tapping into the Echo Nest platform is very simple and intuitive. Right click on a playlist, and select ‘Extend Playlist’. When you do that, the playlist will automatically be extended with songs that fit in well with songs that are already in the playlist. Here’s an example:

So how is this different from any other music recommender? Well, there are a number of things going on here. First of all, most music recommenders rely on collaborative filtering (a.k.a. the wisdom of the crowds) to recommend music. This type of music recommendation works great for popular and familiar artist recommendations … if you like the Beatles, you may indeed like the Rolling Stones. But Collaborative Filtering (CF) based recommendations don’t work well when trying to recommend music at the track level. The data is often just too sparse to make recommendations. The wisdom of the crowds model fails when there is no crowd. When one is dealing with a Spotify-sized music collection of many millions of songs, there just isn’t enough user data to give effective recommendations for all of the tracks. The result is that popular tracks get recommended quite often, while less well known music is ignored. To deal with this problem many CF-based recommenders will rely on artist similarity and then select tracks at random from the set of similar artists. This approach doesn’t always work so well, especially if you are trying to make playlists with the recommender. For example, you may want a playlist of acoustic power ballads by hair metal bands of the 80s. You could seed the playlist with a song like Mötley Crüe’s Home Sweet Home, and expect to get similar power ballads, but instead you’d find your playlist populated with standard glam metal fare, with only a random chance that you’d have other acoustic power ballads. There are a boatload of other issues with wisdom of the crowds recommendations. I’ve written about them previously; suffice it to say that it is a challenge to get a CF-based recommender to give you good track-level recommendations.
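To make the “no crowd” problem concrete, here is a toy item-to-item recommender based on co-occurrence counts. It is only a sketch of the general CF idea, not any particular production system, and the users and track names are invented.

```python
from collections import Counter, defaultdict
from itertools import combinations


def build_co_occurrence(histories):
    """Count how often each pair of tracks shows up in the same listener's history."""
    co = defaultdict(Counter)
    for tracks in histories.values():
        for a, b in combinations(sorted(set(tracks)), 2):
            co[a][b] += 1
            co[b][a] += 1
    return co


def recommend(co, seed, n=3):
    """Recommend the tracks most often co-listened with the seed track."""
    return [track for track, _count in co.get(seed, Counter()).most_common(n)]


# Invented listening histories: three users share the hits,
# one lonely user has played a long-tail track and nothing else.
HISTORIES = {
    "user1": ["hit_a", "hit_b"],
    "user2": ["hit_a", "hit_b", "hit_c"],
    "user3": ["hit_a", "hit_c"],
    "user4": ["obscure_ballad"],
}

co = build_co_occurrence(HISTORIES)
print(recommend(co, "hit_a"))           # the other hits
print(recommend(co, "obscure_ballad"))  # nothing: there is no crowd
```

The popular tracks recommend each other happily, while the long-tail track has no co-listens at all and so gets no neighbors. It will also never appear in anyone else’s recommendations.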

The Echo Nest platform takes a different approach to track-level recommendation. Here’s what we do:

- Read and understand what people are saying about music – we crawl every corner of the web and read every news article, blog post, music review and web page for every artist, album and track. We apply statistical and natural language processing to extract meaning from all of these words. This gives us a broad and deep understanding of the global online conversation about music.

- Listen to all music – we apply signal processing and machine learning algorithms to audio to extract a number of perceptual features about music. For every song, we learn a wide variety of attributes about the song including the timbre, song structure, tempo, time signature, key, loudness and so on. We know, for instance, where every drum beat falls in Kashmir, and where the guitar solo starts in Starship Trooper.

- We combine this understanding of what people are saying about music and our understanding of what the music sounds like to build a model that can relate the two – to give us a better way of modeling a listener’s reaction to music. There’s some pretty hardcore science and math here. If you are interested in the gory details, I suggest that you read Brian’s Thesis: Learning the meaning of music.

What this all means is that with the Echo Nest platform, if you want to make a playlist of acoustic hair metal power ballads, we’ll be able to do that – we know who the hair metal bands are, and we know what a power ballad sounds like. And since we don’t rely on the wisdom of the crowds for recommendation we can avoid some of the nasty problems that collaborative filtering can lead to. I think that when people get a chance to play with the ‘Extend Playlist’ feature they’ll be happy with the listening experience.
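As a very rough illustration of combining the two kinds of knowledge, the sketch below uses descriptive tags as a stand-in for what people say about music and a couple of audio attributes as a stand-in for what the music sounds like. It is not the Echo Nest implementation; the `Track` fields, the scaling constants, and all of the data are assumptions made up for the example.

```python
import math
from dataclasses import dataclass

# Assumed scales so that tempo and loudness differences are comparable.
TEMPO_SCALE = 50.0      # beats per minute
LOUDNESS_SCALE = 10.0   # decibels


@dataclass(frozen=True)
class Track:
    """A track with text-derived tags and audio-derived attributes (hypothetical)."""
    title: str
    tags: frozenset
    tempo: float
    loudness: float


def tag_overlap(a, b):
    """Jaccard overlap between the two tracks' tag sets."""
    union = a.tags | b.tags
    return len(a.tags & b.tags) / len(union) if union else 0.0


def audio_distance(a, b):
    """Scaled Euclidean distance over tempo and loudness."""
    return math.hypot(
        (a.tempo - b.tempo) / TEMPO_SCALE,
        (a.loudness - b.loudness) / LOUDNESS_SCALE,
    )


def extend_playlist(seed, catalog, min_overlap=0.5, n=2):
    """Pick tracks described like the seed, ordered by how much they sound like it."""
    candidates = [
        t for t in catalog
        if t != seed and tag_overlap(seed, t) >= min_overlap
    ]
    return sorted(candidates, key=lambda t: audio_distance(seed, t))[:n]


HAIR_METAL = frozenset({"hair metal", "80s"})
SEED = Track("Seed ballad", HAIR_METAL, tempo=70, loudness=-12)
CATALOG = [
    SEED,
    Track("Ballad B", HAIR_METAL, tempo=74, loudness=-11),
    Track("Fast rocker", HAIR_METAL, tempo=150, loudness=-5),
    Track("Ballad C", HAIR_METAL | {"ballad"}, tempo=66, loudness=-14),
    Track("Folk tune", frozenset({"folk", "acoustic"}), tempo=72, loudness=-12),
]

print([t.title for t in extend_playlist(SEED, CATALOG)])
```

The tags keep the playlist inside the hair metal neighborhood, and the audio distance pushes the slow, quiet tracks ahead of the fast rocker. The folk tune sounds close to the seed but is filtered out because nobody describes it as hair metal.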

It was great fun giving the Spotify demo at the SanFran MusicTech Summit. Even though Spotify is not available here in the U.S., the buzz that is occurring in Europe around Spotify is leaking across the ocean. When I announced that Spotify would be using the Echo Nest, there was an audible gasp from the audience. Some people were seeing Spotify for the first time, but everyone knew about it. It was great to be able to show Spotify using the Echo Nest. This demo was just a sneak preview. I expect there will be lots more interesting things to come. Stay tuned.

#recsplease – the Blip.fm Recommender bot

Posted by Paul in Music, recommendation, The Echo Nest, web services on May 5, 2009

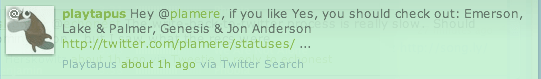

Jason has put together a mashup (ah, that term seems so old and dated now) that combines twitter, blip.fm, and the Echo Nest. When you Blip a song, just add the tag #recsplease to the twitter blip and you’ll get a reply with some artists that you might like to listen to.

This is similar to recomme, developed by Adam Lindsay, but recomme has been down for a few weeks, so clearly there was a twitter-music-recommendation gap that needed to be filled.

Check out Jason’s Blip.fm/twitter recommender bot.