
Visualizing the Structure of Pop Music

Posted by Paul in code, data, ismir, Music, music information retrieval, The Echo Nest, visualization on November 19, 2012

The Infinite Jukebox generates plots of songs in which the most similar beats are connected by arcs. I call these plots cantograms. For instance, below is a labeled cantogram for the song Rolling in the Deep by Adele. The song starts at 3:00 on the circle and proceeds clockwise, beat by beat completely around the circle. I’ve labeled the plot so you can see how it aligns with the music. There’s an intro, a first verse, a chorus, a second verse, etc. until the outro and the end of the song.

One thing that’s interesting is that most of the beat similarity connections occur between the beats in the three instances of the chorus. This certainly makes intuitive sense. The verses have different lyrics, so for the most part they won’t be too similar to each other, but the choruses have the same lyrics, the same harmony, the same instrumentation. For all we know, they may even be exactly the same audio: that perfect performance, cut and pasted three times by the audio engineer to make the best sounding version of the song.
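For readers who want to play with the idea, here is a minimal sketch of how a plot like this could be laid out. It is not the Infinite Jukebox's actual code: the per-beat feature vectors, the Euclidean distance, and the `threshold` parameter are all assumptions made for illustration. Beats are placed clockwise around a circle starting at 3:00, and any two beats whose features are close enough become candidates for an arc.

```python
import math


def beat_angle(index, total):
    """Angle in degrees, measured clockwise from 3 o'clock, for a given beat."""
    return (360.0 * index / total) % 360.0


def beat_position(index, total, radius=1.0):
    """(x, y) in screen coordinates, where y grows downward.

    With y pointing down, an increasing angle moves clockwise on screen.
    """
    theta = math.radians(beat_angle(index, total))
    return (radius * math.cos(theta), radius * math.sin(theta))


def similar_pairs(features, threshold):
    """Return (i, j, distance) for every beat pair closer than `threshold`.

    `features` is a hypothetical list of per-beat feature vectors; the
    real system may use a different similarity measure entirely.
    """
    pairs = []
    for i in range(len(features)):
        for j in range(i + 1, len(features)):
            distance = math.dist(features[i], features[j])
            if distance < threshold:
                pairs.append((i, j, distance))
    return pairs


# Toy example: beats 0 and 2 sound alike, as do beats 1 and 3.
toy_features = [[0.0, 0.0], [5.0, 5.0], [0.0, 0.1], [5.0, 5.1]]
arcs = similar_pairs(toy_features, threshold=0.5)
```

Each entry in `arcs` names two beats to connect; drawing a curve between `beat_position(i, total)` and `beat_position(j, total)` gives the arc.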

Now take a look at the cantogram for another popular song. The plot below shows the beat similarities for the song Tik Tok by Ke$ha. What strikes me the most about this plot is how similar it looks to the plot for Rolling in the Deep. It has the characteristic longer intro+first verse, some minor inter-verse similarities and the very strong similarities between the three choruses.

As we look at more plots for modern pop music we see the same pattern over and over again. In this cantogram for Lady Gaga’s Paparazzi we again see the same pattern.

We see it in the plot for Justin Bieber’s Baby:

Taylor Swift’s Fearless has two verses before the first chorus, shifting it further around the circle, but other than that the pattern holds:

Now compare and contrast the pop cantograms with those from other styles of music. First up is Led Zeppelin’s Stairway to Heaven. There’s no discernible repeating chorus or global song repetition; the only real long-arc repetition occurs during the guitar solo in the last quarter of the song.

Here’s another style of music: Deadmau5’s Raise Your Weapon. This is electronica (and maybe some dubstep). Clearly from the cantogram we can see that it is not a traditional pop song. There is very little long-arc repetition, with the densest cluster being the final dubstep break.

Dave Brubeck’s Take Five has a very different pattern, with lots of short term repetition during the first half of the song, while during the second half with Joe Morello’s drum solo there’s a very different pattern.

Green Grass and High Tides has yet a different pattern – no three choruses and out here. (By the way, the final guitar solo is well worth listening to in the Infinite Jukebox. It is the guitar solo that never ends).

The progressive rock anthem Roundabout doesn’t have the Pop Pattern

Nor does Yo-Yo Ma’s performance of the Cello suite No. 1.

Looking at the pop plots one begins to understand that pop music really could be made in a factory. Each song is cut from the same mold. In fact, one of the most successful pop songs in recent years was produced by a label with Factory in its name. Looking at Rebecca Black’s Friday we can tell right away that it is a pop song:

Compare that plot to this year’s YouTube breakout, Thanksgiving by Nicole Westbrook (another Ark Music Factory assembly):

The plot has all the makings of the standard pop song for the 2010s.

In the music information retrieval research community there has been quite a bit of research into algorithmically extracting song structure, and visualizations are often part of this work. If you are interested in learning more about this research, I suggest looking at some of the publications by Meinard Müller and Craig Sapp.

Of course, not every pop song will follow the pattern that I’ve shown here. Nevertheless, I find it interesting that this very simple visualization is able to show us something about the structure of the modern pop song, and how similar this structure is across many of the top pop songs.

Update: since publishing this post I’ve updated the layout algorithm in the Infinite Jukebox so that songs start and end at 12 noon and not 3 PM, so the plots you see in this post are rotated 90 degrees clockwise from what you would see in the jukebox.

Do you do Music Information Retrieval?

Posted by Paul in code, ismir, Music, music information retrieval, The Echo Nest on September 10, 2010

We’re ramping up hiring at the Echo Nest. We’re looking for good MIR people at different experience levels to help us realize the company’s vision of knowing everything about all music automatically. I would guess that we are the closest analog to ISMIR in the industry – we only do music (audio and text), the base technology is straight out of our dissertations (brian, tristan) and we’re active in conferences and universities. We work with an amazing amount of music data on a daily basis and we sell it to some great people and companies that are changing the face of music.

MIR-background candidates are especially encouraged to apply as long as you have relevant experience and want to work on implementation at a very fast growing startup. These are almost all full time positions in our offices near Boston, MA USA. Even if you’re not graduating for a while let us know if you’re interested now.

More info at: http://the.echonest.com/company/jobs/

What Makes Beat Tracking Difficult? A Case Study on Chopin Mazurkas

Posted by Paul in events, ismir, music information retrieval, research on August 13, 2010

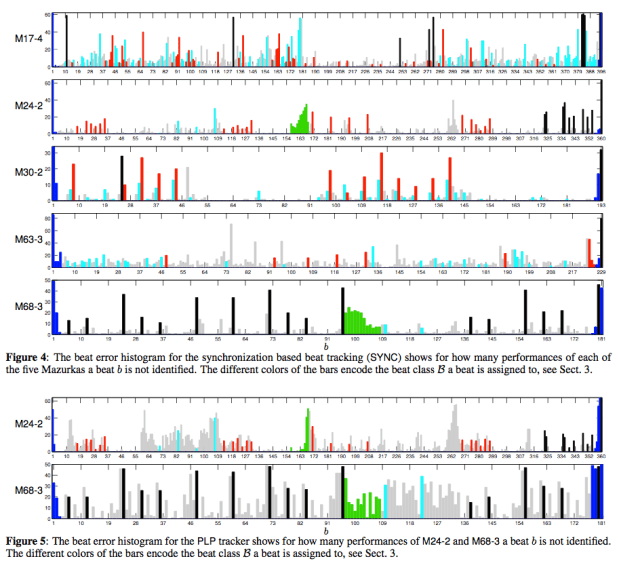

What Makes Beat Tracking Difficult? A Case Study on Chopin Mazurkas

Peter Grosche, Meinard Müller and Craig Stuart Sapp

ABSTRACT – The automated extraction of tempo and beat information from music recordings is a challenging task. Especially in the case of expressive performances, current beat tracking approaches still have significant problems to accurately capture local tempo deviations and beat positions. In this paper, we introduce a novel evaluation framework for detecting critical passages in a piece of music that are prone to tracking errors. Our idea is to look for consistencies in the beat tracking results over multiple performances of the same underlying piece. As another contribution, we further classify the critical passages by specifying musical properties of certain beats that frequently evoke tracking errors. Finally, considering three conceptually different beat tracking procedures, we conduct a case study on the basis of a challenging test set that consists of a variety of piano performances of Chopin Mazurkas. Our experimental results not only make the limitations of state-of-the-art beat trackers explicit but also deepens the understanding of the underlying music material.
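The core idea in the abstract, looking for beats that go wrong consistently across many performances of the same piece, can be illustrated with a toy sketch. This is not the authors' evaluation framework: the input format (one list of booleans per performance, aligned to score beats) and the `min_error_rate` cutoff are assumptions chosen to keep the example small.

```python
def critical_beats(results, min_error_rate=0.5):
    """Find score beats that are mis-tracked in many performances.

    `results` holds one list per performance; entry b is True when the
    tracker found score beat b correctly in that performance.
    Returns (beat_index, error_rate) pairs at or above the cutoff.
    """
    if not results:
        return []
    n_beats = len(results[0])
    if any(len(performance) != n_beats for performance in results):
        raise ValueError("all performances must cover the same score beats")

    critical = []
    for beat in range(n_beats):
        errors = sum(1 for performance in results if not performance[beat])
        rate = errors / len(results)
        if rate >= min_error_rate:
            critical.append((beat, rate))
    return critical


# Four hypothetical performances of a three-beat passage.
tracking = [
    [True, False, True],
    [True, False, False],
    [True, False, True],
    [True, True, True],
]
flagged = critical_beats(tracking)
```

A beat that fails in one performance may just reflect that pianist's rubato; a beat that fails in most of them points at something in the music itself.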

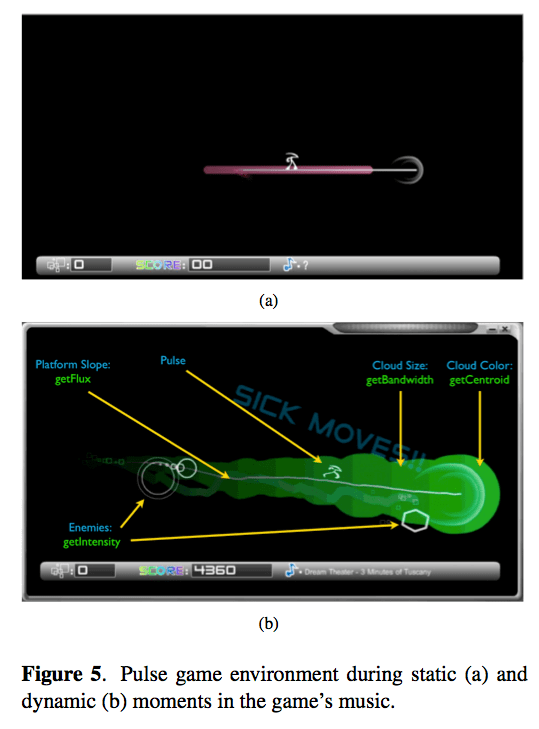

An Audio Processing Library for MIR Application Development in Flash

Posted by Paul in events, ismir, music information retrieval, research on August 13, 2010

An Audio Processing Library for MIR Application Development in Flash

Jeffrey Scott, Raymond Migneco, Brandon Morton, Christian M. Hahn, Paul Diefenbach and Youngmoo E. Kim

The Audio Processing Library for Flash affords music-IR researchers the opportunity to generate rich, interactive, real-time music-IR driven applications. The various levels of complexity and control as well as the capability to execute analysis and synthesis simultaneously provide a means to generate unique programs that integrate content based retrieval of audio features. We have demonstrated the versatility and usefulness of ALF through the variety of applications described in this paper. As interest in music driven applications intensifies, it is our goal to enable the community of developers and researchers in music-IR and related fields to generate interactive web-based media.

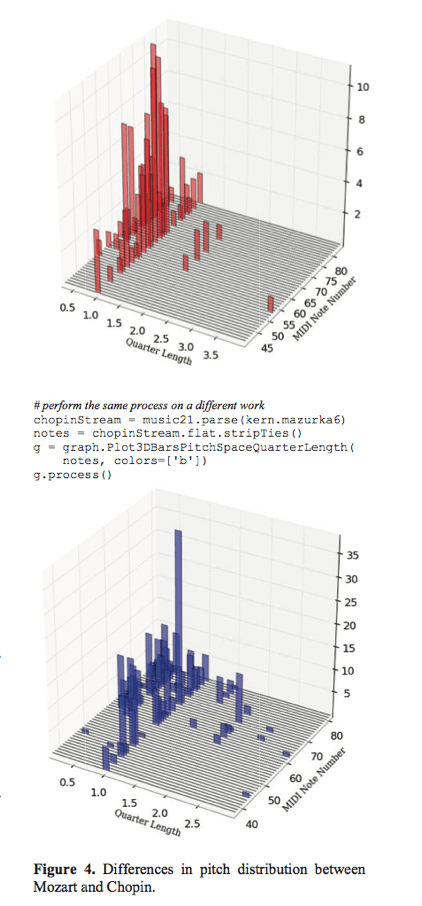

Music21: A Toolkit for Computer-Aided Musicology and Symbolic Music Data

Music21: A Toolkit for Computer-Aided Musicology and Symbolic Music Data

Michael Scott Cuthbert and Christopher Ariza

ABSTRACT – Music21 is an object-oriented toolkit for analyzing, searching, and transforming music in symbolic (score-based) forms. The modular approach of the project allows musicians and researchers to write simple scripts rapidly and reuse them in other projects. The toolkit aims to provide powerful software tools integrated with sophisticated musical knowledge to both musicians with little programming experience (especially musicologists) and to programmers with only modest music theory skills.

Music21 looks to be a pretty neat toolkit for analyzing and manipulating symbolic music. It’s like Echo Nest Remix for MIDI. The blog has lots more info: music21 blog. You can get the toolkit here: music21

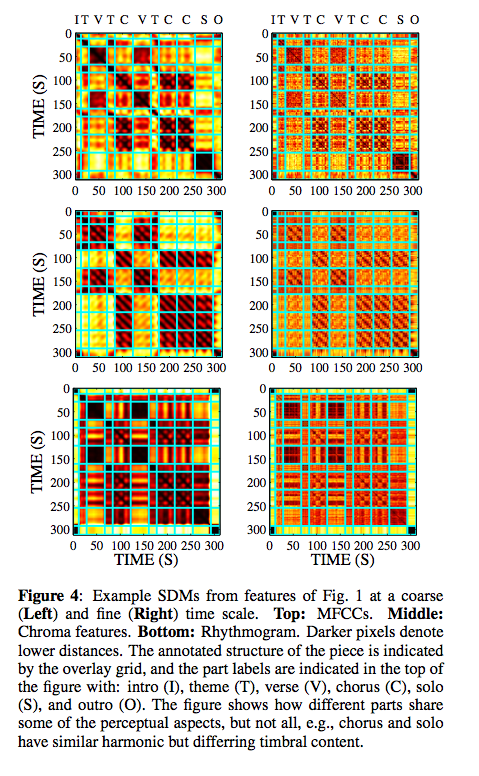

State of the Art Report: Audio-Based Music Structure Analysis

Posted by Paul in events, ismir, music information retrieval, research on August 13, 2010

State of the Art Report: Audio-Based Music Structure Analysis

Jouni Paulus, Meinard Müller and Anssi Klapuri

ABSTRACT – Humans tend to organize perceived information into hierarchies and structures, a principle that also applies to music. Even musically untrained listeners unconsciously analyze and segment music with regard to various musical aspects, for example, identifying recurrent themes or detecting temporal boundaries between contrasting musical parts. This paper gives an overview of state-of-the-art methods for computational music structure analysis, where the general goal is to divide an audio recording into temporal segments corresponding to musical parts and to group these segments into musically meaningful categories. There are many different criteria for segmenting and structuring music audio. In particular, one can identify three conceptually different approaches, which we refer to as repetition-based, novelty-based, and homogeneity-based approaches. Furthermore, one has to account for different musical dimensions such as melody, harmony, rhythm, and timbre. In our state-of-the-art report, we address these different issues in the context of music structure analysis, while discussing and categorizing the most relevant and recent articles in this field.

This presentation is an overview of the music structure analysis problem, and the methods proposed for solving it. The methods have been divided into three categories: novelty-based approaches, homogeneity-based approaches, and repetition-based approaches. The comparison of different methods has been problematic because of the differing goals, but current evaluations suggest that none of the approaches is clearly superior at this time, and that there is still room for considerable improvements.
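To make the novelty-based category a bit more concrete, here is a rough sketch of one widely used idea: slide a checkerboard-shaped kernel along the diagonal of a self-similarity matrix and look for peaks. I am not claiming this is the specific formulation the report surveys. The cosine similarity, the unweighted kernel, and the toy feature frames are assumptions made for illustration.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity of two vectors; 0.0 if either has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def self_similarity(frames):
    """Square matrix comparing every feature frame with every other frame."""
    return [[cosine_similarity(a, b) for b in frames] for a in frames]


def novelty_curve(ssm, half_width):
    """Checkerboard-kernel novelty along the diagonal of `ssm`.

    curve[t] scores a boundary between frames t-1 and t: similarity inside
    the past block and inside the future block counts positively, and
    similarity across the two blocks counts negatively.
    """
    n = len(ssm)
    curve = [0.0] * n
    for t in range(half_width, n - half_width + 1):
        score = 0.0
        for i in range(t - half_width, t + half_width):
            for j in range(t - half_width, t + half_width):
                same_side = (i < t) == (j < t)
                score += ssm[i][j] if same_side else -ssm[i][j]
        curve[t] = score
    return curve


# Toy "song": four frames of one texture followed by four of another.
frames = [[1.0, 0.0] for _ in range(4)] + [[0.0, 1.0] for _ in range(4)]
curve = novelty_curve(self_similarity(frames), half_width=2)
boundary = curve.index(max(curve))
```

In this toy input the curve peaks at frame 4, exactly where the texture changes. A repetition-based method would instead look for off-diagonal stripes in the same matrix.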

The ISMIR business meeting

Posted by Paul in events, ismir, music information retrieval, research on August 12, 2010

Notes from the ISMIR business meeting – this is a meeting with the board of ISMIR.

Officers

- President: J. Stephen Downie, University of Illinois at Urbana-Champaign, USA

- Treasurer: George Tzanetakis, University of Victoria, Canada

- Secretary: Jin Ha Lee, University of Illinois at Urbana-Champaign, USA

- President-elect: Tim Crawford, Goldsmiths College, University of London, UK

- Member-at-large: Doug Eck, University of Montreal, Canada

- Member-at-large: Masataka Goto, National Institute of Advanced Industrial Science and Technology, Japan

- Member-at-large: Meinard Mueller, Max-Planck-Institut für Informatik, Germany

Stephen reviewed the roles of the various officers and duties of the various committees. He reminded us that one does not need to be on the board to serve on a subcommittee.

Publication Issues

- website redesign

- Other communities hardly know about ISMIR. Want to help other communities be aware of our research. One way is to make more links to other communities. Entering committees in other communities.

Hosting Issue – will formalize documentation, location planning, site selection.

Name change? There was a nifty debate around the meaning of ISMIR. There was a proposal to change it to ‘International Society for Music Informatics Research’. I recommend, given Doug’s comments about YouTube from this morning, that we change the name to ‘International Society for Movie Informatics Research’.

Review Process: Good discussion about the review process – we want paper bidding and double-blind reviews. Helps avoid gender bias:

Doug snuck in the secret word ‘youtube’ too, just for those hanging out on IRC.

f(MIR) industrial panel

Posted by Paul in ismir, Music, music information retrieval, research on August 12, 2010

- Douglas Eck (Google)

- Greg Mead (Musicmetric)

- Martin Roth (RjDj)

- Ricardo Tarrasch (Meemix)

- moderator: Rebecca Fiebrink (Princeton)

- rjdj – music making apps on devices like iphones

- musicmetric tracks 3 areas: Social networks, network analysis (influential fans), text via focused crawlers, p2p networks

- meemix – music recommendation, artist radio, artist similarity, playlists. Pandora-like human analysis on 150K songs – then they learn these tags with machine learning. Look at which features best predict the tags. Important question is ‘what is important for the listeners’. Their aim is to find the best parameters for taste prediction.

- google – goal is to organize the world’s information. Doug would like to see an open API for companies to collaborate

Rebecca is the moderator.

What do you think is the next big thing? How is tech going to change things in the near future?

- Doug (Google) thinks that ‘music recommendation is solved’ – he’s excited about the cellphone. Also excited about programs like ChucK to make it easier for people to create music (nice pandering to the moderator, Doug!)

- Ricardo (MeeMix) – the laid back position is the future – reach the specific taste of a user. Personalized advertisements.

- Greg (MusicMetric) – Cloud-based services will help us understand what people want, which will lead to playlisting, recommendation, novel players.

- Martin (RjDj) – Thinks that the phone is really exciting – having all this power in the phone lets you do neat things. He’s excited about how people will be able to create music – using sensory inputs, ambient audio.

How will tech revolutionize music?

- Doug – being able to collaborate with Arcade Fire online

- Martin – musically illiterate should be able to make music

- Ricardo – we can help new artists reach the right fans

- Greg – services for helping artists, merchandising, ticket sales etc.

What are the most interesting problems or technical questions?

- Greg – interested in understanding the behavior of the fans, especially those on P2P networks. Huge amount of geographic-specific listener data

- Ricardo – more research around taste and recommendation

- Doug – a rant – he had a paper rejected because the paper had something to do with music generation.

- Rebecca – has a MIR for music Google group: MIR4Music

- Martin – engineering: increase performance in portable devices – research: how to extract music features from music cheaply

- Ricardo – drumming style is hard to extract – but actually not that important for taste prediction

How would you characterize the relationship between biz and academia?

- Greg – there is lots of ‘advanced research’ in academia, while in industry they look at much more applied problems

- Doug – suggests that the leader of an academic lab is key to bridging the gap between biz and academia. Grad students should be active in looking for the internships in industry to get a better understanding of what is needed in industry. It is all about getting grad students jobs in industry.

Audience Q/A

- what tools can we create to help producers of music? – Answer: Youtube. Martin talks about understanding how people use music creation tools. Doug: “Don’t build things that people don’t want.” – to do this you need to try this on real data.

Hmmm … only one audience q/a. sigh …

Good panel, lots of interesting ideas. Here is the future of music:

MIR at Google: Strategies for Scaling to Large Music Datasets Using Ranking and Auditory Sparse-Code Representations

Posted by Paul in events, ismir, music information retrieval, research on August 12, 2010

MIR at Google: Strategies for Scaling to Large Music Datasets Using Ranking and Auditory Sparse-Code Representations

Douglas Eck (Google) (Invited speaker) – There’s no paper associated with this talk.

Machine Listening / Audio analysis – Dick Lyon and Samy Bengio

Main strength:

- Scalable algorithms

- When they do work, they use large sets (like all audio on Youtube, or all audio on the web)

- Sparse High dimensional Representations

- 15 numbers to describe a track

- Auditory / Cochlear Modeling

- Autotagging at YouTube

- Retrieval, annotation, ranking, recommendation

Collaboration Opportunities

- Faculty research awards

- Google visiting faculty program

- Student internships

- Google summer of code

- Research Infrastructure

The Future of MIR is already here

- Next generation of listeners are using Youtube – because of the on-demand nature

- Youtube – 2 billion views a day

- Content ID scans over 100 years of video every day

The Bar is already set very high ..

- Current online recommendation is pretty good

- Doug wants to close the loop between music making and music listening

What would you like Google to give back to MIR?

A Roadmap Towards Versatile MIR

Posted by Paul in events, ismir, music information retrieval, research on August 12, 2010

A Roadmap Towards Versatile MIR

Emmanuel Vincent, Stanislaw A. Raczyński, Nobutaka Ono and Shigeki Sagayama

ABSTRACT – Most MIR systems are specifically designed for one application and one cultural context and suffer from the semantic gap between the data and the application. Advances in the theory of Bayesian language and information processing enable the vision of a versatile, meaningful and accurate MIR system integrating all levels of information. We propose a roadmap to collectively achieve this vision.

Wants to increase versatility of MIR systems across different types of music. Current systems adopt a fixed expert viewpoint (musicologist, musician). They have limited accuracy due to general pattern recognition techniques applied to a bag of features.

Emmanuel wants to build an overarching scalable MIR system that successfully deals with the challenge of scalable unsupervised methods and refocuses MIR on symbolic methods. This is the core roadmap of VERSAMUS.

The aim of VERSAMUS is to investigate, design and validate such representations in the framework of Bayesian data analysis, which provides a rigorous way of combining separate feature models in a modular fashion. Tasks to be addressed include the design of a versatile model structure, of a library of feature models and of efficient algorithms for parameter inference and model selection. Efforts will also be dedicated towards the development of a shared modular software platform and a shared corpus of multi-feature annotated music which will be reusable by both partners in the future and eventually disseminated