Posts Tagged brian

How music recommendation works – and doesn’t work

Posted by Paul in Music, music information retrieval, recommendation, The Echo Nest on December 12, 2012

Brian just posted ‘How Music Recommendation works – and doesn’t work’ over at his Variogr.am blog. It is a must-read for anyone interested in the state of the art in music recommendation. Here’s an excerpt:

Try any hot new artist in Pandora and you’ll get the dreaded:

Pandora not knowing about YUS

This is Pandora showing its lack of scale. They won’t have any information for YUS for some time and may never unless the artist sells well. This is bad news and should make you angry: why would you let a third party act as a filter on top of your very personal experiences with music? Why would you ever use something that “hid” things from you?

Grab a coffee, sit back and read Brian’s post. Highly recommended.

What is that song?

Posted by Paul in Music, The Echo Nest on April 24, 2010

One of the biggest problems faced by music application developers is song identification – that is – given an mp3 file, how can you accurately find the name of the song, album and artist? There are some hints in the mp3 file – the file name and the ID3 tags contain metadata about the track – but anyone who has worked with this metadata knows that this data is notoriously hard to deal with. The metadata is often missing, inconsistently formatted or just plain wrong. The result of this difficulty is that music application developers spend an inordinate amount of time just dealing with song identification.
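To give a feel for why this metadata is so painful, here is a minimal sketch of the kind of cleanup developers end up writing before they can even compare two tag strings. The function name `normalize_tag` and the specific rules (accent folding, stripping leading track numbers, dropping bracketed qualifiers) are assumptions chosen for illustration, not anything from an Echo Nest library, and real collections need many more special cases.

```python
import re
import unicodedata


def normalize_tag(value):
    """Reduce a free-form artist or title string to a rough comparison key.

    Hypothetical helper for illustration; the rules below are assumptions.
    """
    # Fold accents and case so "Beyoncé" and "BEYONCE" compare equal.
    text = unicodedata.normalize("NFKD", value)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = text.lower()
    # Drop a leading track number such as "03 - " or "3. ".
    text = re.sub(r"^\s*\d{1,3}\s*[-._)]\s*", "", text)
    # Drop bracketed qualifiers such as "(Remastered)" or "[Live]".
    text = re.sub(r"[\(\[][^\)\]]*[\)\]]", " ", text)
    # Collapse everything that is not a letter or digit into single spaces.
    text = re.sub(r"[^a-z0-9]+", " ", text)
    return text.strip()


if __name__ == "__main__":
    for raw in ["03 - Beyoncé – Halo (Remastered)", "BEYONCE - HALO", "99 Problems"]:
        print(repr(raw), "->", repr(normalize_tag(raw)))
```

Even with rules like these, a missing or simply wrong tag cannot be repaired by string cleanup, which is the gap that identifying a song from its audio is meant to close.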

Here at the Echo Nest we want to make it easy for developers to create music applications so we really want to solve the music metadata problem once and for all. That’s why we’ve created music fingerprinting technology. Today, we are starting to release it to the world.

The Echo Nest music fingerprinter takes a bit of music such as an MP3 and identifies the song based solely on the musical attributes of the song. No matter how messy the metadata is, the fingerprinter can identify the song since it relies on the music to do the identification. On his blog, Echo Nest co-founder Brian Whitman dives into the technical details of the Echo Nest Musical Fingerprinter.

This is not the first audio fingerprinter in the world, but we think our fingerprinter is distinctive in several important ways:

- Very fast – under a second to ID a track

- Very accurate – uses Echo Nest music analysis technology at the core. (We hope to publish some data on ENMFP accuracy real soon.)

- Open Data – all of the mapping of fingerprints to songs is open data. Anyone can get the data

- Open server – all of the server code is open – you can host your own FP server if you wish

We want to make sure that anyone who takes advantage of the EN Fingerprinter participates fully in the ENMFP ecosystem – and so it is licensed so that anyone who uses the fingerprinter technology will share their FP/song mapping data with everyone. No walled gardens – if you benefit from the ENMFP you are also helping others that are using the ENMFP.

It is still early days with the fingerprinter – we are doing a soft release. If you want to experiment with the ENMFP and you are at the Amsterdam music hackday this weekend send an email to enmfp@echonest.com with your intended use case. We will get back to you ASAP with a link to libraries for Mac, Windows and Linux.

How much is a song play worth?

Posted by Paul in Music, research, The Echo Nest on March 1, 2010

Over the last 15 years or so, music listening has moved online. Now instead of putting a record on the turntable or a CD in the player, we fire up a music application like iTunes, Pandora or Spotify to listen to music. One interesting side-effect of this transition to online music is that there is now a lot of data about music listening behavior. Sites like Last.fm can keep track of every song that you listen to and offer you all sorts of statistics about your play history. Applications like iTunes can phone home your detailed play history. Listeners leave footprints on P2P networks, in search logs and every time they hit the play button on hundreds of music sites. People are blogging, tweeting and IMing about the concerts they attend, and the songs that they love (and hate). Every day, gigabytes of data about our listening habits are generated on the web.

With this new data come the entrepreneurs who sample the data, churn through it and offer it to those who are trying to figure out how best to market new music. Companies like Big Champagne, Bandmetrics, Musicmetric, Next Big Sound and The Echo Nest among others offer windows into this vast set of music data. However, there’s still a gap in our understanding of how to interpret this data. Yes, we have vast amounts of data about music listening on the web, but that doesn’t mean we know how to interpret this data or how to tie it to the music marketplace. How much is a track play on a computer in London related to a sale of that track in a traditional record store in Iowa? How do searches on a P2P network for a new album relate to its chart position? Is a track illegally made available for free on a music blog hurting or helping music sales? How much does a Twitter mention of my song matter? There are many unanswered questions about how online music activity correlates with the more traditional ways of measuring artist success such as music sales and chart position. These are important questions to ask, yet they have been impossible to answer because the people who have the new data (data from the online music world) generally don’t talk to the people who own the old data and vice versa.

We think that understanding this relationship is key and so we are working to answer these questions via a research consortium between The Echo Nest, Yahoo! Research and UMG unit Island Def Jam. In this consortium, three key elements are being brought together. Island Def Jam is contributing deep and detailed sales data for its music properties, sales data that is not usually released to the public; Yahoo! Research brings detailed search data (with millions and millions of queries for music) along with deep expertise in analyzing and understanding what search can predict; and The Echo Nest brings our understanding of Internet music activity such as playcount data, friend and fan counts, blog mentions, reviews, mp3 posts and P2P activity, as well as second-generation metrics such as sentiment analysis, audio feature analysis and listener demographics. With the traditional sales data combined with the online music activity and search data, the consortium hopes to develop a predictive model for music by discovering correlations between Internet music activity and market reaction. With this model, we would be able to quantify the relative importance of a good review on a popular music website in terms of its predicted effect on sales or popularity. We would be able to pinpoint and rank the influential areas and platforms on the music web where artists should spend more of their time and energy to reach a bigger fanbase. Combining anonymously observable metrics with internal sales and trend data will give keen insight into the true effects of the Internet music world.
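As a rough illustration of what "discovering correlations" between online activity and sales could look like at its very simplest, here is a sketch that correlates weekly play counts with sales from later weeks. This is not the consortium's model. The function names and the weekly numbers are invented for illustration, and a real predictive model would involve far more than a single Pearson coefficient.

```python
from math import sqrt


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two sequences of the same length, at least 2 points")
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)


def lagged_correlation(activity, sales, lag):
    """Correlate activity in week t with sales in week t + lag (lag >= 0)."""
    if lag < 0:
        raise ValueError("lag must be non-negative")
    if lag == 0:
        return pearson(activity, sales)
    return pearson(activity[:-lag], sales[lag:])


if __name__ == "__main__":
    # Toy numbers, invented for illustration only: sales echo plays a week later.
    weekly_plays = [1, 3, 2, 5, 4, 6]
    weekly_sales = [0, 1, 3, 2, 5, 4]
    for lag in range(3):
        print(lag, round(lagged_correlation(weekly_plays, weekly_sales, lag), 3))
```

In this toy series the correlation is strongest at a lag of one week, which is the sort of signal that would suggest plays lead sales. Correlation alone says nothing about cause, which is part of why the questions above are hard.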

There are some big brains working on building this model: Echo Nest co-founder Brian Whitman (He’s a Doctor!) and the team from Yahoo! Research that authored the paper “What Can Search Predict”, which looks closely at how to use query volume to forecast opening box-office revenue for feature films. The Yahoo! Research team includes a stellar lineup: Yahoo! Principal Research Scientist Duncan Watts, whose research on the early-rater effect is a must-read for anyone interested in recommendation and discovery; Yahoo! Principal Research Scientist David Pennock, who focuses on algorithmic economics (be sure to read Greg Linden’s take on Seung-Taek Park and David’s paper Applying Collaborative Filtering Techniques to Movie Search for Better Ranking and Browsing); Jake Hoffman, expert in machine learning and data-driven modeling of complex systems; Research Scientist Sharad Goel (see his interesting paper on Anatomy of the Long Tail: Ordinary People with Extraordinary Tastes); and Research Scientist Sébastien Lahaie, expert in marketplace design and reputation systems (I’ve just added his paper Applying Learning Algorithms to Preference Elicitation to my reading list). This is a top-notch team.

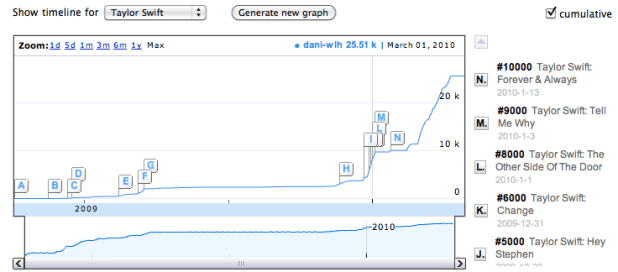

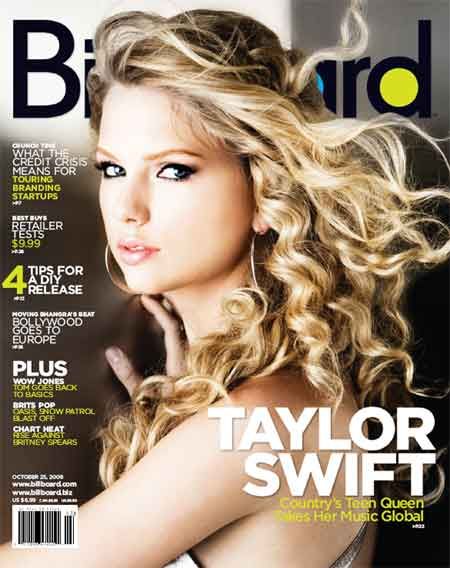

I look forward to the day when we have a predictive model for music that will help us understand how this:

affects this:

.

A Singular Christmas

Posted by Paul in fun, Music, The Echo Nest on November 30, 2009

Oh lookie – Brian has re-posted his Singular Christmas.

The SQL Join is destroying music

Posted by Paul in events, Music, The Echo Nest on October 28, 2009

Brian Whitman, one of the founders of the Echo Nest, gave a provocative talk last week at Music and Bits. Some excerpts:

Useless MIR Problems:

- Genre Identification – “Countless PhDs on this useless task. Trying to teach a computer a marketing construct”

Hard but interesting MIR Problems:

- Finding the saddest song in the world

- Predicting Pitchfork and All Music Guide ratings

- Predicting the gender of a listener based upon their music taste

On Recommendation:

- “The best music experience is still very manual… I am still reading about music, not using a recommender.”

- “If we only used collaborative filtering to discover music, the popular artists would eat the unknowns alive.”

- “The SQL Join is destroying music”
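One way to read the last two quotes together: the simplest collaborative filter is literally a self-join on a table of plays ("listeners of X also played Y"), and that join rewards whatever everybody already plays. The sketch below is my own toy illustration of that reading, not code from Brian's talk. The table layout, the function name `also_listened` and the listener and artist names are all made up.

```python
import sqlite3


def also_listened(plays, seed_artist):
    """Rank other artists by how many listeners they share with seed_artist.

    plays is a list of (listener, artist) pairs. Hypothetical schema.
    """
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE plays (listener TEXT, artist TEXT)")
    conn.executemany("INSERT INTO plays VALUES (?, ?)", plays)
    # The self-join: pair every play of the seed artist with the same
    # listener's plays of other artists, then count shared listeners.
    rows = conn.execute(
        """
        SELECT b.artist, COUNT(DISTINCT b.listener) AS shared
        FROM plays AS a
        JOIN plays AS b ON a.listener = b.listener
        WHERE a.artist = ? AND b.artist <> ?
        GROUP BY b.artist
        ORDER BY shared DESC, b.artist ASC
        """,
        (seed_artist, seed_artist),
    ).fetchall()
    conn.close()
    return rows


if __name__ == "__main__":
    # Made-up data: everyone plays the popular band, few play the niche acts.
    toy_plays = [
        ("u1", "Popular Band"), ("u2", "Popular Band"),
        ("u3", "Popular Band"), ("u4", "Popular Band"),
        ("u1", "Niche A"), ("u2", "Niche A"), ("u3", "Niche A"),
        ("u1", "Niche B"),
    ]
    print(also_listened(toy_plays, "Niche A"))
```

Seeded with a niche artist, the raw counts still put the popular band at the top and the other niche act at the bottom. Real systems normalize for popularity, but the pull toward the head of the catalog is built into the join.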

Brian’s notes on the talk are on his blog. The slides are online here. Highly recommended.

Music and Bits

Posted by Paul in events, fun, Music, The Echo Nest on October 13, 2009

If you are heading to Amsterdam next week for the Amsterdam Dance Event, you may want to check out the Music & Bits pre-conference. This year Music & Bits is hosting two tracks: a traditional conference-style track with thought leaders from the Music 2.0 space, and a mini-Music Hackday where developers can gather to hack on music APIs to build new and interesting apps.

The Echo Nest will be represented by founder and CTO Brian Whitman. He’ll be giving a keynote talk about the next generation of music search and discovery platforms and how these platforms can recommend music or organize your catalog automatically by listening to it, predict which countries to launch your band’s next tour in, or even help you build synthesizers that play from the entire world of music. It looks to be a really cool talk during a really interesting conference. Wish I were there.

This video from last year gives a taste of what Music & Bits is like: