Posts Tagged The Echo Nest

Name That Artist

Posted by Paul in fun, Music, The Echo Nest, web services on February 14, 2010

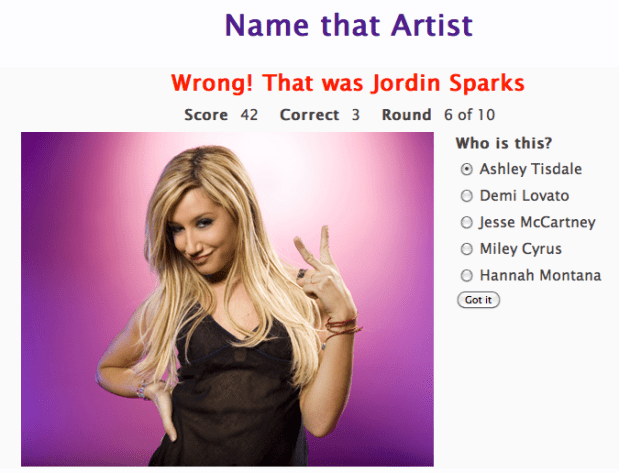

While watching the Olympics over the weekend, I wrote a little web-app game that uses the new Echo Nest get_images call. The game is dead simple: you have to identify the artists in a series of images. You get to choose a level of difficulty and the style of your favorite music, and if you get a high score, your name and score will appear on the Top Scores board. Instead of using a simple score of percent correct, the score gets adjusted by a number of factors. There’s a time bonus, so if you answer fast you get more points; there’s a difficulty bonus, so if you identify unfamiliar artists you get more points; and if you choose the ‘Hard’ level of difficulty you also get more points for every correct answer. The absolute highest score possible is 600, but any score above 200 is rather awesome.

The app is extremely ugly (I’m a horrible designer), but it is fun – and it is interesting to see how similar artists from a single genre appear. Give it a go, post some high scores and let me know how you like it.

Jason’s cool screensaver

Posted by Paul in code, fun, Music, The Echo Nest on February 13, 2010

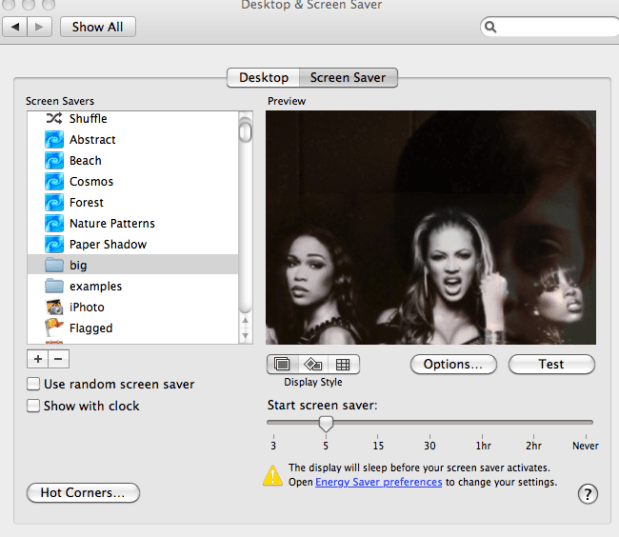

I noticed some really neat images flowing past Jason’s computer over the last week. Whenever Jason was away from his desk, our section of the Echo Nest office would be treated to a very interesting slideshow – mostly of musicians (with an occasional NSFW image (but hey, everything is SFW here at The Echo Nest)). Since Jason is a photographer I first assumed that these were pictures that he took of friends or shows he attended – but Jason is a classical musician and the images flowing by were definitely not of classical musicians – so I was puzzled enough to ask Jason about it. Turns out, Jason did something really cool. He wrote a Python program that gets the top hotttt artists from the Echo Nest, and then collects images for all of those artists and their similars – yielding a huge collection of artist images. He then filters them to include only high res images (thumbnails don’t look great when blown up to screen saver size). He then points his Mac OS Slideshow screensaver at the image folder and voilà – a nifty music-oriented screensaver.

Jason has added his code to the pyechonest examples. So if you are interested in having a nifty screen saver, grab Pyechonest, get an Echo Nest API key if you don’t already have one and run the get_images example. Depending upon how many images you want, it may take a few hours to run. To get 100K images, plan to run it overnight. Once you’ve done that, point your Pictures screensaver at the image folder and you’re done.

The 6th Beatle

Posted by Paul in Music, recommendation, The Echo Nest on February 12, 2010

When I test-drive a new music recommender I usually start by getting recommendations based upon ‘The Beatles’ (If you like the Beatles, you may like XX). Most recommenders give results that include artists like John Lennon, Paul McCartney, George Harrison, The Who, The Rolling Stones, Queen, Pink Floyd, Bob Dylan, Wings, The Kinks and Beach Boys. These recommendations are reasonable, but they probably won’t help you find any new music. The problem is that these recommenders rely on the wisdom of the crowds and so an extremely popular artist like The Beatles tends to get paired up with other popular artists – the result being that the recommender doesn’t tell you anything that you don’t already know. If you are trying to use a recommender to discover music that sounds like The Beatles, these recommenders won’t really help you – Queen may be an OK recommendation, but chances are good that you already know about them (and The Rolling Stones and Bob Dylan, etc.) so you are not finding any new music.

At The Echo Nest we don’t base our artist recommendations solely on the wisdom of crowds. Instead, we draw upon a number of different sources (including a broad and deep crawl of the music web). This helps us avoid the popularity biases that lead to ineffectual recommendations. For example, looking at some of the Echo Nest recommendations based upon the Beatles we find some artists that you may not see with a wisdom of the crowds recommender – artists that actually sound like the Beatles – not just artists that happened to be popular at the same time as the Beatles. Echo Nest recommendations include artists such as The Beau Brummels, The Dukes of Stratosphear, Flamin’ Groovies and an artist named Emitt Rhodes. I had never seen Emitt Rhodes occur in any recommendation based on the Beatles, so I was a bit skeptical, but I took a listen and this is what I found:

Update: Don Tillman points to this Beatle-esque track:

Emitt could be the sixth Beatle. I think it’s a pretty cool recommendation.

Introducing Project Rosetta Stone

Posted by Paul in code, Music, The Echo Nest, web services on February 10, 2010

Here at The Echo Nest we want to make the world easier for music app developers. We want to solve as many of the problems that developers face when writing music apps as we can, so that the developers can focus on building cool stuff instead of worrying about the basic plumbing. One of the problems faced by music application developers is the issue of ID translation. You may have a collection of music that is in one ID space (Musicbrainz for instance) but you want to use a music service (such as the Echo Nest’s Artist Similarity API) that uses a completely different ID space. Before you can use the service you have to translate your Musicbrainz IDs into Echo Nest IDs, make the similarity call and then, since the artist similarity call returns Echo Nest IDs, map the IDs back into the Musicbrainz space. The mapping from one ID space to another takes time (perhaps even requiring another API method call to ‘search_artists’) and is a potential source of error: mapping artist names can be tricky. For example, there are artists like Duran Duran Duran, Various Artists (the electronic musician), DJ Donna Summer, and Nirvana (the 60’s UK band) that will trip up even sophisticated name resolvers.

We hope to eliminate some of the trouble with mapping IDs with Project Rosetta Stone. Project Rosetta Stone is an update to the Echo Nest APIs to support non-Echo-Nest identifiers. The goal for Project Rosetta Stone is to allow a developer to use any music id from any music API with the Echo Nest web services. For instance, if you have a Musicbrainz ID for weezer, you can call any of the Echo Nest artist methods with the Musicbrainz ID and get results. Additionally, methods that return IDs can be told to return them in different ID spaces. So, for example, you can call artist.get_similar and specify that you want the similar artist results to include Musicbrainz artist IDs.

Dealing with the many different music ID formats

One of the issues we have to deal with when trying to support many ID spaces is that the IDs come in many shapes and sizes. Some IDs, like Echo Nest and Musicbrainz, are self-identifying URLs (self-identifying means that you can tell what the ID space is and the type of the item being identified, whether it is an artist, track, release, playlist etc.), and some IDs (like Spotify) use self-identifying URNs. However, many ID spaces are non-self-identifying – for instance a Napster Artist ID is just a simple integer. Note also that many of the ID spaces have multiple renderings of IDs. Echo Nest has short form IDs (AR7BGWD1187FB59CCB and TR123412876434), Spotify has URL-form IDs (http://open.spotify.com/artist/6S58b0fr8TkWrEHOH4tRVu) and Musicbrainz IDs are often represented with just the UUID fragment (bd0303a-f026-416f-a2d2-1d6ad65ffd68). Note that the use of Spotify and Napster in these examples is just to demonstrate the wide range of ID formats.

We want to make all of the ID types self-identifying. IDs that are already self-identifying can be used without change. However, non-self-identifying ID types need to be transformed into a URN-style syntax of the form: vendor:type:vendor-specific-id. So, for example, a Napster track ID would be of the form: ‘napster:track:12345678’
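To make the vendor:type:vendor-specific-id form concrete, here is a minimal Python sketch that builds and splits such IDs. The names `MusicId`, `to_urn` and `parse_urn` are made up for illustration and are not part of any Echo Nest client.

```python
from typing import NamedTuple


class MusicId(NamedTuple):
    """Hypothetical container for the three parts of a URN-style ID."""
    vendor: str   # ID space, e.g. "napster" or "musicbrainz"
    kind: str     # item type, e.g. "artist" or "track"
    value: str    # the vendor-specific identifier


def to_urn(vendor: str, kind: str, value: str) -> str:
    """Build a vendor:type:vendor-specific-id string."""
    return f"{vendor}:{kind}:{value}"


def parse_urn(urn: str) -> MusicId:
    """Split a URN-style ID into vendor, type and vendor-specific id.

    Splitting at most twice keeps any colons inside the
    vendor-specific part intact.
    """
    parts = urn.split(":", 2)
    if len(parts) != 3 or not all(parts):
        raise ValueError(f"not a vendor:type:id string: {urn!r}")
    return MusicId(*parts)


print(to_urn("napster", "track", "12345678"))
print(parse_urn("musicbrainz:artist:6fe07aa5-fec0-4eca-a456-f29bff451b04"))
```

Because the vendor and type travel with the ID, a bare integer like a Napster ID no longer needs out-of-band context to be interpreted.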

What do we support now?

In this first release of Rosetta Stone we are supporting URN-style Musicbrainz IDs (probably one of the most requested enhancements to the Echo Nest APIs has been to include support for Musicbrainz). This means that any Echo Nest API method that accepts or returns an Echo Nest ID can also take a Musicbrainz ID. For example, to get recent audio found on the web for Weezer, you could make the call with the URN form of the Musicbrainz ID for Weezer:

http://developer.echonest.com/api/get_audio?api_key=5ZAOMB3BUR8QUN4PE&id=musicbrainz:artist:6fe07aa5-fec0-4eca-a456-f29bff451b04&rows=2&version=3
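If you would rather assemble these calls in code than by hand, a small helper along these lines works. This is only a sketch: `build_call` is a hypothetical name, `YOUR_API_KEY` is a placeholder, and the code only builds the URL string for the version 3 endpoints shown in this post (it does not make the request).

```python
from urllib.parse import urlencode

# Base URL taken from the example calls in this post
BASE = "http://developer.echonest.com/api"


def build_call(method, api_key, **params):
    """Return the URL for an API method with the given query parameters."""
    query = {"api_key": api_key, **params}
    # safe=":" leaves the colons in URN-style IDs unescaped
    return f"{BASE}/{method}?{urlencode(query, safe=':')}"


weezer = "musicbrainz:artist:6fe07aa5-fec0-4eca-a456-f29bff451b04"

# Recent audio for Weezer, addressed by Musicbrainz ID
print(build_call("get_audio", "YOUR_API_KEY", id=weezer, rows=2, version=3))

# Similar artists, asking for Musicbrainz IDs in the results
print(build_call("get_similar", "YOUR_API_KEY", id=weezer, rows=10,
                 version=3, bucket="id:musicbrainz"))
```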

For a call such as artist.get_similar, if we are using Musicbrainz IDs for input, it is likely that you’ll want your results in the form of Musicbrainz ids. To do this, just add the bucket=id:musicbrainz parameter to indicate that you want Musicbrainz IDs included in the results:

http://developer.echonest.com/api/get_similar?api_key=5ZAOMB3BUR8QUN4PE&id=musicbrainz:artist:6fe07aa5-fec0-4eca-a456-f29bff451b04&rows=10&version=3&bucket=id:musicbrainz

<similar>

<artist>

<name>Death Cab for Cutie</name>

<id>music://id.echonest.com/~/AR/ARSPUJF1187B9A14B8</id>

<id type="musicbrainz">musicbrainz:artist:0039c7ae-e1a7-4a7d-9b49-0cbc716821a6</id>

<rank>1</rank>

</artist>

<!-- more omitted -->

</similar>
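Pulling the Musicbrainz IDs back out of a response like this takes only a few lines with Python's standard XML parser. The sketch below assumes the response fragment has exactly the shape shown above; `musicbrainz_ids` is a made-up helper name.

```python
import xml.etree.ElementTree as ET

# The response fragment from this post, trimmed to one artist
SAMPLE = """<similar>
  <artist>
    <name>Death Cab for Cutie</name>
    <id>music://id.echonest.com/~/AR/ARSPUJF1187B9A14B8</id>
    <id type="musicbrainz">musicbrainz:artist:0039c7ae-e1a7-4a7d-9b49-0cbc716821a6</id>
    <rank>1</rank>
  </artist>
</similar>"""


def musicbrainz_ids(xml_text):
    """Return (artist name, Musicbrainz ID) pairs found in the response."""
    root = ET.fromstring(xml_text)
    pairs = []
    for artist in root.iter("artist"):
        name = artist.findtext("name")
        for id_node in artist.findall("id"):
            # The Echo Nest ID has no type attribute; skip it
            if id_node.get("type") == "musicbrainz":
                pairs.append((name, id_node.text))
    return pairs


print(musicbrainz_ids(SAMPLE))
```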

Limiting results to a particular ID space

Sometimes you are working within a particular ID space and you only want to include items that are in that space. To support this, Rosetta Stone adds an idlimit parameter to some of the calls. If this is set to ‘Y’ then results are constrained to be within the given ID space. This means that if you want to guarantee that only Musicbrainz artists are returned from the get_top_hottt_artists call you can do so like this:

http://developer.echonest.com/api/get_top_hottt_artists?api_key=5ZAOMB3BUR8QUN4PE&rows=20&version=3&bucket=id:musicbrainz&idlimit=Y

What’s Next?

In this initial release of Rosetta Stone we’ve built the infrastructure for fast ID mapping. We are currently supporting mapping between Echo Nest Artist IDs and Musicbrainz IDs. We will be adding support for mapping at the track level soon – and keep an eye out for the addition of commercial ID spaces that will allow easy mapping between Echo Nest IDs and those associated with commercial music service providers.

In the near future we’ll be rolling out Rosetta Stone support in the various clients (pyechonest and the Java client API).

As always, we love any feedback and suggestions to make writing music apps easier. So email me (paul@echonest.com) or leave a comment here.

Revisiting the click track

Posted by Paul in Music, The Echo Nest on February 8, 2010

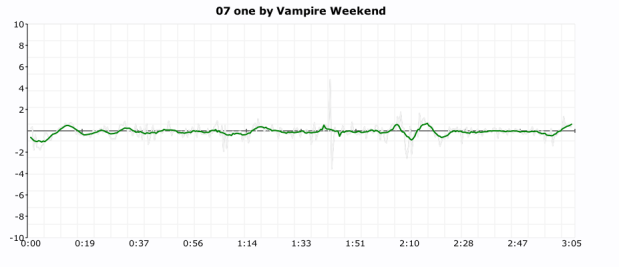

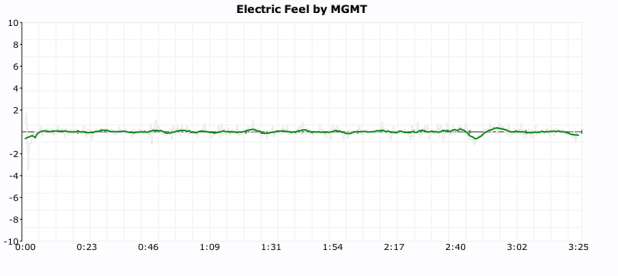

One of my more popular posts from last year was ‘In Search of the Click Track’, where I posted some plots showing the tempo deviations from the average tempo for a number of songs. From these plots it was pretty easy to see which songs had a human setting the beat and which songs had a machine setting the beat (be it a click track, drum machine or an engineer fitting the song to a tempo grid). I got lots of feedback along with many requests to generate click plots for particular drummers. It was a bit of work to generate a click plot (find the audio, upload it to the analyzer, get the results, normalize the data, generate the plot, convert it to an image and finally post it to the web) so I didn’t create too many more.

Last week Brian released the alpha version of a nifty new set of APIs that give access to the analysis data for millions of tracks. Over the weekend, I wrote a web application that takes advantage of the new APIs to make it easy to get a click plot for just about any track. Just type in the name of the artist and track and you’ll get the click plot – you don’t have to find the audio or upload it or wrestle with python or gnuplot. The web app is here:

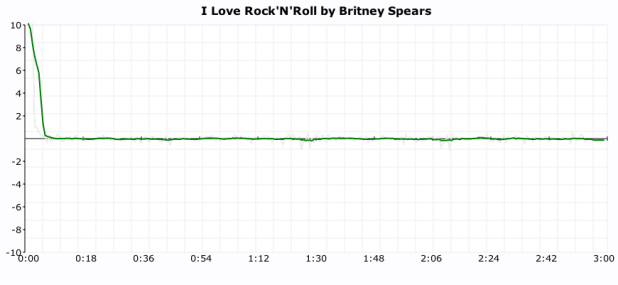

Here are some examples of the output. First up is a plot of ‘I Love Rock ’n’ Roll’ by Britney Spears. The plot shows the tempo deviations from the average song tempo over the course of the song. The plot shows that there’s virtually no deviation at all. Britney is using a machine to set the beat.

Now compare Britney’s plot to the click plot for the song ‘So Lonely’ by the Police:

Here we see lots of tempo variation. There are four main humps, each corresponding to a chorus where Police drummer Stewart Copeland accelerates the beat. Over the course of the song there is an increase in the average tempo that builds tension and excitement. In this song the tempo is maintained by a thinking, feeling human, whereas Britney is using a coldhearted, sterile machine to set the tempo for her song.

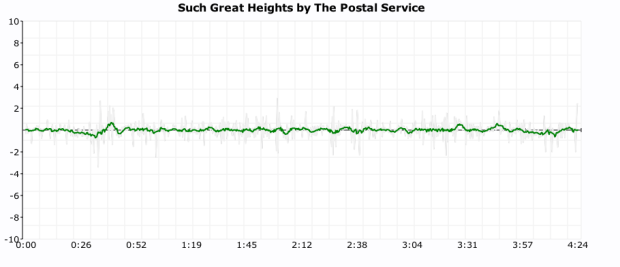

For some types of music, machine generated tempos are appropriate. Electronica, synthpop and techno benefit from an ultra-precise tempo. Some examples are Kraftwerk and The Postal Service:

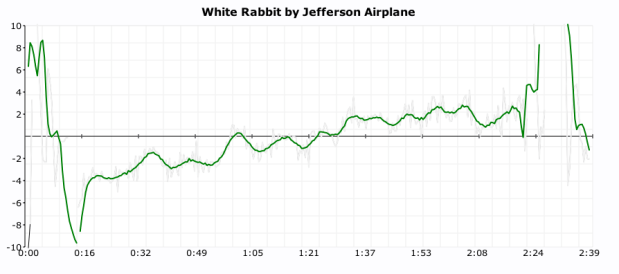

But for many songs, the tempo variations add much to the song. The gradual speed up in Jefferson Airplane’s White Rabbit:

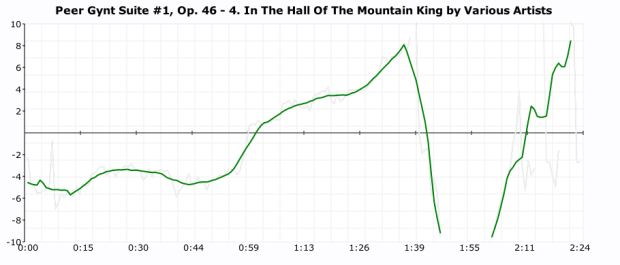

and the crescendo in ‘In the Hall of the Mountain King’:

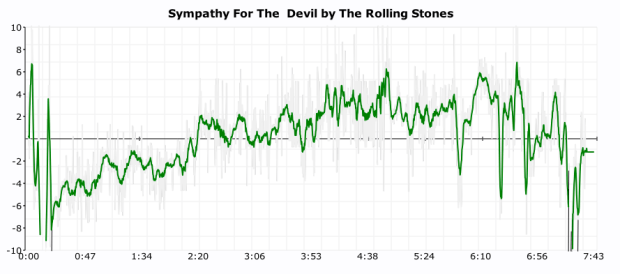

And in the Rolling Stones’ Sympathy for the Devil:

It is also fun to use the click plots to see how steady drummers are (and to see which ones use clicktracks). Some of my discoveries:

Keith Moon used a click track on ‘Won’t Get Fooled Again’:

(You can see him wearing headphones in this video)

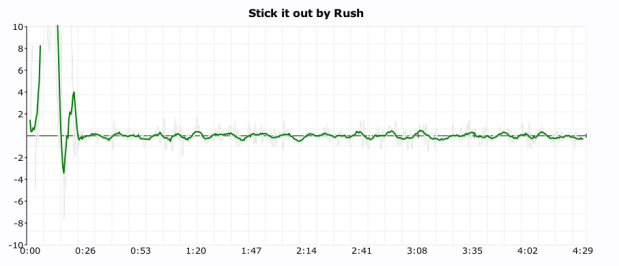

It looks like Neil Peart uses a click track on Stick it out:

Art Blakey can really lay it down without a click track (he really looks like a machine here):

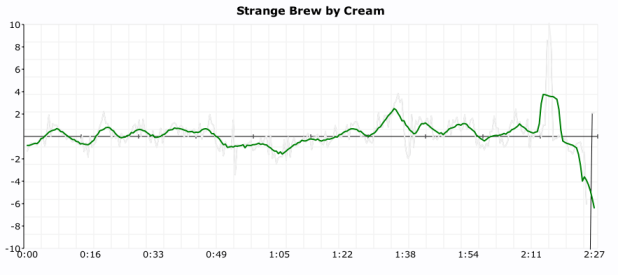

As does Ginger Baker (or does he use a click?):

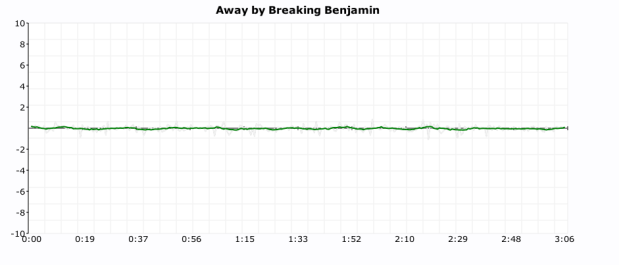

It seems that all of the nümetal bands use clicks:

Breaking Benjamin

Nickelback

As do some of the indie bands:

The Decemberists

I find it interesting to look at the various click plots. It gives me a bit more insight into the band and the drummer. However, some types of music, such as progressive rock with its frequent time signature and tempo changes, are really hard to plot – which is too bad since many of the best drummers play prog rock.

In addition to the plots, I attempted a couple of objective metrics that can be used to measure the machine-like quality of a drummer. The Machine Score is a measure of how often the beat is within a 2 BPM window of the average tempo of the song. Higher numbers indicate that the drummer is more like a machine. This metric is a bit troublesome for songs that change tempo: a song that changes tempo often may have a lower machine score than it should. The Longest Run of machine-like drumming is the count of the longest stretch of continuous beats that are within 1 BPM of the average tempo of the song. Long runs (over a couple hundred beats) tend to indicate that a machine is in charge of the beat. Both these metrics are somewhat helpful in determining whether or not the drumming is live, but I still find that the best determinant is to look at the plot. More work is needed here.
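Here is a rough Python sketch of how two metrics like these could be computed from a list of beat times. It is a reconstruction from the description above, not the code behind the click plotter, and it makes a few assumptions: per-beat tempo is taken as 60 divided by the gap between consecutive beats, the average tempo is the plain mean of those values, the "2 BPM window" is read as plus or minus 2 BPM, and the score is reported as a fraction between 0 and 1.

```python
def beat_tempos(beat_times):
    """Per-beat tempo in BPM from beat onset times given in seconds."""
    return [60.0 / (b - a) for a, b in zip(beat_times, beat_times[1:])]


def machine_score(tempos, tolerance=2.0):
    """Fraction of beats whose tempo is within `tolerance` BPM of the average."""
    average = sum(tempos) / len(tempos)
    close = sum(1 for t in tempos if abs(t - average) <= tolerance)
    return close / len(tempos)


def longest_machine_run(tempos, tolerance=1.0):
    """Length of the longest unbroken stretch of beats near the average tempo."""
    average = sum(tempos) / len(tempos)
    best = run = 0
    for t in tempos:
        # Extend the current run, or reset it when a beat drifts too far
        run = run + 1 if abs(t - average) <= tolerance else 0
        best = max(best, run)
    return best


# A drum machine: one beat every half second, i.e. a steady 120 BPM
steady = beat_tempos([i * 0.5 for i in range(9)])
print(machine_score(steady), longest_machine_run(steady))

# A toy "human" take that wanders a few BPM either side of 120
human = [120.0, 120.0, 126.0, 120.0, 120.0, 120.0, 114.0, 120.0]
print(machine_score(human), longest_machine_run(human))
```

The weakness mentioned above shows up directly in this formulation: both functions compare against a single song-wide average, so a deliberate tempo change pulls every beat away from that average even if each section is played perfectly steadily.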

The new click plotter was a fun weekend project. I got to use rgraph – an HTML5 canvas graph library (thanks to Ryan for suggesting client-side plotting), along with cherrypy, pound and, of course, Brian’s new web services. The whole thing is just 500 lines of code.

I hope you enjoy generating your own click plots. If you find some interesting ones post a link here and I’ll add them to the Gallery of Drummers.

New Echo Nest APIs demoed at the Stockholm Music Hackday

Posted by Paul in events, Music, The Echo Nest, web services on January 30, 2010

Today at the Stockholm Music Hack Day, Echo Nest co-founder Brian Whitman demoed the alpha version of a new set of Echo Nest APIs. There are 3 new public methods and hints about a fourth API method.

- search_tracks: This is IMHO the most awesomest method in the Echo Nest API. This method lets you search through the millions of tracks that the Echo Nest knows about. You can search for tracks based on artist and track title of course, but you can also search based upon how people describe the artist or track (‘funky jazz’, ‘punk cabaret’, ‘screamo’). You can constrain the returned results based upon musical attributes (range of tempo, range of loudness, the key/mode), and you can even constrain the results based upon the geo-location of the artist. Finally, you can specify how you want the search results ordered. You can sort the results by tempo, loudness, key, mode, and even lat/long. This new method lets you fashion all sorts of interesting queries like:

- Find the slowest songs by Radiohead

- Find the loudest romantic songs

- Find the northernmost rendition of a reggae track
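Each of those queries boils down to "filter, then sort". The Python sketch below shows that shape against a tiny in-memory list. It does not call search_tracks, whose parameter names are not given here; the `Track` fields, the `search` helper and all of the toy records are invented purely for illustration.

```python
from dataclasses import dataclass, field


@dataclass
class Track:
    """Made-up record with the kinds of attributes described above."""
    artist: str
    title: str
    tempo: float      # BPM
    loudness: float   # dB, higher is louder
    latitude: float   # artist location
    terms: set = field(default_factory=set)  # descriptive terms


def search(tracks, artist=None, term=None, sort_key="tempo",
           descending=False, limit=None):
    """Filter by artist and/or descriptive term, then sort by one attribute."""
    hits = [t for t in tracks
            if (artist is None or t.artist == artist)
            and (term is None or term in t.terms)]
    hits.sort(key=lambda t: getattr(t, sort_key), reverse=descending)
    return hits[:limit]


# Toy data, not real analysis values
TRACKS = [
    Track("Artist A", "Slow One", 62.0, -14.0, 51.5, {"romantic"}),
    Track("Artist A", "Fast One", 148.0, -6.0, 51.5, {"rock"}),
    Track("Artist B", "Loud Ballad", 90.0, -4.0, 42.4, {"romantic"}),
    Track("Artist C", "Island Tune", 76.0, -9.0, 18.0, {"reggae"}),
    Track("Artist D", "Northern Dub", 80.0, -8.0, 59.3, {"reggae"}),
]

# Slowest song by one artist
print(search(TRACKS, artist="Artist A", sort_key="tempo", limit=1)[0].title)
# Loudest romantic song
print(search(TRACKS, term="romantic", sort_key="loudness", descending=True, limit=1)[0].title)
# Northernmost reggae track
print(search(TRACKS, term="reggae", sort_key="latitude", descending=True, limit=1)[0].title)
```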

The index of tracks for this API is already quite large, and will continue to grow as we add more music to the Echo Nest (but note that this is an alpha version and thus it is subject to the whims of the alpha-god – even as I write this, the index used to serve up these queries is being rebuilt, so only a small fraction of our set of tracks is currently visible). And BTW if you are at the Stockholm Music Hack Day, look for Brian and ask him about the secret parameter that will give you some special search_tracks goodness!

One of the things you get back from the search_tracks method is a track ID. You can use this track ID to get the analysis for any track using the new get_analysis method. No longer do you need to upload a track to get the analysis for it. Just search for it and we are likely to have the analysis already. This search_tracks method has been the most frequently requested method by our developers, so I’m excited to see this method be released.

- get_analysis – this method will give you the full track analysis for any track, given its track ID. The method couldn’t be simpler, give it a track ID and you get back a big wad-o-json. All of the track analysis, with one call. (Note that for this alpha release, we have a separate track ID space from the main APIs, so IDs for tracks that you’ve analyzed with the released/supported APIs won’t necessarily be available with this method).

- capsule – this is an API that supports this-is-my-jam functionality. Give the API a URL to an XSPF playlist and you’ll get back some json that points you to both a flashplayer url and an mp3 url to a capsulized version of the playlist. In the capsulized version, the song transitions are aligned and beatmatched like an old style DJ would.

Brian also describes a new identify_track method that returns metadata for a track given a set of musical fingerprint hashcodes. This is a method that you use in conjunction with the new Echo Nest audio fingerprinter (woah!). If you are at the Stockholm Music Hack Day and you are interested in solving the track resolution problem, talk to Brian about getting access to the new and nifty audio fingerprinter.

These new APIs are still in alpha – so lots of caveats surround them. To quote Brian: we may pull or throttle access to alpha APIs at a different rate from the supported ones. Please be warned that these are not production ready, we will be making enhancements and restarting servers, there will be guaranteed downtime.

The new APIs hint at the direction we are going here at the Echo Nest. We want to continue to open up our huge quantities of data for developers, making as much of it available as we can to anyone who wants to build music apps. These new APIs return JSON – XML is so old fashioned. All the cool developers are using JSON as the data transport mechanism nowadays: it’s easy to generate, easy to parse and makes for a very nimble way to work with web-services. We’ll be adding JSON support to all of our released APIs soon.

I’m also really excited about the new fingerprinting technology. Here at the Echo Nest we know how hard it is to deal with artist and track resolution – and we want to solve this problem once and for all, for everybody – so we will soon be releasing an audio fingerprinting system. We want to make this system as open as we can, so we’ll make all the FP data available to anyone. No secret hash-to-ID algorithms, and no private datasets. The Fingerprinter is fast, uses state-of-the-art audio analysis and will be backed by a dataset of fingerprint hashcodes for millions of tracks. I’ll be writing more about the new fingerprinter soon.

These new APIs should give those lucky enough to be in Stockholm this weekend something fun to play with. If you are at the Stockholm Hack Day and you build something cool with these new APIs you may find yourself going home with the much coveted Echo Nest sweatsedo:

Two new sweatsedos in the office

Posted by Paul in fun, The Echo Nest on January 25, 2010

What I see everyday at The Echo Nest

Posted by Paul in fun, The Echo Nest on January 22, 2010

Rearranging the Machine

Posted by Paul in Music, remix, The Echo Nest on January 5, 2010

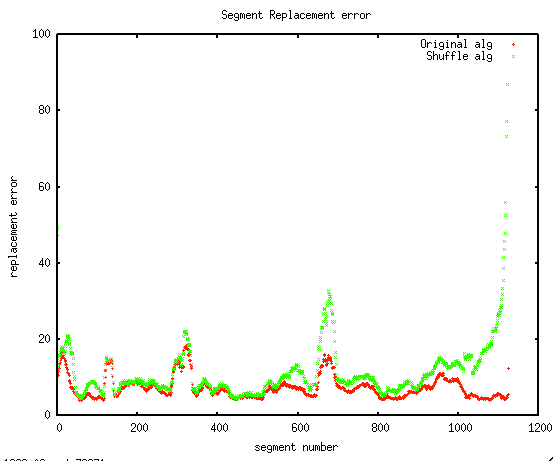

Last month I used Echo Nest remix to rearrange a Nickelback song (See From Nickelback to Bickelnack) by replacing each sound segment with another similar sounding segment. Since Nickelback is notorious for their self-similarity, a few commenters suggested that I try the remix with a different artist to see if the musicality stems from the remix algorithm or from Nickelback’s self-similarity. I also had a few tweaks to the algorithm that I wanted to try out, so I gave it a go. Instead of remixing Nickelback I remixed the best selling Christmas song of 2009, Rage Against The Machine’s ‘Killing in the Name’.

Here’s the remix using the exact same algorithm that was used to make the Bickelnack remix:

Like the Bickelnack remix – this remix is still rather musical. (Source for this remix is here: vafroma.py)

A true shuffle: One thing that is a bit unsatisfying about this algorithm is that it is not a true reshuffling of the input. Since the remix algorithm is looking for the nearest match, it is possible for a single segment to appear many times in the output while some segments may not appear at all. For instance, of the 1140 segments that make up the original RATM Killing in the Name, only 706 are used to create the final output (some segments are used as many as 9 times in the output). I wanted to make a version that was a true reshuffling, one that used every input segment exactly once in the output, so I changed the inner remix loop to only consider unused segments for insertion. The algorithm is a greedy one, so segments that occur early in the song have a bigger pool of replacement segments to draw on. The result is that as the song progresses, the similarity of replacement segments tends to drop off.
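The greedy "use every segment exactly once" idea can be sketched in a few lines of Python. This is not the vafroma2.py source: it assumes each segment is summarized by a small numeric feature vector and that "most similar" means smallest Euclidean distance, and the fallback for a final segment that has only itself left to choose is my own guess.

```python
import math


def greedy_shuffle(features):
    """Replace each segment with its nearest still-unused other segment.

    Returns the replacement order and the fitting error for each position.
    """
    unused = set(range(len(features)))
    order, errors = [], []
    for i, f in enumerate(features):
        candidates = [j for j in unused if j != i]
        if not candidates:
            # Only the segment itself is left (can happen at the very end)
            candidates = list(unused)
        # Ties are broken by index so the result is deterministic
        best = min(candidates, key=lambda j: (math.dist(f, features[j]), j))
        unused.remove(best)
        order.append(best)
        errors.append(math.dist(f, features[best]))
    return order, errors


# Toy two-dimensional "segments"; real features would be richer
segments = [(0.0, 0.0), (0.0, 1.0), (5.0, 5.0), (5.0, 6.0), (0.0, 0.4)]
order, errors = greedy_shuffle(segments)
print(order)   # every index appears exactly once
print(errors)  # fitting error at each position
```

Early positions get first pick from the whole pool, while late positions take whatever is left, which is why the fitting error tends to climb toward the end of a long song.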

I was curious to see how much extra error there was in the later segments, so I plotted the segment fitting error. In this plot, the red line is the fitting error for the original algorithm and the green line is the fitting error for the shuffling algorithm. I was happy to see that for most of the song there is very little extra error in the shuffling algorithm; things only get bad in the last 10% of the song.

You can see and hear the song decay as the pool of replacement segments diminishes. The last 30 seconds are quite chaotic. (Remix source for this version is here: vafroma2.py)

More coherence: Pulling song segments from any part of a song to build a new version yields fairly coherent audio. However, the remixed video can be rather chaotic as it seems to switch cameras every second or so. I wanted to make a version of the remix that would reduce the shifting of the camera. To do this, I gave slight preference to consecutive segments when picking the next segment. For example, if I’ve replaced segment 5 with segment 50, when it is time to replace segment 6, I’ll give segment 51 a little extra chance. The result is that the output contains longer sequences of contiguous segments – nevertheless, no segment is ever in its original spot in the song. Here’s the resulting version:

I find this version to be easier to watch. (Source is here: vafroma3.py).
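One way to give the next consecutive segment "a little extra chance" is to discount its distance before picking the minimum. The Python sketch below does that. It is not the vafroma3.py source: the feature vectors, the Euclidean distance and the multiplicative `bonus` of 0.8 are all assumptions, and for brevity it allows segments to be reused.

```python
import math


def pick_with_continuity(features, i, previous_pick, bonus=0.8):
    """Choose a replacement for segment i, favouring the successor of the previous pick."""
    def cost(j):
        d = math.dist(features[i], features[j])
        # Discount the segment that directly follows the previous replacement
        if previous_pick is not None and j == previous_pick + 1:
            return d * bonus
        return d

    # A segment is never allowed to stay in its original spot
    candidates = [j for j in range(len(features)) if j != i]
    return min(candidates, key=lambda j: (cost(j), j))


def remix_order(features, bonus=0.8):
    """Replacement index for every segment, with a preference for contiguous runs."""
    order, previous = [], None
    for i in range(len(features)):
        previous = pick_with_continuity(features, i, previous, bonus)
        order.append(previous)
    return order


# Toy one-dimensional "segments"
segments = [(0.0,), (1.0,), (0.1,), (1.25,), (0.79,)]
print(remix_order(segments))             # with the continuity bonus
print(remix_order(segments, bonus=1.0))  # plain nearest match
```

With the bonus, the second position follows segment 2 with segment 3 even though segment 4 is a slightly closer match, which is the kind of contiguous run that keeps the video from cutting so often.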

Next experiments along these lines will be to draw segments from a large number of different songs by the same artist, to see if we can rebuild a song without using any audio from the source song. I suspect that Nickelback will again prove themselves to be the masters of self-similarity:

Here’s the original, un-remixed version of RATM- Killing in the name:

From Nickelback to Bickelnack

I saw that Nickelback just received a Grammy nomination for Best Hard Rock Performance with their song ‘Burn it to the Ground’ and wanted to celebrate the event. Since Nickelback is known for their consistent sound, I thought I’d try to remix their Grammy-nominated performance to highlight their awesome self-similarity. So I wrote a little code to remix ‘Burn it to the Ground’ with itself. The algorithm I used is pretty straightforward:

- Break the song down into smallest nuggets of sound (a.k.a segments)

- For each segment, replace it with a different segment that sounds most similar
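In code, the two steps above might look something like the following Python sketch. It is not the actual remix source: it skips the audio analysis entirely, assumes each segment has already been reduced to a small numeric feature vector, and takes "sounds most similar" to mean smallest Euclidean distance.

```python
import math


def nearest_other(features):
    """For each segment, return the index of the closest *different* segment."""
    order = []
    for i, f in enumerate(features):
        others = (j for j in range(len(features)) if j != i)
        order.append(min(others, key=lambda j: math.dist(f, features[j])))
    return order


# Toy two-dimensional "segments"; real features would be richer
segments = [(0.0, 0.0), (0.0, 1.0), (5.0, 5.0), (5.0, 6.0), (0.0, 0.4)]
order = nearest_other(segments)
unused = set(range(len(segments))) - set(order)

print(order)   # replacement index for each position
print(unused)  # segments that never make it into the output
```

Nothing stops a popular segment from being picked several times, so some segments are bound to go unused.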

I applied the algorithm to the music video. Here are the results:

Considering that none of the audio is in its original order, and 38% of the original segments are never used, the remix sounds quite musical and the corresponding video is quite watchable. Compare to the original (warning, it is Nickelback):

Feel free to browse the source code, download remix and try creating your own.