Posts Tagged music hackday

Music Hack Day Hacks – HacKey

Posted by Paul in fun, Music, The Echo Nest on January 31, 2010

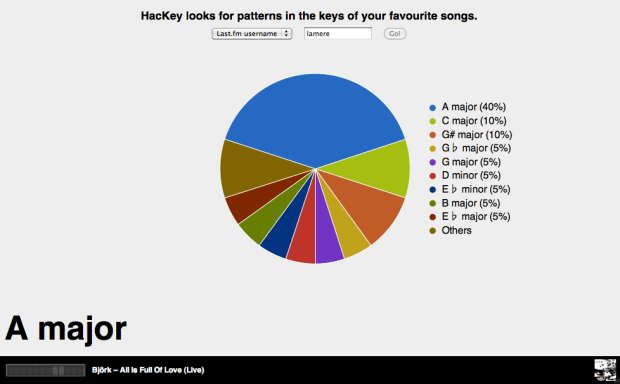

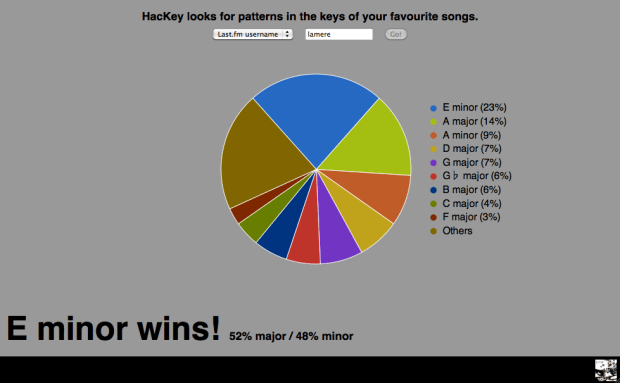

The Stockholm Music Hack Day hacks are starting to roll in. One really neat one is ‘HacKey’ by Matt Ogle from Last.fm. This hack gives you a chart that shows the keys of the most-listened-to songs in your Last.fm profile:

As you can see my favorite key is E minor. I should put this on a tee-shirt.

The hack uses Brian’s new search_tracks API for the key identification. Cool beans! Will we see flaneur in velour?
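The tallying behind a chart like this is easy to sketch. The snippet below is not Matt's code; it assumes you already have a key and mode for each of your top tracks (the sample data is made up) and simply counts them.

```python
from collections import Counter

# Hypothetical sample data: (track title, key, mode) for a listener's top tracks.
# In the real hack these values would come from a key lookup per track.
top_tracks = [
    ("Track A", "E", "minor"),
    ("Track B", "E", "minor"),
    ("Track C", "C", "major"),
    ("Track D", "A", "minor"),
    ("Track E", "E", "minor"),
    ("Track F", "C", "major"),
]


def key_histogram(tracks):
    """Count how many tracks fall in each (key, mode) pair."""
    return Counter(f"{key} {mode}" for _, key, mode in tracks)


def print_chart(histogram):
    """Print a text bar chart, most common key first."""
    for name, count in histogram.most_common():
        print(f"{name:<10} {'#' * count} ({count})")


histogram = key_histogram(top_tracks)
print_chart(histogram)
```

With real listening data, the first row of the chart is your favorite key.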

I don’t know how well Matt’s hack will scale, so I won’t put a link to it here on the blog until after he’s done demoing it at the hack day. Update: Matt’s demo is done, so here’s the link: http://users.last.fm/~matt/hackey/

New Echo Nest APIs demoed at the Stockholm Music Hackday

Posted by Paul in events, Music, The Echo Nest, web services on January 30, 2010

Today at the Stockholm Music Hack Day, Echo Nest co-founder Brian Whitman demoed the alpha version of a new set of Echo Nest APIs. There are 3 new public methods and hints about a fourth API method.

- search_tracks: This is IMHO the most awesomest method in the Echo Nest API. This method lets you search through the millions of tracks that the Echo Nest knows about. You can search for tracks based on artist and track title of course, but you can also search based upon how people describe the artist or track (‘funky jazz’, ‘punk cabaret’, ‘screamo’). You can constrain the returned results based upon musical attributes (range of tempo, range of loudness, the key/mode), and you can even constrain the results based upon the geo-location of the artist. Finally, you can specify how you want the search results ordered. You can sort the results by tempo, loudness, key, mode, and even lat/long. This new method lets you fashion all sorts of interesting queries like:

- Find the slowest songs by Radiohead

- Find the loudest romantic songs

- Find the northernmost rendition of a reggae track

The index of tracks for this API is already quite large, and will continue to grow as we add more music to the Echo Nest. (But note that this is an alpha version and thus it is subject to the whims of the alpha-god – even as I write this, the index used to serve up these queries is being rebuilt, so only a small fraction of our set of tracks is currently visible.) And BTW if you are at the Stockholm Music Hack Day, look for Brian and ask him about the secret parameter that will give you some special search_tracks goodness!

One of the things you get back from the search_tracks method is a track ID. You can use this track ID to get the analysis for any track using the new get_analysis method. No longer do you need to upload a track to get the analysis for it. Just search for it and we are likely to have the analysis already. This search_tracks method has been the most frequently requested method by our developers, so I’m excited to see this method be released.
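To make the example queries above concrete, here is a rough sketch of how such a search request might be assembled. The base URL and every parameter name (`query`, `artist`, `min_tempo`, `max_tempo`, `sort`, `results`) are placeholders invented for illustration, not the actual alpha API; only the idea of combining a description, attribute constraints and a sort order comes from the description above.

```python
from urllib.parse import urlencode

# ASSUMPTION: the base URL and every parameter name below are placeholders
# invented for illustration; check the alpha API docs for the real ones.
BASE_URL = "http://api.example.com/alpha/search_tracks"


def build_search_url(api_key, query=None, artist=None, min_tempo=None,
                     max_tempo=None, sort=None, results=10):
    """Build a search URL, leaving out any constraint that was not given."""
    params = {
        "api_key": api_key,
        "query": query,
        "artist": artist,
        "min_tempo": min_tempo,
        "max_tempo": max_tempo,
        "sort": sort,
        "results": results,
    }
    # Drop unset constraints so the query string stays minimal.
    params = {name: value for name, value in params.items() if value is not None}
    return f"{BASE_URL}?{urlencode(params)}"


# "Find the slowest songs by Radiohead": filter by artist, sort by tempo ascending.
slowest_radiohead = build_search_url("YOUR_API_KEY", artist="Radiohead",
                                     sort="tempo-asc")
# "Find the loudest romantic songs": free-text description, sort by loudness.
loudest_romantic = build_search_url("YOUR_API_KEY", query="romantic",
                                    sort="loudness-desc")
print(slowest_radiohead)
print(loudest_romantic)
```

The sketch only builds URLs and makes no network calls, so it is safe to run while the alpha index is being rebuilt.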

- get_analysis – this method will give you the full track analysis for any track, given its track ID. The method couldn’t be simpler: give it a track ID and you get back a big wad-o-json. All of the track analysis, with one call. (Note that for this alpha release, we have a separate track ID space from the main APIs, so IDs for tracks that you’ve analyzed with the released/supported APIs won’t necessarily be available with this method.)

- capsule – this is an API that supports this-is-my-jam functionality. Give the API a URL to an XSPF playlist and you’ll get back some json that points you to both a flashplayer url and an mp3 url to a capsulized version of the playlist. In the capsulized version, the song transitions are aligned and beatmatched like an old style DJ would.
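Since the capsule method takes a URL to an XSPF playlist, you need such a playlist hosted somewhere first. XSPF is a small XML format, and a minimal one can be generated with the Python standard library. This is only a sketch: the track URLs are placeholders, and the post does not say which optional XSPF fields the capsule API actually reads.

```python
import xml.etree.ElementTree as ET

XSPF_NS = "http://xspf.org/ns/0/"


def build_xspf(tracks):
    """Return an XSPF playlist string for a list of (location, creator, title)."""
    # Serialize XSPF elements in the default namespace (no prefix).
    ET.register_namespace("", XSPF_NS)
    playlist = ET.Element(f"{{{XSPF_NS}}}playlist", {"version": "1"})
    track_list = ET.SubElement(playlist, f"{{{XSPF_NS}}}trackList")
    for location, creator, title in tracks:
        track = ET.SubElement(track_list, f"{{{XSPF_NS}}}track")
        ET.SubElement(track, f"{{{XSPF_NS}}}location").text = location
        ET.SubElement(track, f"{{{XSPF_NS}}}creator").text = creator
        ET.SubElement(track, f"{{{XSPF_NS}}}title").text = title
    return ET.tostring(playlist, encoding="unicode")


# Placeholder tracks; replace the URLs with MP3s you are allowed to use.
xspf_text = build_xspf([
    ("http://example.com/audio/first.mp3", "Artist One", "First Song"),
    ("http://example.com/audio/second.mp3", "Artist Two", "Second Song"),
])
print(xspf_text)
```

Save the output to a file, put it at a public URL, and that URL is what you would hand to the capsule method.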

Brian also describes a new identify_track method that returns metadata for a track when you give the Echo Nest a set of musical fingerprint hashcodes. This is a method that you use in conjunction with the new Echo Nest audio fingerprinter (woah!). If you are at the Stockholm Music Hack Day and you are interested in solving the track resolution problem, talk to Brian about getting access to the new and nifty audio fingerprinter.

These new APIs are still in alpha – so lots of caveats surround them. To quote Brian: we may pull or throttle access to alpha APIs at a different rate from the supported ones. Please be warned that these are not production ready, we will be making enhancements and restarting servers, there will be guaranteed downtime.

The new APIs hint at the direction we are going here at the Echo Nest. We want to continue to open up our huge quantities of data for developers, making as much of it available as we can to anyone who wants to build music apps. These new APIs return JSON – XML is so old fashioned. All the cool developers are using JSON as the data transport mechanism nowadays: it’s easy to generate, easy to parse, and makes for a very nimble way to work with web services. We’ll be adding JSON support to all of our released APIs soon.

I’m also really excited about the new fingerprinting technology. Here at the Echo Nest we know how hard it is to deal with artist and track resolution – and we want to solve this problem once and for all, for everybody – so we will soon be releasing an audio fingerprinting system. We want to make this system as open as we can, so we’ll make all the FP data available to anyone. No secret hash-to-ID algorithms, and no private datasets. The Fingerprinter is fast, uses state-of-the-art audio analysis and will be backed by a dataset of fingerprint hashcodes for millions of tracks. I’ll be writing more about the new fingerprinter soon.

These new APIs should give those lucky enough to be in Stockholm this weekend something fun to play with. If you are at the Stockholm Hack Day and you build something cool with these new APIs you may find yourself going home with the much coveted Echo Nest sweatsedo:

Searching for beauty and surprise in popular music

Posted by Paul in events, Music, The Echo Nest on November 23, 2009

During the Boston Music Hack Day, 30 or 40 music hacks were produced. One phenomenal hack was Rob Ochshorn’s Outlier FM. Rob’s goal for the weekend was to utilize technology to search for beauty and surprise in even the most overproduced popular music. He approached this problem by searching musical content for the audio that “exists outside of a song’s constructed and statistical conventions”.

With Outlier FM, Rob can deconstruct a song into musical atoms and filter away the most common elements, leaving behind the non-conformist bits of music. This yields strange, unpredictable, minimal techno-sounding music.

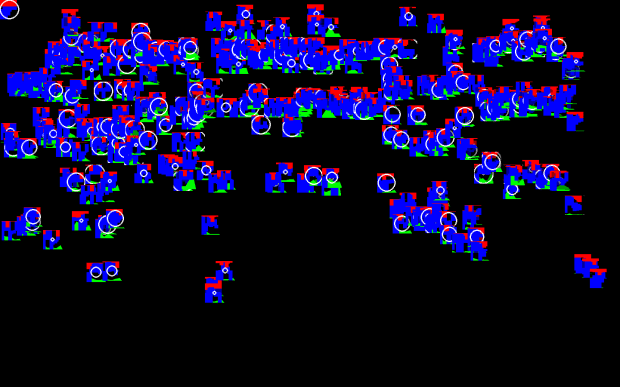

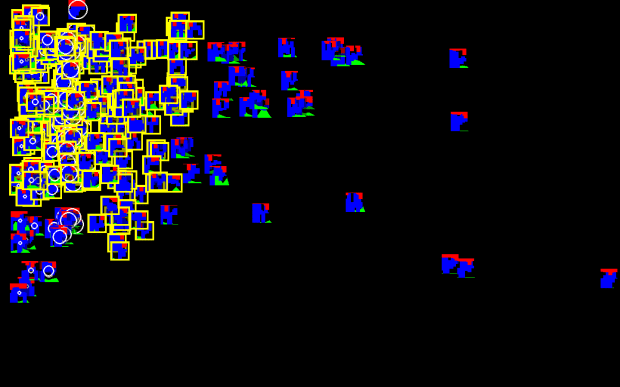

So how does it work? Well, first Outlier FM uses the Echo Nest analyzer to break a song down into the smallest segments. You can then visualize these segments using numerous filters and layout schemes to give you an idea of what the unusual audio segments are:

Next, you can filter out clusters of self-similar segments, leaving just the outliers:

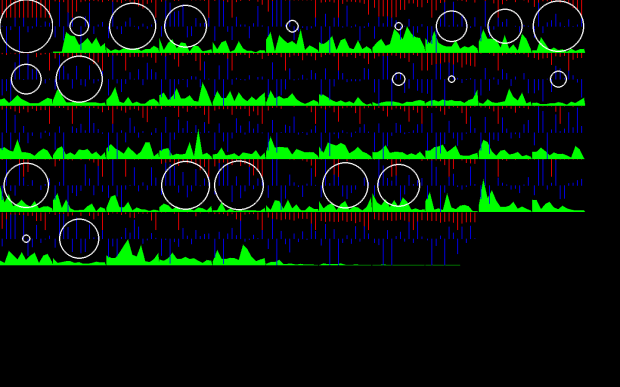

Finally, you can order, visualize and render the segments to yield interesting music:

Here’s an example of Outlier FM applied to Here Comes the Sun:

Rob’s hack was an amazing weekend effort: he combined music analysis and visualization into a tool that can be used to make interesting sounds. Outlier FM was voted the best hack of the Music Hack Day weekend. Rob chose as his prize the Sun Ultra 24 workstation with flat panel display donated by Sun Microsystems Startup Essentials. Here’s Rob receiving his prize from Sun.

Congrats to Rob for a well done hack!
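For anyone curious about the filtering step, here is a toy sketch of the idea of keeping only the unusual segments. The post does not say how Outlier FM clusters segments, so this swaps in a much simpler technique: measure how far each segment's feature vector is from the average segment and keep the ones that are unusually far away. The feature values and the `threshold` parameter are made up for illustration.

```python
import math
from statistics import mean, pstdev


def centroid(vectors):
    """Component-wise mean of equal-length feature vectors."""
    return [mean(component) for component in zip(*vectors)]


def find_outliers(segments, threshold=1.5):
    """Return indices of segments unusually far from the average segment.

    `segments` is a list of feature vectors (for example, timbre values).
    A segment counts as an outlier when its distance from the centroid is
    more than `threshold` standard deviations above the mean distance.
    """
    center = centroid(segments)
    distances = [math.dist(vector, center) for vector in segments]
    cutoff = mean(distances) + threshold * pstdev(distances)
    return [index for index, d in enumerate(distances) if d > cutoff]


# Made-up 2-D features: eight similar segments and one oddball at index 8.
segments = [
    [1.0, 1.0], [1.1, 0.9], [0.9, 1.1], [1.0, 1.2],
    [1.2, 1.0], [0.8, 1.0], [1.0, 0.8], [1.1, 1.1],
    [5.0, 5.0],
]
outliers = find_outliers(segments)
print(outliers)
```

Rendering just the segments at those indices, in some order, is the part that turns the leftovers into music.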

Getting Ready for Boston Music Hack Day

Posted by Paul in Music, The Echo Nest on November 11, 2009

Boston Music Hack Day starts in exactly 10 days. At the Hack day you’ll have about 24 hours of hacking time to build something really cool. If you are going to the Hack Day you will want to maximize your hacking time, so here are a few tips to help you get ready.

- Come with an idea or two but be flexible – one of the really neat bits about the Music Hack Day is working with someone that you’ve never met before. So have a few ideas in your back pocket, but keep your ears open on Saturday morning for people who are doing interesting things, introduce yourself and maybe you’ve made a team. At previous hack days all the best hacks seem to be team efforts. If you have an idea that you’d like some help on, or if you are just looking for someone to collaborate with, check out and/or post to the Music Hack Day Ideas Wiki.

- Prep your APIs – there are a number of APIs that you might want to use to create your hack. Before you get to the Hack Day you might want to take a look at the APIs, figure out which ones you might want to use, and get ready to use them. For instance, if you want to build music exploration and discovery tools or apps that remix music, you might be interested in the Echo Nest APIs. To get a head start, before you arrive you should register for an API Key, browse the API documentation, then check out our resources page for code examples and to find a client library in your favorite language.

- Decide if you would like to win a prize – Of course the prime motivation for hacking is the joy of building something really neat – but there will be some prizes awarded to the best hacks. Some of the prizes are general prizes – but some are category prizes (‘best iPhone / iPod hacks’) and some are company-specific prizes (best application that uses the Echo Nest APIs). If you are shooting for a specific prize, make sure you know what the conditions for the prize are. (I have my eye on the Ultra 24 workstation and display, graciously donated by my alma mater.)

To get the hack day juices flowing, check out this nifty slide deck on Music Hackday created by Henrik Berggren:

Build one of these at Boston Music Hack Day

Noah Vawter will be holding a workshop during the Boston Music Hack Day where you can learn how to build a working prototype Exertion Instrument. It is unclear at this time if a leekspin lesson is included. Details on the Exertion Instrument site.

Music and Bits

Posted by Paul in events, fun, Music, The Echo Nest on October 13, 2009

If you are heading to Amsterdam next week for the Amsterdam Dance Event, you may want to check out the Music & Bits pre-conference. This year Music & Bits is hosting two tracks: a traditional conference-style track with thought leaders from the Music 2.0 space, and a mini-Music Hackday where developers can gather to hack on music APIs to build new and interesting apps.

The Echo Nest will be represented by founder and CTO Brian Whitman. He’ll be giving a keynote talk about the next generation of music search and discovery platforms and how these platforms can recommend music or organize your catalog automatically by listening to it, predict which countries to launch your band’s next tour in, or even help you build synthesizers that play from the entire world of music. It looks to be a really cool talk during a really interesting conference. Wish I were there.

This video from last year gives a taste of what Music & Bits is like:

SoundEchoCloudNest

Posted by Paul in code, data, events, Music, The Echo Nest, web services on September 28, 2009

At the recent Berlin Music Hackday, developer Hannes Tydén developed a mashup between SoundCloud and The Echo Nest, dubbed SoundCloudEchoNest. The program uses the SoundCloud and Echo Nest APIs to automatically annotate your SoundCloud tracks with information such as when the track fades in and fades out, the key, the mode, the overall loudness, time signature and the tempo. Also each Echo Nest section is marked. Here’s an example:

This track is annotated as follows:

- echonest:start_of_fade_out=182.34

- echonest:mode=min

- echonest:loudness=-5.521

- echonest:end_of_fade_in=0.0

- echonest:time_signature=1

- echonest:tempo=96.72

- echonest:key=F#

Additionally, 9 section boundaries are annotated.
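The tags above follow a simple namespace:predicate=value pattern. As a rough illustration (this is a Python sketch of the tag format, not Hannes's Ruby code), here is how a dictionary of analysis values could be turned into such tags and parsed back. The function names are made up for this example.

```python
def to_machine_tags(analysis, namespace="echonest"):
    """Format analysis fields as namespace:predicate=value machine tags."""
    return [f"{namespace}:{field}={value}" for field, value in analysis.items()]


def parse_machine_tag(tag):
    """Split a machine tag back into (namespace, predicate, value) strings."""
    prefix, value = tag.split("=", 1)
    namespace, predicate = prefix.split(":", 1)
    return namespace, predicate, value


# Values copied from the annotated track above.
analysis = {
    "key": "F#",
    "mode": "min",
    "tempo": 96.72,
    "loudness": -5.521,
    "time_signature": 1,
    "end_of_fade_in": 0.0,
    "start_of_fade_out": 182.34,
}
tags = to_machine_tags(analysis)
for tag in tags:
    print(tag)
```

Because the format is this regular, any tool that reads the tags can recover the tempo or key without knowing anything else about the track.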

The user interface to SoundEchoCloudNest is refreshingly simple, no GUIs for Hannes:

Hannes has open sourced his code on github, so if you are a Ruby programmer and want to play around with SoundCloud and/or the Echo Nest, check out the code.

Machine tagging of content is becoming more viable. Photos on Flickr can be automatically tagged with information about the camera and exposure settings, geolocation, time of day and so on. Now with APIs like SoundCloud and the Echo Nest, I think we’ll start to see similar machine tagging of music, where basic info such as tempo, key, mode and loudness can be automatically attached to the audio. This will open the doors for all sorts of tools to help us better organize our music.