Last month I used Echo Nest remix to rearrange a Nickelback song (See From Nickelback to Bickelnack) by replacing each sound segment with another similar sounding segment. Since Nickelback is notorious for their self-similarity, a few commenters suggested that I try the remix with a different artist to see if the musicality stems from the remix algorithm or from Nickelback’s self-similarity. I also had a few tweaks to the algorithm that I wanted to try out, so I gave it go. Instead of remixing Nickelback I remixed the best selling Christmas song of 2009 Rage Against The Machine’s ‘Killing in the Name’.

Here’s the remix using the exact same algorithm that was used to make the Bickelnack remix:

Like the Bickelnack remix – this remix is still rather musical. (Source for this remix is here: vafroma.py)

A true shuffle: One thing that is a bit unsatisfying about this algorithm is that it is not a true reshuffling of the input. Since the remix algorithm is looking for the nearest match, it is possible for single segment to appear many times in the output while some segments may not appear at all. For instance, of the 1140 segments that make up the original RATM Killing in the Name, only 706 are used to create the final output (some segments are used as many as 9 times in the output). I wanted to make a version that was a true reshuffling, one that used every input segment exactly once in the output, so I changed the inner remix loop to only consider unused segments for insertion. The algorithm is a greedy one, so segments that occur early in the song have a bigger pool of replacement segments to draw on. The result is that as the song progresses, the similarity of replacement segments tends to drop off.

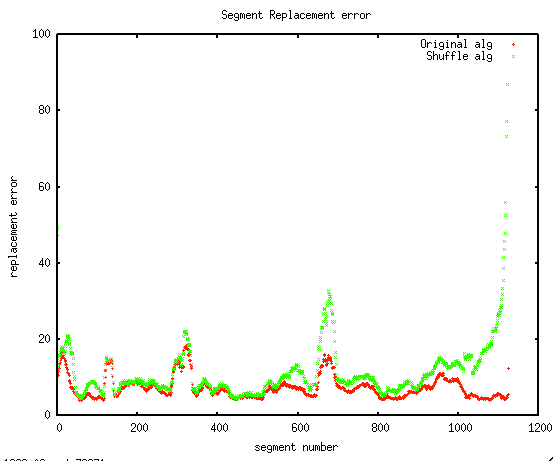

I was curious to see how much extra error there was in the later segments, so I plotted the segment fitting error. In this plot, the red line is the fitting error for the original algorithm and the green line is the fitting error for shuffling algorithm. I was happy to see that for most of the song, there is very little extra error in the shuffling algorithm, things only get bad in the last 10% of the song.

You can hear see and hear the song decay as the pool of replacement segments diminish. The last 30 seconds are quite chaotic. (Remix source for this version is here: vafroma2.py)

More coherence: Pulling song segments from any part of a song to build a new version yields fairly coherent audio, however, the remixed video can be rather chaotic as it seems to switch cameras every second or so. I wanted to make a version of the remix that would reduce the shifting of the camera. To do this, I gave slight preference to consecutive segments when picking the next segment. For example, if I’ve replaced segment 5 with segment 50, when it is time to replace segment 6, I’ll give segment 51 a little extra chance. The result is that the output contains longer sequences of contiguous segments. – nevertheless no segment is ever in its original spot in the song. Here’s the resulting version:

I find this version to be easier to watch. (Source is here: vafroma3.py).

Next experiments along these lines will be to draw segments from a large number of different songs by the same artist, to see if we can rebuild a song without using any audio from the source song. I suspect that Nickelback will again prove themselves to be the masters of self-simlarity:

Here’s the original, un-remixed version of RATM- Killing in the name:

#1 by startlingmoniker on January 21, 2010 - 3:11 pm

This is very interesting stuff– personally, I wonder how it would sound as applied to something like a speech, or even a sermon. Could this be applied across an original and a remix as well, to locate and leave only the differences between the two sources? If I had the slightest idea how to use the Echo Nest thingie myself (I’m no programmer) I’d be trying all sorts of neat stuff!