My Fame goes to 11

Posted by Paul in code, fun, java, Music, The Echo Nest, visualization on November 12, 2009

Sten has released a new version of the ultra-cool, award-winning Music Explorer FX. It has a new feature: The Fame Knob. While you are exploring for music you can set the Fame Knob up or down to control how well known or obscure the artists shown are. If you are looking for mainstream artists set the Fame Knob to high. Looking for new, undiscovered artists? Set the Fame Knob to low.

Sten has also included a number of performance enhancements so everything runs super snappy. Read more about the update on Sten’s blog and give it a whirl.

MOG makes playlists easy

MOG has posted a video demonstrating their new playlist editor for the soon-to-be-released MOG All Access.

It looks pretty nifty – lightweight, tag-able, shareable playlists. It’s nice to see MOG paying attention to playlists. With millions of songs to choose from, music discovery becomes a big problem, and playlists can help with that. Of course, playlists bring their own discovery problem: how can I discover new playlists that contain music that I like? Currently, most sites that support playlists and playlist sharing offer only limited ways for people to discover new playlists. As playlists become more ubiquitous, however, sites like MOG will need to expand their tools for helping people find new and interesting playlists. Some options for playlist discovery:

- Search for playlists by tag. Example: “Find playlists tagged ‘emo’ and ‘christmas’”

- Search for playlists by artist / track. Example: “Find playlists that have songs by Deerhoof”

- Query by example. Example: “Find playlists that are similar to this playlist”

- Popularity. Example: “Play me the most popular playlists” or “Play me the Billboard Hot 100”

- Social discovery. Example: “What playlists are my friends listening to now?”

- Expert curated. Example: “Give me the Pitchfork 100 playlist”

- Machine made. Example: “Build me a playlist that is similar to this playlist” or “Build me a playlist for tags: ‘emo’, ‘female’, ‘90s’”

- Recommended playlists. Example: “Find me playlists that I will like based upon my music taste and my context (e.g. the time of day).”
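To make two of these options concrete (search by tag and query by example), here is a minimal Java sketch. The `Playlist` record and the in-memory list are hypothetical stand-ins, not MOG's data model, and Jaccard similarity over track sets is just one simple similarity measure chosen for illustration; a real service would likely also weigh artists, tags and play counts.

```java
import java.util.*;
import java.util.stream.*;

public class PlaylistDiscovery {
    // Hypothetical playlist model: a name, a set of tags and a list of track IDs.
    record Playlist(String name, Set<String> tags, List<String> tracks) {}

    // Search by tag: keep playlists that carry every requested tag.
    static List<Playlist> byTags(List<Playlist> all, Set<String> wanted) {
        return all.stream()
                .filter(p -> p.tags().containsAll(wanted))
                .collect(Collectors.toList());
    }

    // Jaccard similarity of two playlists' track sets: |A intersect B| / |A union B|.
    static double jaccard(Playlist a, Playlist b) {
        Set<String> inter = new HashSet<>(a.tracks());
        inter.retainAll(new HashSet<>(b.tracks()));
        Set<String> union = new HashSet<>(a.tracks());
        union.addAll(b.tracks());
        return union.isEmpty() ? 0.0 : (double) inter.size() / union.size();
    }

    // Query by example: rank every other playlist by similarity to the seed, keep the top k.
    static List<Playlist> similarTo(Playlist seed, List<Playlist> all, int k) {
        return all.stream()
                .filter(p -> p != seed)
                .sorted(Comparator.comparingDouble((Playlist p) -> jaccard(seed, p)).reversed())
                .limit(k)
                .collect(Collectors.toList());
    }

    public static void main(String[] args) {
        Playlist a = new Playlist("sad xmas", Set.of("emo", "christmas"), List.of("t1", "t2", "t3"));
        Playlist b = new Playlist("emo classics", Set.of("emo"), List.of("t1", "t2", "t4"));
        Playlist c = new Playlist("party", Set.of("pop"), List.of("t9"));
        List<Playlist> all = List.of(a, b, c);
        System.out.println(byTags(all, Set.of("emo", "christmas")).get(0).name()); // sad xmas
        System.out.println(similarTo(a, all, 1).get(0).name());                    // emo classics
    }
}
```

Jaccard is used here only because it needs nothing beyond the track lists themselves; "sad xmas" and "emo classics" share two of four distinct tracks, so their similarity is 0.5.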

It’s good to see MOG working hard to make the creation of playlists easy. Next step is to make finding new and interesting playlists easy.

Getting Ready for Boston Music Hack Day

Posted by Paul in Music, The Echo Nest on November 11, 2009

Boston Music Hack Day starts in exactly 10 days. At the Hack day you’ll have about 24 hours of hacking time to build something really cool. If you are going to the Hack Day you will want to maximize your hacking time, so here are a few tips to help you get ready.

- Come with an idea or two but be flexible – one of the really neat bits about the Music Hack Day is working with someone that you’ve never met before. So have a few ideas in your back pocket, but keep your ears open on Saturday morning for people who are doing interesting things, introduce yourself and maybe you’ve made a team. At previous hack days, all the best hacks seemed to be team efforts. If you have an idea that you’d like some help on, or if you are just looking for someone to collaborate with, check out and/or post to the Music Hack Day Ideas Wiki.

- Prep your APIs – there are a number of APIs that you might want to use to create your hack. Before you get to the Hack Day, take a look at the APIs, figure out which ones you might want to use, and get ready to use them. For instance, if you want to build music exploration and discovery tools or apps that remix music, you might be interested in the Echo Nest APIs. To get a head start, register for an API key before the Hack Day, browse the API documentation, then check out our resources page for code examples and to find a client library in your favorite language.

- Decide if you would like to win a prize – of course the prime motivation for hacking is the joy of building something really neat, but there will be some prizes awarded to the best hacks. Some of the prizes are general prizes, some are category prizes (‘best iPhone / iPod hack’) and some are company-specific prizes (‘best application that uses the Echo Nest APIs’). If you are shooting for a specific prize, make sure you know the conditions for that prize. (I have my eye on the Ultra 24 workstation and display, graciously donated by my alma mater.)

To get the Hack Day juices flowing, check out this nifty slide deck on Music Hack Day created by Henrik Berggren:

Spotifying over 200 Billboard charts

Posted by Paul in code, data, fun, web services on November 8, 2009

Yesterday, I Spotified the Billboard Hot 100 – making it easy to listen to the charts. This morning I went one step further and Spotified all of the Billboard Album and Singles charts.

The Spotified Billboard Charts

That’s 128 singles charts (including charts like Luxembourg Digital Songs, Hot Mainstream R&B/Hip-Hop Songs and Hot Ringtones) and 83 album charts, including charts like Top Bluegrass Albums, Top Cast Albums and Top R&B Catalog Albums.

In these 211 charts you’ll find 6,482 Spotify tracks, 2,354 of them unique (some tracks, like Miley Cyrus’s ‘The Climb’, appear on many charts).
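The gap between the total and the unique count comes from tracks that sit on several charts at once. A minimal Java sketch of that bookkeeping, assuming each chart has already been resolved to a list of Spotify track IDs (the chart contents and IDs in `main` are made up for illustration):

```java
import java.util.*;

public class ChartTrackCounter {
    // Total chart entries vs. distinct track IDs across all charts.
    record Counts(int total, int unique) {}

    // Each chart is modeled (hypothetically) as chart name -> list of resolved Spotify track IDs.
    static Counts countTracks(Map<String, List<String>> charts) {
        int total = 0;
        Set<String> unique = new HashSet<>();
        for (List<String> ids : charts.values()) {
            total += ids.size();
            unique.addAll(ids); // a track that appears on many charts is stored only once
        }
        return new Counts(total, unique.size());
    }

    public static void main(String[] args) {
        Map<String, List<String>> charts = Map.of(
                "Hot 100", List.of("id-climb", "id-down"),
                "Hot Digital Songs", List.of("id-climb", "id-feeling"),
                "Hot Ringtones", List.of("id-climb"));
        Counts c = countTracks(charts);
        System.out.println(c.total() + " entries, " + c.unique() + " unique tracks"); // 5 entries, 3 unique tracks
    }
}
```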

Building the charts stretches the limits of the Billboard API (only 1,500 calls allowed per day!) as well as my patience: making about 10K calls to the Spotify API without exceeding its rate limit means it takes a couple of hours to resolve all the tracks. Nevertheless, it was a fun little project, and it shows off the Spotify catalog quite well. For popular western music, they have really good coverage.
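One simple way to stay under a rate limit is to space calls out evenly. The Java sketch below is a hypothetical `CallThrottle` class, not part of any Spotify or Billboard client library, and the rate passed to it is an assumption you would set from the service's published limit. As a rough check on the "couple of hours": at an assumed 1.5 calls per second, 10,000 calls take about 10,000 / 1.5 ≈ 6,700 seconds, a little under two hours.

```java
import java.util.concurrent.TimeUnit;

public class CallThrottle {
    private final long minIntervalNanos; // minimum spacing between two permitted calls
    private long nextAllowed;            // System.nanoTime() value at which the next call may go

    public CallThrottle(double callsPerSecond) {
        if (callsPerSecond <= 0) throw new IllegalArgumentException("rate must be positive");
        this.minIntervalNanos = (long) (1_000_000_000L / callsPerSecond);
        this.nextAllowed = System.nanoTime();
    }

    // Blocks until the caller may make its next request, then reserves the following slot.
    public synchronized void acquire() throws InterruptedException {
        long now = System.nanoTime();
        long wait = nextAllowed - now; // compare by difference, as nanoTime values may overflow
        if (wait > 0) {
            TimeUnit.NANOSECONDS.sleep(wait);
            now = nextAllowed;
        }
        nextAllowed = now + minIntervalNanos;
    }

    public static void main(String[] args) throws InterruptedException {
        CallThrottle throttle = new CallThrottle(2.0); // hypothetical limit: 2 calls per second
        for (int i = 0; i < 4; i++) {
            throttle.acquire();
            // A real client would issue its web-service request here.
            System.out.println("call " + i + " at " + System.currentTimeMillis() + " ms");
        }
    }
}
```

Spacing calls evenly is the simplest policy; it never bursts, at the cost of not using any burst allowance a service might offer.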

Requests for the Billboard API: Please increase the usage limit by 10 times. 1,500 calls per day is really limiting, especially when trying to debug a client library.

Requests for the Spotify API: Please, Please Please!!! – make it possible to create and modify Spotify playlists via web services.

The Billboard Hot 100. In Spotify.

Posted by Paul in code, fun, web services on November 7, 2009

Inspired by Oscar’s 1001 Albums You Must Hear Before You Die …. in Spotify, I put together an app that gets the Top charts from Billboard (using the nifty Billboard API) and resolves each track to a Spotify ID, giving you a Top 100 chart that you can play.
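The resolve step can be sketched as: for each chart entry, search by artist and title, then keep the most popular matching version. This is a hypothetical Java sketch, not the code behind the list below. `TrackSearch`, `Track` and `ChartEntry` are illustrative stand-ins (the JSpot example later on this page shows tracks exposing `getId()` and `getPopularity()`), and "pick the most popular version" is an assumed heuristic.

```java
import java.util.*;

public class ChartResolver {
    // Hypothetical stand-ins; a real client library has its own classes.
    record Track(String id, double popularity) {}
    record ChartEntry(String artist, String title) {}

    interface TrackSearch {
        List<Track> search(String artist, String title);
    }

    // Resolve one chart entry to the ID of its most popular matching version, if any.
    static Optional<String> resolve(TrackSearch search, ChartEntry entry) {
        return search.search(entry.artist(), entry.title()).stream()
                .max(Comparator.comparingDouble(Track::popularity))
                .map(Track::id);
    }

    // Resolve a whole chart, keeping chart order and skipping entries with no match.
    static List<String> resolveChart(TrackSearch search, List<ChartEntry> chart) {
        List<String> ids = new ArrayList<>();
        for (ChartEntry e : chart) {
            resolve(search, e).ifPresent(ids::add);
        }
        return ids;
    }

    public static void main(String[] args) {
        // Fake in-memory search so the sketch runs without network access.
        TrackSearch fake = (artist, title) -> {
            if (title.equals("The Climb")) {
                return List.of(new Track("spotify:track:AAA", 0.41), new Track("spotify:track:BBB", 0.77));
            }
            return List.of();
        };
        List<ChartEntry> chart = List.of(
                new ChartEntry("Miley Cyrus", "The Climb"),
                new ChartEntry("Nobody", "Missing Song"));
        System.out.println(resolveChart(fake, chart)); // [spotify:track:BBB]
    }
}
```

Entries with no match are dropped rather than failing the whole chart, which is why a "Top 100" built this way can come out slightly shorter than 100.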

The Billboard Hot 100 in Spotify

Here’s the Top 10:

- I Gotta Feeling by The Black Eyed Peas (Weeks on chart: 16, Peak: 1)

- Down by Jay Sean featuring Lil Wayne (Weeks on chart: 13, Peak: 2)

- Party In The U.S.A. by Miley Cyrus (Weeks on chart: 7, Peak: 2)

- Run This Town by Jay-Z, Rihanna & Kanye West (Weeks on chart: 9, Peak: 2)

- Whatcha Say by Jason DeRulo (Weeks on chart: 7, Peak: 5)

- You Belong With Me by Taylor Swift (Weeks on chart: 23, Peak: 2)

- Paparazzi by Lady Gaga (Weeks on chart: 5, Peak: 7)

- Use Somebody by Kings Of Leon (Weeks on chart: 35, Peak: 4)

- Obsessed by Mariah Carey (Weeks on chart: 12, Peak: 7)

- Empire State Of Mind by Jay-Z + Alicia Keys (Weeks on chart: 3, Peak: 5)

Note that the Billboard API purposely offers up slightly stale charts, so this is really the Top 100 of a few weeks ago. I never listen to the Top 100, and I hadn’t heard of half of the artists, so listening to the Billboard Top 100 was quite enlightening. I was surprised at how far removed the Top 100 is from the music that I (and everyone I know) listen to every day.

To build the list I used my JSpot and a (yet to be released) Java client for the Billboard API. (If you are interested in this API client, let me know and I’ll stick it up on Google Code.) Of course, it’d be really nifty if you could get and listen to the chart for a given week (i.e. let me listen to the Billboard chart for the week that I graduated from high school). Sounds like something to do for Boston Music Hack Day.

Update: I’ve made another list that is a little bit more in line with my own music tastes:

The Spotified Billboard Top Modern Rock/Alternative Albums

Who isn’t coming to Boston Music Hackday?

Look at all the companies and organizations coming to Boston Music Hack Day. Time is running out, only a few slots are left, so if you want to go, sign up soon.

Build one of these at Boston Music Hack Day

Noah Vawter will be holding a workshop during the Boston Music Hack Day where you can learn how to build a working prototype Exertion Instrument. It is unclear at this time whether a leekspin lesson is included. Details are on the Exertion Instrument site.

Where is my JSpot?

Posted by Paul in code, fun, Music, web services on November 3, 2009

I like Spotify. I like Java. So I combined them. Here’s a Java client for the new Spotify metadata API: JSpot

This client lets you do things like search for a track by name and get the Spotify ID for the track so you can play the track in Spotify. This is useful for all sorts of things, like building web apps that use Spotify to play music, or building a Playdar resolver so you can use Spotify and Playdar together.

Here’s some sample code that prints out the popularity and Spotify ID for all versions of Weezer’s ‘My Name Is Jonas’.

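// Requires jspot.jar on the classpath, with JSpot's Spotify, Results and Track classes imported.
Spotify spotify = new Spotify();
// Search by artist and title; the results hold every matching version of the track.
Results<Track> results = spotify.searchTrack("Weezer", "My name is Jonas");
for (Track track : results.getItems()) {
    // Print each version's popularity (two decimals) followed by its Spotify ID.
    System.out.printf("%.2f %s%n", track.getPopularity(), track.getId());
}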

This prints out:

If you have Spotify and you click on those links, and those tracks are available in your locale you should hear Weezer’s nerd anthem.

You can search for artists, albums and tracks, and you can get all sorts of information back, such as release dates for albums, countries where the music can be played, track length, and popularity for artists, tracks and albums. It is very much a 0.1 release. The search functionality is complete, so it’s quite useful, but I haven’t implemented the ‘lookup’ methods yet. There are some javadocs. There’s a jar file: jspot.jar. And it is all open source: jspot at google code.

Poolcasting: an intelligent technique to customise music programmes for their audience

Posted by Paul in Music, music information retrieval, research on November 2, 2009

In preparation for his defense, Claudio Baccigalupo has put his thesis online: Poolcasting: an intelligent technique to customise music programmes for their audience. It looks like an in-depth study of playlisting.

Here’s the abstract:

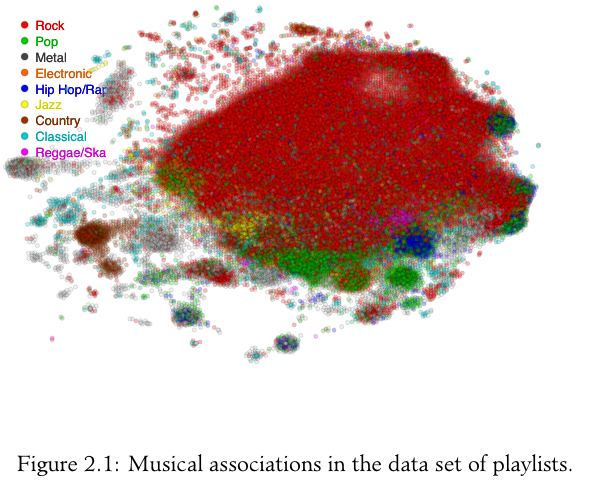

Poolcasting is an intelligent technique to customise musical sequences for groups of listeners. Poolcasting acts like a disc jockey, determining and delivering songs that satisfy its audience. Satisfying an entire audience is not an easy task, especially when members of the group have heterogeneous preferences and can join and leave the group at different times. The approach of poolcasting consists in selecting songs iteratively, in real time, favouring those members who are less satisfied by the previous songs played.

Poolcasting additionally ensures that the played sequence does not repeat the same songs or artists closely and that pairs of consecutive songs ‘flow’ well one after the other, in a musical sense. Good disc jockeys know from expertise which songs sound well in sequence; poolcasting obtains this knowledge from the analysis of playlists shared on the Web. The more two songs occur closely in playlists, the more poolcasting considers two songs as associated, in accordance with the human experiences expressed through playlists. Combining this knowledge and the music profiles of the listeners, poolcasting autonomously generates sequences that are varied, musically smooth and fairly adapted for a particular audience.

A natural application for poolcasting is automating radio programmes. Many online radios broadcast on each channel a random sequence of songs that is not affected by who is listening. Applying poolcasting can improve radio programmes, playing on each channel a varied, smooth and group-customised musical sequence. The integration of poolcasting into a Web radio has resulted in an innovative system called Poolcasting Web radio. Tens of people have connected to this online radio during one year providing first-hand evaluation of its social features. A set of experiments have been executed to evaluate how much the size of the group and its musical homogeneity affect the performance of the poolcasting technique.
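The abstract combines three ideas: songs are associated when they occur close together in shared playlists, the next song favours the listener who has been least satisfied so far, and recently played songs and artists are avoided. The Java sketch below is a toy illustration of those ideas only, not Baccigalupo's actual algorithm. The co-occurrence window of 2 positions, the 0.1 association bonus, the "memory" of recent plays and the additive satisfaction score are all arbitrary assumptions.

```java
import java.util.*;

public class PoolcastSketch {
    record Song(String id, String artist) {}

    // Order-independent key for an unordered pair of song IDs.
    static String key(String a, String b) {
        return a.compareTo(b) < 0 ? a + "|" + b : b + "|" + a;
    }

    // Association: how often two songs appear within `window` positions of each other in playlists.
    static Map<String, Integer> cooccurrence(List<List<String>> playlists, int window) {
        Map<String, Integer> counts = new HashMap<>();
        for (List<String> pl : playlists) {
            for (int i = 0; i < pl.size(); i++) {
                for (int j = i + 1; j < pl.size() && j - i <= window; j++) {
                    counts.merge(key(pl.get(i), pl.get(j)), 1, Integer::sum);
                }
            }
        }
        return counts;
    }

    // Greedily build a sequence of `length` songs for a group of listeners.
    static List<String> play(List<Song> pool, Map<String, Map<String, Double>> prefs,
                             List<List<String>> playlists, int length, int memory) {
        Map<String, Integer> assoc = cooccurrence(playlists, 2);
        Map<String, Double> satisfaction = new TreeMap<>(); // sorted, so ties go to the first name
        for (String listener : prefs.keySet()) satisfaction.put(listener, 0.0);
        Deque<Song> recent = new ArrayDeque<>();
        List<String> played = new ArrayList<>();
        for (int n = 0; n < length; n++) {
            // Serve the listener who has been least satisfied so far.
            String neediest = Collections.min(satisfaction.entrySet(), Map.Entry.comparingByValue()).getKey();
            Song prev = recent.peekLast();
            Song best = null;
            double bestScore = Double.NEGATIVE_INFINITY;
            for (Song s : pool) {
                // Skip songs and artists heard within the last `memory` plays.
                boolean tooSoon = recent.stream().anyMatch(
                        r -> r.id().equals(s.id()) || r.artist().equals(s.artist()));
                if (tooSoon) continue;
                double score = prefs.get(neediest).getOrDefault(s.id(), 0.0);
                // Small bonus for songs that co-occur with the previous song in playlists.
                if (prev != null) score += 0.1 * assoc.getOrDefault(key(prev.id(), s.id()), 0);
                if (score > bestScore) { bestScore = score; best = s; }
            }
            if (best == null) break; // every candidate was played too recently
            played.add(best.id());
            // Each listener's satisfaction grows by how much they like the chosen song.
            for (String listener : prefs.keySet()) {
                satisfaction.merge(listener, prefs.get(listener).getOrDefault(best.id(), 0.0), Double::sum);
            }
            recent.addLast(best);
            if (recent.size() > memory) recent.removeFirst();
        }
        return played;
    }

    public static void main(String[] args) {
        List<Song> pool = List.of(new Song("s1", "A"), new Song("s2", "B"), new Song("s3", "C"));
        Map<String, Map<String, Double>> prefs = Map.of(
                "alice", Map.of("s1", 1.0, "s3", 0.9),
                "bob", Map.of("s2", 1.0));
        List<List<String>> playlists = List.of(List.of("s1", "s3"), List.of("s2", "s1"));
        System.out.println(play(pool, prefs, playlists, 3, 2)); // [s1, s2, s3]
    }
}
```

In the example, alice's favourite plays first; bob, now the least satisfied, gets s2 next; and alice, tied with bob again and listed first, gets s3 because s1 and s2 are still inside the two-song memory.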

I’m quite interested in this topic so it looks like my reading list is set for the week.

The Future of the Music Industry

Posted by Paul in Music, recommendation on November 1, 2009

Last week NPR’s On the Media had a special show called ‘The Future of Music’ – all about the current state of the music industry and where it is all going. The hour is broken into a number of sections:

- Facing the (Free) music – about what has happened in the 10 years since Napster. Yep, Spotify gets a mention. Choice quote by Hilary Rosen: “Napster was a missed opportunity”

- They Say That I stole this – about the legalities of sampling (with interviews with Girl Talk among others)

- Played Out – interview with John Scher about the state of live music

- Teens on Tunes – interviews with teens about where they get their music. Answer: Limewire

- Charting the Charts – interesting piece about the charts: the history of Billboard, and the next generation of tracking, including an interview with Bandmetrics founder Duncan Freeman (way to go Duncan!)

- Why I’m not afraid to take your money – interesting interview with Amanda Palmer about how artists make money in today’s music world

One thing that they didn’t talk about at all was music discovery: no mention of the role of the critic, music blogs or Hype Machine, no discussion of the role social sites like Last.fm play in music discovery, and no mention of automated music discovery tools like recommenders and playlisters. Maybe next year, when everyone has access to infinite music, we’ll see more emphasis on discovery tools.

It was a great show. Highly recommended: NPR’s On the Media Special Edition: The Future of the Music Industry