Archive for category ismir

ON THE APPLICABILITY OF PEER-TO-PEER DATA IN MUSIC INFORMATION RETRIEVAL RESEARCH

Posted by Paul in ismir, Music, music information retrieval, research on August 11, 2010

ON THE APPLICABILITY OF PEER-TO-PEER DATA IN MUSIC INFORMATION RETRIEVAL RESEARCH (pdf)

Noam Koenigstein, Yuval Shavitt, Ela Weinsberg, and Udi Weinsberg

Abstract: Peer-to-Peer (p2p) networks are being increasingly adopted as an invaluable resource for various music information retrieval (MIR) tasks, including music similarity, recommendation and trend prediction. However, these networks are usually extremely large and noisy, which raises doubts regarding the ability to actually extract sufficiently accurate information.

This paper evaluates the applicability of using data originating from p2p networks for MIR research, focusing on partial crawling, inherent noise and localization of songs and search queries. These aspects are quantified using songs collected from the Gnutella p2p network. We show that the power-law nature of the network makes it relatively easy to capture an accurate view of the main-streams using relatively little effort. However, some applications, like trend prediction, mandate collection of the data from the “long tail”, hence a much more exhaustive crawl is needed. Furthermore, we present techniques for overcoming noise originating from user generated content and for filtering non informative data, while minimizing information loss.

Observation – CF systems tend to outperform content-based systems until you get into the long tail – so to improve CF systems, you need more long tail data. This work explores how to get more long tail data by mining p2p networks.

P2P systems have some problems: privacy concerns, difficult data collection, high user churn, very noisy data, sparsity, and users who delete content from shared folders right away.

P2P mining: shared folders are useful for similarity; search queries are useful for trends.

Lots of p2p challenges and steps – getting IP addresses for p2p nodes, filtering out non-musical content, geo-identification, anonymization.

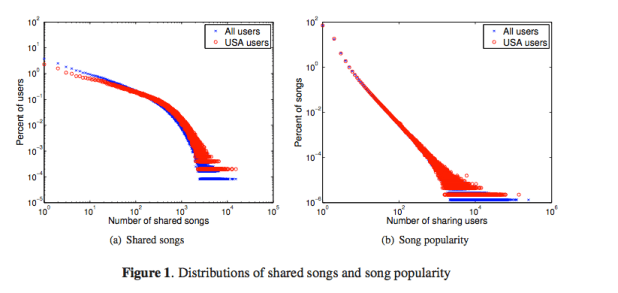

Dealing with sparsity: 1.2 million users, but an average of 1 artist/song data point for each artist/song relation. These graphs show song popularity in shared folders. They use this data to help filter out non-typical users.

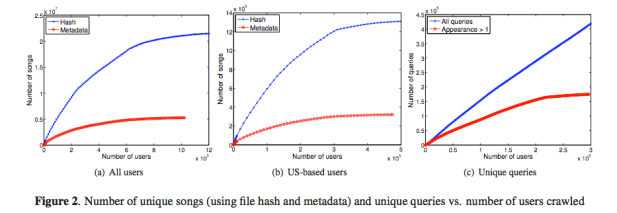

Identifying songs: Use the hash file – but of course many songs have many different digital copies – so they also look at the (noisy) metadata.
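
To make the difference between the two ways of identifying songs concrete, here is a minimal sketch that groups shared files either by file hash or by a normalized artist/title key. The record layout, the `normalize` rule and the sample files are all hypothetical; this is not the authors' pipeline, just an illustration of why metadata can merge copies that hashes keep apart.

```python
import re
from collections import defaultdict

def normalize(text):
    """Lowercase, drop bracketed suffixes and punctuation, collapse whitespace."""
    text = text.lower()
    text = re.sub(r"[\(\[].*?[\)\]]", " ", text)  # e.g. "(Remastered)", "[live]"
    text = re.sub(r"[^a-z0-9 ]", " ", text)
    return " ".join(text.split())

def group_by_hash(files):
    """One group per distinct file hash: every digital copy is its own song."""
    groups = defaultdict(list)
    for f in files:
        groups[f["hash"]].append(f)
    return groups

def group_by_metadata(files):
    """One group per normalized (artist, title) pair: copies collapse together."""
    groups = defaultdict(list)
    for f in files:
        key = (normalize(f["artist"]), normalize(f["title"]))
        groups[key].append(f)
    return groups

# Hypothetical shared-folder records (not real crawl data).
files = [
    {"hash": "a1", "artist": "Led Zeppelin", "title": "Kashmir"},
    {"hash": "b2", "artist": "led zeppelin", "title": "Kashmir (Remastered)"},
    {"hash": "c3", "artist": "Led  Zeppelin", "title": "KASHMIR"},
    {"hash": "d4", "artist": "Nirvana", "title": "Lithium"},
]

songs_by_hash = group_by_hash(files)
songs_by_metadata = group_by_metadata(files)
```

In this toy data the three Led Zeppelin files are separate songs under hashing but a single song under the metadata key. Real metadata is much noisier, so the normalization would need to be more forgiving than this.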

Songs Discovery Rate

Once you reach about 1/3 of the network you’ve found most of the tracks if you use metadata for resolving. If you use the hashes, you need to crawl 70% of the network.

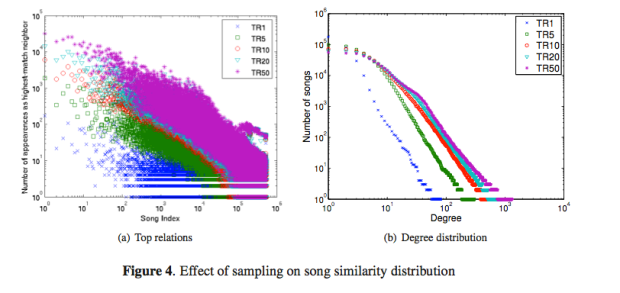
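
A discovery-rate curve like this can be explored with a toy simulation. The sketch below assumes a Zipf-like song popularity (weight 1/rank) and fixed-size shared folders; the function name, the parameters and the popularity model are my assumptions, not values from the paper.

```python
import random
from itertools import accumulate

def simulate_discovery(num_users=2000, num_songs=5000, songs_per_user=20, seed=0):
    """Return (fraction of users crawled, fraction of songs discovered) pairs."""
    rng = random.Random(seed)
    # Assumed Zipf-like popularity: the r-th most popular song has weight 1/r.
    cum_weights = list(accumulate(1.0 / rank for rank in range(1, num_songs + 1)))
    song_ids = range(num_songs)
    users = [
        set(rng.choices(song_ids, cum_weights=cum_weights, k=songs_per_user))
        for _ in range(num_users)
    ]
    total = len(set().union(*users))  # songs present anywhere in the network
    seen = set()
    curve = []
    for crawled, folder in enumerate(users, start=1):
        seen |= folder
        curve.append((crawled / num_users, len(seen) / total))
    return curve

curve = simulate_discovery(num_users=200, num_songs=500, songs_per_user=10)
```

Plotting `curve` shows how quickly new songs stop turning up as more of the (simulated) network is crawled. How closely that matches the real Gnutella numbers depends entirely on the assumed popularity model.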

Using shared folders for similarity

There’s a preferential attachment model for popular songs

Conclusion: P2P data is a good source of long tail data, but dealing with the noisy data is hard. The p2p data is especially good for building similarity models localized to countries. A good talk from someone with lots of experience with p2p stuff.

MUSIC EMOTION RECOGNITION: A STATE OF THE ART REVIEW

Posted by Paul in ismir, music information retrieval, research on August 11, 2010

MUSIC EMOTION RECOGNITION: A STATE OF THE ART REVIEW – Youngmoo E. Kim, Erik M. Schmidt, Raymond Migneco, Brandon G. Morton, Patrick Richardson, Jeffrey Scott, Jacquelin A. Speck, and Douglas Turnbull (pdf)

From the paper: Recognizing musical mood remains a challenging problem primarily due to the inherent ambiguities of human emotions. Though research on this topic is not as mature as some other Music-IR tasks, it is clear that rapid progress is being made. In the past 5 years, the performance of automated systems for music emotion recognition using a wide range of annotated and content-based features (and multi-modal feature combinations) have advanced significantly. As with many Music-IR tasks open problems remain at all levels, from emotional representations and annotation methods to feature selection and machine learning.

While significant advances have been made, the most accurate systems thus far achieve predictions through large-scale machine learning algorithms operating on vast feature sets, sometimes spanning multiple domains, applied to relatively short musical selections. Oftentimes, this approach reveals little in terms of the underlying forces driving the perception of musical emotion (e.g., varying contributions of features) and, in particular, how emotions in music change over time. In the future, we anticipate further collaborations between Music-IR researchers, psychologists, and neuroscientists, which may lead to a greater understanding of not only mood within music, but human emotions in general. Furthermore, it is clear that individuals perceive emotions within music differently. Given the multiple existing approaches for modeling the ambiguities of musical mood, a truly personalized system would likely need to incorporate some level of individual profiling to adjust its predictions.

This paper has provided a broad survey of the state of the art, highlighting many promising directions for further research. As attention to this problem increases, it is our hope that the progress of this research will continue to accelerate in the near future.

My notes:

MIREX performance on mood classification has held steady for the last few years. Most mood classification systems in MIREX are just adapted genre classifiers.

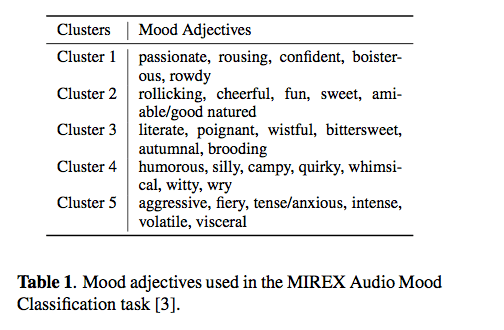

Categorical vs. dimensional

Categorical: MIREX classifies mood into 5 clusters:

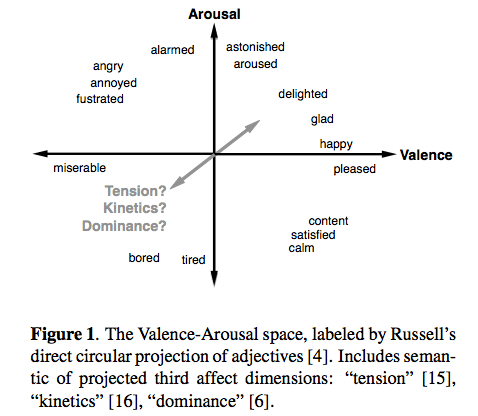

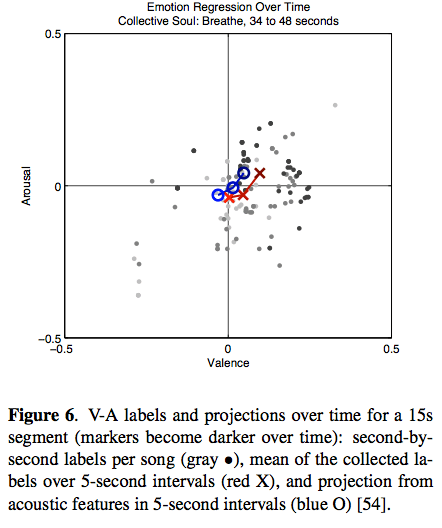

Dimensional: the ever-popular Valence-Arousal space, sometimes called the Thayer mood model:

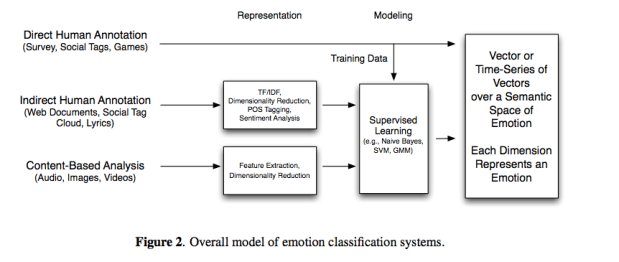

Typical emotion classification system

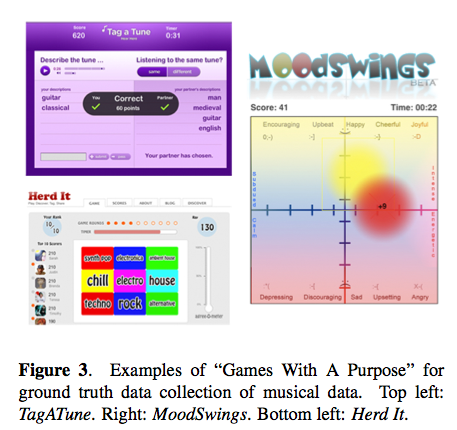

Ground Truth

A big challenge is to come up with ground truth for training a recognition system. Last.fm tags, GWAP, AMG labels and web documents are common sources.

Lyrics – using lyrics alone has not been too successful for mood classification.

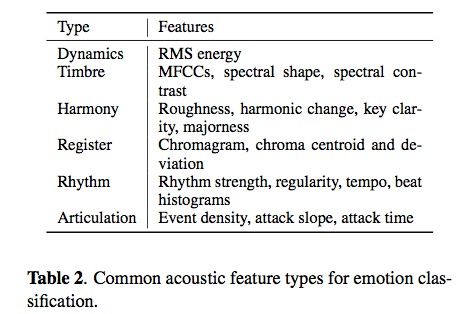

Content-based methods – typical features for mood:

Youngmoo’s latest work (with Erik Schmidt) is showing the distribution and change of emotion over time.

Hybrid systems

- Audio + Lyrics – some to high improvement

- Audio + Tags = good improvement

- Audio + Images = using album art to derive associations to mood

Conclusions – Mood recognition hasn’t improved much in recent years – probably because most systems are not really designed specifically for mood.

This was a great overview of the state-of-the-art. I’d be interested in hearing a much longer version of this talk. The paper and the references will be a great resource for anyone who’s interested in pursuing mood classification.

Solving Misheard Lyric Search Queries ….

Posted by Paul in ismir, music information retrieval, research on August 10, 2010

Solving Misheard Lyric Search Queries using a Probabilistic Model of Speech Sounds

Hussein Hirjee and Daniel G. Brown

People often use lyrics to find songs – and they get them wrong. One example, for Nirvana: “Don’t walk on guns, burn your friends”. Approach: use phonetic similarity. They adapt BLAST, from DNA sequence matching, to the problem. Lyrics are represented as phoneme sequences and compared using a scoring matrix.
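
To give a feel for phonetic matching, here is a minimal sketch using Smith-Waterman local alignment, the exact dynamic-programming method that BLAST approximates heuristically. It is a stand-in, not the authors' implementation. The scores, the gap penalty, the `SIMILAR` confusion groups and the rough phoneme spellings are all assumptions for illustration.

```python
def local_alignment_score(query, target, score, gap=-2):
    """Best Smith-Waterman local alignment score between two phoneme lists."""
    rows, cols = len(query) + 1, len(target) + 1
    table = [[0] * cols for _ in range(rows)]
    best = 0
    for i in range(1, rows):
        for j in range(1, cols):
            match = table[i - 1][j - 1] + score(query[i - 1], target[j - 1])
            delete = table[i - 1][j] + gap
            insert = table[i][j - 1] + gap
            # Local alignment: a cell never drops below zero.
            table[i][j] = max(0, match, delete, insert)
            best = max(best, table[i][j])
    return best

# Hypothetical groups of easily confused phonemes (a learned model would
# replace this with scores estimated from aligned misheard/real lyrics).
SIMILAR = [{"M", "N"}, {"B", "P"}, {"D", "T"}, {"S", "Z"}, {"G", "K"}]

def phoneme_score(a, b):
    if a == b:
        return 3
    if any(a in group and b in group for group in SIMILAR):
        return 1
    return -2

misheard = ["K", "IH", "S", "DH", "IH", "S", "G", "AY"]   # "kiss this guy"
real = ["K", "IH", "S", "DH", "AH", "S", "K", "AY"]       # "kiss the sky"
unrelated = ["L", "OW", "D", "AH", "P"]

score_real = local_alignment_score(misheard, real, phoneme_score)
score_unrelated = local_alignment_score(misheard, unrelated, phoneme_score)
```

The misheard query scores much higher against the real lyric than against the unrelated one, which is the property a lyric search needs when ranking candidates.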

For training, they get data from ‘misheard lyrics’ sites like KissThisGuy.com. They align the misheard with the real lyrics – to build a model of frequently misheard phonemes. They tested with KissThisGuy.com misheard lyrics. Scored with 5 different models.

Evaluation: Mean Reciprocal Rank and hit rate by rank. The approach compared well with previous techniques. Still, 17% of lyrics are not identified – some are just bad queries, but short queries are also a source of errors. They also looked at phoneme confusion, in particular confusions caused by singing.
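
For reference, the two evaluation measures can be computed as below. This is a minimal sketch: the function names and the sample ranks are mine, and reading the second measure as "fraction of queries whose correct song appears within the top k" is an assumption.

```python
def mean_reciprocal_rank(ranks):
    """ranks: 1-based rank of the correct song per query, or None if not found."""
    return sum((1.0 / r) if r else 0.0 for r in ranks) / len(ranks)

def hit_rate_at(ranks, k):
    """Fraction of queries whose correct song is ranked k or better."""
    return sum(1 for r in ranks if r is not None and r <= k) / len(ranks)

# Hypothetical results for four queries; the third was never identified.
ranks = [1, 2, None, 4]

mrr = mean_reciprocal_rank(ranks)
hits_at_1 = hit_rate_at(ranks, 1)
hits_at_10 = hit_rate_at(ranks, 10)
```

Unidentified queries contribute zero to both measures, so they pull the scores down rather than being silently dropped.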

Future work: look at phoneme trigrams, and build a web site. A questioner suggests that they create a mondegreen generator.

Good presentation, interesting, fun problem area.

APPROXIMATE NOTE TRANSCRIPTION FOR THE IMPROVED IDENTIFICATION OF DIFFICULT CHORDS

Posted by Paul in events, ismir, music information retrieval, research on August 10, 2010

APPROXIMATE NOTE TRANSCRIPTION FOR THE IMPROVED IDENTIFICATION OF DIFFICULT CHORDS – Matthias Mauch and Simon Dixon

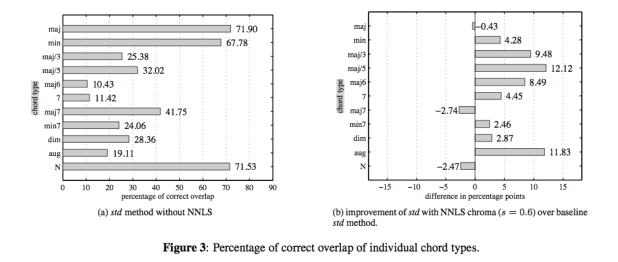

This is a new chroma extraction method using a non-negative least squares (NNLS) algorithm for prior approximate note transcription. Twelve different chroma methods were tested for chord transcription accuracy on popular music, using an existing high-level probabilistic model. The NNLS chroma features achieved top results of 80% accuracy that significantly exceed the state of the art by a large margin.

We have shown that the positive influence of the approximate transcription is particularly strong on chords whose harmonic structure causes ambiguities, and whose identification is therefore difficult in approaches without prior approximate transcription. The identification of these difficult chord types was substantially increased by up to twelve percentage points in the methods using NNLS transcription.
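
To illustrate the idea of approximate transcription before chroma, here is a toy sketch: a spectrum on a semitone grid is explained as a non-negative mix of note templates, and the note activations are then folded onto 12 pitch classes. Everything here is an assumption for illustration: the three-partial template, the grid size, and the simple multiplicative-update solver, which I use in place of whatever NNLS solver the authors used.

```python
PARTIALS = [(0, 1.0), (12, 0.5), (19, 0.25)]  # (semitone offset, amplitude), assumed
NUM_NOTES = 24
NUM_BINS = NUM_NOTES + 19  # room for the highest partial of the highest note

def note_dictionary():
    """Matrix A with one column per note: its partials on the semitone grid."""
    A = [[0.0] * NUM_NOTES for _ in range(NUM_BINS)]
    for note in range(NUM_NOTES):
        for offset, amplitude in PARTIALS:
            A[note + offset][note] = amplitude
    return A

def nnls_multiplicative(A, b, iterations=300, eps=1e-12):
    """Approximately minimize ||Ax - b|| subject to x >= 0.

    Uses multiplicative updates, which keep x non-negative as long as
    A and b are non-negative. This is a simple stand-in solver.
    """
    rows, cols = len(A), len(A[0])
    x = [1.0] * cols
    Atb = [sum(A[i][j] * b[i] for i in range(rows)) for j in range(cols)]
    for _ in range(iterations):
        Ax = [sum(A[i][j] * x[j] for j in range(cols)) for i in range(rows)]
        AtAx = [sum(A[i][j] * Ax[i] for i in range(rows)) for j in range(cols)]
        x = [x[j] * Atb[j] / (AtAx[j] + eps) for j in range(cols)]
    return x

A = note_dictionary()
chord = [0, 4, 7]  # a major triad on the lowest note of the grid
spectrum = [sum(A[i][n] for n in chord) for i in range(NUM_BINS)]

activations = nnls_multiplicative(A, spectrum)

# Fold note activations onto 12 pitch classes to get a chroma vector.
chroma = [0.0] * 12
for note, value in enumerate(activations):
    chroma[note % 12] += value
```

The point of the transcription step is that energy from upper partials is attributed back to the note that produced it, rather than leaking into other pitch classes as it would if the raw spectrum were folded directly.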

Matthias is an enthusiastic presenter who did not hesitate to jump onto the piano to demonstrate ‘difficult chords’. Very nice presentation.

Locating Tune Changes and Providing a Semantic Labelling of Sets of Irish Traditional Tunes

Posted by Paul in events, ismir, music information retrieval on August 10, 2010

Locating Tune Changes and Providing a Semantic Labelling of Sets of Irish Traditional Tunes by Cillian Kelly (pdf)

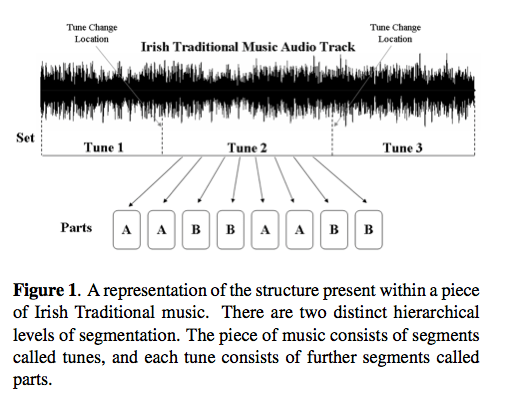

Abstract – An approach is presented which provides the tune change locations within a set of Irish Traditional tunes. Also provided are semantic labels for each part of each tune within the set. A set in Irish Traditional music is a number of individual tunes played segue. Each of the tunes in the set are made up of structural segments called parts. Musical variation is a prominent characteristic of this genre. However, a certain set of notes known as ‘set accented tones’ are considered impervious to musical variation. Chroma information is extracted at ‘set accented tone’ locations within the music. The resulting chroma vectors are grouped to represent the parts of the music. The parts are then compared with one another to form a part similarity matrix. Unit kernels which represent the possible structures of an Irish Traditional tune are matched with the part similarity matrix to determine the tune change locations and semantic part labels.

Identifying Repeated Patterns in Music …

Posted by Paul in events, ismir, music information retrieval on August 10, 2010

I am at ISMIR this week, blogging sessions and papers that I find interesting.

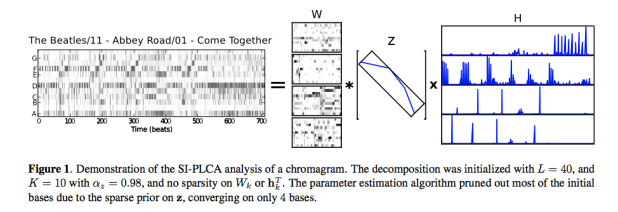

Identifying Repeated Patterns in Music using Sparse Convolutive Non-Negative Matrix Factorization – Ron Weiss, Juan Bello (pdf)

Problem: Looking at repetition in music – verse, chorus, repeated motifs. Can one identify high level and short term structure simultaneously from audio? Lots of math in this.

Ron describes an unsupervised, data-driven, method for automatically identifying repeated patterns in music by analyzing a feature matrix using a variant of sparse convolutive non-negative matrix factorization. They utilize sparsity constraints to automatically identify the number of patterns and their lengths, parameters that would normally need to be fixed in advance. The proposed analysis is applied to beat-synchronous chromagrams in order to concurrently extract repeated harmonic motifs and their locations within a song. They show how this analysis can be used for long-term structure segmentation, resulting in an algorithm that is competitive with other state-of-the-art segmentation algorithms based on hidden Markov models and self similarity matrices.

One particular application is riff identification for music thumbnailing. Another application is structure segmentation (verse, chorus, bridge, etc.).

The code is open-sourced here: http://ronw.github.com/siplca-segmentation/

This was a really interesting presentation, with great examples. Excellent work. This one should be a candidate for best paper IMHO.

What’s Hot? Estimating Country Specific Artist Popularity

I am at ISMIR this week, blogging sessions and papers that I find interesting.

What’s Hot? Estimating Country Specific Artist Popularity

Markus Schedl, Tim Pohle, Noam Koenigstein, Peter Knees

Traditional charts are not perfect: they are not available in all countries, have biases (sales vs. plays), don’t incorporate non-sales channels like p2p, and are inhomogeneous between countries.

Approach: Look at different channels: Google, Twitter, shared folders in Gnutella, Last.fm

- Google: “led zeppelin” + “france”, but with a popularity filter applied to reduce the effect of overall popularity

- Twitter – geolocated major cities of the world using Freebase. Used Twitter APIs with the #nowplaying hashtag along with the geolocation API to search for plays in a particular country

- P2P shared folders – Gnutella network – gathered a million Gnutella IP addresses, gathered the metadata for the shared folders at each address, used IP2location to resolve to a geographic location

- Last.fm – retrieve the top 400 listeners in each country. For these top 400 listeners, retrieve the top-played artists.

Evaluation: Retrieve the Last.fm most popular artists and use top-n rank overlap for scoring. Compared the 4 different sources. Each approach was prone to certain distortions and bias. In future work they hope to combine these sources to build a hybrid system that combines the best attributes of all approaches.
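
One simple reading of top-n rank overlap is the share of artists that two rankings have in common within their top n. The sketch below uses that definition, which may differ in detail from the paper's; the function name and the artist lists are made up.

```python
def top_n_overlap(ranking_a, ranking_b, n):
    """Fraction of the top-n items shared by two ranked lists."""
    top_a, top_b = set(ranking_a[:n]), set(ranking_b[:n])
    return len(top_a & top_b) / n

# Hypothetical country charts from two sources (placeholder artist names).
reference = ["Artist A", "Artist B", "Artist C", "Artist D", "Artist E"]
estimated = ["Artist B", "Artist A", "Artist F", "Artist C", "Artist G"]

overlap_top3 = top_n_overlap(reference, estimated, 3)
overlap_top5 = top_n_overlap(reference, estimated, 5)
```

Note that this measure ignores order within the top n: swapping the first two artists costs nothing, while a single missing artist does.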

Some preliminary Playlist Survey results

I’m conducting a somewhat informal survey on playlisting to compare how well playlists created by an expert radio DJ compare to those generated by a playlisting algorithm and a random number generator. So far, nearly 200 people have taken the survey (Thanks!). Already I’m seeing some very interesting results. Here’s a few tidbits (look for a more thorough analysis once the survey is complete).

People expect human DJs to make better playlists:

The survey asks people to try to identify the origin of a playlist (human expert, algorithm or random) and also rate each playlist. We can look at the ratings people give to playlists based on what they think the playlist origin is to get an idea of people’s attitudes toward human vs. algorithm creation.

| Predicted Origin | Rating |
|------------------|--------|
| Human expert     | 3.4    |
| Algorithm        | 2.7    |
| Random           | 2.1    |

We see that people expect humans to create better playlists than algorithms and that algorithms should give better playlists than random numbers. Not a surprising result.

Human DJs don’t necessarily make better playlists:

Now let’s look at how people rated playlists based on the actual origin of the playlists:

| Actual Origin | Rating |
|---------------|--------|
| Human expert  | 2.5    |
| Algorithm     | 2.7    |
| Random        | 2.6    |

These results are rather surprising. Algorithmic playlists are rated highest, while human-expert-created playlists are rated lowest, even lower than those created by the random number generator. There are lots of caveats here: I haven’t done any significance tests yet to see if the differences here really matter, the survey size is still rather small, and the survey doesn’t present real-world playlist listening conditions. Nevertheless, the results are intriguing.

I’d like to collect more survey data to flesh out these results. So if you haven’t already, please take the survey:

The Playlist Survey

Thanks!

10 Awesome things about ISMIR 2009

ISMIR 2009 is over – but it will not be soon forgotten. It was a wonderful event, with seemingly flawless execution. Some of my favorite things about the conference this year:

- The proceedings – distributed on a USB stick hidden in a pen that has a laser! And the battery for the laser recharges when you plug the USB stick into your computer. How awesome is that!? (The printed version is very nice too, but it doesn’t have a laser).

- The hotel – very luxurious while at the same time, very affordable. I had a wonderful view of Kobe, two very comfortable beds and a toilet with more controls than the dashboard on my first car.

- The presentation room – very comfortable with tables for those sitting towards the front, great audio and video and plenty of power and wireless for all.

- The banquet – held in the most beautiful room in the world with very exciting Taiko drumming as entertainment.

- The details – it seems like the organizing team paid attention to every little detail and request – from the taped numbers on the floor so that the 30 folks giving their 30 second pitches during poster madness would know just where to stand, to the signs on the coffeepots telling you that the coffee was being made, to the signs on the train to the conference center welcoming us to ISMIR 2009. It seems like no detail was left to chance.

- The food – our stomachs were kept quite happy – with sweet breads and pastries every morning, bento boxes for lunch, and coffee, juices, waters, and the mysterious beverage ‘black’ that I didn’t dare to try. My absolute favorite meal was the box lunch during the tutorial day – it was a box with a string – when you are ready to eat you give the string a sharp tug – wait a few minutes for the magic to do its job and then you open the box and eat a piping hot bowl of noodles and vegetables. Almost as cool as the laser-augmented proceedings.

- The city – Kobe is a really interesting city – I spent a few days walking around and was fascinated by it all. I really felt like I was walking around in the future. It was extremely clean, the people were very polite, friendly and always willing to help. Going into some parts of town was sensory overload, the colors, sounds, smells, the sights were overwhelming – it was really fun.

- The Keynote – music making robots – what more is there to say.

- The Program – the quality of papers was very high – there were some outstanding posters and oral presentations. Much thanks to George and Keiji for organizing the reviews to create a great program. (More on my favorite posters and papers in an upcoming post)

- f(mir) – The student-organized workshop looked at what MIR research would look like in 10, 20 or even 50 years (basically after I’m dead and gone). The presentations in this workshop were quite provocative – well done students!

I write this post as I sit in the airport in Osaka waiting for my flight home. I’m tired, but very energized to explore the many new ideas that I encountered at the conference. It was a great week. I want to extend my personal thanks to Professor Fujinaga and Professor Goto and the rest of the conference committee for putting together a wonderful week.

ISMIR Oral Session – Sociology and Ethnomusicology

Session Title: Sociology & Ethnomusicology

Session Chair: Frans Wiering (Universiteit Utrecht, Netherlands)

Exploring Social Music Behavior: An Investigation of Music Selection at Parties

Sally Jo Cunningham and David M. Nichols

Abstract: This paper builds an understanding of how music is currently listened to by small (fewer than 10 individuals) to medium-sized (10 to 40 individuals) gatherings of people—how songs are chosen for playing, how the music fits in with other activities of group members, who supplies the music, the hardware/software that supports song selection and presentation. This fine-grained context emerges from a qualitative analysis of a rich set of participant observations and interviews focusing on the selection of songs to play at social gatherings. We suggest features for software to support music playing at parties.

Notes:

- What happens at parties, especially informal small and medium sized parties

- Observations and interviews – 43 party observations

- Analyzing the data: key events that drive the activity, patterns of behavior, social roles

- Observations

- music selection cannot require fine motor movements (because of drinking and holding their drinks) (‘drinking dyslexia’)

- Need for large displays

- Party collection from different donors, sources, media

- Pre-party: host collection

- As party progresses: additional contributions (ipods, thumbdrives, etc)

- Challenge: bring together into a single browseable searchable collection

- Roles: Host, guest, guest of honor. Host provides initial collection, party playlist. High stress ‘guilty pleasures’

- Guests: may contribute, could insult the host, may modify party playlist if they receive an invitation from the host. Voting jukeboxes may help

- Guest of Honor had ultimate control

- insertion into playlist, looking for specific song, type of song.

- Delete songs from playlist without disrupting the party

- Setting and maintaining atmosphere

- softer for starts, move to faster louder, ending with chilling out

- What next: other situations, such as long car rides

- Questions: Spotify turned into the best party

Great study, great presentation.

Music and Geography: Content Description of Musical Audio from Different Parts of the World

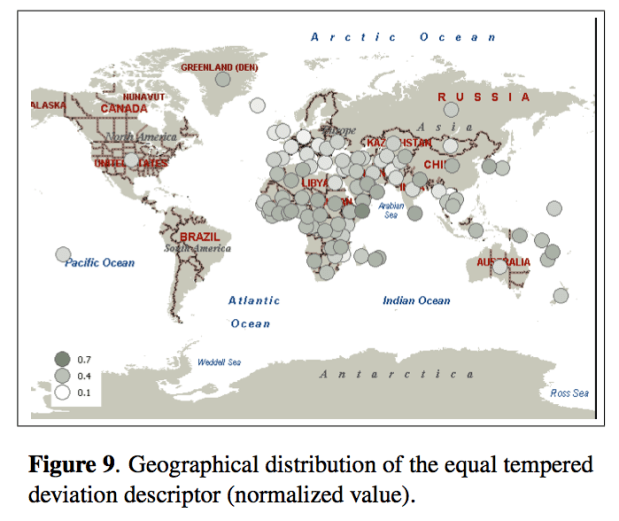

Emilia Gómez, Martín Haro and Perfecto Herrera

Abstract: This paper analyses how audio features related to different musical facets can be useful for the comparative analysis and classification of music from diverse parts of the world. The music collection under study gathers around 6,000 pieces, including traditional music from different geographical zones and countries, as well as a varied set of Western musical styles. We achieve promising results when trying to automatically distinguish music from Western and non-Western traditions. An accuracy of 86.68% is obtained using only 23 audio features, which are representative of distinct musical facets (timbre, tonality, rhythm), indicating their complementarity for music description. We also analyze the relative performance of the different facets and the capability of various descriptors to identify certain types of music. We finally present some results on the relationship between geographical location and musical features in terms of extracted descriptors. All the reported outcomes demonstrate that automatic description of audio signals together with data mining techniques provide means to characterize huge music collections from different traditions, complementing ethnomusicological manual analysis and providing a link between music and geography.

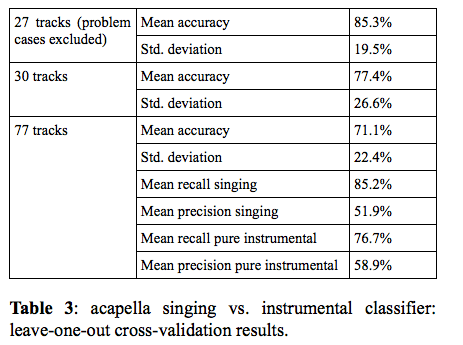

You Call That Singing? Ensemble Classification for Multi-Cultural Collections of Music Recordings

Polina Proutskova and Michael Casey

Abstract: The wide range of vocal styles, musical textures and recording techniques found in ethnomusicological field recordings leads us to consider the problem of automatically labeling the content to know whether a recording is a song or instrumental work. Furthermore, if it is a song, we are interested in labeling aspects of the vocal texture: e.g. solo, choral, acapella or singing with instruments. We present evidence to suggest that automatic annotation is feasible for recorded collections exhibiting a wide range of recording techniques and representing musical cultures from around the world. Our experiments used the Alan Lomax Cantometrics training tapes data set, to encourage future comparative evaluations. Experiments were conducted with a labeled subset consisting of several hundred tracks, annotated at the track and frame levels, as acapella singing, singing plus instruments or instruments only. We trained frame-by-frame SVM classifiers using MFCC features on positive and negative exemplars for two tasks: per-frame labeling of singing and acapella singing. In a further experiment, the frame-by-frame classifier outputs were integrated to estimate the predominant content of whole tracks. Our results show that frame-by-frame classifiers achieved 71% frame accuracy and whole track classifier integration achieved 88% accuracy. We conclude with an analysis of classifier errors suggesting avenues for developing more robust features and classifier strategies for large ethnographically diverse collections.
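
The abstract does not say how the frame outputs were integrated, so as a rough illustration here is the simplest option, a majority vote over per-frame labels. The function name, the label strings and the sample frames are hypothetical, and the authors' integration step may well be different.

```python
from collections import Counter

def track_label(frame_labels):
    """Return the most common frame label and the share of frames voting for it."""
    counts = Counter(frame_labels)
    label, count = counts.most_common(1)[0]
    return label, count / len(frame_labels)

# Hypothetical per-frame classifier outputs for one track.
frames = ["singing"] * 6 + ["instrumental"] * 3 + ["singing"]

label, support = track_label(frames)
```

A vote like this shows why whole-track accuracy can be higher than frame accuracy: individual frame errors are outvoted as long as most frames in a track are labeled correctly.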