Session Title: Sociology & Ethnomusicology

Session Chair: Frans Wiering (Universiteit Utrecht, Netherlands)

Exploring Social Music Behavior: An Investigation of Music Selection at Parties

Sally Jo Cunningham and David M. Nichols

Abstract: This paper builds an understanding of how music is currently listened to by small (fewer than 10 individuals) to medium-sized (10 to 40 individuals) gatherings of people: how songs are chosen for playing, how the music fits in with other activities of group members, who supplies the music, and the hardware/software that supports song selection and presentation. This fine-grained context emerges from a qualitative analysis of a rich set of participant observations and interviews focusing on the selection of songs to play at social gatherings. We suggest features for software to support music playing at parties.

Notes:

- What happens at parties, especially informal small and medium sized parties

- Observations and interviews – 43 party observations

- Analyzing the data: key events that drive the activity, patterns of behavior, social roles

- Observations

- Music selection cannot require fine motor movements, because guests are drinking and holding their drinks (“drinking dyslexia”)

- Need for large displays

- Party collection from different donors, sources, media

- Pre-party: host collection

- As the party progresses: additional contributions (iPods, thumb drives, etc.)

- Challenge: bring these together into a single browsable, searchable collection

- Roles: Host, guest, guest of honor. Host provides initial collection, party playlist. High stress ‘guilty pleasures’

- Guests: may contribute, could insult the host, may modify the party playlist if invited to by the host. Voting jukeboxes may help

- Guest of Honor had ultimate control

- insertion into playlist, looking for specific song, type of song.

- Delete songs from playlist without disrupting the party

- Setting and maintaining atmosphere

- Softer at the start, moving to faster and louder, ending with chilling out

- What next: other situations, e.g. a long car ride

- Questions: Spotify turned into the best party

Great study, great presentation.

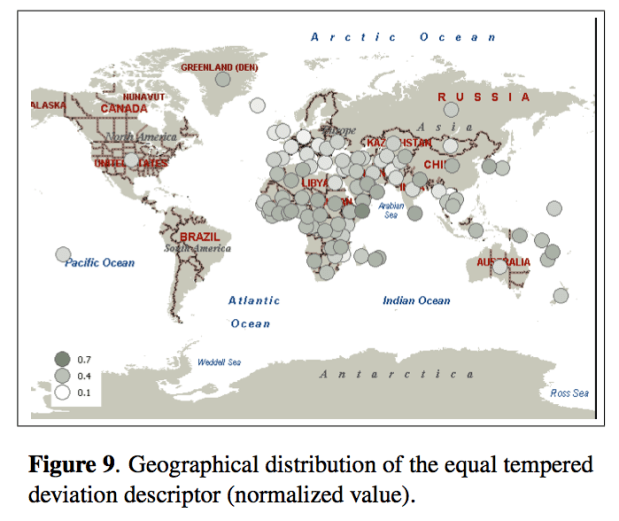

Music and Geography: Content Description of Musical Audio from Different Parts of the World

Emilia Gómez, Martín Haro and Perfecto Herrera

Abstract: This paper analyses how audio features related to different musical facets can be useful for the comparative analysis and classification of music from diverse parts of the world. The music collection under study gathers around 6,000 pieces, including traditional music from different geographical zones and countries, as well as a varied set of Western musical styles. We achieve promising results when trying to automatically distinguish music from Western and non-Western traditions. An accuracy of 86.68% is obtained using only 23 audio features, which are representative of distinct musical facets (timbre, tonality, rhythm), indicating their complementarity for music description. We also analyze the relative performance of the different facets and the capability of various descriptors to identify certain types of music. We finally present some results on the relationship between geographical location and musical features in terms of extracted descriptors. All the reported outcomes demonstrate that automatic description of audio signals together with data mining techniques provide means to characterize huge music collections from different traditions, complementing ethnomusicological manual analysis and providing a link between music and geography.

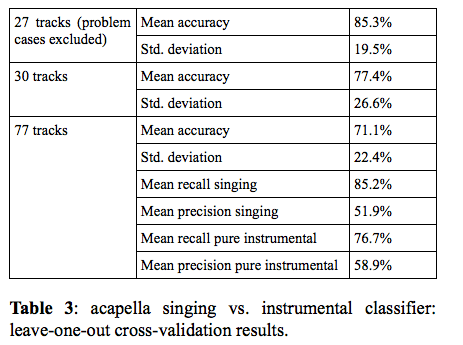

You Call That Singing? Ensemble Classification for Multi-Cultural Collections of Music Recordings

Polina Proutskova and Michael Casey

Abstract: The wide range of vocal styles, musical textures and recording techniques found in ethnomusicological field recordings leads us to consider the problem of automatically labeling the content to know whether a recording is a song or instrumental work. Furthermore, if it is a song, we are interested in labeling aspects of the vocal texture: e.g. solo, choral, acapella or singing with instruments. We present evidence to suggest that automatic annotation is feasible for recorded collections exhibiting a wide range of recording techniques and representing musical cultures from around the world. Our experiments used the Alan Lomax Cantometrics training tapes data set, to encourage future comparative evaluations. Experiments were conducted with a labeled subset consisting of several hundred tracks, annotated at the track and frame levels, as acapella singing, singing plus instruments or instruments only. We trained frame-by-frame SVM classifiers using MFCC features on positive and negative exemplars for two tasks: per-frame labeling of singing and acapella singing. In a further experiment, the frame-by-frame classifier outputs were integrated to estimate the predominant content of whole tracks. Our results show that frame-by-frame classifiers achieved 71% frame accuracy and whole track classifier integration achieved 88% accuracy. We conclude with an analysis of classifier errors suggesting avenues for developing more robust features and classifier strategies for large ethnographically diverse collections.
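
A quick sketch of how per-frame decisions might be rolled up into one label per track. The abstract does not say how the frame outputs were integrated, so the majority vote below is an assumption for illustration, not the authors' method; the function name and the label strings are hypothetical too.

```python
from collections import Counter


def predominant_label(frame_labels):
    """Return the most frequent per-frame label for one track.

    Majority voting is an assumed integration rule, not taken from the paper.
    On a tie, the label that appeared first in the track wins.
    """
    if not frame_labels:
        raise ValueError("track has no frames")
    return Counter(frame_labels).most_common(1)[0][0]


# Hypothetical per-frame classifier outputs for a single track.
frames = ["singing", "singing", "instruments", "singing", "instruments"]
print(predominant_label(frames))  # singing
```

A vote like this can tolerate a fair number of wrong frames, which may be part of why track-level accuracy (88%) comes out higher than frame-level accuracy (71%).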

#1 by subpixel on November 9, 2009 - 11:00 am

Re: music selection at parties

It would be interesting to see what sort of parties/gatherings came out of the list and whether any of them included DJs. Perhaps it is not so usual for student parties to have DJs, but a lot of “older” parties do, and this makes a massive difference, especially when many of the guests are “into” music.

Perhaps in this study, DJs (if any) were considered as guest(s) of honor.

It is also curious that, of 43 separate “observations”, the longest (party) was only 4 hours, and there was no mention of any kind of live music (eg a band or individual musician(s), performing or jamming). The progression of music is (probably) quite different for events of different expected duration, especially ones where people come and go at different times. eg, I don’t think it is unusual to go to a house party where people might arrive anywhere between 8pm and 3am, some perhaps later and possibly in distinct waves (such as people arriving in the morning for a daytime chillout, lunchtime BBQ or what have you).

I also wonder if the study predicted and made allowances for “observations” that spanned multiple venues, where an individual (or even group) might view a sequence of distinct “parties” as a single “event” (let’s call it a “night”).

-spxl