
Predicting Development of Research in Music Based on Parallels with Natural Language Processing

Posted by Paul in ismir, music information retrieval, research on August 12, 2010

This is the f(MIR) session (The Future of MIR), always a highlight at ISMIR. What will MIR be like in 5 or 20 years?


Jacek Wołkowicz and Vlado Kešelj

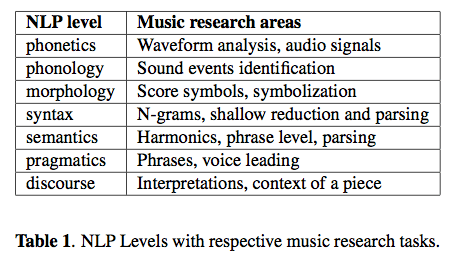

ABSTRACT – The hypothesis of the paper is that the domain of Natural Languages Processing (NLP) resembles current research in music so one could benefit from this by employing NLP techniques to music. In this paper the similarity between both domains is described. The levels of NLP are listed with pointers to respective tasks within the research of computational music. A brief introduction to history of NLP enables locating music research in this history. Possible directions of research in music, assuming its affinity to NLP, are introduced. Current research in generational and statistical music modeling is compared to similar NLP theories. The paper is concluded with guidelines for music research and information retrieval.

Notes: The speaker points out the similarities and differences between NLP and MIR.

- Most people are musically illiterate (i.e., they can’t read or write music)

- Music has a much more complex representation

- The space of all possible pieces is limited (I’m not sure I agree; the argument is that anyone can generate text or speech, but not so much music)

History of NLP

- Grammars, Chomsky, Turing Test

- A period of optimism: automatic translation, which failed

- Data mining and statistical methods; large corpora and resources such as the Brown Corpus and WordNet

- Semantics defined by statistics
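To make the NLP parallel concrete, here is a minimal sketch of my own (not the authors’ code) that treats melodic intervals like words and estimates a bigram model by counting, the way statistical NLP estimates word bigrams. The function names and the toy melodies (MIDI pitch numbers) are hypothetical.

```python
from collections import Counter

def intervals(pitches):
    """Turn a MIDI pitch sequence into successive intervals in semitones (transposition-invariant 'words')."""
    return [b - a for a, b in zip(pitches, pitches[1:])]

def ngrams(tokens, n):
    """All contiguous n-grams as tuples, exactly as for word n-grams in NLP."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]

def bigram_model(melodies):
    """Maximum-likelihood estimate of P(next interval | previous interval)."""
    pair_counts, context_counts = Counter(), Counter()
    for melody in melodies:
        for prev, nxt in ngrams(intervals(melody), 2):
            pair_counts[(prev, nxt)] += 1
            context_counts[prev] += 1
    return {pair: c / context_counts[pair[0]] for pair, c in pair_counts.items()}

# Hypothetical toy corpus: a rising scale, a falling scale, and a rise-and-fall.
melodies = [[60, 62, 64, 65, 67], [67, 65, 64, 62, 60], [60, 62, 64, 62, 60]]
model = bigram_model(melodies)
```

With these three melodies, `model[(2, 2)]` is 0.5: of the four bigrams that start with a rising whole step, two continue with another rising whole step. More (and more varied) data would sharpen these estimates, which is the "more data is better" argument in miniature.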

Algorithms vs. data: algorithms don’t matter much; it’s all about the data. More data is better.

Comparing music objects is similar to the text translation problem.

What needs to be done:

- Web crawling companies need to give MIR more data

- Convince publishers to annotate data

- Collect parallel data (MIDI / audio)

Accurate Real-time Windowed Time Warping

Posted by Paul in events, ismir, music information retrieval, research on August 11, 2010


Robert Macrae and Simon Dixon

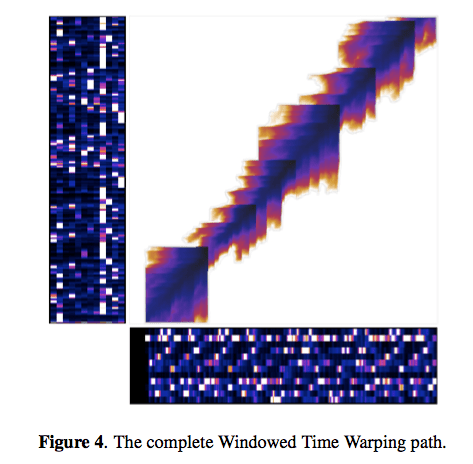

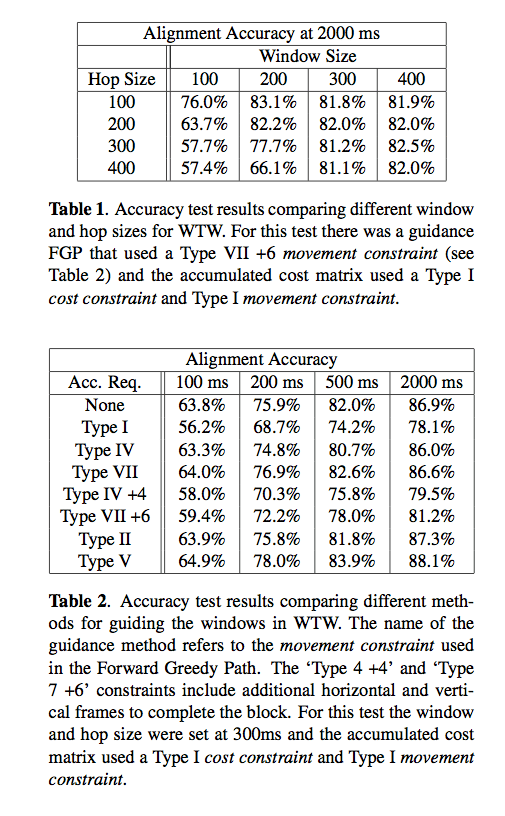

ABSTRACT – Dynamic Time Warping (DTW) is used to find alignments between two related streams of information and can be used to link data, recognise patterns or find similarities. Typically, DTW requires the complete series of both input streams in advance and has quadratic time and space requirements. As such DTW is unsuitable for real-time applications and is inefficient for aligning long sequences. We present Windowed Time Warping (WTW), a variation on DTW that, by dividing the path into a series of DTW windows and making use of path cost estimation, achieves alignments with an accuracy and efficiency superior to other leading modifications and with the capability of synchronising in real-time. We demonstrate this method in a score following application. Evaluation of the WTW score following system found 97.0% of audio note onsets were correctly aligned within 2000 ms of the known time. Results also show reductions in execution times over state-of-the-art efficient DTW modifications.

Idea: split the alignment path into a series of small DTW windows (sub-DTW frames). Each window’s path can be calculated sequentially, so less history needs to be retained, which is important for performance.

It works in linear time like previous systems, but thanks to the smaller history it can run entirely in memory, avoiding the need to store the history on disk. Nice demo of real-time time warping.
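The windowing idea can be sketched in Python. This is a minimal sketch under my own simplifying assumptions, not the authors’ WTW: frames are compared with Euclidean distance, each window is a plain DTW forced to end at its corner, only the first half of each window’s path is kept before the next window starts there, and WTW’s path cost estimation is omitted. `dtw_path`, `windowed_dtw` and the `window` size are hypothetical names.

```python
import numpy as np

def dtw_path(cost):
    """Classic DTW over a local cost matrix (quadratic time and space).
    Returns the optimal path from (0, 0) to the bottom-right corner."""
    n, m = cost.shape
    acc = np.full((n + 1, m + 1), np.inf)
    acc[0, 0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            acc[i, j] = cost[i - 1, j - 1] + min(acc[i - 1, j - 1], acc[i - 1, j], acc[i, j - 1])
    # Backtrack through the accumulated cost matrix (1-based indices inside acc).
    i, j = n, m
    path = [(i - 1, j - 1)]
    while (i, j) != (1, 1):
        i, j = min([(i - 1, j - 1), (i - 1, j), (i, j - 1)], key=lambda s: acc[s])
        path.append((i - 1, j - 1))
    return path[::-1]

def windowed_dtw(x, y, window=8):
    """Align feature sequences x (n, d) and y (m, d) with a chain of small DTW windows.
    Only a window-sized cost matrix exists at any time."""
    if window < 2:
        raise ValueError("window must be at least 2")
    n, m = len(x), len(y)
    path = [(0, 0)]
    i0, j0 = 0, 0
    while (i0, j0) != (n - 1, m - 1):
        i1, j1 = min(i0 + window, n), min(j0 + window, m)
        # Euclidean distances between the frames inside this window only.
        local = np.linalg.norm(x[i0:i1, None, :] - y[None, j0:j1, :], axis=2)
        sub = [(i0 + a, j0 + b) for a, b in dtw_path(local)]
        # The sub-path is forced to end at the window corner, so outside the
        # final window keep only its first half and start the next window there.
        keep = sub if (i1, j1) == (n, m) else sub[: max(2, len(sub) // 2)]
        path.extend(keep[1:])
        i0, j0 = path[-1]
    return path
```

For two sequences where one is a 2x time-stretch of the other (e.g. `windowed_dtw(x, np.repeat(x, 2, axis=0), window=4)`), the result is a monotonic path from `(0, 0)` to the last pair of frames, computed without ever building the full n x m cost matrix. Discarding the second half of each window trades some redundant computation for robustness to the artificial corner constraint.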

A Multi-pass Algorithm for Accurate Audio-to-Score Alignment

Posted by Paul in ismir, Music, music information retrieval, research on August 11, 2010


Bernhard Niedermayer and Gerhard Widmer

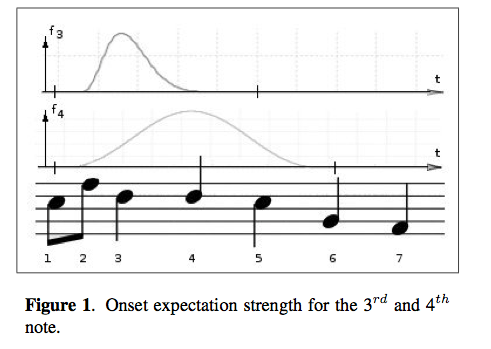

ABSTRACT – Most current audio-to-score alignment algorithms work on the level of score time frames; i.e., they cannot differentiate between several notes occurring at the same discrete time within the score. This level of accuracy is sufficient for a variety of applications. However, for those that deal with, for example, musical expression analysis such micro timings might also be of interest. Therefore, we propose a method that estimates the onset times of individual notes in a post-processing step. Based on the initial alignment and a feature obtained by matrix factorization, those notes for which the confidence in the alignment is high are chosen as anchor notes. The remaining notes in between are revised, taking into account the additional information about these anchors and the temporal relations given by the score. We show that this method clearly outperforms a reference method that uses the same features but does not differentiate between anchor and non-anchor notes.

The main contribution is the introduction of an expectation strength function modeling the expected onset time of a note between two anchors. Although the results are encouraging, there are specific circumstances where the algorithm fails, e.g., when the temporal displacement of notes is large.
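As a rough illustration of how two anchors constrain the notes between them, here is a sketch assuming linear interpolation of onset times between anchors and a Gaussian-shaped expectation function. Both the Gaussian shape and `sigma` are my assumptions; the paper’s expectation strength function may be defined differently, and the `candidates` list of (onset time, evidence) pairs is hypothetical.

```python
import math

def expected_onset(score_time, anchor_a, anchor_b):
    """Linearly interpolate a note's audio onset from two anchors,
    each given as a (score_time, audio_time) pair."""
    (sa, ta), (sb, tb) = anchor_a, anchor_b
    frac = (score_time - sa) / (sb - sa)
    return ta + frac * (tb - ta)

def expectation_strength(t, score_time, anchor_a, anchor_b, sigma=0.05):
    """Assumed Gaussian weight (sigma in seconds) centred on the interpolated onset."""
    mu = expected_onset(score_time, anchor_a, anchor_b)
    return math.exp(-0.5 * ((t - mu) / sigma) ** 2)

def refine_onset(candidates, score_time, anchor_a, anchor_b, sigma=0.05):
    """Pick the candidate onset maximizing local evidence times expectation strength."""
    best = max(
        candidates,
        key=lambda c: c[1] * expectation_strength(c[0], score_time, anchor_a, anchor_b, sigma),
    )
    return best[0]
```

For a note halfway (in score time) between anchors at 10.0 s and 12.0 s, `refine_onset([(10.6, 0.9), (11.02, 0.7)], 1.0, (0.0, 10.0), (2.0, 12.0))` prefers 11.02 s: the 10.6 s candidate has stronger local evidence but lies far from the 11.0 s onset implied by the anchors. This also hints at the failure mode above: if the true displacement is large, the expectation pulls the estimate toward the wrong place.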

Understanding Features and Distance Functions for Music Sequence Alignment

Posted by Paul in events, ismir, music information retrieval, research on August 11, 2010

Ozgur Izmirli and Roger Dannenberg

ABSTRACT – We investigate the problem of matching symbolic representations directly to audio based representations for applications that use data from both domains. One such application is score alignment, which aligns a sequence of frames based on features such as chroma vectors and distance functions such as Euclidean distance. Good representations are critical, yet current systems use ad hoc constructions such as the chromagram that have been shown to work quite well. We investigate ways to learn chromagram-like representations that optimize the classification of “matching” vs. “non-matching” frame pairs of audio and MIDI. New representations learned automatically from examples not only perform better than the chromagram representation but they also reveal interesting projection structures that differ distinctly from the traditional chromagram.

Roger and Ozgur present a method for learning features for score alignment. They replace the traditional chromagram feature with a learned projection of the audio spectrum. Results show that the new features work better than chroma.
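The chromagram the authors start from is itself a fixed linear projection of the spectrum, which is why a learned projection can take its place. A minimal sketch, assuming magnitude FFT frames and nearest-semitone binning (the `sr`, `n_fft` and `fmin` defaults are my assumptions): `P` below is the hand-built chroma matrix; the paper’s approach would instead learn the projection from matching and non-matching audio/MIDI frame pairs, which is not shown here.

```python
import numpy as np

def chroma_projection(n_bins, sr=22050, n_fft=2048, fmin=27.5):
    """Fixed 12 x n_bins matrix that sums FFT bin k into its nearest pitch class (0 = C)."""
    P = np.zeros((12, n_bins))
    for k in range(1, n_bins):  # skip the DC bin
        freq = k * sr / n_fft
        if freq < fmin:
            continue
        midi = 69 + 12 * np.log2(freq / 440.0)
        P[int(round(midi)) % 12, k] = 1.0
    return P

def frame_distance(spec_a, spec_b, P):
    """Euclidean distance between unit-normalized projections of two magnitude spectra."""
    a, b = P @ spec_a, P @ spec_b
    a = a / (np.linalg.norm(a) + 1e-12)
    b = b / (np.linalg.norm(b) + 1e-12)
    return float(np.linalg.norm(a - b))
```

Swapping `P` for a learned matrix of the same shape (or a different number of output dimensions) leaves `frame_distance`, and therefore the alignment that consumes it, unchanged; only the projection differs.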

Predicting High-level Music Semantics Using Social Tags via Ontology-based Reasoning


Jun Wang, Xiaoou Chen, Yajie Hu and Tao Feng

ABSTRACT – High-level semantics such as “mood” and “usage” are very useful in music retrieval and recommendation but they are normally hard to acquire. Can we predict them from a cloud of social tags? We propose a semantic identification and reasoning method: Given a music taxonomy system, we map it to an ontology’s terminology, map its finite set of terms to the ontology’s assertional axioms, and then map tags to the closest conceptual level of the referenced terms in WordNet to enrich the knowledge base, then we predict richer high-level semantic information with a set of reasoning rules. We find this method predicts mood annotations for music with higher accuracy, as well as giving richer semantic association information, than alternative SVM-based methods do.

In this paper, the authors use WordNet to map social tags to a professional taxonomy and then use the mapped tags for traditional tagging tasks such as classification and mood identification.
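A toy sketch of the tag-mapping step, assuming a hand-written hypernym table in place of WordNet and a three-term taxonomy (both hypothetical). The paper’s ontology, assertional axioms and reasoning rules are not reproduced; this only shows how free-form tags can be walked up a concept hierarchy to the nearest taxonomy term.

```python
from collections import Counter

# Hypothetical toy hypernym table standing in for WordNet (term -> more general term).
HYPERNYMS = {
    "mellow": "calm",
    "chill": "calm",
    "upbeat": "happy",
    "cheerful": "happy",
    "workout": "exercise",
    "calm": "mood",
    "happy": "mood",
    "exercise": "usage",
}

# Hypothetical terms of a professional music taxonomy.
TAXONOMY = {"calm", "happy", "exercise"}

def map_tag(tag, hypernyms=HYPERNYMS, taxonomy=TAXONOMY):
    """Walk up the hypernym chain until a taxonomy term is reached; None if never."""
    seen = set()
    while tag is not None and tag not in seen:
        if tag in taxonomy:
            return tag
        seen.add(tag)
        tag = hypernyms.get(tag)
    return None

def taxonomy_profile(tags):
    """Count how many of a track's social tags support each taxonomy term."""
    return Counter(t for t in map(map_tag, tags) if t is not None)
```

For example, the tags `["mellow", "chill", "upbeat", "workout", "guitar"]` map to two votes for "calm", one each for "happy" and "exercise", while "guitar" has no path to the taxonomy and is dropped.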

Learning Tags that Vary Within a Song

Posted by Paul in events, ismir, music information retrieval, research on August 11, 2010


Michael I. Mandel, Douglas Eck and Yoshua Bengio

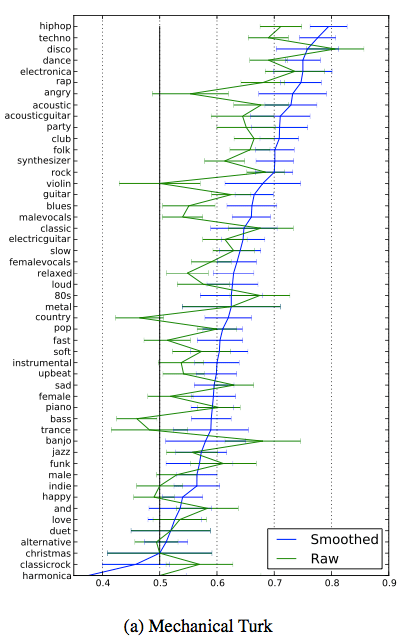

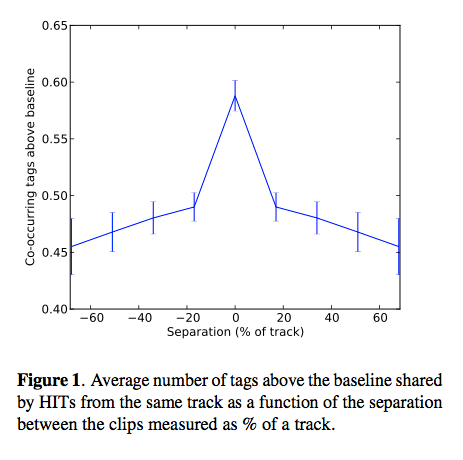

Abstract: This paper examines the relationship between human generated tags describing different parts of the same song. These tags were collected using Amazon’s Mechanical Turk service. We find that the agreement between different people’s tags decreases as the distance between the parts of a song that they heard increases. To model these tags and these relationships, we describe a conditional restricted Boltzmann machine. Using this model to fill in tags that should probably be present given a context of other tags, we train automatic tag classifiers (autotaggers) that outperform those trained on the original data.

Michael enlisted Amazon’s Mechanical Turk to tag music. They paid about a penny per tag. About 11% of the tags were spam. He then looked at co-occurrence data that showed interesting patterns, especially in relation to the distance between clips in a single track.
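The kind of analysis described here, tag agreement as a function of the distance between clips, can be sketched with Jaccard overlap between tag sets. The Jaccard measure and the input format are my assumptions, not necessarily the statistic used in the paper.

```python
from collections import defaultdict
from itertools import combinations

def jaccard(a, b):
    """Overlap between two tag sets (1.0 = identical, 0.0 = disjoint)."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def agreement_by_distance(clips):
    """clips: (clip_index_in_song, tag_set) pairs for one song.
    Returns the mean pairwise Jaccard agreement for each clip distance."""
    totals, counts = defaultdict(float), defaultdict(int)
    for (pos_a, tags_a), (pos_b, tags_b) in combinations(clips, 2):
        d = abs(pos_a - pos_b)
        totals[d] += jaccard(tags_a, tags_b)
        counts[d] += 1
    return {d: totals[d] / counts[d] for d in sorted(totals)}
```

Averaging these per-song dictionaries over a collection would show whether agreement falls off as distance grows, which is the trend the abstract reports.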

Results show that smoothing the data with a Boltzmann machine tends to give better accuracy when tag data is sparse.


Sparse Multi-label Linear Embedding Within Nonnegative Tensor Factorization Applied to Music Tagging

Posted by Paul in events, ismir, music information retrieval, research on August 11, 2010

Yannis Panagakis, Constantine Kotropoulos and Gonzalo R. Arce

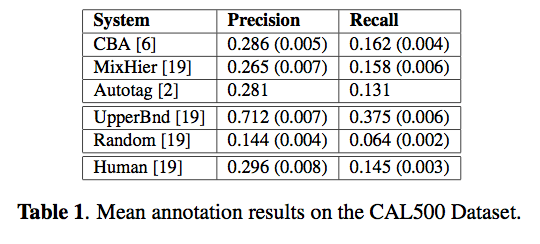

Abstract: A novel framework for music tagging is proposed. First, each music recording is represented by bio-inspired auditory temporal modulations. Then, a multilinear subspace learning algorithm based on sparse label coding is developed to effectively harness the multi-label information for dimensionality reduction. The proposed algorithm is referred to as Sparse Multi-label Linear Embedding Nonnegative Tensor Factorization, whose convergence to a stationary point is guaranteed. Finally, a recently proposed method is employed to propagate the multiple labels of training auditory temporal modulations to auditory temporal modulations extracted from a test music recording by means of the sparse l1 reconstruction coefficients. The overall framework, that is described here, outperforms both humans and state-of-the-art computer audition systems in the music tagging task, when applied to the CAL500 dataset.

This paper gets the ‘Title that rolls off the tongue best’ award. I don’t understand all of the math for this one, but some notes: the wavelet-based features used seem to be good at discriminating at the genre level. He compares the system to Doug Turnbull’s MixHier and to the system that we built at Sun Labs with Thierry, Doug, Francois and myself (Autotagger: A model for predicting social tags from acoustic features on Large Music Databases).
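The abstract’s last step propagates labels “by means of the sparse l1 reconstruction coefficients”. A minimal sketch of that idea, assuming plain feature vectors instead of auditory temporal modulations and ISTA (iterative soft-thresholding) as the l1 solver; the solver, `lam` and the function names are my choices, and the paper’s method may differ in detail.

```python
import numpy as np

def ista(D, x, lam=0.1, n_iter=500):
    """Approximately solve min_c 0.5*||x - D c||^2 + lam*||c||_1 with ISTA."""
    L = np.linalg.norm(D, 2) ** 2  # Lipschitz constant of the smooth term's gradient
    c = np.zeros(D.shape[1])
    for _ in range(n_iter):
        z = c - D.T @ (D @ c - x) / L  # gradient step on the squared error
        c = np.sign(z) * np.maximum(np.abs(z) - lam / L, 0.0)  # soft-threshold (l1 prox)
    return c

def propagate_labels(D, Y, x, lam=0.1):
    """D: (n_features, n_train) with training examples as columns.
    Y: (n_train, n_labels) binary label matrix. Returns per-label scores for x,
    weighting each training example's labels by its sparse coefficient magnitude."""
    w = np.abs(ista(D, x, lam))
    return w @ Y / (w.sum() + 1e-12)
```

Because the l1 penalty drives most coefficients to zero, only the few training recordings that best reconstruct the test features contribute their tags; thresholding the scores then gives the predicted tag set.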

MIREX 2010

Posted by Paul in events, ismir, music information retrieval, research on August 11, 2010

Dr. Downie gave a summary of MIREX 2010 – the evaluation track for MIR (it is like TREC for MIR). Results are here: MIREX-2010 results

Matthias Mauch makes a case for improving chord recognition by making the MIREX tasks harder. He’d like to gently increase the difficulty.

Improving the Generation of Ground Truths Based on Partially Ordered Lists

Posted by Paul in ismir, music information retrieval, research on August 11, 2010


Julián Urbano, Mónica Marrero, Diego Martín and Juan Lloréns

Abstract: Ground truths based on partially ordered lists have been used for some years now to evaluate the effectiveness of Music Information Retrieval systems, especially in tasks related to symbolic melodic similarity. However, there has been practically no meta-evaluation to measure or improve the correctness of these evaluations. In this paper we revise the methodology used to generate these ground truths and disclose some issues that need to be addressed. In particular, we focus on the arrangement and aggregation of the relevant results, and show that it is not possible to ensure lists completely consistent. We develop a measure of consistency based on Average Dynamic Recall and propose several alternatives to arrange the lists, all of which prove to be more consistent than the original method. The results of the MIREX 2005 evaluation are revisited using these alternative ground truths.
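Average Dynamic Recall, the measure the consistency analysis builds on, can be sketched as follows. This follows my understanding of ADR as used in the MIREX symbolic melodic similarity task: at rank i, the relevant set is every item in the ground-truth groups up to and including the group containing the i-th ground-truth item, and recall at each rank is averaged over the first n ranks, n being the ground-truth length. Treat these details, and the function name, as assumptions.

```python
def average_dynamic_recall(groups, results):
    """groups: ground truth as a list of lists (items within a group are tied).
    results: ranked list returned by a system."""
    truth = [item for group in groups for item in group]
    # For each ground-truth position, how many items lie in groups up to and including its own.
    group_end, total = [], 0
    for group in groups:
        total += len(group)
        group_end.extend([total] * len(group))
    n = len(truth)
    score = 0.0
    for i in range(1, n + 1):
        allowed = set(truth[: group_end[i - 1]])
        hits = len(allowed & set(results[:i]))
        score += hits / i
    return score / n
```

For the ground truth `[["a", "b"], ["c"]]`, returning `["b", "a", "c"]` scores 1.0 because order within a group does not matter, while `["c", "a", "b"]` scores 0.5 because "c" is returned before the group it belongs to is reached.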

The current approach of using partially ordered lists to evaluate tasks like melodic similarity may not be the best one:

- They are expensive

- They have some odd results

- They are hard to replicate

- They leave out relevant results

- Inconsistencies among the expert evaluations are not treated properly

The authors propose alternative aggregation rules:

- All: a new group is started if the pivot incipit is significantly different from every incipit in the current group. This should lead to larger groups.

- Any: a new group is started if the pivot incipit is significantly different from any incipit in the current group. This should lead to smaller groups.

- Prev: a new group is started if the pivot incipit is significantly different from the previous one.
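The three rules differ only in which incipits of the current group the pivot is compared against. A minimal sketch, assuming a hypothetical `differs(a, b)` predicate in place of the statistical test on expert judgments that decides significance; the toy mean ranks below are also made up.

```python
def group_incipits(ranked, differs, rule="all"):
    """Split incipits (already in ground-truth order) into groups of equal relevance.
    differs(a, b) -> True if a and b are judged significantly different."""
    groups = [[ranked[0]]]
    for pivot in ranked[1:]:
        current = groups[-1]
        if rule == "all":     # must differ from every incipit in the group: larger groups
            new_group = all(differs(pivot, other) for other in current)
        elif rule == "any":   # differing from a single incipit suffices: smaller groups
            new_group = any(differs(pivot, other) for other in current)
        elif rule == "prev":  # compare only with the immediately preceding incipit
            new_group = differs(pivot, current[-1])
        else:
            raise ValueError(f"unknown rule: {rule}")
        if new_group:
            groups.append([pivot])
        else:
            current.append(pivot)
    return groups

scores = {"a": 1.0, "b": 1.6, "c": 2.4, "d": 3.0}  # hypothetical mean expert ranks

def differs(p, q):
    return abs(scores[p] - scores[q]) >= 1.0

print(group_incipits(["a", "b", "c", "d"], differs, "all"))  # [['a', 'b', 'c', 'd']]
print(group_incipits(["a", "b", "c", "d"], differs, "any"))  # [['a', 'b'], ['c', 'd']]
```

With a one-dimensional score like this, "all" and "prev" coincide; they only diverge when the significance test is not transitive, which is exactly the inconsistency the paper is concerned with.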

The authors applied the alternative ground truths to MIREX 2005, which lowered the measured performance and changed the ranking of several systems.

A Cartesian Ensemble of Feature Subspace Classifiers for Music Categorization

Posted by Paul in ismir, Music, music information retrieval, research on August 11, 2010


Thomas Lidy, Rudolf Mayer, Andreas Rauber, Pedro J. Ponce de León, Antonio Pertusa, and Jose Manuel Iñesta

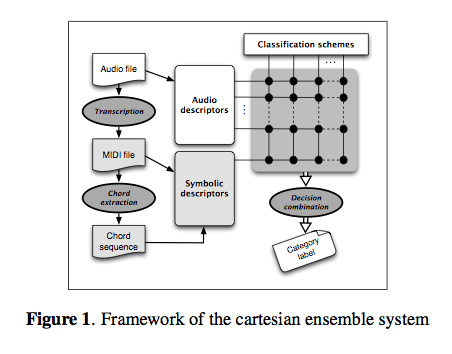

Abstract: We present a cartesian ensemble classification system that is based on the principle of late fusion and feature subspaces. These feature subspaces describe different aspects of the same data set. The framework is built on the Weka machine learning toolkit and able to combine arbitrary feature sets and learning schemes. In our scenario, we use it for the ensemble classification of multiple feature sets from the audio and symbolic domains. We present an extensive set of experiments in the context of music genre classification, based on numerous Music IR benchmark datasets, and evaluate a set of combination/voting rules. The results show that the approach is superior to the best choice of a single algorithm on a single feature set. Moreover, it also releases the user from making this choice explicitly.
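The Cartesian idea, training every learning scheme on every feature subspace and fusing their decisions late, can be sketched without Weka. This is a minimal sketch assuming two hypothetical stand-in classifiers (nearest centroid and 1-nearest-neighbour) written in NumPy and plain majority voting as the combination rule; the paper evaluates several combination rules inside Weka.

```python
from collections import Counter
from itertools import product

import numpy as np

class NearestCentroid:
    """Stand-in learning scheme: assign the class with the closest mean vector."""
    def fit(self, X, y):
        self.labels = sorted(set(y))
        self.centroids = np.array([X[np.array(y) == c].mean(axis=0) for c in self.labels])
        return self

    def predict(self, X):
        d = np.linalg.norm(X[:, None, :] - self.centroids[None, :, :], axis=2)
        return [self.labels[i] for i in d.argmin(axis=1)]

class OneNN:
    """Stand-in learning scheme: copy the label of the closest training example."""
    def fit(self, X, y):
        self.X, self.y = X, list(y)
        return self

    def predict(self, X):
        d = np.linalg.norm(X[:, None, :] - self.X[None, :, :], axis=2)
        return [self.y[i] for i in d.argmin(axis=1)]

def cartesian_ensemble(subspaces, schemes, X_train, y_train, X_test):
    """Train every (feature subspace, scheme) pair, then majority-vote (late fusion)."""
    votes = []
    for cols, make in product(subspaces, schemes):
        model = make().fit(X_train[:, cols], y_train)
        votes.append(model.predict(X_test[:, cols]))
    # zip(*votes) yields, for each test item, the predictions of all ensemble members.
    return [Counter(item_votes).most_common(1)[0][0] for item_votes in zip(*votes)]
```

Here `subspaces` is a list of column-index lists (for example, one per feature set such as rhythm or symbolic descriptors), so two subspaces and two schemes give four ensemble members. The number of members grows multiplicatively, which is consistent with the rather slow execution times noted below.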

An ensemble classification system built on top of Weka:

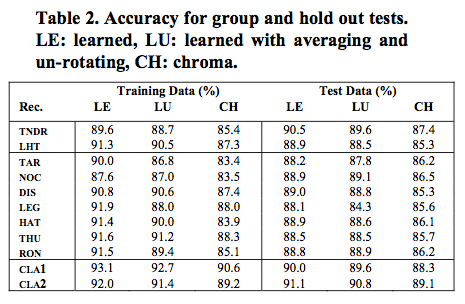

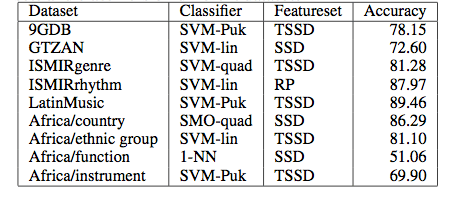

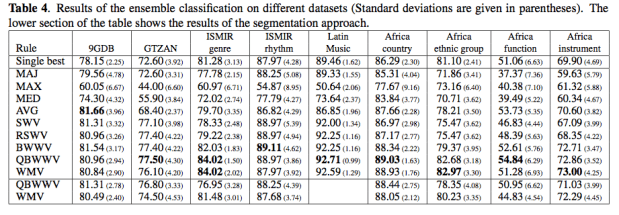

Results, using different datasets, classifiers and feature sets:


Execution times were about 10 seconds per song, so rather slow for large collections.

The ensemble approach delivered superior results by adding a reasonable number of feature sets and classifiers. However, the authors did not find a combination rule that always outperforms all the others.