ON THE APPLICABILITY OF PEER-TO-PEER DATA IN MUSIC INFORMATION RETRIEVAL RESEARCH (pdf)

Noam Koenigstein, Yuval Shavitt, Ela Weinsberg, and Udi Weinsberg

Abstract: Peer-to-Peer (p2p) networks are being increasingly adopted as an invaluable resource for various music information retrieval (MIR) tasks, including music similarity, recommendation and trend prediction. However, these networks are usually extremely large and noisy, which raises doubts regarding the ability to actually extract sufficiently accurate information.

This paper evaluates the applicability of using data originating from p2p networks for MIR research, focusing on partial crawling, inherent noise and localization of songs and search queries. These aspects are quantified using songs collected from the Gnutella p2p network. We show that the power-law nature of the network makes it relatively easy to capture an accurate view of the main-streams using relatively little effort. However, some applications, like trend prediction, mandate collection of the data from the “long tail”, hence a much more exhaustive crawl is needed. Furthermore, we present techniques for overcoming noise originating from user generated content and for filtering non-informative data, while minimizing information loss.

Observation: CF systems tend to outperform content-based systems until you get into the long tail, so to improve CF systems you need more long tail data. This work explores how to get more long tail data by mining p2p networks.

P2P systems have some problems: there are privacy concerns, and data collection is hard. User churn is high, the data is very noisy and sparse, and some users delete content from their shared folders right away.

P2P mining: shared folders are useful for similarity; search queries are useful for trends.

There are lots of p2p challenges and steps: getting IP addresses for p2p nodes, filtering out non-musical content, geo-identification, and anonymization.

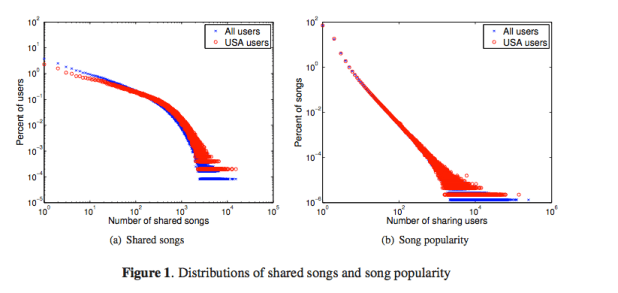
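Two of the steps listed above, filtering out non-musical content and anonymization, can be sketched roughly as follows. This is only an illustration: the extension list, the salted SHA-256 scheme and the names `is_music`, `anonymize` and `clean_records` are my assumptions, not the method described in the talk.

```python
import hashlib
from pathlib import PurePosixPath

# Assumed list of audio extensions; the talk did not say which ones were used.
AUDIO_EXTENSIONS = {".mp3", ".wma", ".ogg", ".flac", ".m4a", ".wav"}

def is_music(filename):
    """Keep a shared file only if its extension looks like audio."""
    return PurePosixPath(filename).suffix.lower() in AUDIO_EXTENSIONS

def anonymize(ip, salt):
    """Replace an IP address with a salted hash (hypothetical scheme)."""
    return hashlib.sha256((salt + ip).encode("utf-8")).hexdigest()[:16]

def clean_records(records, salt):
    """Drop non-music files and replace each IP with an anonymous user id."""
    return [(anonymize(ip, salt), name) for ip, name in records if is_music(name)]

# Made-up crawl records: (ip, filename). IPs are from a documentation range.
records = [
    ("192.0.2.1", "Radiohead - Creep.mp3"),
    ("192.0.2.1", "setup.exe"),
    ("192.0.2.7", "holiday.JPG"),
    ("192.0.2.7", "Madonna - Vogue.WMA"),
]
cleaned = clean_records(records, salt="example-salt")
```

Geo-identification would have to happen before the IP is hashed, since the hash cannot be mapped back to a location.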

Dealing with sparsity: 1.2 million users, but average of 1 artist/song data point for each artist/song relation. These graphs show song popularity in shared folders. They use this data to help filter out non-typical users.

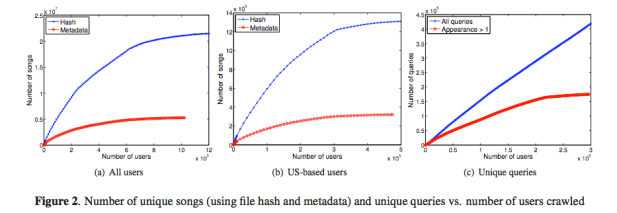

Identifying songs: use the file hash, but of course many songs have many different digital copies, so they also look at the (noisy) metadata.
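To make the hash-versus-metadata point concrete, here is a minimal sketch of grouping different file hashes under one song by normalizing the artist and title strings. The normalization rules and the function names are assumptions for illustration; the talk did not spell out how the metadata was cleaned.

```python
import re
import unicodedata
from collections import defaultdict

def normalize(text):
    """Lowercase, strip accents, punctuation and extra whitespace."""
    text = unicodedata.normalize("NFKD", text)
    text = "".join(ch for ch in text if not unicodedata.combining(ch))
    text = re.sub(r"[^a-z0-9 ]+", " ", text.lower())
    return " ".join(text.split())

def group_by_metadata(files):
    """Map a normalized (artist, title) key to the set of file hashes seen for it."""
    groups = defaultdict(set)
    for file_hash, artist, title in files:
        groups[(normalize(artist), normalize(title))].add(file_hash)
    return dict(groups)

# Made-up records: (file hash, artist tag, title tag).
files = [
    ("hash-a", "Beyoncé", "Halo"),
    ("hash-b", "beyonce", "  HALO "),
    ("hash-c", "Beyonce", "Halo!"),
    ("hash-d", "Radiohead", "Creep"),
]
songs = group_by_metadata(files)
```

Here four distinct hashes collapse to two songs. Real tags are noisier than this (misspellings, swapped fields, missing values), so exact matching on normalized strings is only a starting point.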

Songs Discovery Rate

Once you reach about 1/3 of the network you’ve found most of the tracks if you use metadata for resolving. If you use the hashes, you need to crawl 70% of the network.

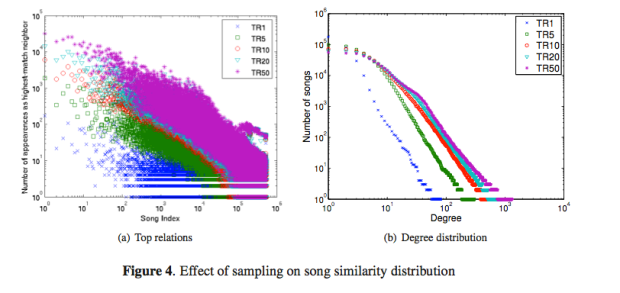
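A toy simulation shows why a partial crawl can already find a large share of the distinct songs when popularity is heavily skewed. Everything here is assumed for illustration (Zipf-like weights of 1/rank, a fixed folder size, the parameter values); it does not reproduce the paper's numbers or its crawl.

```python
import random
from itertools import accumulate

def discovery_curve(num_users=2000, num_songs=5000, songs_per_user=20,
                    steps=10, seed=0):
    """Return (fraction of users crawled, fraction of songs discovered) pairs."""
    rng = random.Random(seed)
    # Zipf-like popularity: the song at rank r is drawn with weight 1/r.
    cum_weights = list(accumulate(1.0 / rank for rank in range(1, num_songs + 1)))
    folders = [
        set(rng.choices(range(num_songs), cum_weights=cum_weights, k=songs_per_user))
        for _ in range(num_users)
    ]
    # Songs that exist in at least one shared folder.
    total = len(set().union(*folders))
    seen = set()
    curve = []
    for step in range(1, steps + 1):
        start = (step - 1) * num_users // steps
        end = step * num_users // steps
        for folder in folders[start:end]:
            seen |= folder
        curve.append((end / num_users, len(seen) / total))
    return curve

curve = discovery_curve()
for crawled, discovered in curve:
    print(f"crawled {crawled:.0%} of users, discovered {discovered:.0%} of songs")
```

The curve rises quickly and then flattens: popular songs show up almost immediately, while the rare ones in the tail only appear as the crawl approaches the whole network.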

Using shared folders for similarity

There’s a preferential attachment model for popular songs
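As a rough illustration of what preferential attachment means here, the sketch below runs a generic rich-get-richer process: each new shared copy is either a brand-new song (with probability `p_new`) or a copy of an existing song chosen in proportion to how many copies it already has. This is a textbook-style process with assumed parameters, not necessarily the model presented in the talk.

```python
import random

def simulate_shares(num_events=10000, p_new=0.1, seed=0):
    """Return per-song copy counts, most popular first."""
    rng = random.Random(seed)
    copies = [0]          # one entry per shared copy, holding its song id
    num_songs = 1
    for _ in range(num_events):
        if rng.random() < p_new:
            # A song nobody shared before enters the network.
            copies.append(num_songs)
            num_songs += 1
        else:
            # Picking a random existing copy selects a song with
            # probability proportional to its current copy count.
            copies.append(rng.choice(copies))
    counts = [0] * num_songs
    for song in copies:
        counts[song] += 1
    return sorted(counts, reverse=True)

counts = simulate_shares()
print("top 5 songs:", counts[:5])
print("median song:", counts[len(counts) // 2])
```

A few songs end up with a very large number of copies while most have only a handful, which is the long-tailed shape the shared-folder data shows.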

Conclusion: P2P data is a good source of long tail data, but dealing with the noisy data is hard. The p2p data is especially good for building similarity models localized to countries. A good talk from someone with lots of experience with p2p stuff.