Posts Tagged survey

Some preliminary Playlist Survey results

I’m conducting a somewhat informal survey on playlisting to see how playlists created by an expert radio DJ compare to those generated by a playlisting algorithm and by a random number generator. So far, nearly 200 people have taken the survey (Thanks!). Already I’m seeing some very interesting results. Here are a few tidbits (look for a more thorough analysis once the survey is complete).

People expect human DJs to make better playlists:

The survey asks people to try to identify the origin of a playlist (human expert, algorithm or random) and also rate each playlist. We can look at the ratings people give to playlists based on what they think the playlist origin is to get an idea of people’s attitudes toward human vs. algorithm creation.

| Predicted Origin | Rating |
|------------------|--------|
| Human expert     | 3.4    |
| Algorithm        | 2.7    |
| Random           | 2.1    |

We see that people expect humans to create better playlists than algorithms and that algorithms should give better playlists than random numbers. Not a surprising result.

Human DJs don’t necessarily make better playlists:

Now let’s look at how people rated playlists based on the actual origin of the playlists:

| Actual Origin | Rating |
|---------------|--------|
| Human expert  | 2.5    |
| Algorithm     | 2.7    |
| Random        | 2.6    |

These results are rather surprising. Algorithmic playlists are rated highest, while human-expert-created playlists are rated lowest, even lower than those created by the random number generator. There are lots of caveats here: I haven’t done any significance tests yet to see if the differences really matter, the survey size is still rather small, and the survey doesn’t present real-world playlist listening conditions. Nevertheless, the results are intriguing.
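For readers who want to try this kind of analysis themselves, here is a minimal Python sketch of how the two tables above could be computed, along with one possible significance check (a permutation test on the difference of two group means). Everything in it is an assumption made for illustration: the `responses` rows are invented and are not the survey data, the names `mean_rating_by` and `permutation_p_value` are hypothetical, and the permutation test is just one reasonable choice, not necessarily the test that will be used in the full analysis.

```python
import random
from collections import defaultdict
from statistics import mean

# Hypothetical responses: (actual origin, guessed origin, rating on a 1-5 scale).
# These rows are made up for illustration and are NOT the survey data.
responses = [
    ("human", "algorithm", 2),
    ("human", "human", 4),
    ("algorithm", "human", 3),
    ("algorithm", "random", 2),
    ("random", "human", 4),
    ("random", "random", 1),
]


def mean_rating_by(rows, key_index):
    """Average rating per origin label (key_index 0 = actual, 1 = guessed)."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[key_index]].append(row[2])
    return {label: mean(ratings) for label, ratings in groups.items()}


def permutation_p_value(a, b, trials=10_000, seed=0):
    """Two-sided permutation test on the difference of two group means."""
    rng = random.Random(seed)
    observed = abs(mean(a) - mean(b))
    pooled = list(a) + list(b)
    hits = 0
    for _ in range(trials):
        # Reshuffle the pooled ratings and split them into two groups
        # of the original sizes, as if the labels carried no information.
        rng.shuffle(pooled)
        diff = abs(mean(pooled[:len(a)]) - mean(pooled[len(a):]))
        if diff >= observed:
            hits += 1
    return hits / trials


by_actual = mean_rating_by(responses, 0)   # ratings grouped by true origin
by_guess = mean_rating_by(responses, 1)    # ratings grouped by guessed origin
```

A large p-value from `permutation_p_value` would suggest that a gap such as 2.5 vs. 2.7 could easily arise by chance, which is the caveat raised above.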

I’d like to collect more survey data to flesh out these results. So if you haven’t already, please take the survey:

The Playlist Survey

Thanks!

The Playlist Survey

Playlists have long been a big part of the music experience. But making a good playlist is not always easy. We can spend lots of time crafting the perfect mix, but more often than not, in this iPod age, we are likely to toss on a pre-made playlist (such as an album), have the computer generate a playlist (with something like iTunes Genius) or (more likely) we’ll just hit the shuffle button and listen to songs at random. I pine for the old days when Radio DJs would play well-crafted sets – mixes of old favorites and the newest, undiscovered tracks – connected in interesting ways. These professionally created playlists magnified the listening experience. The whole was indeed greater than the sum of its parts.

The tradition of the old-style Radio DJ continues on Internet Radio sites like Radio Paradise. RP founder/DJ Bill Goldsmith says of Radio Paradise: “Our specialty is taking a diverse assortment of songs and making them flow together in a way that makes sense harmonically, rhythmically, and lyrically — an art that, to us, is the very essence of radio.” Anyone who has listened to Radio Paradise will come to appreciate the immense value that a professionally curated playlist brings to the listening experience.

I wish I could put Bill Goldsmith in my iPod and have him craft personalized playlists for me – playlists that make sense harmonically, rhythmically and lyrically, and customized to my music taste, mood and context. That, of course, will never happen. Instead I’m going to rely on computer algorithms to generate my playlists. But how good are computer-generated playlists? Can a computer really generate playlists as good as Bill Goldsmith’s, with his decades of knowledge about good music and his understanding of how to fit songs together?

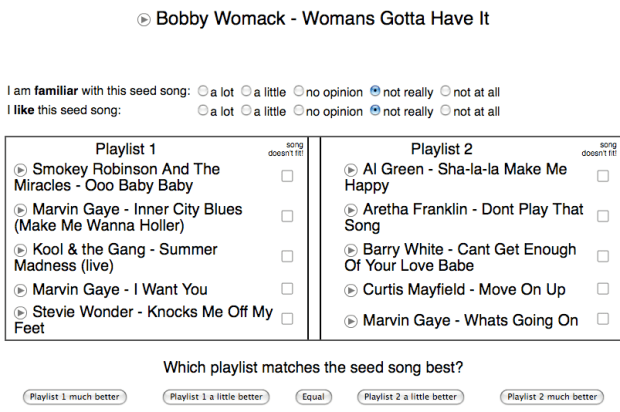

To help answer this question, I’ve created a Playlist Survey that will collect information about the quality of playlists generated by a human expert, a computer algorithm and a random number generator. The survey presents a set of playlists, and the subject rates each playlist in terms of its quality and also tries to guess whether the playlist was created by a human expert, created by a computer algorithm or generated at random.

Bill Goldsmith and Radio Paradise have graciously contributed 18 months of historical playlist data from Radio Paradise to serve as the expert playlist data. That’s nearly 50,000 playlists and a quarter million song plays spread over nearly 7,000 different tracks.

The Playlist Survey also serves as a Radio DJ Turing test. Can a computer algorithm (or a random number generator for that matter) create playlists that people will think are created by a living and breathing music expert? What will it mean, for instance, if we learn that people really can’t tell the difference between expert playlists and shuffle play?
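As a rough illustration of how the Turing-test question could be scored, the sketch below compares listeners’ guesses with the true origin of each playlist. It is only a sketch under stated assumptions: the `guesses` pairs are invented for the example and are not survey data, and the helper names `confusion_counts`, `accuracy` and `judged_human_rate` are hypothetical. With three possible origins, guessing blindly would be right about a third of the time, so that is the baseline to beat.

```python
from collections import Counter

# Hypothetical (actual origin, guessed origin) pairs, invented for illustration.
guesses = [
    ("human", "human"),
    ("human", "algorithm"),
    ("algorithm", "human"),
    ("algorithm", "algorithm"),
    ("random", "human"),
    ("random", "random"),
]

# Accuracy expected from blind guessing among three origins.
CHANCE = 1 / 3


def confusion_counts(pairs):
    """Count how often each (actual, guessed) combination occurs."""
    return Counter(pairs)


def accuracy(pairs):
    """Fraction of guesses that match the actual origin."""
    correct = sum(1 for actual, guess in pairs if actual == guess)
    return correct / len(pairs)


def judged_human_rate(pairs, actual_origin):
    """Share of playlists from `actual_origin` that were guessed to be human-made."""
    guessed = [guess for actual, guess in pairs if actual == actual_origin]
    return guessed.count("human") / len(guessed)


overall = accuracy(guesses)
fooled_by_algorithm = judged_human_rate(guesses, "algorithm")
fooled_by_random = judged_human_rate(guesses, "random")
```

If `overall` sits close to `CHANCE`, or if `fooled_by_random` is about as high as the rate for genuinely human playlists, listeners are not reliably telling expert playlists from shuffle play.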

Ben Fields and I will offer the results of this Playlist Survey when we present Finding a path through the Jukebox – The Playlist Tutorial – at ISMIR 2010 in Utrecht in August. I’ll also follow up with detailed posts about the results here in this blog after the conference. I invite all of my readers to spend 10 to 15 minutes to take The Playlist Survey. Your efforts will help researchers better understand what makes a good playlist.

Take the Playlist Survey

Help scientists build better playlists

Luke Barrington, a Music Information Retrieval researcher at UCSD, is trying to improve the state of the art in automatic playlist generation. He’s conducting a survey and he needs your help.

If you are interested in helping out, take the survey.

Here are the details from Luke:

With music similarity sites like Pandora.com or iTunes’ Genius feature that recommends playlists, based on a song that we like, our MIR domain of music similarity and recommendation is finding a mass audience. But are these systems any good? Could we make something better?

This is what I’m trying to figure out and I would like to include your opinion in my analysis.

We are conducting an experiment where you can listen to playlists that are recommended, based on a “seed song”, and evaluate these recommendations. We are comparing different recommendation systems, including Genius, artist similarity and tag-based similarity. Most importantly, we’re trying to discover the important factors that go into creating and evaluating a playlist.

If you’d like to participate in the experiment by listening to and evaluating some playlists, please go to:

http://theremin.ucsd.edu/playlist/

As an incentive, we’re offering a $20 iTunes gift card to whoever rates the most playlists (but it’s about quality, not quantity!)

To learn more, ask questions or make suggestions, feel free to drop me a line.

Thanks for your help,

Luke Barrington,

Computer Audition Laboratory

U.C. San Diego

van.ucsd.edu