Archive for category web services

The Echo Nest gets ready for Boston Music Hack Day

Posted by Paul in code, java, Music, The Echo Nest, web services on November 19, 2009

We’ve been extremely busy this week at the Echo Nest getting ready for the Boston Music Hack Day. Not only have we been figuring out menus, panel room assignments, and dealing with a waitlist, we’ve also been releasing a set of new API features. Here’s a quick rundown of what we’ve done:

- get_images – a frequent request from developers – we now have an API method that will let you get images for an artist. Note that we are releasing this method as a sneak preview for the hack day – we have images for over 60 thousand artists, but we will be aggressively adding more images over the next few weeks (60 thousand artists is a lot of artists, but we’d like to have lots more). We’ll also be drawing images from many more sources. The results of get_images are already good: 95% of the time you’ll get images. Over the next few weeks, the results will get even better.

- get_biographies – another frequent request from developers – we now have a get_biographies API method that will return a set of artist biographies for any artist. We currently have biographies for about a quarter million artists – and just as with get_images – we are working hard to expand the breadth and depth of this coverage. Nevertheless, with coverage for a quarter million artists, 99.99% of the time when you ask for a biography we’ll have it.

- get_similar – we’ve expanded the number of similar artists you can get back from get_similar from 15 to 100. This gives you lots more info for building playlisting and music discovery apps.

- buckets – one issue that our developers have had is that filling out info on an artist often took a number of calls to the Echo Nest – one to get similars, one to get audio, one for video, familiarity, hotttnesss etc. To fill out an artist page it could take half a dozen calls. To reduce the number of calls needed to get artist information we’ve added a ‘bucket’ parameter to the search_artist, get_similar and get_profile calls. The bucket parameter allows you to specify which additional artist info should be returned in the call. You can specify ‘audio,’ ‘biographies,’ ‘blogs,’ ‘familiarity,’ ‘hotttnesss,’ ‘news,’ ‘reviews,’ ‘urls,’ ‘images’ or ‘video’ and whenever you get artist data back you’ll get the specified info included. For example, the call:

http://developer.echonest.com/api/get_profile
    ?api_key=EHY4JJEGIOFA1RCJP
    &id=music://id.echonest.com/~/AR/ARH6W4X1187B99274F
    &version=3
    &bucket=familiarity
    &bucket=hotttnesss

will return an artist block that looks like this:

<artist>
    <name>Radiohead</name>
    <id>music://id.echonest.com/~/AR/ARH6W4X1187B99274F</id>
    <familiarity>0.899230928024</familiarity>
    <hotttnesss>0.847409181874</hotttnesss>
</artist>
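As a rough sketch of how a client might put the bucket parameter to work, the Java below assembles a get_profile URL with one bucket parameter per requested item, then pulls a numeric field out of an artist block like the one above. The names BucketSketch, buildProfileUrl and readNumber are made up for illustration and are not part of the Echo Nest Java client. The sketch also assumes the API accepts a URL-encoded id value.

```java
import java.io.StringReader;
import java.net.URLEncoder;
import java.nio.charset.StandardCharsets;
import java.util.List;
import javax.xml.parsers.DocumentBuilderFactory;
import org.w3c.dom.Document;
import org.xml.sax.InputSource;

// Illustrative helper only; not part of the Echo Nest Java client.
class BucketSketch {

    // Base URL taken from the example call in the post.
    static final String GET_PROFILE = "http://developer.echonest.com/api/get_profile";

    // Builds a get_profile URL, repeating the bucket parameter once per requested bucket.
    static String buildProfileUrl(String apiKey, String artistId, List<String> buckets) {
        StringBuilder url = new StringBuilder(GET_PROFILE);
        url.append("?api_key=").append(encode(apiKey));
        url.append("&id=").append(encode(artistId));
        url.append("&version=3");
        for (String bucket : buckets) {
            url.append("&bucket=").append(encode(bucket));
        }
        return url.toString();
    }

    // Assumption: the API accepts percent-encoded query values.
    static String encode(String value) {
        return URLEncoder.encode(value, StandardCharsets.UTF_8);
    }

    // Reads one numeric child element (for example familiarity) from an artist block.
    static double readNumber(String artistXml, String element) {
        try {
            Document doc = DocumentBuilderFactory.newInstance()
                    .newDocumentBuilder()
                    .parse(new InputSource(new StringReader(artistXml)));
            String text = doc.getElementsByTagName(element).item(0).getTextContent();
            return Double.parseDouble(text.trim());
        } catch (Exception e) {
            // Covers malformed XML, a missing element and a non-numeric value.
            throw new IllegalArgumentException("Could not read <" + element + ">", e);
        }
    }
}
```

Calling buildProfileUrl with the buckets "familiarity" and "hotttnesss" gives a URL that ends in &bucket=familiarity&bucket=hotttnesss, so a single request can stand in for two separate calls.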

There’s another new feature that we are starting to roll out. It’s called Echo Source – it allows the developer to get content (such as images, audio, video etc.) based upon license info. Echo Source is a big deal and deserves a whole post – but that’s going to have to wait until after Music Hack Day. Suffice it to say that with Echo Source you’ll have a new level of control over what content the Echo Nest API returns.

We’ve updated our Java and Python libraries to support the new calls. So grab yourself an API key and start writing some music apps.

Spotifying over 200 Billboard charts

Posted by Paul in code, data, fun, web services on November 8, 2009

Yesterday, I Spotified the Billboard Hot 100 – making it easy to listen to the charts. This morning I went one step further and Spotified all of the Billboard Album and Singles charts.

The Spotified Billboard Charts

That’s 128 singles charts (which includes charts like Luxembourg Digital Songs, Hot Mainstream R&B/Hip-Hop Song and Hot Ringtones ) and 83 album charts including charts like Top Bluegrass Albums, Top Cast Albums and Top R&B Catalog Albums.

In these 211 charts you’ll find 6,482 Spotify tracks, 2,354 of them unique (some tracks, like Miley Cyrus’s ‘The Climb’, appear on many charts).

Building the charts stretches the limits of the Billboard API (only 1,500 calls allowed per day!), and it stretches my patience too (making about 10K calls to the Spotify API while trying not to exceed the rate limit means it takes a couple of hours to resolve all the tracks). Nevertheless, it was a fun little project. And it shows off the Spotify catalog quite well. For popular western music they have really good coverage.
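About 10K calls spread over a couple of hours works out to roughly one call every 720 ms (7,200,000 ms / 10,000). Here’s a minimal sketch of a pacer that spaces calls out that way. The CallPacer class and the 720 ms figure are assumptions for illustration. The actual Spotify rate limit isn’t stated here, so the interval would need tuning to whatever the service documents.

```java
// Illustrative pacing helper; the class and method names are made up for this sketch.
class CallPacer {
    private final long minIntervalMillis;
    // Earliest time (in ms) at which the next call may go out.
    private long nextSlotMillis = Long.MIN_VALUE;

    CallPacer(long minIntervalMillis) {
        this.minIntervalMillis = minIntervalMillis;
    }

    // Returns how long the caller should wait before its next call and
    // reserves that slot, so the call after it is pushed back by one interval.
    long reserve(long nowMillis) {
        long slot = Math.max(nowMillis, nextSlotMillis);
        nextSlotMillis = slot + minIntervalMillis;
        return slot - nowMillis;
    }

    // Convenience wrapper that sleeps against the wall clock.
    void pause() throws InterruptedException {
        long wait = reserve(System.currentTimeMillis());
        if (wait > 0) {
            Thread.sleep(wait);
        }
    }
}
```

A resolver loop would call pause() before each request. Keeping the timing logic in reserve() makes it easy to check without actually sleeping.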

Requests for the Billboard API: Please increase the usage limit by 10 times. 1,500 calls per day is really limiting, especially when trying to debug a client library.

Requests for the Spotify API: Please, Please Please!!! – make it possible to create and modify Spotify playlists via web services.

The Billboard Hot 100. In Spotify.

Posted by Paul in code, fun, web services on November 7, 2009

Inspired by Oscar’s 1001 Albums You Must Hear Before You Die …. in Spotify, I put together an app that gets the top charts from Billboard (using the nifty Billboard API) and resolves each track to a Spotify ID – giving you a top 100 chart that you can play.

The Billboard Hot 100 in Spotify

Here’s the Top 10:

- I Gotta Feeling by The Black Eyed Peas
  Weeks on chart: 16, Peak: 1

- Down by Jay Sean Lil Wayne
  Weeks on chart: 13, Peak: 2

- Party In The U.S.A. by Miley Cyrus
  Weeks on chart: 7, Peak: 2

- Run This Town by Jay-Z, Rihanna & Kanye West
  Weeks on chart: 9, Peak: 2

- Whatcha Say by Jason DeRulo
  Weeks on chart: 7, Peak: 5

- You Belong With Me by Taylor Swift
  Weeks on chart: 23, Peak: 2

- Paparazzi by Lady Gaga
  Weeks on chart: 5, Peak: 7

- Use Somebody by Kings Of Leon
  Weeks on chart: 35, Peak: 4

- Obsessed by Mariah Carey
  Weeks on chart: 12, Peak: 7

- Empire State Of Mind by Jay-Z + Alicia Keys
  Weeks on chart: 3, Peak: 5

Note that the Billboard API purposely offers up slightly stale charts, so this is really the top 100 of a few weeks ago. I never listen to the Top 100, and I hadn’t heard of 50% of the artists so listening to the Billboard Top 100 was quite enlightening. I was surprised at how far removed the Top 100 is from the music that I (and everyone I know) listen to every day.

To build the list I used my JSpot client and a (yet to be released) Java client for the Billboard API. (If you are interested in this API, let me know and I’ll stick it up on google code). Of course it’d be really nifty if you could get and listen to a chart for a given week (i.e. let me listen to the Billboard chart for the week that I graduated from High School). Sounds like something to do for Boston Music Hackday.

Update: I’ve made another list that is a little bit more in line with my own music tastes:

The Spotified Billboard Top Modern Rock/Alternative Albums

Where is my JSpot?

Posted by Paul in code, fun, Music, web services on November 3, 2009

I like Spotify. I like Java. So I combined them. Here’s a Java client for the new Spotify metadata API: JSpot

This client lets you do things like search for a track by name and get the Spotify ID for the track so you can play the track in Spotify. This is useful for all sorts of things like building web apps that use Spotify to play music, or perhaps to build a Playdar resolver so you can use Spotify and Playdar together.

Here’s some sample code that prints out the popularity and spotify ID for all versions of Weezer’s ‘My Name Is Jonas’.

Spotify spotify = new Spotify();
Results<Track> results = spotify.searchTrack("Weezer", "My name is Jonas");
for (Track track : results.getItems()) {
    System.out.printf("%.2f %s \n", track.getPopularity(), track.getId());
}

This prints out:

If you have Spotify and you click on those links, and those tracks are available in your locale you should hear Weezer’s nerd anthem.
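If you want to turn an ID into something clickable on a web page, here’s a small sketch. It assumes the ID comes back as a Spotify URI of the form spotify:track:<id> and maps it to the matching open.spotify.com link. SpotifyLinks and toHttpLink are hypothetical names and are not part of JSpot.

```java
// Illustrative helper only; not part of JSpot.
class SpotifyLinks {

    // Converts a URI such as spotify:track:<id> into an http://open.spotify.com/track/<id> link.
    static String toHttpLink(String spotifyUri) {
        String[] parts = spotifyUri.split(":");
        if (parts.length != 3 || !parts[0].equals("spotify")) {
            throw new IllegalArgumentException("Not a spotify:<type>:<id> URI: " + spotifyUri);
        }
        return "http://open.spotify.com/" + parts[1] + "/" + parts[2];
    }
}
```

The same mapping works for album and artist URIs, since only the middle segment changes.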

You can search for artists, albums and tracks, and you can get all sorts of information back, such as release dates for albums, countries where the music can be played, track length, and popularity for artists, tracks and albums. It is very much a 0.1 release. The search functionality is complete so it’s quite useful, but I haven’t implemented the ‘lookup’ methods yet. There are some javadocs. There’s a jar file: jspot.jar. And it is all open source: jspot at google code.

Who’s going to Boston Music Hackday?

Posted by Paul in events, fun, Music, The Echo Nest, web services on October 21, 2009

Look at all the companies and organizations going to Music Hack Day.

The Echo Nest

SoundCloud

Indaba Music

Harmonix

Amie Street

8tracks

Playdar

Bandsintown

Tourfilter

NPR

Boxee

Sonos

Aviary

Conduit Labs

Topspin Media

Noteflight

Dorkbot

Libre.fm

It promises to be a really fun weekend. If you are interested in hacking music and working with the folks that are building the celestial jukebox, make sure you sign up. Slots are going fast. There’s one guy I’d like to get to come to the hack day. I’m sure he’d be fascinated with all that goes on.

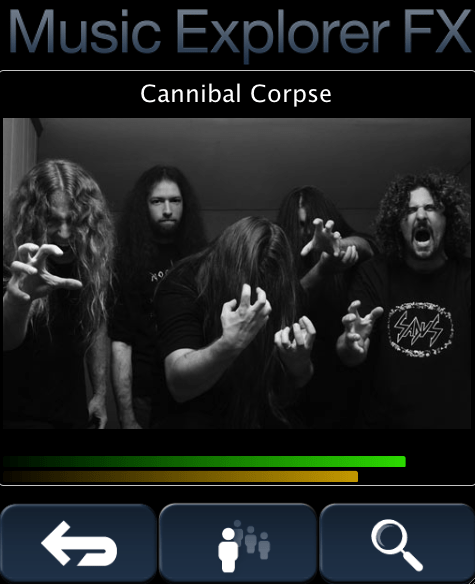

Music Explorer FX – Mobile Edition

Posted by Paul in java, Music, recommendation, The Echo Nest, web services on October 21, 2009

Sten has created a music discovery application that runs on mobile devices. The application shows similar artists using Echo Nest data. You can read about the app and give it a try (it runs on a desktop too) on Sten’s Blog: Music Explorer FX Mobile Edition

Installing Playdar

Posted by Paul in code, fun, Music, The Echo Nest, web services on October 18, 2009

A few people have asked me the steps to go through to install playdar. Official instructions are here: Playdar source code. This is what I did to get it running on my Mac:

- Download and install XCode from Apple

- Download, build and install Erlang

- Install MacPorts if you haven’t already done so

- Download and install git

- Install Taglib

- Grab the latest Playdar source:

git clone git://github.com/RJ/playdar-core.git

- Build it by typing ‘make’ at the top level

- Copy etc/playdar.conf.example to etc/playdar.conf

- If you want to include the Echo Nest resolver do these bits:

- Get an Echo Nest API key from here: developer.echonest.com

- Download and install pyechonest (the python client for the Echo Nest library):

- Add your Echo Nest API key to echonest-resolver.py (at around line 22)

- Make sure the echonest-resolver.py is executable (chmod +x path/to/contrib/echonest-resolver.py)

- Edit etc/playdar.conf and add the path to the resolver in the scripts list. Lines 22-26 should look something like this:

{scripts,[
    "/Users/plamere/tools/playdar-core/contrib/echonest/echonest-resolver.py"
    %"/path/to/a/resolver/script1.py",
    %"/path/to/a/resolver/script2.py"
]}.

- If you want to enable p2p sharing remove “p2p” from the module blacklist in the playdar.conf (around line 59)

- start Playdar with:

./start-dev.sh

- To add your local music to playdar – in a separate window type:

./playdarctl start-debug

./playdarctl scan /path/to/your/music

- At this point, playdar should be running. You can check its status by going to:

http://localhost:60210/

- Try Playdar by going to http://www.playdar.org/demos/search.html. Click the ‘connect’ button to connect to Playdar – then search for a track – if Playdar finds it, it should appear in the search results. Start listening to music. Then visit Playlick and start building playlists.

- If p2p is enabled you can add a friend’s music collection to Playdar by typing this into the Erlang console window:

p2p_router:connect("hostname.example.com", 3389).

That’s a long way to go to get Playdar installed – so it is still only for the highly motivated, but people are working on making this easy – so if you aren’t ready to spend an hour tinkering with installs, wait a few days and there will be an easier way to install it all.
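If you’d rather check from code than from a browser that Playdar came up, something like the following sketch might do. It assumes only that Playdar answers a plain HTTP GET at the status URL given in the steps above. PlaydarCheck and isReachable are made-up names, not part of Playdar.

```java
import java.io.IOException;
import java.net.HttpURLConnection;
import java.net.URI;

// Illustrative helper only; not part of Playdar.
class PlaydarCheck {

    // Returns true if an HTTP GET to the given URL answers with a 2xx status,
    // and false if the URL is malformed, unreachable, or answers with an error.
    static boolean isReachable(String url, int timeoutMillis) {
        try {
            HttpURLConnection conn =
                    (HttpURLConnection) URI.create(url).toURL().openConnection();
            conn.setConnectTimeout(timeoutMillis);
            conn.setReadTimeout(timeoutMillis);
            conn.setRequestMethod("GET");
            int status = conn.getResponseCode();
            conn.disconnect();
            return status >= 200 && status < 300;
        } catch (IOException | IllegalArgumentException | ClassCastException e) {
            return false;
        }
    }
}
```

For example, PlaydarCheck.isReachable("http://localhost:60210/", 2000) should come back true once start-dev.sh has finished starting up, and false if nothing is listening on that port.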

Updated Java client for the Echo Nest API

Posted by Paul in code, java, Music, The Echo Nest, web services on October 15, 2009

We’ve pushed out a new version of the open source Java client for the Echo Nest API. The new version provides support for the different versions of the Echo Nest analyzer. You can use the traditional, but somewhat temperamental version 1 of the analyzer, or the spiffy new, ultra-stable version 3 of the analyzer. By default, the Java client uses the new analyzer version, but if you need your application to work exactly the same way that it did six months ago, you can always use the older version.

Here’s a bit of Java code that will print out the tempo of all the songs in a directory:

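// Prints the tempo of every MP3 file in the given directory.
void showBPMS(File dir) throws EchoNestException {
    TrackAPI trackAPI = new TrackAPI();
    File[] files = dir.listFiles();
    if (files == null) {
        return; // dir is not a directory or could not be read
    }
    for (File f : files) {
        if (f.getAbsolutePath().toLowerCase().endsWith(".mp3")) {
            // Upload the track and keep the ID that comes back.
            String id = trackAPI.uploadTrack(f, true);
            System.out.printf("Tempo %6.2f %s\n",
                    trackAPI.getTempo(id).getValue(), f.getAbsoluteFile());
        }
    }
}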

Running this code on a folder containing the new Breaking Benjamin album yields this output:

Tempo  85.57 /Users/plamere/Music/Amazon MP3/Breaking Benjamin/Dear Agony/01 - Fade Away.mp3
Tempo 108.01 /Users/plamere/Music/Amazon MP3/Breaking Benjamin/Dear Agony/02 - I Will Not Bow.mp3
Tempo 168.81 /Users/plamere/Music/Amazon MP3/Breaking Benjamin/Dear Agony/03 - Crawl.mp3
Tempo 156.75 /Users/plamere/Music/Amazon MP3/Breaking Benjamin/Dear Agony/04 - Give Me A Sign.mp3
Tempo  85.51 /Users/plamere/Music/Amazon MP3/Breaking Benjamin/Dear Agony/05 - Hopeless.mp3
Tempo  68.34 /Users/plamere/Music/Amazon MP3/Breaking Benjamin/Dear Agony/06 - What Lies Beneath.mp3
Tempo 116.94 /Users/plamere/Music/Amazon MP3/Breaking Benjamin/Dear Agony/07 - Anthem Of The Angels.mp3
Tempo  85.50 /Users/plamere/Music/Amazon MP3/Breaking Benjamin/Dear Agony/08 - Lights Out.mp3
Tempo 125.77 /Users/plamere/Music/Amazon MP3/Breaking Benjamin/Dear Agony/09 - Dear Agony.mp3
Tempo  94.99 /Users/plamere/Music/Amazon MP3/Breaking Benjamin/Dear Agony/10 - Into The Nothing.mp3
Tempo 160.38 /Users/plamere/Music/Amazon MP3/Breaking Benjamin/Dear Agony/11 - Without You.mp3

You can download the new Java client from the echo-nest-java-api code repository. The new version is: echo-nest-java-api-1.2.zip

SoundEchoCloudNest

Posted by Paul in code, data, events, Music, The Echo Nest, web services on September 28, 2009

At the recent Berlin Music Hackday, developer Hannes Tydén developed a mashup between SoundCloud and The Echo Nest, dubbed SoundCloudEchoNest. The program uses the SoundCloud and Echo Nest APIs to automatically annotate your SoundCloud tracks with information such as when the track fades in and fades out, the key, the mode, the overall loudness, time signature and the tempo. Also each Echo Nest section is marked. Here’s an example:

This track is annotated as follows:

- echonest:start_of_fade_out=182.34

- echonest:mode=min

- echonest:loudness=-5.521

- echonest:end_of_fade_in=0.0

- echonest:time_signature=1

- echonest:tempo=96.72

- echonest:key=F#

Additionally, 9 section boundaries are annotated.
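The annotations above all share a namespace:predicate=value shape, which makes them easy for other tools to read back. Here’s a minimal sketch of a parser for that shape. The MachineTag class is hypothetical and has nothing to do with Hannes’s Ruby code. It assumes the namespace and predicate never contain ‘:’ or ‘=’.

```java
// Illustrative value class for tags shaped like namespace:predicate=value.
class MachineTag {
    final String namespace;
    final String predicate;
    final String value;

    MachineTag(String namespace, String predicate, String value) {
        this.namespace = namespace;
        this.predicate = predicate;
        this.value = value;
    }

    // Splits on the first ':' and on the first '=' that follows it.
    static MachineTag parse(String tag) {
        int colon = tag.indexOf(':');
        int equals = tag.indexOf('=', colon + 1);
        if (colon <= 0 || equals <= colon + 1) {
            throw new IllegalArgumentException("Not a machine tag: " + tag);
        }
        return new MachineTag(
                tag.substring(0, colon),
                tag.substring(colon + 1, equals),
                tag.substring(equals + 1));
    }

    // Renders the tag back into its namespace:predicate=value form.
    @Override
    public String toString() {
        return namespace + ":" + predicate + "=" + value;
    }
}
```

With tags parsed this way, a tool could, say, collect every track whose echonest:tempo value falls in a given range.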

The user interface to SoundEchoCloudNest is refreshingly simple, no GUIs for Hannes:

Hannes has open sourced his code on github, so if you are a Ruby programmer and want to play around with SoundCloud and/or the Echo Nest, check out the code.

Machine tagging of content is becoming more viable. Photos on Flickr can be automatically tagged with information about the camera and exposure settings, geolocation, time of day and so on. Now with APIs like SoundCloud and the Echo Nest, I think we’ll start to see similar machine tagging of music, where basic info such as tempo, key, mode and loudness can be automatically attached to the audio. This will open the doors for all sorts of tools to help us better organize our music.

Tour of the Music Hackday Boston site

Posted by Paul in code, events, fun, Music, The Echo Nest, web services on September 24, 2009

The Boston Music Hackday is being held at Microsoft’s New England Research and Development Center (aka The NERD). Jon, Elissa and I took a tour of the space on Tuesday, and I must say I was very impressed. The place is tailor-made for hacking. There’s open space big enough for 300 hackers to gather to show their demos, there’s plenty of informal space for hacking, there are small and large conference rooms for breakout sessions, there’s wireless, there are plenty of power outlets, kitchen facilities, soda coolers and great views of Boston. This space is being donated by Microsoft – and I must say that my opinion of Microsoft has gone up substantially after I’ve seen how generous they’ve been with the space. Plus, the space is simply beautiful.

This hackday is shaping up to be something special. I’m pretty sure that we’ll hit our 300 person capacity, so register soon if you want to guarantee a spot.