Archive for category web services

MeToo – a scrobbler for the room

Posted by Paul in code, fun, Music, The Echo Nest, web services on June 11, 2010

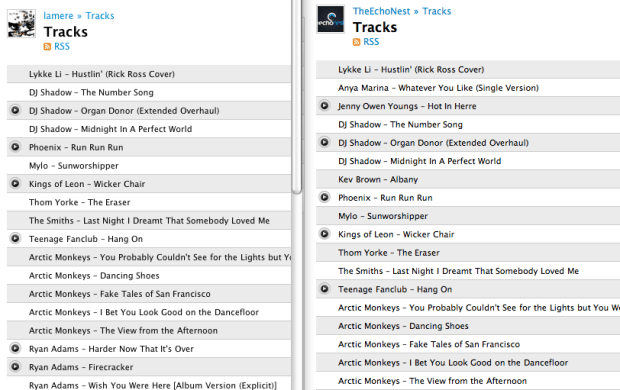

One of the many cool things about working at the Echo Nest is that we have a Sonos audio system with a single group playlist for the office. Anyone from the CEO to the greenest intern can add music to the listening queue for everyone to listen to. The office as a whole has rather diverse taste in music, and as a result I’ve been exposed to lots of interesting music. The downside is that since I’m not listening to music played on my personal computer, every day I have 10 hours of music listening that is never scrobbled, and as they say, if it doesn’t scrobble, it doesn’t count. Sure, the Sonos system scrobbles all of the plays to the Echo Nest account on Last.fm, but I’d also like it to scrobble to my account so I can use nifty apps like Lee Byron’s Last.fm Listening History or Matt Ogle’s Bragging Rights on my own scrobbles.

This morning while listening to that nifty Emeralds album, I decided that I’d deal with those scrobble gaps once and for all. So I wrote a little python script called MeToo that keeps my scrobbles up to date. It’s really quite simple. Whenever I’m in the office, I fire up MeToo. MeToo watches the most recent tracks played on The Echo Nest account and whenever a new track is played, it scrobbles it to my personal account. In effect, my scrobbles will track the office scrobbles. When I’m not listening I just close my laptop and the scrobbling stops.

The script itself is pretty simple – I used pylast to interface with Last.fm – and the bulk of the logic is less than 20 lines of code. I start the script like so:

% python metoo.py TheEchoNest lamere

When I do that, MeToo continuously monitors the most recently played tracks on TheEchoNest and scrobbles the plays on my account. When I close my laptop, the script is naturally suspended – so even though music may continue to play in the office, my laptop won’t scrobble it.
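
The core loop is simple enough to sketch. What follows is not the actual MeToo source, just a minimal illustration of the idea, assuming plays arrive as (artist, title, timestamp) records. The names `Play`, `new_plays` and `mirror_once` are hypothetical, and the Last.fm calls are left as plain callables so they can be backed by pylast or anything else.

```python
from typing import Callable, Iterable, List, NamedTuple


class Play(NamedTuple):
    artist: str
    title: str
    timestamp: int  # Unix time at which the source account scrobbled the track


def new_plays(recent: Iterable[Play], last_seen: int) -> List[Play]:
    """Return the plays newer than last_seen, oldest first."""
    return sorted((p for p in recent if p.timestamp > last_seen),
                  key=lambda p: p.timestamp)


def mirror_once(fetch_recent: Callable[[], Iterable[Play]],
                scrobble: Callable[[Play], None],
                last_seen: int) -> int:
    """Copy unseen plays to the target account; return the new high-water mark."""
    for play in new_plays(fetch_recent(), last_seen):
        scrobble(play)
        last_seen = play.timestamp
    return last_seen
```

In a real script, `fetch_recent` would wrap whatever call returns the office account's recent tracks, `scrobble` would post to your own account, and `mirror_once` would run in a loop with a sleep between passes. Starting `last_seen` at the current time avoids re-scrobbling the office's history.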

I suspect that this use case is relatively rare, so there’s probably not a big demand for something like MeToo, but if you are interested in it, leave a comment. If I see some interest, I’ll toss it up on Google Code so anyone can use it.

It feels great to be scrobbling again!

Earworm and Capsule at Music Hack Day San Francisco

Posted by Paul in events, Music, remix, The Echo Nest, web services on May 14, 2010

This weekend The Echo Nest is releasing some new remix functionality – Earworm and Capsule. Earworm lets you create a new version of a song that is any length you want. Would you like a 2 minute version of Stairway to Heaven? Or a 3 hour version of Freebird? Or an infinitely long version of Sex Machine? Earworm can do that. Here’s a 60 minute version of a little Rolling Stones ditty:

Capsule takes a list of tracks and optimizes the song transitions by reordering them and applying automatic beat matching and cross fading to give you a seamless playlist. It is really neat stuff. Here’s an example of a capsule between two Bob Marley songs:

It makes a nice little Bob Marley medley.

Jason writes about Capsule and Earworm and some other new features in remix in his new (and rather awesome) blog: Running With Data – Earworm and Capsule. Check it out.

Here come the music apps

Posted by Paul in Music, The Echo Nest, web services on May 12, 2010

As a music application developer, I have long been vexed by a problem that has made building and releasing a music application very difficult – where do I get the music? A music application needs music – but adding music to an application is very hard. I really have just a few choices: (1) I can use unlicensed content and hope nobody notices, (2) I can try to make the deals with the labels, (3) I can restrict my app to non-demand radio and pay per-stream royalties, or (4) I can just skip the music. None of these options is very appealing to me – if my application gets popular I will either get sued by the labels or swamped by music licensing fees. It is better for me if no one notices my app at all. Even resources like album art and 30 second samples are tightly held by the content owners.

What a crazy world! We are at this incredible point in the history of music with millions of tracks at our fingertips. Now more than ever, we need new ways to explore, organize and share music – but any kind of creativity in this space is stymied. I could build the coolest music app in the world that could help millions of people connect with music, but without a source of legal content, my application will never see the light of day. In my last year while working at the Echo Nest, I’ve seen some really amazing music applications made by very creative developers. These are apps that would make your jaw drop – but you’ll never see them. The apps are languishing on the virtual shelf because there’s no good way to get legal content for the apps.

This weekend at Music Hack Day San Francisco we are going to change this. We are going to make it possible for developers to build applications around music content and release the applications to the world without having to worry about music licensing. To do this, we are working with Play.me, a new digital music service that offers on-demand music. With the Echo Nest / Play.me program a developer can write music applications using all of the usual Echo Nest APIs – and include streaming content from the millions of songs in the Play.me catalog.

Play.me is very generous with its content, giving a user 5 hours per week of on-demand music (once a user goes beyond their 5 hour allotment, full streams are replaced with 30 second streams). Play.me’s strategy here is simple – they hope that by encouraging innovative applications built around their content they will attract more paying subscribers who get access to unlimited streams. The Echo Nest and Play.me platforms are well integrated, letting developers write apps that take advantage of all the deep Echo Nest data – artist similarities, news, reviews, blogs, bios, images, video and even our deep track-level music analysis for every artist and track in the Play.me catalog.

This is a big deal for music application developers. We can finally build applications around real music without having to worry about being sued or going broke paying licensing fees if our apps get popular. And if our application brings new subscribers to Play.me, we can make money through an affiliate program. (Here’s the fine print – Play.me is currently US only (sorry, rest of the world), and to hear the full streams you need to register with Play.me (you just need an email address, no credit cards required).)

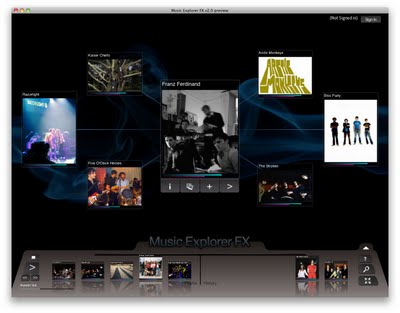

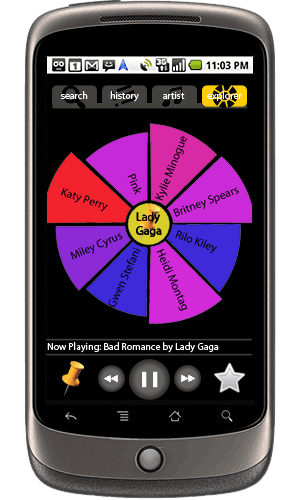

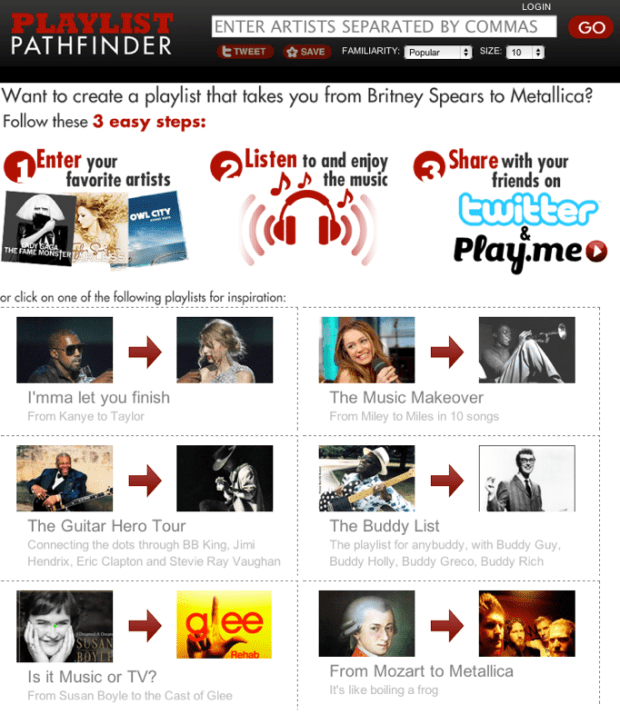

There are already some apps that have been built on top of the Echo Nest / Play.me APIs:

MusicExplorerFX – The award-winning Music Exploration tool.

Slice – a music exploration and discovery application for the Android Platform

PlaylistPathfinder – a novel application that creates playlists by finding paths through the Echo Nest artist similarity space.

I’ll write in more depth about these apps in subsequent posts – but the story for these apps is nearly identical – they were cool apps that were languishing on the music shelf because there was no way to release them with licensed content. Now the apps can be released to the world and even help the application developer make some money.

Over the years, we’ve seen many different ways for people to discover new music come and go. When I was growing up, the radio DJ was the primary way people discovered new music. The DJ was the tastemaker for the generation. For the next generation, I think music apps will be one of the primary ways people discover new music.

If you have an idea for a cool new music app, but have been stymied by the problem of how to get content for your app, check out this program. More details will be forthcoming during Music Hack Day San Francisco.

The Echo Nest Song API

Posted by Paul in Music, The Echo Nest, web services on April 24, 2010

- Performance – api method calls run faster – on average API methods are running 3X faster than the older version.

- JSON Output – all of our methods now support JSON output in addition to XML. This greatly simplifies writing client libraries for the Echo Nest

- Nimble coding – with the new architecture it will be much easier for us to roll out new features – so expect to see new features added to the Echo Nest platform every month

- No cruft – we are revisiting our APIs to try to eliminate inconsistencies, redundancies and unnecessary features to make them as clean as we can.

The beta version of our next generation APIs is here: http://beta.developer.echonest.com/

The first significant new API we are adding is the Song API – this gives you all sorts of ways to search for and retrieve song level data. With the song API you can do the following:

- search for songs via artist name, song title, and description. You can affect the results with constraints and sorts:

- constrain the results by a number of factors including musical attributes like tempo, loudness, time signature and key, artist hotttnesss, location

- sort the results by any of the attributes

- Find similar songs – find similar songs to a seed song

- Find profile – get all sorts of info about a song including audio, audio summary info, track data for different catalogs, song hotttnesss, artist_hotttnesss, artist_location, and detailed track analysis

- Identify songs – works in conjunction with the ENMFP

There are lots of things you can do with this API. Here’s just a quick sample of the types of queries you can make:

Find the loudest thrash songs

song/search?sort=loudness-desc&description=thrash

Find indie songs for jogging

song/search?min_tempo=120&description=indie&max_tempo=125

Fetch the tempo of Hey Jude

search?title=hey+jude&bucket=audio_summary&artist=the+beatles

Fetch the track audio and analysis of Bad Romance

search?title=bad+romance&bucket=tracks&bucket=id:paulify&artist=lady+gaga

Find songs similar to Bad Romance

song/similar?id=SOAOBBG127D9789749
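
Queries like these are easy to assemble programmatically. Here is a small sketch that builds a few of the relative query strings shown above with Python’s standard library. The helper name `song_query` is made up for illustration, it assumes the method lives under `song/`, and the host, API key and any other required parameters are deliberately left out since they depend on your setup.

```python
from urllib.parse import urlencode


def song_query(method, params):
    """Build a relative Song API query such as 'song/search?...'.

    params is a list of (name, value) pairs so that a name like
    'bucket' can be repeated and the order is preserved.
    """
    return "song/" + method + "?" + urlencode(params, safe=":")


# The loudest thrash songs
loudest_thrash = song_query("search", [("sort", "loudness-desc"),
                                       ("description", "thrash")])

# Indie songs for jogging
jogging_indie = song_query("search", [("min_tempo", 120),
                                      ("description", "indie"),
                                      ("max_tempo", 125)])

# Songs similar to a seed song ID
similar = song_query("similar", [("id", "SOAOBBG127D9789749")])
```

Passing pairs rather than a dictionary is a deliberate choice here: it keeps repeated parameters such as `bucket` intact, which a plain dictionary would collapse into one.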

- jen-api – a java client

- beta_pyechonest – a new branch of the venerable pyechonest library. Grab it from SVN with

svn checkout http://pyechonest.googlecode.com/svn/branches/ beta-pyechonest-read-only

I’ll be writing more about all of the new APIs real soon. Access the beta Echo Nest APIs here:

Lady Gaga meets Edward Tufte

Posted by Paul in Music, The Echo Nest, visualization, web services on March 22, 2010

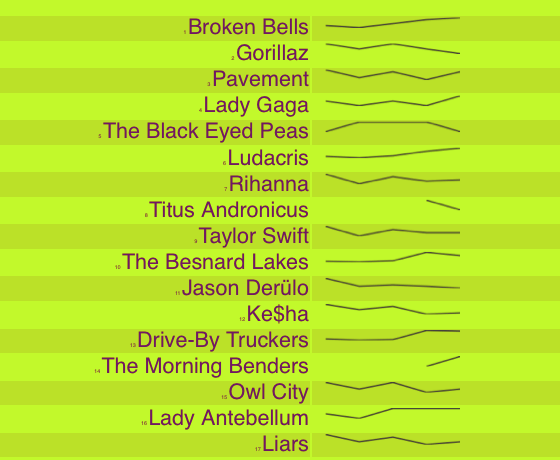

In his spare time, Echo Nest developer Reid Draper built hotttnesss.com – a neat web app that shows the top 50 hotttest artists (according to the Echo Nest get_top_hottt_artists) along with sparklines showing the historical hotttnesss for the last week. Reid used the nifty jquery sparklines plugin to make it happen. Mouse over an artist name to get links to the Last.fm and Spotify pages for the artist so you can find out what the big deal is about Broken Bells or Iyaz.

Name That Artist

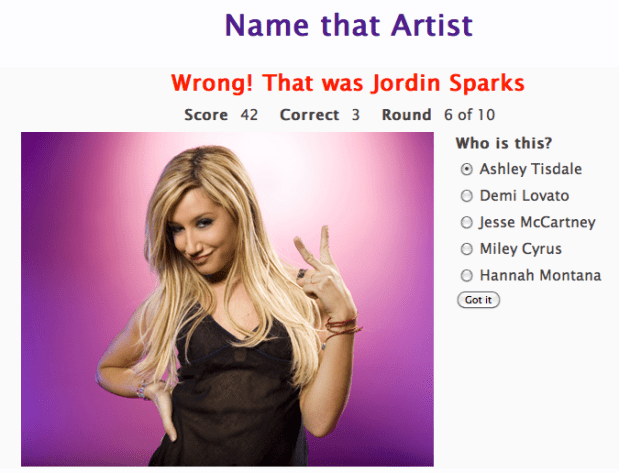

Posted by Paul in fun, Music, The Echo Nest, web services on February 14, 2010

While watching the Olympics over the weekend, I wrote a little web-app game that uses the new Echo Nest get_images call. The game is dead simple. You have to identify the artists in a series of images. You get to choose a level of difficulty and the style of your favorite music, and if you get a high score, your name and score will appear on the Top Scores board. Instead of using a simple score of percent correct, the score gets adjusted by a number of factors. There’s a time bonus, so if you answer fast you get more points; there’s a difficulty bonus, so if you identify unfamiliar artists you get more points; and if you choose the ‘Hard’ level of difficulty you also get more points for every correct answer. The absolute highest score possible is 600, but any score above 200 is rather awesome.

The app is extremely ugly (I’m a horrible designer), but it is fun – and it is interesting to see how similar artists from a single genre appear. Give it a go, post some high scores and let me know how you like it.

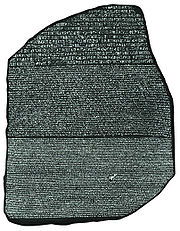

Introducing Project Rosetta Stone

Posted by Paul in code, Music, The Echo Nest, web services on February 10, 2010

Here at The Echo Nest we want to make the world easier for music app developers. We want to solve as many of the problems that developers face when writing music apps as we can, so that developers can focus on building cool stuff instead of worrying about the basic plumbing. One of the problems faced by music application developers is the issue of ID translation. You may have a collection of music that is in one ID space (Musicbrainz for instance) but you want to use a music service (such as the Echo Nest’s Artist Similarity API) that uses a completely different ID space. Before you can use the service you have to translate your Musicbrainz IDs into Echo Nest IDs, make the similarity call and then, since the artist similarity call returns Echo Nest IDs, map the IDs back into the Musicbrainz space. The mapping from one ID space to another takes time (perhaps even requiring another API method call to ‘search_artists’) and is a potential source of error: mapping artist names can be tricky. For example, there are artists like Duran Duran Duran, Various Artists (the electronic musician), DJ Donna Summer, and Nirvana (the 60’s UK band) that will trip up even sophisticated name resolvers.

We hope to eliminate some of the trouble with mapping IDs with Project Rosetta Stone. Project Rosetta Stone is an update to the Echo Nest APIs to support non-Echo-Nest identifiers. The goal for Project Rosetta Stone is to allow a developer to use any music id from any music API with the Echo Nest web services. For instance, if you have a Musicbrainz ID for weezer, you can call any of the Echo Nest artist methods with the Musicbrainz ID and get results. Additionally, methods that return IDs can be told to return them in different ID spaces. So, for example, you can call artist.get_similar and specify that you want the similar artist results to include Musicbrainz artist IDs.

Dealing with the many different music ID formats

One of the issues we have to deal with when trying to support many ID spaces is that the IDs come in many shapes and sizes. Some IDs, like Echo Nest and Musicbrainz, are self-identifying URLs (self-identifying means that you can tell what the ID space is and the type of the item being identified, whether it is an artist, track, release, playlist etc.) and some IDs (like Spotify) use self-identifying URNs. However, many ID spaces are not self-identifying – for instance a Napster Artist ID is just a simple integer. Note also that many of the ID spaces have multiple renderings of IDs. Echo Nest has short form IDs (AR7BGWD1187FB59CCB and TR123412876434), Spotify has URL-form IDs (http://open.spotify.com/artist/6S58b0fr8TkWrEHOH4tRVu) and Musicbrainz IDs are often represented with just the UUID fragment (bd0303a-f026-416f-a2d2-1d6ad65ffd68). Note that Spotify and Napster are used in these examples just to demonstrate the wide range of ID formats.

We want to make all of the ID types self-identifying. IDs that are already self-identifying can be used without change. However, non-self-identifying ID types need to be transformed into a URN-style syntax of the form: vendor:type:vendor-specific-id. So, for example, a Napster track ID would be of the form: ‘napster:track:12345678’
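
As a rough illustration of that syntax (this is not Echo Nest code, and the function names are hypothetical), here is how an application might build and split vendor:type:vendor-specific-id strings:

```python
def make_urn(vendor, item_type, vendor_id):
    """Render an ID in the vendor:type:vendor-specific-id form."""
    return f"{vendor}:{item_type}:{vendor_id}"


def parse_urn(urn):
    """Split a URN-style ID into (vendor, type, vendor-specific-id).

    Only the first two colons are treated as separators, so a
    vendor-specific ID that itself contains a colon stays intact.
    """
    parts = urn.split(":", 2)
    if len(parts) != 3 or not all(parts):
        raise ValueError(f"not a vendor:type:id URN: {urn!r}")
    return tuple(parts)


napster_track = make_urn("napster", "track", 12345678)
vendor, item_type, vendor_id = parse_urn(napster_track)
```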

What do we support now?

In this first release of Rosetta Stone we are supporting URN-style Musicbrainz IDs (probably one of the most requested enhancements to the Echo Nest APIs has been support for Musicbrainz). This means that any Echo Nest API method that accepts or returns an Echo Nest ID can also take a Musicbrainz ID. For example, to get recent audio found on the web for Weezer, you could make the call with the URN form of the Musicbrainz ID for Weezer:

http://developer.echonest.com/api/get_audio

?api_key=5ZAOMB3BUR8QUN4PE

&id=musicbrainz:artist:6fe07aa5-fec0-4eca-a456-f29bff451b04

&rows=2&version=3

For a call such as artist.get_similar, if you are using Musicbrainz IDs for input, it is likely that you’ll want your results in the form of Musicbrainz IDs as well. To do this, just add the bucket=id:musicbrainz parameter to indicate that you want Musicbrainz IDs included in the results:

http://developer.echonest.com/api/get_similar

?api_key=5ZAOMB3BUR8QUN4PE

&id=musicbrainz:artist:6fe07aa5-fec0-4eca-a456-f29bff451b04

&rows=10&version=3

&bucket=id:musicbrainz

<similar>

<artist>

<name>Death Cab for Cutie</name>

<id>music://id.echonest.com/~/AR/ARSPUJF1187B9A14B8</id>

<id type="musicbrainz">musicbrainz:artist:0039c7ae-e1a7-4a7d-9b49-0cbc716821a6</id>

<rank>1</rank>

</artist>

<!-- more omitted -->

</similar>
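
Picking the Musicbrainz IDs out of a response like this takes only a few lines. The sketch below uses Python’s standard ElementTree parser on a fragment shaped like the one above. It assumes the `<similar>` element can be parsed on its own, whereas a full API response presumably wraps it in further elements; the function name `musicbrainz_ids` is hypothetical.

```python
import xml.etree.ElementTree as ET

# A fragment shaped like the get_similar response shown above.
SAMPLE = """
<similar>
  <artist>
    <name>Death Cab for Cutie</name>
    <id>music://id.echonest.com/~/AR/ARSPUJF1187B9A14B8</id>
    <id type="musicbrainz">musicbrainz:artist:0039c7ae-e1a7-4a7d-9b49-0cbc716821a6</id>
    <rank>1</rank>
  </artist>
</similar>
"""


def musicbrainz_ids(xml_text):
    """Map each similar artist's name to its Musicbrainz URN, if present."""
    result = {}
    for artist in ET.fromstring(xml_text.strip()).iter("artist"):
        mbid = artist.find("id[@type='musicbrainz']")
        if mbid is not None:
            result[artist.findtext("name")] = mbid.text
    return result


ids = musicbrainz_ids(SAMPLE)
```

Artists that come back without a Musicbrainz ID are simply skipped, so the caller never has to deal with missing values.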

Limiting results to a particular ID space

Sometimes you are working within a particular ID space and you only want to include items that are in that space. To support this, Rosetta Stone adds an idlimit parameter to some of the calls. If this is set to ‘Y’ then results are constrained to be within the given ID space. This means that if you want to guarantee that only Musicbrainz artists are returned from the get_top_hottt_artists call you can do so like this:

http://developer.echonest.com/api/get_top_hottt_artists

?api_key=5ZAOMB3BUR8QUN4PE

&rows=20

&version=3

&bucket=id:musicbrainz

&idlimit=Y
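
The calls in this post all follow the same pattern, so a tiny helper can assemble them. This is only a sketch: `rosetta_url` is a hypothetical name, the API key is a placeholder for your own, and the parameter names are simply the ones used in the examples above.

```python
from urllib.parse import urlencode

API_ROOT = "http://developer.echonest.com/api/"


def rosetta_url(method, api_key, **params):
    """Build a version-3 API URL; colons in URN-style IDs are left readable."""
    query = {"api_key": api_key, "version": 3}
    query.update(params)
    return API_ROOT + method + "?" + urlencode(query, safe=":")


# Top hottt artists, restricted to those with Musicbrainz IDs
url = rosetta_url("get_top_hottt_artists", "YOUR_API_KEY",
                  rows=20, bucket="id:musicbrainz", idlimit="Y")
```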

What’s Next?

In this initial release of Rosetta Stone we’ve built the infrastructure for fast ID mapping. We currently support mapping between Echo Nest Artist IDs and Musicbrainz IDs. We will be adding support for mapping at the track level soon – and keep an eye out for the addition of commercial ID spaces that will allow easy mapping between Echo Nest IDs and those associated with commercial music service providers.

In the near future we’ll be rolling out support to the various clients (pyechonest and the Java client API) to support Rosetta Stone.

As always, we love any feedback and suggestions to make writing music apps easier. So email me (paul@echonest.com) or leave a comment here.

New Echo Nest APIs demoed at the Stockholm Music Hackday

Posted by Paul in events, Music, The Echo Nest, web services on January 30, 2010

Today at the Stockholm Music Hack Day, Echo Nest co-founder Brian Whitman demoed the alpha version of a new set of Echo Nest APIs. There are 3 new public methods and hints about a fourth API method.

- search_tracks: This is IMHO the most awesomest method in the Echo Nest API. This method lets you search through the millions of tracks that the Echo Nest knows about. You can search for tracks based on artist and track title of course, but you can also search based upon how people describe the artist or track (‘funky jazz’, ‘punk cabaret’, ‘screamo’). You can constrain the returned results based upon musical attributes (range of tempo, range of loudness, the key/mode), and you can even constrain the results based upon the geo-location of the artist. Finally, you can specify how you want the search results ordered. You can sort the results by tempo, loudness, key, mode, and even lat/long. This new method lets you fashion all sorts of interesting queries like:

- Find the slowest songs by Radiohead

- Find the loudest romantic songs

- Find the northernmost rendition of a reggae track

The index of tracks for this API is already quite large, and will continue to grow as we add more music to the Echo Nest. (But note that this is an alpha version and thus it is subject to the whims of the alpha-god – even as I write this, the index used to serve up these queries is being rebuilt, so only a small fraction of our set of tracks is currently visible.) And BTW, if you are at the Stockholm Music Hack Day, look for Brian and ask him about the secret parameter that will give you some special search_tracks goodness!

One of the things you get back from the search_tracks method is a track ID. You can use this track ID to get the analysis for any track using the new get_analysis method. No longer do you need to upload a track to get the analysis for it. Just search for it and we are likely to have the analysis already. This search_tracks method has been the most frequently requested method by our developers, so I’m excited to see this method be released.

- get_analysis – this method will give you the full track analysis for any track, given its track ID. The method couldn’t be simpler, give it a track ID and you get back a big wad-o-json. All of the track analysis, with one call. (Note that for this alpha release, we have a separate track ID space from the main APIs, so IDs for tracks that you’ve analyzed with the released/supported APIs won’t necessarily be available with this method).

- capsule – this is an API that supports this-is-my-jam functionality. Give the API a URL to an XSPF playlist and you’ll get back some json that points you to both a flashplayer url and an mp3 url to a capsulized version of the playlist. In the capsulized version, the song transitions are aligned and beatmatched like an old style DJ would.

Brian also describes a new identify_track method that returns metadata for a track when you give the Echo Nest a set of musical fingerprint hashcodes. This is a method that you use in conjunction with the new Echo Nest audio fingerprinter (whoa!). If you are at the Stockholm Music Hack Day and you are interested in solving the track resolution problem, talk to Brian about getting access to the new and nifty audio fingerprinter.

These new APIs are still in alpha – so lots of caveats surround them. To quote Brian: we may pull or throttle access to alpha APIs at a different rate from the supported ones. Please be warned that these are not production ready, we will be making enhancements and restarting servers, there will be guaranteed downtime.

The new APIs hint at the direction we are going here at the Echo Nest. We want to continue to open up our huge quantities of data for developers, making as much of it available as we can to anyone who wants to build music apps. These new APIs return JSON – XML is so old fashioned. All the cool developers are using JSON as the data transport mechanism nowadays: it’s easy to generate, easy to parse and makes for a very nimble way to work with web services. We’ll be adding JSON support to all of our released APIs soon.

I’m also really excited about the new fingerprinting technology. Here at the Echo Nest we know how hard it is to deal with artist and track resolution – and we want to solve this problem once and for all, for everybody – so we will soon be releasing an audio fingerprinting system. We want to make this system as open as we can, so we’ll make all the FP data available to anyone. No secret hash-to-ID algorithms, and no private datasets. The Fingerprinter is fast, uses state-of-the-art audio analysis and will be backed by a dataset of fingerprint hashcodes for millions of tracks. I’ll be writing more about the new fingerprinter soon.

These new APIs should give those lucky enough to be in Stockholm this weekend something fun to play with. If you are at the Stockholm Hack Day and you build something cool with these new APIs you may find yourself going home with the much coveted Echo Nest sweatsedo:

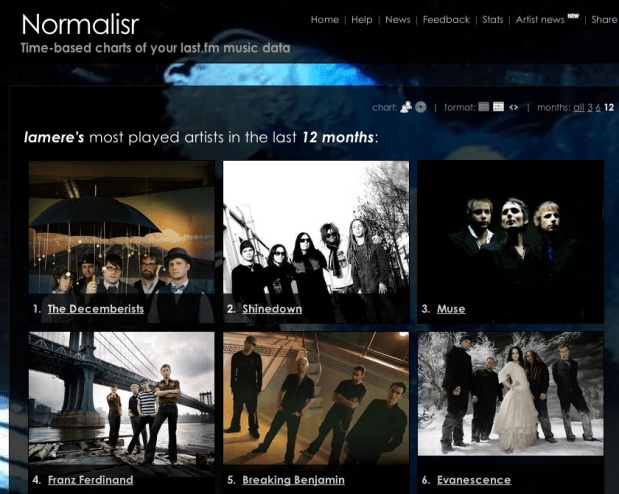

Normalisr – Time-based charts of your last.fm data

Posted by Paul in Music, web services on December 14, 2009

Worth checking out: Normalisr