Archive for category Music

LOOKING THROUGH THE “GLASS CEILING”: A CONCEPTUAL FRAMEWORK FOR THE PROBLEMS OF SPECTRAL SIMILARITY


Alexandros Nanopoulos

Ioannis Karydis, Miloš Radovanovic, Mirjana Ivanovic

Abstract: Spectral similarity measures have been shown to exhibit good performance in several Music Information Retrieval (MIR) applications. They are also known, however, to possess several undesirable properties, namely allowing the existence of hub songs (songs which frequently appear in nearest neighbor lists of other songs), “orphans” (songs which practically never appear), and difficulties in distinguishing the farthest from the nearest neighbor due to the concentration effect caused by high dimensionality of data space. In this paper we develop a conceptual framework that allows connecting all three undesired properties. We show that hubs and “orphans” are expected to appear in high-dimensional data spaces, and relate the cause of their appearance with the concentration property of distance / similarity measures. We verify our conclusions on real music data, examining groups of frames generated by Gaussian Mixture Models (GMMs), considering two similarity measures: Earth Mover’s Distance (EMD) in combination with Kullback-Leibler (KL) divergence, and Monte Carlo (MC) sampling. The proposed framework can be useful to MIR researchers to address problems of spectral similarity, understand their fundamental origins, and thus be able to develop more robust methods for their remedy.

The problem is mainly due to the high-dimensional vector space, so problems like hubs and orphans are to be expected. So, let’s look at how to deal with this problem of high dimensionality.

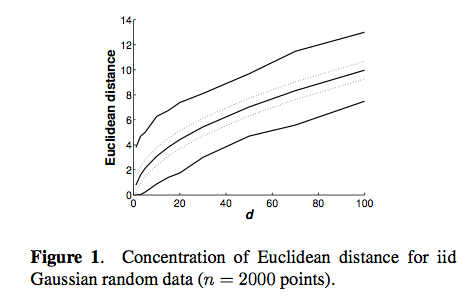

One problem: in Euclidean space, as we move into higher dimensions, it gets harder to distinguish between the farthest and the nearest neighbor.

This is a natural result of high dimensionality and leads to the problem of hubs and orphans.
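A rough way to watch hubs and orphans emerge is to count how often each point shows up in the k-nearest-neighbor lists of all the other points. The sketch below does this on synthetic Gaussian vectors, not on the spectral features used in the paper; the function names, the sample size and the value of k are illustrative assumptions.

```python
import math
import random


def k_occurrences(points, k):
    """Count how many k-nearest-neighbor lists each point appears in."""
    counts = [0] * len(points)
    for i, p in enumerate(points):
        others = [j for j in range(len(points)) if j != i]
        others.sort(key=lambda j: math.dist(p, points[j]))
        for j in others[:k]:
            counts[j] += 1
    return counts


def hub_orphan_summary(dim, n_points=200, k=5, seed=0):
    """Return (largest k-occurrence count, number of points never listed)."""
    rng = random.Random(seed)
    # Synthetic data: i.i.d. standard normal coordinates (an assumption).
    points = [[rng.gauss(0.0, 1.0) for _ in range(dim)] for _ in range(n_points)]
    counts = k_occurrences(points, k)
    return max(counts), counts.count(0)


if __name__ == "__main__":
    for dim in (3, 30, 300):
        biggest_hub, orphans = hub_orphan_summary(dim)
        print(f"dim={dim:4d}  biggest hub count={biggest_hub}  orphans={orphans}")
```

A point with a very large count plays the role of a hub song, and a point with a count of zero plays the role of an orphan. On data like this you would typically expect both to become more common as `dim` grows, though the exact numbers depend on the seed and sample size.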


Another way of looking at this is to show the ratio between the standard deviation and the mean of the neighbor distances as a function of dimensionality:
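A minimal sketch of that ratio, assuming uniformly random points in a unit hypercube and plain Euclidean distance (neither is taken from the talk, and `relative_spread` is a made-up name), could look like this:

```python
import math
import random
import statistics


def relative_spread(dim, n_points=500, seed=0):
    """Std. deviation of distances to a query point, divided by their mean."""
    rng = random.Random(seed)
    query = [rng.random() for _ in range(dim)]
    dists = []
    for _ in range(n_points):
        point = [rng.random() for _ in range(dim)]
        dists.append(math.dist(query, point))
    return statistics.pstdev(dists) / statistics.mean(dists)


if __name__ == "__main__":
    for dim in (2, 10, 100, 1000):
        print(f"dim={dim:5d}  std/mean={relative_spread(dim):.3f}")
```

As the ratio shrinks, all distances from the query start to look alike, which is the concentration effect: the nearest and the farthest neighbor are separated by a smaller and smaller relative margin.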

Conclusion – high dimensionality is responsible for problems of hubs, orphans and the concentration effect.

This was an interesting talk and has lots of potential impact on spectral similarity.

Help researchers understand earworms

Posted by Paul in Music, music information retrieval, research on July 29, 2010

Researchers at Goldsmiths, University of London, in a collaboration with the BBC 6 and the British Academy, are conducting research to find out about the music in people’s heads, sometimes called ‘musical imagery’. They want to know which songs are the most common, whether people like it or not, what triggers it, whether some people have music in their head all the time, and so on.

To help researchers understand this phenomenon, take part in a questionnaire (and you could win £150 too). I took the survey; it took about 10 minutes. They do ask some rather personal questions that seem related to one’s tendency towards compulsive behavior. (Yes, I do sometimes count the stairs that I’m walking up.)

It looks to be an interesting research project. More details about it are here: The Earwomery.com

Visual Music

Posted by Paul in code, data, events, fun, Music, The Echo Nest, visualization on July 28, 2010

The week-long Visual Music Collaborative Workshop held at the Eyebeam just finished up. This was an invite-only event where participants did a deep dive into sound analysis techniques, OpenGL programming, and interfacing with mobile control devices.

Here’s one project built during the week that uses The Echo Nest analysis output:

(Via Aaron Meyers)

The Music App Summit

Posted by Paul in events, Music, startup, The Echo Nest on July 23, 2010

Billboard has long been known for tracking the hottest artists, albums and songs. Now they are moving into new territory: music apps. In October Billboard is hosting a Music App Summit, a day focused on the world of mobile music apps. The summit will focus on new companies and technologies that are now building the next generation of music applications for mobile devices. The summit has some awesome speakers and panelists lined up from a cross section of domains (technology, business and music), like Ge Wang, Ted Cohen, Dave Kusek, Brian Zisk and The Echo Nest’s CEO Jim Lucchese.

At the core of the summit are Billboard’s first ever Music App Awards. Billboard is giving awards to the best apps in a number of categories:

- Best Artist-based App: Apps created specifically for an individual artist

- Best Music Streaming App: Apps that allow users to stream, download or otherwise enjoy music, such as Internet radio or on-demand.

- Best Music Engagement App: Apps that let users engage in music in various ways, such as music games, music ID services, etc.

- Best Music Creation App: App that lets users make their own music.

- Best Branded App: App that best incorporates a sponsor with music capabilities to promote both the sponsor’s message and highlight the music

- Best Touring App: App created in conjunction with a specific tour or festival

Judges for the apps include Eliot Van Buskirk of Wired, Ian Rogers of Top Spin and Grammy Award winner MC Hammer.

Winning developers receive some modest prizes, but the real award is getting to demo your app to the attendees of the summit. The movers and shakers of the music industry will be there looking for that killer music app, and the winner in each of the app categories will get to show their stuff. If you have a mobile music app, consider submitting it to the Music App Awards. The submission deadline is July 30.

Some NameDropper stats

Posted by Paul in code, fun, Music, The Echo Nest on July 11, 2010

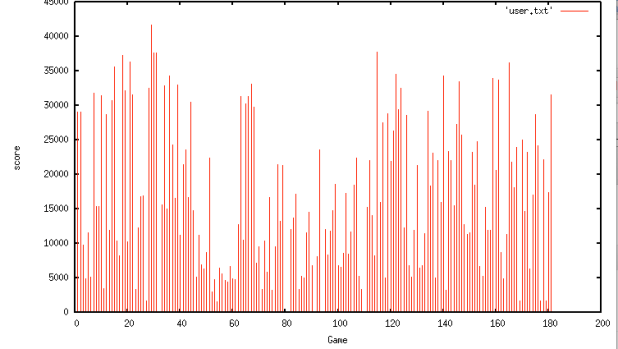

The NameDropper has been live for less than a day and already I’ve collected some good data from the game play. Here are some stats:

Total games played: 1841

Total unique players: 462

Total play time: 30 hours, 20 minutes, 36 seconds

The artists that were most frequently confused with fake artists were:

The Name Dropper

Posted by Paul in data, fun, Music, The Echo Nest, web services on July 10, 2010


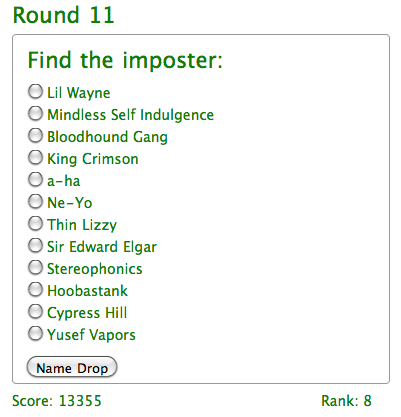

TL;DR; I built a game called Name Dropper that tests your knowledge of music artists.

One bit of data that we provide via our web APIs is Artist Familiarity. This is a number between 0 and 1 that indicates how likely it is that someone has heard of that artist. There’s no absolute right answer of course: who can really tell if Lady Gaga is more well known than Barbra Streisand, or whether Elvis is more well known than Madonna? But we can certainly say that The Beatles are more well known, in general, than Justin Bieber.

To make sure our familiarity scores are good, we have a Q/A process where a person knowledgeable in music ranks our familiarity score by scanning through a list of artists ordered in descending familiarity until they start finding artists that they don’t recognize. The further they get into the list, the better the list is. We can use this scoring technique to rank multiple different familiarity algorithms quickly and accurately.
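To make the scoring idea concrete, here is a minimal sketch of how such a scan could be turned into a number. It is not our actual Q/A tooling: the function name, the `max_misses` stopping rule and the placeholder artist names are all assumptions made for illustration.

```python
def recognition_depth(ranked_artists, recognized, max_misses=3):
    """Return how many artists are scanned before the tester gives up.

    ranked_artists: names ordered from most to least familiar by the algorithm.
    recognized: set of names this tester knows.
    max_misses: hypothetical stopping rule; the scan ends at this many unknowns.
    """
    misses = 0
    for depth, name in enumerate(ranked_artists):
        if name not in recognized:
            misses += 1
            if misses >= max_misses:
                return depth
    return len(ranked_artists)


# Two hypothetical rankings of the same artists, scored by the same tester.
known = {"The Beatles", "Madonna", "Elvis", "Lady Gaga"}
ranking_a = ["The Beatles", "Madonna", "Elvis", "Placeholder X", "Lady Gaga", "Placeholder Y"]
ranking_b = ["Placeholder X", "The Beatles", "Placeholder Y", "Madonna", "Elvis", "Lady Gaga"]

depth_a = recognition_depth(ranking_a, known, max_misses=2)
depth_b = recognition_depth(ranking_b, known, max_misses=2)
print(depth_a, depth_b)  # the deeper scan suggests the better ranking
```

Run over several candidate algorithms with the same tester, the ranking with the greatest depth would be the one to prefer.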

One thing I noticed is that this technique not only tells us how good our familiarity score is, it also gives a good indication of how well the tester knows music. The further a tester gets into a list before they can’t recognize artists, the more they tend to know about music. This insight led me to create a new game: The Name Dropper.

The Name Dropper is a simple game. You are presented with a list of a dozen artist names. One name is a fake; the rest are real.

If you find the fake, you go on to the next round, but if you get fooled, the game is over. At first, it is pretty easy to spot the fakes, but each round gets a little harder, and sooner or later you’ll reach the point where you are not sure, and you’ll have to guess. I think a person’s score is fairly representative of how broad their knowledge of music artists is.
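The round structure can be sketched in a few lines. This is only an outline of the rules as described above, not the game’s real code: `build_round` and `play` are hypothetical names, the fake name is passed in rather than generated, and the way rounds get harder is not modeled.

```python
import random


def build_round(real_names, fake_name, rng, size=12):
    """Return a shuffled list of `size` names containing exactly one fake."""
    names = rng.sample(real_names, size - 1) + [fake_name]
    rng.shuffle(names)
    return names


def play(rounds, pick):
    """Play until the player is fooled; return the number of rounds survived.

    rounds: iterable of (names, fake_name) pairs.
    pick: callable that takes the list of names and returns the player's guess.
    """
    score = 0
    for names, fake_name in rounds:
        if pick(names) != fake_name:
            break  # fooled: the game is over
        score += 1
    return score


if __name__ == "__main__":
    rng = random.Random(42)
    pool = [f"Real Artist {i}" for i in range(50)]  # placeholder names
    rounds = [(build_round(pool, f"Fake {n}", rng), f"Fake {n}") for n in range(5)]
    # A "player" that simply picks a name at random each round.
    print(play(rounds, pick=lambda names: rng.choice(names)))
```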

The biggest technical challenge in building the application was coming up with a credible fake artist name generator. I could have used Brian’s list of fake names – but it was more fun trying to build one myself. I think it works pretty well. I really can’t share how it works since that could give folks a hint as to what a fake name might look like and skew scores (I’m sure it helps boost my own scores by a few points). The really nifty thing about this game is it is a game-with-a-purpose. With this game I can collect all sorts of data about artist familiarity and use the data to help improve our algorithms.

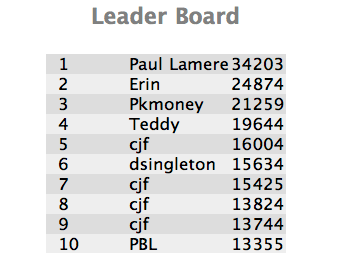

So go ahead, give the Name Dropper a try and see if you can push me out of the top spot on the leaderboard:

Play the Name Dropper

Some preliminary Playlist Survey results

I’m conducting a somewhat informal survey on playlisting to see how playlists created by an expert radio DJ compare to those generated by a playlisting algorithm and a random number generator. So far, nearly 200 people have taken the survey (Thanks!). Already I’m seeing some very interesting results. Here are a few tidbits (look for a more thorough analysis once the survey is complete).

People expect human DJs to make better playlists:

The survey asks people to try to identify the origin of a playlist (human expert, algorithm or random) and also rate each playlist. We can look at the ratings people give to playlists based on what they think the playlist origin is to get an idea of people’s attitudes toward human vs. algorithm creation.

| Predicted Origin | Rating |
| --- | --- |
| Human expert | 3.4 |
| Algorithm | 2.7 |
| Random | 2.1 |

We see that people expect humans to create better playlists than algorithms and that algorithms should give better playlists than random numbers. Not a surprising result.

Human DJs don’t necessarily make better playlists:

Now let’s look at how people rated playlists based on the actual origin of the playlists:

| Actual Origin | Rating |
| --- | --- |
| Human expert | 2.5 |
| Algorithm | 2.7 |
| Random | 2.6 |

These results are rather surprising. Algorithmic playlists are rated highest, while human-expert-created playlists are rated lowest, even lower than those created by the random number generator. There are lots of caveats here: I haven’t done any significance tests yet to see if the differences really matter, the survey size is still rather small, and the survey doesn’t present real-world playlist listening conditions. Nevertheless, the results are intriguing.
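Both tables come from the same kind of calculation: average the ratings, grouped once by what people guessed and once by the true origin. A minimal sketch, assuming each survey response is a dict with `predicted`, `actual` and `rating` fields (a made-up schema, with made-up toy responses rather than real survey data):

```python
from collections import defaultdict


def mean_rating_by(responses, key):
    """Average the 'rating' field of the responses, grouped by responses[key]."""
    totals = defaultdict(lambda: [0.0, 0])  # group -> [sum of ratings, count]
    for response in responses:
        bucket = totals[response[key]]
        bucket[0] += response["rating"]
        bucket[1] += 1
    return {group: total / count for group, (total, count) in totals.items()}


# Toy responses for illustration only; these are NOT the survey results.
toy_responses = [
    {"predicted": "human", "actual": "algorithm", "rating": 4},
    {"predicted": "human", "actual": "human", "rating": 2},
    {"predicted": "random", "actual": "random", "rating": 1},
    {"predicted": "algorithm", "actual": "random", "rating": 3},
]

print(mean_rating_by(toy_responses, "predicted"))
print(mean_rating_by(toy_responses, "actual"))
```

Comparing the two groupings is what separates people’s expectations about an origin from how they actually rate playlists of that origin.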

I’d like to collect more survey data to flesh out these results. So if you haven’t already, please take the survey:

The Playlist Survey

Thanks!

The Playlist Survey

Playlists have long been a big part of the music experience. But making a good playlist is not always easy. We can spend lots of time crafting the perfect mix, but more often than not, in this iPod age, we are likely to toss on a pre-made playlist (such as an album), have the computer generate a playlist (with something like iTunes Genius) or (more likely) we’ll just hit the shuffle button and listen to songs at random. I pine for the old days when Radio DJs would play well-crafted sets – mixes of old favorites and the newest, undiscovered tracks – connected in interesting ways. These professionally created playlists magnified the listening experience. The whole was indeed greater than the sum of its parts.

The tradition of the old-style Radio DJ continues on Internet Radio sites like Radio Paradise. RP founder/DJ Bill Goldsmith says of Radio Paradise: “Our specialty is taking a diverse assortment of songs and making them flow together in a way that makes sense harmonically, rhythmically, and lyrically — an art that, to us, is the very essence of radio.” Anyone who has listened to Radio Paradise will come to appreciate the immense value that a professionally curated playlist brings to the listening experience.

I wish I could put Bill Goldsmith in my iPod and have him craft personalized playlists for me – playlists that make sense harmonically, rhythmically and lyrically, and customized to my music taste, mood and context. That, of course, will never happen. Instead I’m going to rely on computer algorithms to generate my playlists. But how good are computer generated playlists? Can a computer really generate playlists as good as Bill Goldsmith, with his decades of knowledge about good music and his understanding of how to fit songs together?

To help answer this question, I’ve created a Playlist Survey – that will collect information about the quality of playlists generated by a human expert, a computer algorithm and a random number generator. The survey presents a set of playlists and the subject rates each playlist in terms of its quality and also tries to guess whether the playlist was created by a human expert, a computer algorithm or was generated at random.

Bill Goldsmith and Radio Paradise have graciously contributed 18 months of historical playlist data from Radio Paradise to serve as the expert playlist data. That’s nearly 50,000 playlists and a quarter million song plays spread over nearly 7,000 different tracks.

The Playlist Survey also serves as a Radio DJ Turing test. Can a computer algorithm (or a random number generator for that matter) create playlists that people will think are created by a living and breathing music expert? What will it mean, for instance, if we learn that people really can’t tell the difference between expert playlists and shuffle play?

Ben Fields and I will offer the results of this Playlist Survey when we present Finding a path through the Jukebox – The Playlist Tutorial – at ISMIR 2010 in Utrecht in August. I’ll also follow up with detailed posts about the results here in this blog after the conference. I invite all of my readers to spend 10 to 15 minutes to take The Playlist Survey. Your efforts will help researchers better understand what makes a good playlist.

Take the Playlist Survey

MeToo – a scrobbler for the room

Posted by Paul in code, fun, Music, The Echo Nest, web services on June 11, 2010

One of the many cool things about working at the Echo Nest is that we have a Sonos audio system with a single group playlist for the office. Anyone from the CEO to the greenest intern can add music to the listening queue for everyone to listen to. The office as a whole has a rather diverse taste in music, and as a result I’ve been exposed to lots of interesting music. However, the downside of this is that since I’m not listening to music being played on my personal computer, every day I have 10 hours of music listening that is never scrobbled, and as they say, if it doesn’t scrobble, it doesn’t count. Sure, the Sonos system scrobbles all of the plays to the Echo Nest account on Last.fm, but I’d also like it to scrobble them to my account so I can use nifty apps like Lee Byron’s Last.fm Listening History or Matt Ogle’s Bragging Rights on my own scrobbles.


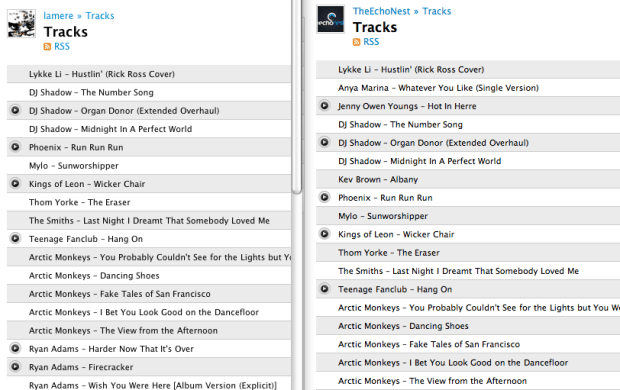

This morning while listening to that nifty Emeralds album, I decided that I’d deal with those scrobble gaps once and for all. So I wrote a little Python script called MeToo that keeps my scrobbles up to date. It’s really quite simple. Whenever I’m in the office, I fire up MeToo. MeToo watches the most recent tracks played on The Echo Nest account, and whenever a new track is played, it scrobbles it to my personal account. In effect, my scrobbles will track the office scrobbles. When I’m not listening I just close my laptop and the scrobbling stops.

The script itself is pretty simple – I used pylast to do interfacing to Last.fm – the bulk of the logic is less than 20 lines of code. I start the script like so:

% python metoo.py TheEchoNest lamere

When I do that, MeToo will continuously monitor the most recently played tracks on TheEchoNest and scrobble the plays on my account. When I close my laptop, the script is naturally suspended, so even though music may continue to play in the office, my laptop won’t scrobble it.
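For the curious, the core loop can be sketched roughly as follows. This is not the actual metoo.py: the function names, the polling interval and the API-key parameters are assumptions, and the pylast calls reflect the library’s present-day interface as I understand it, which may differ from the version available when this was written.

```python
import time


def unseen_plays(recent, seen):
    """Return plays from `recent` (newest first) not yet in `seen`, oldest first.

    Each play is an (artist, title, timestamp) tuple; `seen` is updated in place.
    """
    fresh = [play for play in reversed(recent) if play not in seen]
    seen.update(fresh)
    return fresh


def follow(fetch_recent, scrobble, poll_seconds=60, max_polls=None, sleep=time.sleep):
    """Poll the source account and re-scrobble anything new.

    fetch_recent() returns recent plays, newest first; scrobble(artist, title)
    records one play on the personal account. Both are supplied by the caller.
    """
    seen = set(fetch_recent())  # skip whatever played before startup
    polls = 0
    while max_polls is None or polls < max_polls:
        sleep(poll_seconds)
        for artist, title, _ in unseen_plays(fetch_recent(), seen):
            scrobble(artist, title)
        polls += 1


def pylast_hooks(api_key, api_secret, source_user, my_user, my_password):
    """Build fetch/scrobble callables on top of pylast (imported lazily)."""
    import pylast  # third-party; only needed when talking to Last.fm

    network = pylast.LastFMNetwork(
        api_key=api_key,
        api_secret=api_secret,
        username=my_user,
        password_hash=pylast.md5(my_password),
    )
    source = network.get_user(source_user)

    def fetch_recent():
        return [
            (p.track.get_artist().get_name(), p.track.get_title(), p.timestamp)
            for p in source.get_recent_tracks(limit=10)
        ]

    def scrobble(artist, title):
        # Assumption: stamp the play with the time it was noticed.
        network.scrobble(artist=artist, title=title, timestamp=int(time.time()))

    return fetch_recent, scrobble
```

Keeping the Last.fm calls behind two small callables means the polling logic can be tried out with fake data before pointing it at a real account.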

I suspect that this use case is relatively rare, and so there’s probably not a big demand for something like MeToo, but if you are interested in it, leave a comment. If I see some interest, I’ll toss it up on Google Code so anyone can use it.

It feels great to be scrobbling again!

We swing both ways

Perhaps one of the most frequently asked questions about Tristan’s Swinger is whether it can be used to ‘Un-swing’ a song. Can you take a song that already swings and straighten it out? Indeed, the answer is yes – we can swing both ways – but it is harder to unswing than it is to swing. Ammon, on Happy Blog, the Happy Blog, has given de-swinging a go, with some success, in his de-swinging of Revolution #1. Read his post and have a listen at Taking the swing out of songs. I can’t wait for the day when we can turn on the TV to watch and listen to MTV-Unswung.
