Archive for category hacking

What is a Music Hack Day?

With 3 new Music Hack Days announced this week, it might be time for you to check out what goes on at a Music Hack Day. Here are some videos that give a taste of what it’s like:

Music Hack Day Paris 2013

Music Hack Day Sydney 2012**

Music Hack Day 2012 Barcelona

Music Hack Day NYC 2011

For more on what a Music Hack Day is like, read What happens at a Music Hack Day. I hope to see you all at one of the upcoming events.

**It is strange how a non-hacker made it onto the thumbnail for the Sydney video. Dude, it’s Sydney, Australia, not Sydney Lawrence ;).

Upcoming Music Hack Days – Chicago, Bologna and NYC

Posted by Paul in events, hacking, Music, The Echo Nest on September 3, 2013

Fall is the traditional Music Hack Day season, and 2013 is shaping up to be the strongest yet. Three Music Hack Days have just been announced:

- Chicago – September 21st and 22nd – this will be the first ever Music Hack Day in Chicago.

- Bologna – October 5th and 6th – in collaboration with roBOt Festival 2013. The first Music Hack Day in Italy.

- New York – October 18th and 19th – being held in Spotify’s nifty new offices.

There will no doubt be more hack days before the end of the year, including the traditional Boston and London events. You can check out the full schedule, and sign up to be notified whenever a new Music Hack Day is announced, at MusicHackDay.org.

Music Hack Day is an international 24-hour event where programmers, designers and artists come together to conceptualize, build and demo the future of music. Software, hardware, mobile, web, instruments, art – anything goes as long as it’s music related.

The Saddest Stylophone – my #wowhack2 hack

Posted by Paul in hacking, Music, The Echo Nest on August 15, 2013

Last week, I ventured to Gothenburg, Sweden, to participate in the Way Out West Hack 2 – a music-oriented hackathon associated with the Way Out West Music Festival.

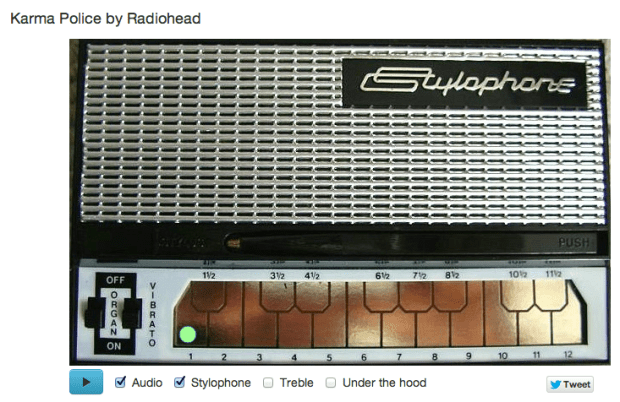

I was, of course, representing and supporting The Echo Nest API during the hack, but I also put together my own Echo Nest-based hack: The Saddest Stylophone. The hack automatically creates a Stylophone accompaniment for just about any song. The Stylophone is an analog synthesizer toy, created in the 1960s, that you play with a stylus.

Two hacking pivots on the way … The road to the Saddest Stylophone was by no means a straight line. In fact, when I arrived at #wowhack2 I had a very different hack in mind. After the first hour at the hackathon, though, it became clear that the WiFi at the event was going to be sketchy at best, and that any hack needing zippy access to the web (including the one I had planned) was going to be very slow going. So I shelved that idea for another hack day.

The next idea was to see if I could use the Echo Nest analysis data to convert any song to an 8-bit chiptune version. This is not new ground: Brian McFee had a go at this back at the 2012 MIT Music Hack Day. I thought it would be interesting to try a different approach and use an off-the-shelf 8-bit software synth and the Echo Nest pitch data. My intention was to use a JavaScript sound engine called jsfx to generate the audio. It seemed like a pretty straightforward way to create authentic 8-bit sounds. In small doses jsfx worked great, but when I started to create sequences of overlapping sounds my browser would crash. Every time. After spending a few hours trying to figure out a way to get jsfx to work reliably, I had to abandon it. It just wasn’t designed to generate lots of short, overlapping, simultaneous sounds, so I spent some time looking for another synthesizer.

I finally settled on timbre.js. Timbre.js seemed like a fully featured synth; anyone with a Csound background would be comfortable creating sounds with it. It did not take long before I was generating tones that tracked the melody and chord changes of a song. My plan was to create a set of tone generators and dynamically control the dynamics envelope based upon the Echo Nest segment data. This is when I hit my next roadblock. The timbre.js docs are pretty good, but I just couldn’t find out how to dynamically adjust parameters such as the ADSR table. I’m sure there’s a way to do it, but when there are only 12 hours left in a 24-hour hackathon, two hours spent looking through JS library source seems like forever, and I began to think that I’d never figure out how to get fine-grained control over the synth. I was pretty happy with how well I was able to track a song and play along with it, but without ADSR control, or even simple control over dynamics, the output sounded pretty crappy. In fact, I hadn’t heard anything that sounded so bad since I heard @skattyadz play a tune on his Stylophone at the Midem Music Hack Day earlier this year.

That thought turned out to be the best observation I had during the hackathon. I could hide all of my troubles getting a good-sounding output by declaring that my hack was a Stylophone simulator. Just like a Stylophone, my app would not be capable of playing multiple tones at once, it would not have complex changes in dynamics, it would have only a one-and-a-half octave range, and it would not even have a pleasing tone. All I’d need to do was convincingly track a melody or harmonic line in a song, and I’d be successful. And so, after my third pivot, I finally had a hack that I felt I’d be able to finish in time for the demo session without embarrassing myself. I was quite pleased with the results.

How does it work? The Saddest Stylophone takes advantage of the Echo Nest’s detailed analysis, which provides detailed information about a song: where all the bars and beats are, plus a very detailed map of the song’s segments. Segments are small, roughly homogeneous audio snippets that typically correspond to musical events (like a strummed chord on the guitar or a brass hit from the band).

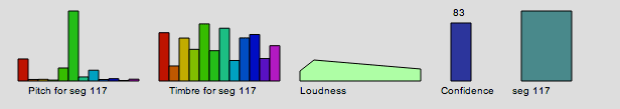

A single segment contains detailed information on pitch, timbre and loudness. For pitch, it contains a vector of 12 floating-point values that correspond to the amount of energy at each of the 12 notes of the Western chromatic scale. Here’s a graphic representation of a single segment:
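To make the pitch vector concrete, here is a minimal sketch of reading one segment and naming its strongest note. It assumes the 12 values are ordered C, C#, D … B (the ordering the Echo Nest analysis uses, as far as I know) and that the segment arrives as JSON with fields named like the Echo Nest’s (`start`, `duration`, `confidence`, `loudness_max`, `pitches`, `timbre`). The numbers in the example segment are made up, and `strongest_pitch` is a hypothetical helper, not part of any Echo Nest library.

```python
import json

# ASSUMPTION: pitch bins are ordered C, C#, D ... B (index 0 = C)
PITCH_CLASSES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]

# A made-up segment, shaped roughly like one entry of an Echo Nest analysis "segments" list
SEGMENT_JSON = """{
  "start": 12.34, "duration": 0.21, "confidence": 0.87, "loudness_max": -8.5,
  "pitches": [0.12, 0.05, 0.09, 0.10, 1.00, 0.22, 0.08, 0.31, 0.06, 0.14, 0.04, 0.18],
  "timbre": [45.1, 12.0, -3.2, 8.8, 20.1, -11.4, 2.2, 5.0, -7.7, 1.3, 0.4, -2.9]
}"""


def strongest_pitch(segment: dict) -> str:
    """Name of the pitch class with the most energy in the segment's 12-value pitch vector."""
    pitches = segment["pitches"]
    return PITCH_CLASSES[max(range(12), key=pitches.__getitem__)]


seg = json.loads(SEGMENT_JSON)
print(strongest_pitch(seg), seg["duration"], seg["confidence"])  # E 0.21 0.87
```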

This graphic shows the pitch vector, the timbre vector, the loudness, confidence and duration of a segment.

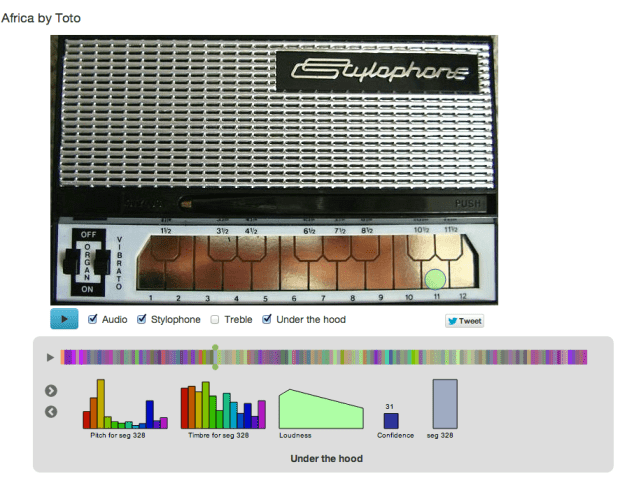

The Saddest Stylophone uses only the pitch, duration and confidence data from each segment. First, it filters segments, combining short, low-confidence segments with higher-confidence segments. Next, it filters out segments that don’t have a predominant frequency component in the pitch vector. Then, for each surviving segment, it picks the strongest of the 12 pitch bins and maps that pitch to a note on the Stylophone. Since the Stylophone spans an octave and a half (20 notes), we need to map 12 notes onto 20 notes. We do this by unfolding the 12 bins, reducing inter-note jumps to less than half an octave when possible. For example, if going from segment one to segment two would mean jumping 8 notes higher, we check whether we could instead jump 4 notes lower (an octave below that upward target) while still remaining within the Stylophone’s range. If so, we replace the long upward jump with the shorter downward one. The result is a list of notes and timings mapped onto the 20 notes of the Stylophone. We then map each note onto the proper frequency and key position; the rest is just playing the note via timbre.js at the proper time, in sync with the original audio track, and animating the stylus using Raphael.
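The pipeline just described (merge weak segments, drop segments without a clear winner, pick the strongest bin, unfold onto 20 keys, convert to a frequency) can be sketched as below. This is a hedged Python illustration, not the hack’s actual JavaScript. The merge and dominance thresholds, the choice of A as the lowest key, and the 220 Hz tuning are all assumptions, and "choose the octave nearest the previous key" is one way to read the unfolding rule.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple

NUM_KEYS = 20           # an octave and a half, as described above
LOWEST_PC = 9           # ASSUMPTION: the lowest key is an A (pitch classes: C=0 ... B=11)
LOWEST_FREQ_HZ = 220.0  # ASSUMPTION: the lowest key sounds at 220 Hz


@dataclass
class Segment:
    start: float          # seconds
    duration: float       # seconds
    confidence: float     # 0..1
    pitches: List[float]  # 12 energy values, index 0 = C


def merge_weak(segments: List[Segment], min_dur: float = 0.1, min_conf: float = 0.5) -> List[Segment]:
    """Fold each short, low-confidence segment into the segment before it (thresholds are guesses)."""
    out: List[Segment] = []
    for s in segments:
        if out and s.duration < min_dur and s.confidence < min_conf:
            # stretch the previous segment so it ends where this weak one ends
            out[-1].duration = s.start + s.duration - out[-1].start
        else:
            out.append(Segment(s.start, s.duration, s.confidence, list(s.pitches)))
    return out


def dominant_pc(pitches: List[float], ratio: float = 1.5) -> Optional[int]:
    """Index of the strongest bin, or None if it does not clearly beat the runner-up."""
    ranked = sorted(range(12), key=lambda i: pitches[i], reverse=True)
    best, second = ranked[0], ranked[1]
    if pitches[best] <= 0 or pitches[best] < ratio * pitches[second]:
        return None
    return best


def unfold(pcs: List[int]) -> List[int]:
    """Place each pitch class on a key, picking the octave that keeps the jump from the previous key smallest."""
    keys: List[int] = []
    for pc in pcs:
        candidates = [k for k in range(NUM_KEYS) if (LOWEST_PC + k) % 12 == pc]
        if not keys:
            keys.append(candidates[0])  # start in the lower octave (an arbitrary choice)
        else:
            prev = keys[-1]
            keys.append(min(candidates, key=lambda k: abs(k - prev)))
    return keys


def key_freq(key: int) -> float:
    """Equal-tempered frequency: each key is one semitone above the previous one."""
    return LOWEST_FREQ_HZ * 2 ** (key / 12)


def to_notes(segments: List[Segment]) -> List[Tuple[float, float, int, float]]:
    """(start, duration, key, frequency_hz) for every segment that survives filtering."""
    voiced = []
    for s in merge_weak(segments):
        pc = dominant_pc(s.pitches)
        if pc is not None:
            voiced.append((s, pc))
    keys = unfold([pc for _, pc in voiced])
    return [(s.start, s.duration, k, key_freq(k)) for (s, _), k in zip(voiced, keys)]
```

Under these assumptions an E followed by a C gives `unfold([4, 0]) == [7, 3]`: the C is placed 4 keys below the E rather than 8 keys above it, which is the example from the text. Pitch classes F through G# exist only once in an A-to-E range, so for those there is no octave choice to make.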

I’ve upgraded the app to include an Under the hood option that, when clicked, opens up a visualization showing the detailed info for a segment, so you can follow along and see how each segment is mapped onto a note. You can interact with the visualization, stepping through the segments and auditioning and visualizing them.

That’s the story of the Saddest Stylophone. It was not the hack I thought I was going to make when I got to #wowhack, but I was pleased with the result: when the Saddest Stylophone plays well, it really can make any song sound sadder and more pathetic. It’s a win. And I’m not the only one who thinks so: wired.co.uk listed it as one of the five best hacks at the hackathon.

Give it a try at Saddest Stylophone.

Rock Steady – My Music Ed Hack

This weekend I’m at The Music Education Hack in New York City where educators and technologists are working together to transform music education in New York City. My hack, Rock Steady, is a drummer training app for the iPhone. You use the app to measure how well you can keep a steady beat. Here’s how it works:

First you add songs from your iTunes collection. The app then uses The Echo Nest to analyze each song and map out all of its beats. Once a song is ready, you enter Rock Steady training mode: the app shows you the current tempo of the song, and your goal is to match that tempo by using your phone as a drumstick and tapping out the beat. You are scored on how well you match the tempo. There are three modes:

- Matching mode – in this easy-peasy mode you listen to the song and match the tempo.

- Silent mode – a bit harder: you listen to the song for a few seconds and then try to maintain the tempo on your own.

- Bonzo mode – here the music is playing, but instead of you matching the music, the music matches you. If you speed up, the music speeds up; if you slow down, the music slows down. This is the trickiest mode: you have to keep a steady beat and not be fooled by the band that is following you.
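The post doesn’t say how Rock Steady computes its score, so the following is only a sketch of one plausible approach, written in Python rather than the app’s iOS code. It estimates the tapped tempo from the median gap between taps, turns the relative tempo error into a 0 to 100 score, and, for bonzo mode, derives a playback-rate multiplier so the song follows the drummer. The scoring curve and the 10% tolerance are hypothetical.

```python
from statistics import median
from typing import Sequence


def tapped_bpm(tap_times: Sequence[float]) -> float:
    """Tempo implied by tap timestamps (seconds), using the median gap so one stray tap doesn't dominate."""
    if len(tap_times) < 2:
        raise ValueError("need at least two taps")
    gaps = [b - a for a, b in zip(tap_times, tap_times[1:])]
    return 60.0 / median(gaps)


def tempo_score(target_bpm: float, tap_times: Sequence[float], tolerance: float = 0.10) -> float:
    """Hypothetical score: 100 for an exact match, falling linearly to 0 at +/- tolerance (10% by default)."""
    error = abs(tapped_bpm(tap_times) - target_bpm) / target_bpm
    return max(0.0, 100.0 * (1.0 - error / tolerance))


def bonzo_playback_rate(song_bpm: float, tap_times: Sequence[float]) -> float:
    """Bonzo mode: a playback-rate multiplier that makes the song follow the drummer's tempo."""
    return tapped_bpm(tap_times) / song_bpm
```

For example, taps every half second imply 120 BPM, so against a 100 BPM song bonzo mode would play the track at 1.2× speed.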

This is my first iOS hack. I got to use lots of new stuff, such as Core Motion to detect the beats. I stole lots of code from the iOS version of the Infinite Jukebox (all the track upload and analysis stuff). It was a fun hack to build. If anyone thinks it is interesting I may try to finish it and put it in the app store.
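Core Motion supplies the accelerometer readings, but how the app turns them into beats isn’t described. A common, simple technique is threshold crossing with a short refractory period, sketched below in Python. In the real app the samples would come from Core Motion’s device-motion updates; here they are just a list of `(time, (x, y, z))` tuples, and the 1.5 g threshold and 150 ms refractory window are assumed values, not the app’s.

```python
import math
from typing import List, Sequence, Tuple

Sample = Tuple[float, Tuple[float, float, float]]  # (time in s, (x, y, z) acceleration in g)


def detect_taps(samples: Sequence[Sample], threshold: float = 1.5, refractory: float = 0.15) -> List[float]:
    """Times where acceleration magnitude rises through `threshold`, ignoring rises within
    `refractory` seconds of the last accepted tap (so one drumstick hit is counted once)."""
    taps: List[float] = []
    above = False
    for t, (x, y, z) in samples:
        magnitude = math.sqrt(x * x + y * y + z * z)
        if magnitude >= threshold and not above:
            if not taps or t - taps[-1] >= refractory:
                taps.append(t)
            above = True
        elif magnitude < threshold:
            above = False
    return taps
```

The refractory window is the key design choice: a single flick of the phone usually produces several consecutive high readings, or a bounce right after the hit, and without the window each of those would count as an extra beat.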

Here’s a video:

http://www.youtube.com/watch?v=UsJ7RBkRAag

Two music hackathons in NYC next weekend

Next weekend (starting Friday, June 28th), there are two music-related hackathons in NYC. First up, there’s HAMR.

Hacking Audio and Music Research (HAMR)

Organized by Colin Raffel, HAMR (Hacking Audio and Music Research) is a hackathon focused on music research, with a goal of testing out new ideas rather than making a finished product. The focus of HAMR is on the development of new techniques for analyzing, processing, and synthesizing audio and music signals. HAMR will be modeled after a traditional hack day in that it will involve a weekend of fast-paced work with an emphasis on trying something new rather than finishing something polished. However, this event will deviate from the typical hack day in its focus on research (rather than commercial) applications. In addition to HAMRing out work, the event will include presentations, discussions, and informal workshops. Registration is free, and researchers at any stage of their career are encouraged to participate. Read more about HAMR.

The other hacking event is the Music Education Hack.

Music Education Hack

The goal of the Music Education Hack is to explore how technology can help transform music education in NYC schools. Hackers will have 24 hours to ideate, collaborate and innovate before presenting their work to a panel of esteemed judges for a grand prize of $5,000. Hacker teams will have access to New York City teachers as part of the creation process as they focus on building products that incorporate music and technology into the education space. For more info, visit the Music Education Hack registration page.
