Paul

I'm the Director of Developer Community at The Echo Nest, a research-focused music intelligence startup that provides music information services to developers and partners through a data mining and machine listening platform. I am especially interested in hybrid music recommenders and using visualizations to aid music discovery.

ISMIR Poster Madness

Posted in Music on October 26, 2009

A new feature of ISMIR this year – Poster Madness – poster presenters have 30 seconds to pitch their stuff. Closest thing to a researcher cage match that we’ll see here at ISMIR. Posters that caught my eye:

- An Analysis of ISMIR Proceedings by Jin Ha Lee – she had to carry a big poster. A visualization of the authorship space

- ISMIR Tag Cloud Browser – really cool – http://asp.cp.jku.at/ismircloud/

- Meinard Mueller on the spot – where’s Verena? Tempo curves for performance analysis

- Matija: Field recordings of folk music and interviews – automatic segmentation and labeling of this data

- Thibault – music classification on timbral features – playlists – hmmm, what’s new here?

- Frieder Stolzenburg – Harmony perception =

- Musical instrument detector – this looks really neat. Can detect presence of 10 instruments – T

- University of Crete – Andre – rhythmic similarity of Turkish music

- Onset detection

- Dominikus – shades of music – dealing with heterogeneous music similarity – sub-song similarities – looks really neat

- Norberto – onset detection – an ensemble technique

- Lyric emotion detection – NLP, fuzzy clustering, Yajie Hu

- Peter Knees – Browsing Music Recommendation Networks – content-based similarity – 40% of songs are never recommended. Hubs!

- KDDI – Full-automatic DJ mixing system. Tempo adjustment – must see this.

- Hiding information in a music score? WTF?

- Improving Musical Concept detection – Taiwan University

- Laurent Oudre – template-based chord recognition – simple, fast

- Tag-aware spectral clustering of music items. Ioannis Karydis. 3 way relationships. Good paper, will see this.

- Chris Santoro, F0 estimation – MDCT –

- SOM of folksongs – a music visualization of folk music from 22 cultures

- Using XML-Formatted scores in real-time applications –
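The “Hubs!” remark on Peter Knees’s poster above refers to an effect seen in nearest-neighbor recommendation: a few songs show up in many recommendation lists while others show up in none. Here is a minimal sketch of how one might measure the share of never-recommended songs. The random 2-D "features", the choice of k and the helper names `knn_lists` and `never_recommended` are all assumptions for illustration, not anything taken from the poster.

```python
import math
import random

def knn_lists(points, k):
    """For each point, return the indices of its k nearest other points."""
    lists = []
    for i, p in enumerate(points):
        others = [j for j in range(len(points)) if j != i]
        others.sort(key=lambda j: math.dist(p, points[j]))
        lists.append(others[:k])
    return lists

def never_recommended(neighbor_lists):
    """Fraction of items that appear in nobody's recommendation list."""
    n = len(neighbor_lists)
    in_degree = [0] * n
    for recs in neighbor_lists:
        for j in recs:
            in_degree[j] += 1
    return sum(1 for d in in_degree if d == 0) / n

# Toy data: 200 "songs" as random points in a made-up 2-D feature space.
random.seed(0)
songs = [(random.gauss(0, 1), random.gauss(0, 1)) for _ in range(200)]
share = never_recommended(knn_lists(songs, k=3))
print(f"{share:.0%} of songs are never recommended")
```

Songs with a very high in-degree in the same graph would be the hubs.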

ISMIR Oral Session 1 – Knowledge on the Web

Oral Session 1A – Knowledge on the Web

Oral Session 1A – The first Oral Session of ISMIR 2009, chaired by Malcolm Slaney of Yahoo! Research

Integrating musicology’s heterogeneous data sources for better exploration

by David Bretherton, Daniel Alexander Smith, mc schraefel, Richard Polfreman, Mark Everist, Jeanice Brooks, and Joe Lambert

Project Link: http://www.mspace.fm/projects/musicspace

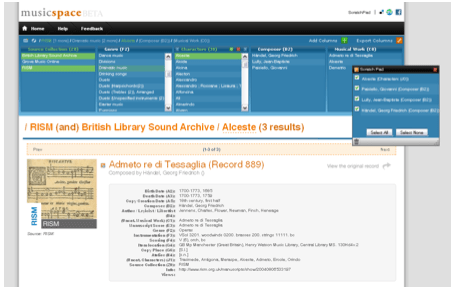

Musicologists consult many data sources; musicspace tries to integrate these resources. Here’s a screenshot of what they are trying to build.

Working with many public and private organizations (from the British Library to Naxos).

Motivation: Many musicologist queries are simply intractable because they require consulting several resources, they are multipart queries (requiring *pen and paper*), and the metadata or search options are not granular enough. Solution: integrate the sources, provide an optimally interactive UI, and increase granularity.

Difficulties: Many formats for data sources,

Strategies: Increase granularity of data by making annotations explicit. Generate metadata – fall back on human intelligence, inspired by Amazon Mechanical Turk – to clean and extract data.

User Interface – David demonstrated the faceted browser to satisfy a complex query (find all the composers that Monteverdi’s scribe was also the scribe for). I liked the dragging columns.

There are issues with linked databases from multiple sources – when one source goes away (for instance, for licensing reasons), the links break.

An Ecosystem for transparent music similarity in an open world

By Kurt Jacobson, Yves Raimond, Mark Sandler

Link: http://classical.catfishsmooth.net/slides/

Kurt was really fast and it was hard to take coherent notes, so here are my fragmented notes.

Assumption: you can use music similarity for recommendation. Music similarity:

Tversky suggested that you can’t really put similarity into a Euclidean space. It is not symmetric. He suggests a contrast model based on comparing features – analogous to ‘bag of features’.
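To make the asymmetry concrete, here is a minimal sketch of Tversky’s contrast model, in which similarity is the weighted count of shared features minus weighted counts of each item’s distinctive features. The feature sets, the weights and the use of plain set size as the salience function are assumptions chosen for illustration; they are not from Kurt’s talk.

```python
def tversky_contrast(a, b, theta=1.0, alpha=0.8, beta=0.2):
    """Contrast model: theta*|A & B| - alpha*|A - B| - beta*|B - A|.

    Set size stands in for the salience function; the weights are
    arbitrary illustrative values.
    """
    a, b = set(a), set(b)
    return theta * len(a & b) - alpha * len(a - b) - beta * len(b - a)

# Hypothetical feature "bags" for two songs.
song_a = {"piano", "slow", "minor key"}
song_b = {"piano", "slow", "minor key", "strings", "choir"}

print(tversky_contrast(song_a, song_b))  # how similar A is to B
print(tversky_contrast(song_b, song_a))  # how similar B is to A
```

Because alpha and beta differ, the two directions give different scores, which is exactly what a distance in a Euclidean space cannot express.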

What does music similarity really mean? We can’t say! It means different things in different contexts. Context is important. Make similarity an RDF concept. A hierarchy of similarity was too limiting. Similarity now has properties. We reify the similarity: how, how much, etc.
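As a rough sketch of what reifying similarity could look like, the snippet below turns a similarity into its own node with properties attached, rather than a bare link between two tracks. The class, the predicate names and the example values are hypothetical placeholders; they are not the actual vocabulary of the ontology Kurt presented.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class SimilarityStatement:
    subject: str   # first item being compared
    obj: str       # second item being compared
    method: str    # "how": the technique that produced the similarity
    weight: float  # "how much": strength of the similarity

def to_triples(stmt, node_id):
    """Flatten one statement into (subject, predicate, object) triples.

    The similarity itself becomes a node (node_id), so extra properties
    can hang off it. Predicate names here are made up for illustration.
    """
    return [
        (node_id, "type", "Similarity"),
        (node_id, "element", stmt.subject),
        (node_id, "element", stmt.obj),
        (node_id, "method", stmt.method),
        (node_id, "weight", stmt.weight),
    ]

stmt = SimilarityStatement("track:1", "track:2", "timbre", 0.82)
for triple in to_triples(stmt, "sim:1"):
    print(triple)
```

Two providers can then publish different statements about the same pair of tracks, each saying how the similarity was derived.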

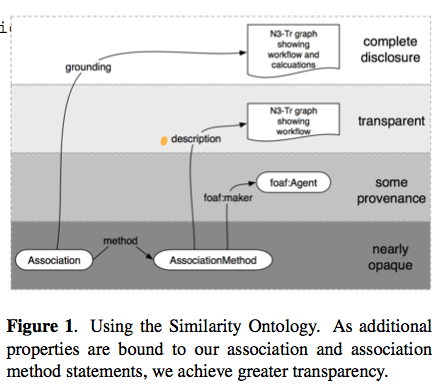

Association method with levels of transparency as follows:

Example implementation: http://classical.catfishsmooth.net/about/

Kurt demoed the system showing how he can create a hybrid query for timbral, key and composer influence similarity. It was a nice demo.

Future work: digitally signed similarity statements – neat idea.

Kurt challenges the Last.fms, BMATs and Echo Nests, and anyone else who provides similarity information: Why not publish MuSim?

Interfaces for document representation in digital music libraries

By Andrew Hankinson, Laurent Pugin, Ichiro Fujinaga

Goal: Designing user interfaces for displaying music scores

Part of the RISM project – music digitization and metadata

Bring together information from many resources.

Five Considerations

- Preservation of Document integrity – image gallery approach doesn’t give you a sense of the complete document

- Simultaneous viewing of parts – for example, the tenor and bass parts may be separated in the work, and the reader should be able to see both without window juggling.

- Provide multiple page resolutions – zooming is important

- Optimized page loading

- Image + Metadata should be presented simultaneously

Current Work

Implemented a prototype viewer that takes the 5 considerations into account. Andy gave a demo of the prototype – seems to be quite an effective tool for displaying and browsing music scores.

A good talk, well organized and presented – nice demo.

Oral Session 1B – Performance Recognition

Oral Session 1B, chaired by Simon Dixon (Queen Mary)

Body movement in music information retrieval

by Rolf Inge Godøy and Alexander Refsum Jensenius

Abstract: We can see many and strong links between music and human body movement in musical performance, in dance, and in the variety of movements that people make in listening situations. There is evidence that sensations of human body movement are integral to music as such, and that sensations of movement are efficient carriers of information about style, genre, expression, and emotions. The challenge now in MIR is to develop means for the extraction and representation of movement-inducing cues from musical sound, as well as to develop possibilities for using body movement as input to search and navigation interfaces in MIR.

Links between body movement and music everywhere. Performers, listeners, etc. Movement is integral to music experience. Suggest that studying music-related body movement can help our understanding of music.

One example: http://www.youtube.com/watch?v=MxCuXGCR8TE

Relate music sound to subject images.

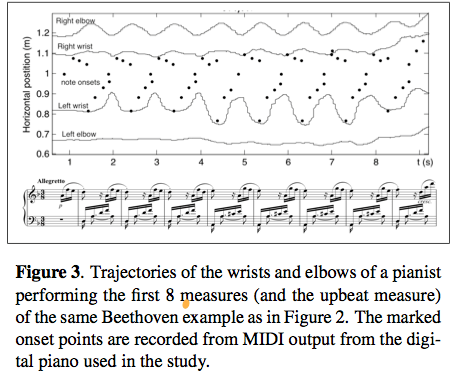

Looking at performance of pianists – creates a motiongram – and a motion capture.

Listeners have lots of knowledge about sound producing motions/actions. These motions are integral to music perception. There’s a constant mental model of the sound/action. Example: Air guitar vs. Real Guitar

This was a thought-provoking talk. I wonder how the music-action model works when the music controller is no longer acoustic – do we model music motions when using a laptop as our instrument?

Who is who in the end? Recognizing pianists by their final ritardandi

by Maarten Grachten and Gerhard Widmer

Some examples: Harasiewicz vs. Ashkenazy – similar to how different people walk down the stairs.

Why? Structure asks for it or … they just feel like it. How much of this is specific to the performer? Fixed effects: transmitting moods, clarifying musical structure. Transient effects: spontaneous decisions, motor noise, performance errors.

Study – looking at piece specific vs. performance specific tempo variation. Results: Global tempo from the piece, local variation is performance specific.

Method:

- Define a performance norm

- Determine where performers significantly deviate

- Model the deviations

- Classify performances based on the model

In actuality, use the average performance as a norm. Note also there may be errors in annotations that have to be accounted for.
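The four steps above can be sketched in a few lines. This is only an illustration under loud assumptions: the tempo curves are made up, the significance test in step two is skipped, and a simple nearest-mean classifier stands in for whatever model the authors actually used.

```python
import math
from statistics import mean

def performance_norm(curves):
    """Step 1: use the average performance as the norm, position by position."""
    return [mean(values) for values in zip(*curves)]

def deviations(curve, norm):
    """Step 2 (simplified): deviation from the norm at every position."""
    return [c - n for c, n in zip(curve, norm)]

def fit_profiles(labelled_curves, norm):
    """Step 3 (stand-in model): mean deviation curve per pianist."""
    by_pianist = {}
    for pianist, curve in labelled_curves:
        by_pianist.setdefault(pianist, []).append(deviations(curve, norm))
    return {p: [mean(v) for v in zip(*devs)] for p, devs in by_pianist.items()}

def classify(curve, norm, profiles):
    """Step 4: pick the pianist whose profile is closest to this deviation."""
    dev = deviations(curve, norm)
    return min(profiles, key=lambda p: math.dist(dev, profiles[p]))

# Made-up tempo curves (beats per minute) over a four-note final ritardando.
training = [
    ("pianist A", [100, 90, 70, 50]),
    ("pianist A", [102, 92, 72, 48]),
    ("pianist B", [100, 80, 65, 55]),
    ("pianist B", [98, 78, 63, 57]),
]
norm = performance_norm([curve for _, curve in training])
profiles = fit_profiles(training, norm)
print(classify([101, 91, 71, 49], norm, profiles))
```

With real recordings the deviation curves overlap far more than in this toy data, which is consistent with the modest accuracy reported in the talk.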

Here are the deviations from the performance norm for various performers.

Accuracy ranges from 65.31% down to 43.53% (50% is the random baseline). The results are not overwhelming, but still interesting considering the simplistic model.

Interesting questions about students and schools and styles that may influence results.

All in all, an interesting talk and very clearly presented.

ISMIR Keynote – Ten Years of ISMIR

Posted in Music on October 26, 2009

Ten years of ISMIR – Reflections on challenges and opportunities

Three founding fathers of ISMIR: J. Stephen Downie, Donald Byrd and Tim Crawford

Prehistoric background: Tim Crawford described the early challenges in finding music and the difficulties of using computers in the early days. Stephen talked about his early days as a flutist, his personal challenges in finding music from incipits, and the strong bias for retrieval and evaluation instilled by his advisor.

In 1999, Don Byrd, working with Bruce Croft at UMass, and Tim, working at King’s College London, got a grant to look at music information retrieval.

1999 – two conferences – ACM SIGIR + ACM Digital Libraries. Stephen organized an exploratory workshop on music information retrieval – that’s where Stephen and Don met and proposed ISMIR. 13 August 1999 – ISMIR was born (in a bar in Berkeley, CA).

ISMIR timeline:

- 1999 – MIR Workshop

- 2000 – First ISMIR – Plymouth, MA – 88 attendees, 11 different countries, 9 invited talks, 10 papers, 16 posters. Highlights: Beth Logan presents MFCCs, Tzanetakis and Cook: Marsyas, Foote: ARTHUR paper

- 2002 – switch symposium to conference – to make it easier to get funding

- Growing collaborations. 50% of all papers are from 3 or 4 authors

- 2009 – ISMIR becomes the International Society for Music Information Retrieval

Evaluation History

- 1999 TREC-like evaluation proposed

- 2001 Bloomington meeting – manifesto for content providers to supply data

- 2002 / 2003 – funding from the Mellon Foundation

- 2004 – Barcelona – MTG created the audio description contest

- 2005 – First MIREX

- MIREX Breakdown

- 469 algorithm runs

- 129 – train/test machine learning tests

- 139 search tasks

- 22 unique tasks

- 16 tasks in audio domain

- 3 hybrid tasks

- No symbolic tasks in 2009

ISMIR: External Success Factors – Audio compression, growth of online audio, standards like MPEG-7, dot.com bubble (Google for music)

ISMIR: Internal Success Factors – Communications resources – the music-ir@ircam.fr mailing list and collected proceedings. Diversity in backgrounds in the steering committee, quality in programme chairs and committees. Policy of inclusiveness – not premised on high rejection rates, multiple avenues for presentation. General support for the Audio Description Contest and MIREX.

Five Key Challenges for ISMIR

- Embracing Users – engage more with potential user-communities (performing musicians, film makers, musicologists, sound archivists, music educators and music enthusiasts of all types)

- Digging deeper into music itself – find the ‘music’ within the signal, move beyond simple timbral approaches, move beyond single features to create hybrid musically principled features, deeper understanding of what features mean musically, hybrid symbol and audio systems.

- Increasing musical diversity – widen our horizons beyond western popular music

- Rebalancing our music portfolio – Use audio, ‘symbolic’ and (catalog) metadata together

- Developing comprehensive MIR systems: work towards complete, usable, scalable systems, even if they are not perfect. In the text IR world, prototype systems have been pivotal (SMART, Managing Gigabytes, Terrier)

The Grand Challenge: Complete Systems

- Something for people to use

- Engage with our potential user-community

- Users and humans: become more aware of how humans hear music, listen to music, respond to it and think about it.

- New discipline of music informatics based in higher-level (human) query rather than low-level feature-matching

Challenges:

- Need to find a way to encourage and reward development and improvement – to move things to the next level – problem is it is hard to publish something that is built on previous work but has no novel contribution

- Academic vs. industrial priorities

- Music retrieval vs. multimedia retrieval – we have a lot to learn from conferences like ACM MM.

IMPACT! – is the new academic Rock’n’roll – to get funding, must show impact, perhaps more important than publishing.

Live from ISMIR

This week I’m attending ISMIR – the 10th International Society for Music Information Retrieval Conference being held in Kobe, Japan. At this conference researchers gather to advance the state of the art in music information retrieval. It is a varied bunch including librarians, musicologists, and experts in signal processing, machine learning, text IR, visualization and HCI. I’ll be trying to blog the various talks and poster sessions throughout the conference, but at some point the jetlag will kick in, making it hard for me to think, let alone type. It’s 9AM – the keynote is starting …

Opening Remarks

Masataka and Ichiro give the opening remarks. First some stats:

- 286 attendees from 28 countries

- 212 submissions from 29 countries

- 123 papers (58%) accepted

- 214 reviewers
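A quick check of the acceptance rate quoted in the stats above, using only the two numbers from the list (the variable names are just for illustration):

```python
submissions = 212
accepted = 123

# Share of submitted papers that were accepted.
rate = accepted / submissions
print(f"Acceptance rate: {rate:.0%}")  # prints "Acceptance rate: 58%"
```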

Tutorial Day at ISMIR

Posted in events, fun, Music, music information retrieval on October 26, 2009

Monday was tutorial day. After months of preparation, Justin and I finally got to present our material. I was a bit worried that our timing on the talk would be way out of whack and we’d have to self-edit on the fly – but all of our time estimates seemed to be right on the money. Whew! The tutorial was well attended with 80 or so registered – and lots of good questions at the end. All in all I was pleased at how it turned out. Here’s Justin talking about Echo Nest features:

After the tutorial a bunch of us went into town for dinner. 15 of us managed to find a restaurant that could accommodate us – and after lots of miming and pointing at pictures on the menu we managed to get a good meal. Lots of fun.

Walking around Kobe

My tutorial co-presenter Justin and I spent a few hours walking around the center of Kobe before we did our final tutorial preparation. I had a great time; Kobe is really a fun city. It was great to see the “Welcome to Kobe” ISMIR 2009 signs on the Port Liner (the monorail that goes from the main train station to the conference center).

I had sushi in a conveyor-belt sushi restaurant. Justin patiently walked me through the protocols and condiments. I had a really fun time. Being in Japan is really like being in the future.

My first moments in Kobe – ISMIR 2009

After 26 hours of travel from Nashua to Kobe, Japan via Boston, NYC, Tokyo and Osaka, I arrived to find an extremely comfortable hotel at the conference center:

The conference hotel is the Portopia Hotel. It is quite nice. Here’s the lobby:

And the tower:

I went for a walk this morning to find an American-sized cup of coffee (24 oz is standard issue at Dunkin’s). This is the closest thing I could find. Looks like I’ll need another source of caffeine on this trip:

Thanks to Masataka, Ichiro and the rest of the conference committee for providing such a wonderful venue for ISMIR 2009.

The last quiet moment ….

Posted in fun on October 23, 2009

Using Visualizations for Music Discovery

Posted in code, data, events, fun, Music, music information retrieval, research, The Echo Nest, visualization on October 22, 2009

On Monday, Justin and I will present our magnum opus – a three-hour-long tutorial entitled: Using Visualizations for Music Discovery. In this talk we look at the various techniques that can be used for visualization of music. We include a survey of the many existing visualizations of music, and we talk about techniques and algorithms for creating visualizations. My hope is that this talk will be inspirational as well as educational, spawning new music discovery visualizations. I’ve uploaded a PDF of our slide deck to slideshare. It’s a big deck, filled with examples, but note that large as it is, the PDF isn’t the whole talk. The tutorial will include many demonstrations and videos of visualizations that just are not practical to include in a PDF. If you have the chance, be sure to check out the tutorial at ISMIR in Kobe on the 26th.