Oral Session 1A – Knowledge on the Web

Oral Session 1A – The first Oral Session of ISMIR 2009, chaired by Malcolm Slaney of Yahoo! Research

Integrating musicology’s heterogeneous data sources for better exploration

by David Bretherton, Daniel Alexander Smith, mc schraefel, Richard Polfreman, Mark Everist, Jeanice Brooks, and Joe Lambert

Project Link: http://www.mspace.fm/projects/musicspace

Musicologists consult many data sources; musicspace tries to integrate these resources. Here’s a screenshot of what they are trying to build.

Working with many public and private organizations (from the British Library to Naxos).

Motivation: Many musicologist queries are simply intractable because they require consulting several resources, they are multipart queries (requiring *pen and paper*), or the metadata or search options lack granularity. Solution: integrate sources, provide an optimally interactive UI, and increase granularity.

Difficulties: Many formats for data sources.

Strategies: Increase granularity of data by making annotations explicit. Generate metadata – fall back on human intelligence, inspired by Amazon Mechanical Turk – to clean and extract data.

User Interface – David demonstrated the faceted browser to satisfy a complex query (find all the composers that Monteverdi’s scribe was also the scribe for). I liked the dragging columns.
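To make the multipart nature of that query concrete, here is a minimal Python sketch of the two-step lookup a faceted browser performs behind the scenes. The records, field names, and the function `composers_sharing_scribe` are all made-up placeholders for illustration; this is not musicSPACE code or data.

```python
# Toy records (hypothetical): each manuscript links one composer to one scribe.
manuscripts = [
    {"composer": "Monteverdi", "scribe": "scribe_1"},
    {"composer": "composer_B", "scribe": "scribe_1"},
    {"composer": "composer_C", "scribe": "scribe_2"},
    {"composer": "composer_D", "scribe": "scribe_1"},
]


def composers_sharing_scribe(records, composer):
    """Step 1: find the scribes who copied `composer`'s works.
    Step 2: find every other composer those scribes also copied."""
    scribes = {r["scribe"] for r in records if r["composer"] == composer}
    others = {
        r["composer"]
        for r in records
        if r["scribe"] in scribes and r["composer"] != composer
    }
    return sorted(others)


result = composers_sharing_scribe(manuscripts, "Monteverdi")
```

Done by hand across separate catalogues, step 1 and step 2 are the "pen and paper" part; with the sources integrated it becomes a single chained selection.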

There are issues with linked databases from multiple sources – when one source goes away (for instance, for licensing reasons), the links break.

An Ecosystem for transparent music similarity in an open world

By Kurt Jacobson, Yves Raimond, Mark Sandler

Link http://classical.catfishsmooth.net/slides/

Kurt was really fast and it was hard to take coherent notes, so here are my fragmented notes.

Assumption: you can use music similarity for recommendation. Music similarity:

Tversky suggested that you can’t really put similarity into a Euclidean space, because it is not symmetric. He suggests a contrast model based on comparing features – analogous to ‘bag of features’.

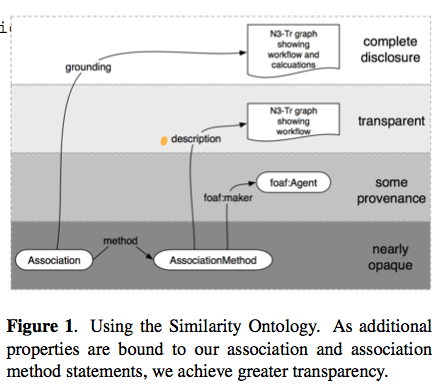
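As a rough illustration of the contrast model, here is a small Python sketch. It assumes the usual set-based form, where similarity is a weighted count of shared features minus weighted counts of the features unique to each item; the weights `theta`, `alpha`, `beta` and the feature names are my own placeholders, not values from the talk.

```python
def tversky_contrast(a, b, theta=1.0, alpha=0.5, beta=0.5):
    """Contrast-model similarity of feature sets a and b.

    theta weights the shared features, alpha the features only in a,
    and beta the features only in b. With alpha != beta the measure
    is asymmetric: sim(a, b) != sim(b, a) in general.
    """
    a, b = set(a), set(b)
    return theta * len(a & b) - alpha * len(a - b) - beta * len(b - a)


# Hypothetical feature sets for two tracks.
track_x = {"piano", "minor_key", "slow"}
track_y = {"piano"}

x_to_y = tversky_contrast(track_x, track_y, alpha=0.8, beta=0.2)
y_to_x = tversky_contrast(track_y, track_x, alpha=0.8, beta=0.2)
```

Swapping the arguments changes the score whenever `alpha` and `beta` differ, which is exactly the asymmetry that a Euclidean distance cannot express.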

What does music similarity really mean? We can’t say! It means different things in different contexts. Context is important. Make similarity an RDF concept. A hierarchy of similarity was too limiting. Similarity now has properties. We reify the similarity: how, how much, etc.
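Here is a minimal sketch of what reifying a similarity statement might look like, using plain Python tuples as triples rather than a real RDF library. The `ex:` property names are hypothetical stand-ins and are not the actual MuSim vocabulary; the point is only that the similarity becomes a node of its own that can carry a method and a weight.

```python
from itertools import count

_ids = count(1)


def reify_similarity(subject, obj, method, weight):
    """Describe one similarity statement as its own node with properties."""
    node = f"_:sim{next(_ids)}"  # blank-node style identifier
    return [
        (node, "rdf:type", "ex:Similarity"),
        (node, "ex:subject", subject),
        (node, "ex:object", obj),
        (node, "ex:method", method),   # the "how"
        (node, "ex:weight", weight),   # the "how much"
    ]


def similar_to(triples, subject, method):
    """Return (object, weight) pairs asserted for `subject` by one method."""
    nodes = {}
    for s, p, o in triples:
        nodes.setdefault(s, {})[p] = o
    return [
        (props["ex:object"], props["ex:weight"])
        for props in nodes.values()
        if props.get("ex:subject") == subject and props.get("ex:method") == method
    ]


graph = []
graph += reify_similarity("ex:track_a", "ex:track_b", "ex:timbre", 0.8)
graph += reify_similarity("ex:track_a", "ex:track_c", "ex:key", 0.6)
timbre_matches = similar_to(graph, "ex:track_a", "ex:timbre")
```

Because each statement records its method, a consumer can filter or combine similarities by context instead of trusting a single opaque score.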

Association method with levels of transparency as follows:

Example implementation: http://classical.catfishsmooth.net/about/

Kurt demoed the system showing how he can create a hybrid query for timbral, key and composer influence similarity. It was a nice demo.

Future work: digitally signed similarity statements – neat idea.

Kurt challenges the Last.fm, BMATs and the Echo Nests and anyone who provides similarity information: Why not publish MuSim?

Interfaces for document representation in digital music libraries

By Andrew Hankinson, Laurent Pugin, Ichiro Fujinaga

Goal: Designing user interfaces for displaying music scores

Part of the RISM project – music digitization and metadata

Bring together information for many resources.

Five Considerations

- Preservation of Document integrity – image gallery approach doesn’t give you a sense of the complete document

- Simultaneous viewing of parts – for example, the tenor and bass may be separated in the work; they should be viewable together without window juggling.

- Provide multiple page resolutions – zooming is important

- Optimized page loading

- Image + Metadata should be presented simultaneously

Current Work

Implemented a prototype viewer that takes the 5 considerations into account. Andy gave a demo of the prototype – seems to be quite an effective tool for displaying and browsing music scores:

A good talk, well organized and presented – nice demo.

Oral Session 1B – Performance Recognition

Oral Session 1B, chaired by Simon Dixon (Queen Mary)

Body movement in music information retrieval

by Rolf Inge Godøy and Alexander Refsum Jensenius

Abstract: We can see many and strong links between music and human body movement in musical performance, in dance, and in the variety of movements that people make in listening situations. There is evidence that sensations of human body movement are integral to music as such, and that sensations of movement are efficient carriers of information about style, genre, expression, and emotions. The challenge now in MIR is to develop means for the extraction and representation of movement-inducing cues from musical sound, as well as to develop possibilities for using body movement as input to search and navigation interfaces in MIR.

Links between body movement and music everywhere. Performers, listeners, etc. Movement is integral to music experience. Suggest that studying music-related body movement can help our understanding of music.

One example: http://www.youtube.com/watch?v=MxCuXGCR8TE

Relate music sound to subject images.

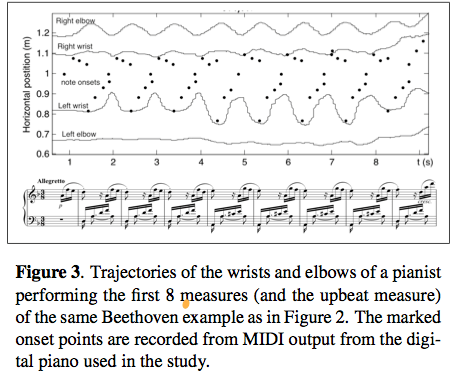

Looking at performance of pianists – creates a motiongram – and a motion capture.

Listeners have lots of knowledge about sound producing motions/actions. These motions are integral to music perception. There’s a constant mental model of the sound/action. Example: Air guitar vs. Real Guitar

This was a thought-provoking talk. I wonder how the music-action model works when the music controller is no longer acoustic – do we model music motions when using a laptop as our instrument?

Who is who in the end? Recognizing pianists by their final ritardandi

by Maarten Grachten and Gerhard Widmer

Some examples: Harasiewicz vs. Ashkenazy – similar to how different people walk down the stairs.

Why? Structure asks for it or … they just feel like it. How much of this is specific to the performer? Fixed effects: transmitting moods, clarifying musical structure. Transient effects: Spontaneous decisions, motor noise, performance errors.

Study – looking at piece specific vs. performance specific tempo variation. Results: Global tempo from the piece, local variation is performance specific.

Method:

- Define a performance norm

- Determine where performers significantly deviate

- Model the deviations

- Classify performances based on the model

In actuality, use the average performance as a norm. Note also there may be errors in annotations that have to be accounted for.
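The steps above can be sketched roughly as follows. This is only a toy outline: the tempo curves are invented numbers, the significance test for deviations is skipped, and the nearest-centroid classifier is a simple stand-in I picked for illustration, not the model used in the paper.

```python
from statistics import mean


def performance_norm(curves):
    """Point-wise average of equally long tempo curves (the 'norm')."""
    return [mean(values) for values in zip(*curves)]


def deviations(curve, norm):
    """How far one performance departs from the norm at each position."""
    return [c - n for c, n in zip(curve, norm)]


def train_centroids(labelled_curves, norm):
    """Model each pianist by the average of their deviation curves."""
    centroids = {}
    for pianist, curves in labelled_curves.items():
        devs = [deviations(c, norm) for c in curves]
        centroids[pianist] = [mean(v) for v in zip(*devs)]
    return centroids


def classify(curve, norm, centroids):
    """Assign a performance to the pianist with the closest deviation profile."""
    dev = deviations(curve, norm)

    def squared_distance(centroid):
        return sum((d - c) ** 2 for d, c in zip(dev, centroid))

    return min(centroids, key=lambda p: squared_distance(centroids[p]))


# Invented tempo curves (beats per minute) over a final ritardando.
training = {
    "pianist_A": [[100, 90, 70, 50], [102, 88, 72, 48]],
    "pianist_B": [[100, 95, 85, 50], [98, 96, 86, 52]],
}
all_curves = [c for curves in training.values() for c in curves]
norm = performance_norm(all_curves)
centroids = train_centroids(training, norm)
guess = classify([101, 89, 71, 49], norm, centroids)
```

Subtracting the norm first removes what the piece itself dictates, so the classifier only sees the performer-specific part of the slowdown.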

Here are the deviations from the performance norm for various performers.

Accuracy ranges from 65.31% down to 43.53% (50% is the random baseline). Results are not overwhelming, but still interesting considering the simplistic model.

Interesting questions about students and schools and styles that may influence results.

All in all, an interesting talk and very clearly presented.

#1 by Kurt Jacobson on October 28, 2009 - 10:58 pm

Hi Paul,

Your tireless blogging is an inspiration to us all :-)

I’ve made a brief blog post that I hope explains the MuSim idea in a nice short digestible bit:

http://kurtisrandom.blogspot.com/2009/10/more-on-similarity-ontology.html

-Kurt J