Paul

I'm the Director of Developer Community at The Echo Nest, a research-focused music intelligence startup that provides music information services to developers and partners through a data mining and machine listening platform. I am especially interested in hybrid music recommenders and using visualizations to aid music discovery.

10 Awesome things about ISMIR 2009

ISMIR 2009 is over – but it will not be soon forgotten. It was a wonderful event, with seemingly flawless execution. Some of my favorite things about the conference this year:

- The proceedings – distributed on a USB stick hidden in a pen that has a laser! And the battery for the laser recharges when you plug the USB stick into your computer. How awesome is that!? (The printed version is very nice too, but it doesn’t have a laser).

- The hotel – very luxurious while at the same time, very affordable. I had a wonderful view of Kobe, two very comfortable beds and a toilet with more controls than the dashboard on my first car.

- The presentation room – very comfortable with tables for those sitting towards the front, great audio and video and plenty of power and wireless for all.

- The banquet – held in the most beautiful room in the world with very exciting Taiko drumming as entertainment.

- The details – the organizing team seems to have paid attention to every little detail and request, from the numbers taped on the floor so that the 30 folks giving their 30-second pitches during poster madness would know just where to stand, to the signs on the coffeepots telling you that the coffee was being made, to the signs on the train to the conference center welcoming us to ISMIR 2009. It seems like no detail was left to chance.

- The food – our stomachs were kept quite happy – with sweet breads and pastries every morning, bento boxes for lunch, and coffee, juices, waters, and the mysterious beverage ‘black’ that I didn’t dare to try. My absolute favorite meal was the box lunch during the tutorial day – it was a box with a string – when you are ready to eat you give the string a sharp tug – wait a few minutes for the magic to do its job and then you open the box and eat a piping hot bowl of noodles and vegetables. Almost as cool as the laser-augmented proceedings.

- The city – Kobe is a really interesting city – I spent a few days walking around and was fascinated by it all. I really felt like I was walking around in the future. It was extremely clean, and the people were very polite, friendly and always willing to help. Going into some parts of town was sensory overload: the colors, sounds, smells and sights were overwhelming – it was really fun.

- The Keynote – music making robots – what more is there to say.

- The Program – the quality of papers was very high – there were some outstanding posters and oral presentations. Many thanks to George and Keiji for organizing the reviews to create a great program. (More on my favorite posters and papers in an upcoming post)

- f(mir) – The student-organized workshop looked at what MIR research would look like in 10, 20 or even 50 years (basically after I’m dead and gone). The presentations in this workshop were quite provocative – well done students!

I write this post as I sit in the airport in Osaka waiting for my flight home. I’m tired, but very energized to explore the many new ideas that I encountered at the conference. It was a great week. I want to extend my personal thanks to Professor Fujinaga and Professor Goto and the rest of the conference committee for putting together a wonderful week.

ISMIR Oral Session – Sociology and Ethnomusicology

Session Title: Sociology & Ethnomusicology

Session Chair: Frans Wiering (Universiteit Utrecht, Netherlands)

Exploring Social Music Behavior: An Investigation of Music Selection at Parties

Sally Jo Cunningham and David M. Nichols

Abstract: This paper builds an understanding how music is currently listened to by small (fewer than 10 individuals) to medium-sized (10 to 40 individuals) gatherings of people—how songs are chosen for playing, how the music fits in with other activities of group members, who supplies the music, the hardware/software that supports song selection and presentation. This fine-grained context emerges from a qualitative analysis of a rich set of participant observations and interviews focusing on the selection of songs to play at social gatherings. We suggest features for software to support music playing at parties.

Notes:

- What happens at parties, especially informal small and medium sized parties

- Observations and interviews – 43 party observations

- Analyzing the data: key events that drive the activity, patterns of behavior, social roles

- Observations

- music selection cannot require fine motor movements (because people are drinking and holding their drinks) (Drinking dyslexia)

- Need for large displays

- Party collection from different donors, sources, media

- Pre-party: host collection

- As party progresses: additional contributions (ipods, thumbdrives, etc)

- Challenge: bring together into a single browseable searchable collection

- Roles: Host, guest, guest of honor. Host provides initial collection, party playlist. High stress ‘guilty pleasures’

- Guests: may contribute, could insult the host, may modify the party playlist if they receive an invitation from the host. Voting jukeboxes may help

- Guest of Honor had ultimate control

- insertion into playlist, looking for specific song, type of song.

- Delete songs from playlist without disrupting the party

- Setting and maintaining atmosphere

- softer to start, move to faster and louder, ending with chilling out

- What next: other situations, long car ride

- Questions: Spotify turned into the best party

Great study, great presentation.

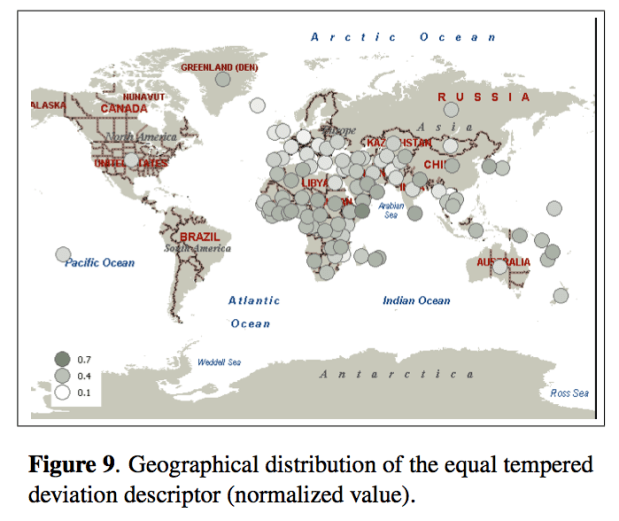

Music and Geography: Content Description of Musical Audio from Different Parts of the World

Emilia Gómez, Martín Haro and Perfecto Herrera

Abstract: This paper analyses how audio features related to different musical facets can be useful for the comparative analysis and classification of music from diverse parts of the world. The music collection under study gathers around 6,000 pieces, including traditional music from different geographical zones and countries, as well as a varied set of Western musical styles. We achieve promising results when trying to automatically distinguish music from Western and non-Western traditions. A 86.68% of accuracy is obtained using only 23 audio features, which are representative of distinct musical facets (timbre, tonality, rhythm), indicating their complementarity for music description. We also analyze the relative performance of the different facets and the capability of various descriptors to identify certain types of music. We finally present some results on the relationship between geographical location and musical features in terms of extracted descriptors. All the reported outcomes demonstrate that automatic description of audio signals together with data mining techniques provide means to characterize huge music collections from different traditions, complementing ethnomusicological manual analysis and providing a link between music and geography.

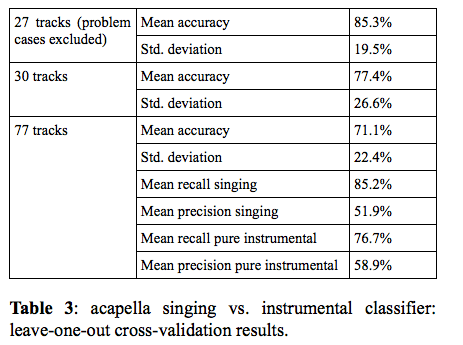

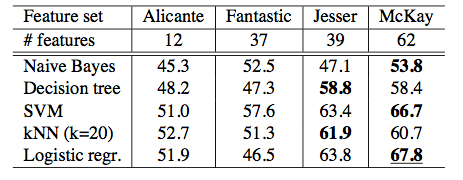

You Call That Singing? Ensemble Classification for Multi-Cultural Collections of Music Recordings

Polina Proutskova and Michael Casey

Abstract: The wide range of vocal styles, musical textures and recording techniques found in ethnomusicological field recordings leads us to consider the problem of automatically labeling the content to know whether a recording is a song or instrumental work. Furthermore, if it is a song, we are interested in labeling aspects of the vocal texture: e.g. solo, choral, acapella or singing with instruments. We present evidence to suggest that automatic annotation is feasible for recorded collections exhibiting a wide range of recording techniques and representing musical cultures from around the world. Our experiments used the Alan Lomax Cantometrics training tapes data set, to encourage future comparative evaluations. Experiments were conducted with a labeled subset consisting of several hundred tracks, annotated at the track and frame levels, as acapella singing, singing plus instruments or instruments only. We trained frame-by-frame SVM classifiers using MFCC features on positive and negative exemplars for two tasks: per-frame labeling of singing and acapella singing. In a further experiment, the frame-by-frame classifier outputs were integrated to estimate the predominant content of whole tracks. Our results show that frame-by-frame classifiers achieved 71% frame accuracy and whole track classifier integration achieved 88% accuracy. We conclude with an analysis of classifier errors suggesting avenues for developing more robust features and classifier strategies for large ethnographically diverse collections.
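The abstract does not say how the frame-level outputs were integrated into a whole-track label. As a minimal sketch, assuming a simple majority vote over per-frame labels (the function name `integrate_frames` and the label strings are hypothetical, not taken from the paper), the idea might look like this:

```python
from collections import Counter


def integrate_frames(frame_labels):
    """Return the most common per-frame label as the track-level label.

    Majority voting is an assumption made for illustration; the abstract
    does not describe the paper's actual integration method.
    """
    if not frame_labels:
        raise ValueError("need at least one frame label")
    counts = Counter(frame_labels)
    label, _ = counts.most_common(1)[0]
    return label


# Hypothetical per-frame classifier output for one track.
frames = ["singing", "singing", "instrumental", "singing", "instrumental"]
track_label = integrate_frames(frames)
```

A vote like this tolerates a fair number of individual frame errors, which may be one reason the reported track-level accuracy (88%) is higher than the frame-level accuracy (71%).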

ISMIR Oral Session – Folk Songs

Session Title: Folk songs

Session Chair: Remco C. Veltkamp (Universiteit Utrecht, Netherlands)

Global Feature Versus Event Models for Folk Song Classification

Ruben Hillewaere, Bernard Manderick and Darrell Conklin

Abstract: Music classification has been widely investigated in the past few years using a variety of machine learning approaches. In this study, a corpus of 3367 folk songs, divided into six geographic regions, has been created and is used to evaluate two popular yet contrasting methods for symbolic melody classification. For the task of folk song classification, a global feature approach, which summarizes a melody as a feature vector, is outperformed by an event model of abstract event features. The best accuracy obtained on the folk song corpus was achieved with an ensemble of event models. These results indicate that the event model should be the default model of choice for folk song classification.

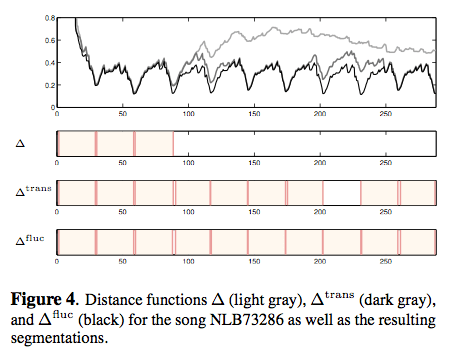

Robust Segmentation and Annotation of Folk Song Recordings

Meinard Mueller, Peter Grosche and Frans Wiering

Abstract: Even though folk songs have been passed down mainly by oral tradition, most musicologists study the relation between folk songs on the basis of score-based transcriptions. Due to the complexity of audio recordings, once having the transcriptions, the original recorded tunes are often no longer studied in the actual folk song research though they still may contain valuable information. In this paper, we introduce an automated approach for segmenting folk song recordings into its constituent stanzas, which can then be made accessible to folk song researchers by means of suitable visualization, searching, and navigation interfaces. Performed by elderly non-professional singers, the main challenge with the recordings is that most singers have serious problems with the intonation, fluctuating with their voices even over several semitones throughout a song. Using a combination of robust audio features along with various cleaning and audio matching strategies, our approach yields accurate segmentations even in the presence of strong deviations.

Notes: Interesting talk (as always) by Meinard about handling the real-world problems that come with folk song audio recordings.

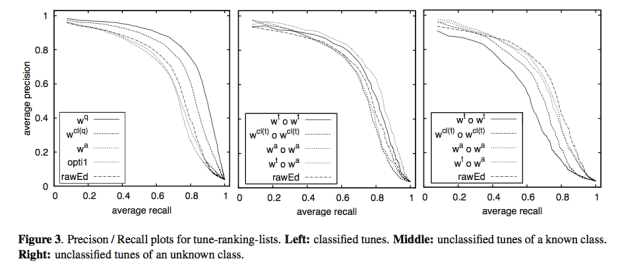

Supporting Folk-Song Research by Automatic Metric Learning and Ranking

Korinna Bade, Andreas Nurnberger, Sebastian Stober, Jörg Garbers and Frans Wiering

Abstract: In folk song research, appropriate similarity measures can be of great help, e.g. for classification of new tunes. Several measures have been developed so far. However, a particular musicological way of classifying songs is usually not directly reflected by just a single one of these measures. We show how a weighted linear combination of different basic similarity measures can be automatically adapted to a specific retrieval task by learning this metric based on a special type of constraints. Further, we describe how these constraints are derived from information provided by experts. In experiments on a folk song database, we show that the proposed approach outperforms the underlying basic similarity measures and study the effect of different levels of adaptation on the performance of the retrieval system.
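To make the weighted-combination idea concrete, here is a minimal sketch. It assumes two basic similarity measures per tune and uses a perceptron-style update driven by relative constraints ("tune X should rank above tune Y"). The update rule, the function names and the scores are all illustrative assumptions; the abstract does not specify the paper's learning procedure.

```python
def combined_similarity(scores, weights):
    """Weighted linear combination of basic similarity scores."""
    return sum(w * s for w, s in zip(weights, scores))


def rank(candidates, weights):
    """Sort candidate names by combined similarity to the query, best first."""
    return sorted(
        candidates,
        key=lambda name: combined_similarity(candidates[name], weights),
        reverse=True,
    )


def adapt(weights, constraints, candidates, rate=0.1, epochs=20):
    """Perceptron-style weight update (an assumption, not the paper's method).

    Each constraint (closer, farther) says an expert judged `closer` to be
    more similar to the query than `farther`.
    """
    w = list(weights)
    for _ in range(epochs):
        for closer, farther in constraints:
            a, b = candidates[closer], candidates[farther]
            if combined_similarity(a, w) <= combined_similarity(b, w):
                # Shift weight toward the measures that agree with the expert,
                # keeping every weight non-negative.
                w = [max(0.0, wi + rate * (ai - bi)) for wi, ai, bi in zip(w, a, b)]
    return w


# Hypothetical scores from two basic measures for each candidate tune.
candidates = {"tune_a": [0.9, 0.3], "tune_b": [0.4, 0.7]}
before = rank(candidates, [0.5, 0.5])
learned = adapt([0.5, 0.5], [("tune_b", "tune_a")], candidates)
after = rank(candidates, learned)
```

With equal weights `tune_a` ranks first; after adapting to the single expert constraint, the second measure gains weight and `tune_b` moves to the top.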

ISMIR 2009 – The Future of MIR

Posted in ismir, Music, recommendation on October 29, 2009

This year ISMIR concludes with the 1st Workshop on the Future of MIR. The workshop is organized by students who are indeed the future of MIR.

09:00-10:00 Special Session: 1st Workshop on the Future of MIR

MIR, where we are, where we are going

Session Chair: Amélie Anglade, Program Chair of f(MIR)

Meaningful Music Retrieval

Frans Wiering – [pdf]

Notes

- Some unfortunate tendencies: anatomical view of music – a dead body that we do autopsies on; time is the loser. Traditional production-oriented/

- Measure of similarity: relevance, surprise

- Few interesting applications for end-users

- bad fit to present-day musicological themes

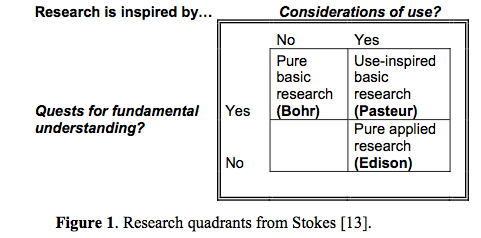

- We are in the world of ‘pure applied research’ – no truth interdisciplinary between music domain knowledge and computer science.

- Music is meaningful (and the underlying personal motivation of most MIR researchers).

- Meaning in musicology – traditionally a taboo subject

- Subjectivity: an individual’s disposition to engage in social and cultural interactions

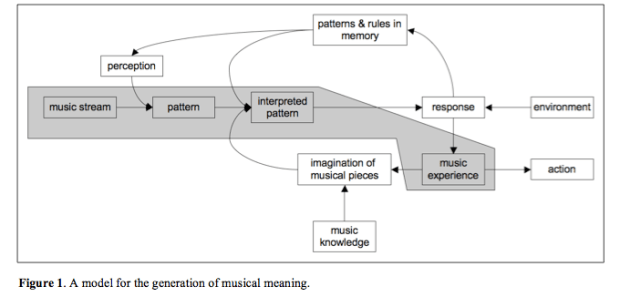

- Meaning generation process – we have a long-term memory for music –

- Can musical meaning provide the ‘big story line’ for MIR?

The Discipline Formerly Known As MIR

Perfecto Herrera, Joan Serrà, Cyril Laurier, Enric Guaus, Emilia Gómez and Xavier Serra

Intro: Our exploration is not a science-fiction essay. We do not try to imagine how music will be conceptualized, experienced and mediated by our yet-to-come research, technological achievements and music gizmos. Alternatively, we reflect on how the discipline should evolve to become consolidated as such, in order it may get an effective future instead of becoming, after a promising start, just a “would-be” discipline. Our vision addresses different aspects: the discipline’s object of study, the employed methodologies, social and cultural impacts (which are out of this long abstract because of space restrictions), and we finish with some (maybe) disturbing issues that could be taken as partial and biased guidelines for future research.

Notes: One motivation for advancing MIR – more banquets!

- MIR is no more about retrieval than computer science is about computers

- Music Information Retrieval – it’s too narrow

- Music Information or Information about Music?

- Interested in the interaction with music information

- We should be asking more profound questions

- music

- content treasures in short musical excerpts, tracks, performances etc.

- context

- music understanding systems

- Most metadata will be generated in the creation / production phase (hmm.. don’t agree necessarily, all the good metadata (tags, who likes what) is based on context and use which is post-hoc)

- Instead of automatic analysis – build systems to help humans help humans

- Music like water? or Music as dog!!! – a friend – companion –

- Personalization, Findability

- Music Turing test

Good, provocative talk

Oral Session 2: Potential future MIR applications

Session Chair: Jason Hockman (McGill University), Program Chair of f(MIR)

Machine Listening to Percussion: Current Approaches and Future Directions – [pdf]

Michael Ward

Abstract: approaches have been taken to detect and classify percussive events within music signals for a variety of purposes with differing and converging aims. In this paper an overview of those technologies is presented and a discussion of the issues still to overcome and future possibilities in the field are presented. Finally a system capable of monitoring a student drummer is envisaged which draws together current approaches and future work in the field.

Notes:

- Challenges: Onset detection of isolated drum strokes

- Onset detection and classification of overlapping drum sounds

- Onset detection and classification in the presence of other instruments

- Variability in percussive sounds. Dozens of criteria affect the sounds produced (strike velocity, angle, position etc.)

- Future Research Areas

- Extension of recognition to include the wide variety of strokes. (open hh, half-open hh, hh foot splash etc)

MIR When All Recordings Are Gone: Recommending Live Music in Real-Time – [pdf]

Marco Lüthy and Jean-Julien Aucouturier

Recommending live and short lived events. Bandsintown, Songkick, gigulate … pay attention to this paper.

Notes:

- Recommendation for live music in real-time

- Coldplay -> free album when you get a ticket to a coldplay concert – give away the music

- NIN -> USB keys in the toilet – which had a strange recording on them – strange sounds – an FFT of the sounds showed a phone number and GPS coordinates – turned into a treasure hunt to a NIN concert.

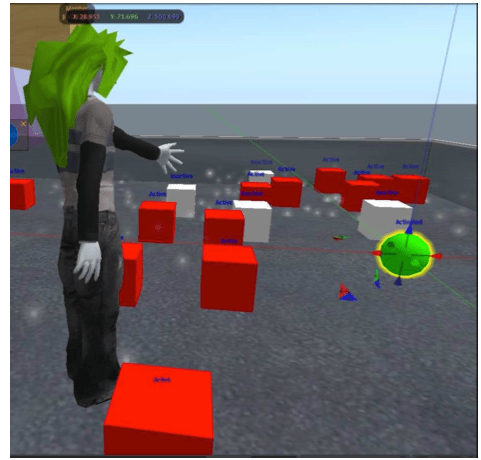

- Komuso Tokugawa – an avatar for a musician in Second Life. Plays in Second Life, twitters concert announcements (playing wake for Les Paul in 3 minutes)

- ‘How do we get there in time?’

- JJ walked through how to implement a recommender system in Second Life

- Implicit preference inferred from how long your avatar listens to a concert (Nicole Yankelovich at Sun Labs should look at this stuff)

- Great talk by JJ – full of energy – neat ideas. Good work.
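The implicit-preference idea from the notes above (inferring taste from how long an avatar stays at a concert) could be sketched as follows. This is only an illustration under my own assumptions: the scoring rule (fraction of the concert listened to, clipped to the range 0 to 1), the function name and the log format are hypothetical and are not taken from the paper.

```python
def implicit_preference(seconds_listened, concert_seconds):
    """Map listening time to a preference score between 0 and 1.

    The score is the fraction of the concert the avatar stayed for,
    which is an assumed rule for illustration only.
    """
    if concert_seconds <= 0:
        raise ValueError("concert_seconds must be positive")
    fraction = seconds_listened / concert_seconds
    return max(0.0, min(1.0, fraction))


# Hypothetical log rows: (avatar, performer, seconds listened, concert length).
log = [
    ("avatar_1", "performer_x", 1500, 1800),
    ("avatar_1", "performer_y", 60, 1800),
]
prefs = {(a, p): implicit_preference(s, total) for a, p, s, total in log}
```

Scores like these could then feed any standard recommender as if they were ratings, with no explicit feedback required from the listener.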

Poster Session

- Global Access to Ethnic Music: The Next Big Challenge?

Olmo Cornelis, Dirk Moelants and Marc Leman

- The Future of Music IR: How Do You Know When a Problem Is Solved?

Eric Nichols and Donald Byrd

ISMIR 2009 – The Industry Panel

On Thursday I participated in the ISMIR industrial panel. Eight members of industry talked about the issues and challenges that they face. I had a good time on the panel, the panelists were all on target and very thoughtful, and there were great questions from the audience. I’m happy too that the IRC channel offered a place for people to vent without the session turning into a SXSW-style riot.

Justin Donaldson kept good notes on the panel and has posted them on his blog: ISMIR 2009 Industry Panel

Taiko at the ISMIR 2009 Banquet

During the ISMIR Banquet (held in the most beautiful place in the world, the Kobe Kachoen) we were entertained by the Maturishu, a Taiko performance group. They were just fantastic:

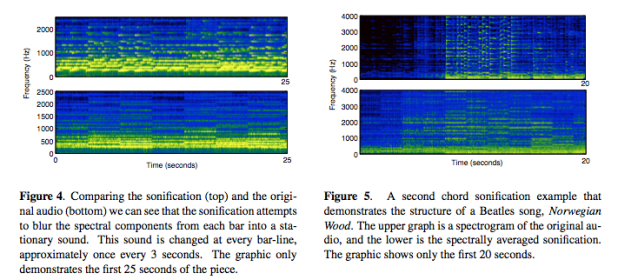

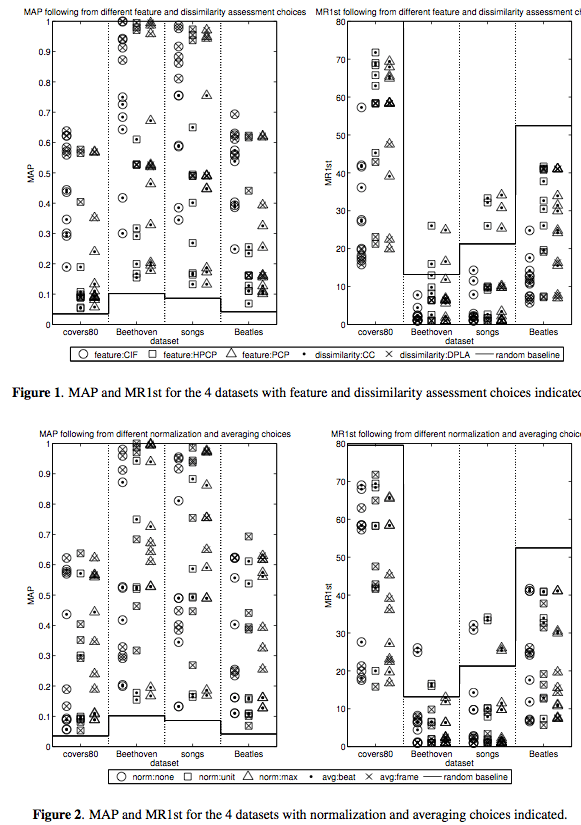

ISMIR Oral Session 7 – Harmonic & Melodic Similarity and Summarization

Posted in Music on October 29, 2009

10:30-12:30 Oral Session (OS7) – Harmonic & Melodic Similarity and Summarization

Session Chair: Emilia Gómez (Universitat Pompeu Fabra, Spain)

Nicola Orio and Antonio Rodà

- high-level music dimensions are not reliably computed from audio

- musicologists are more interested in scores

- results with symbolic formats can be a reference for audio-based approaches

- melodic similarity is not a solved problem

- Overview of the approach:

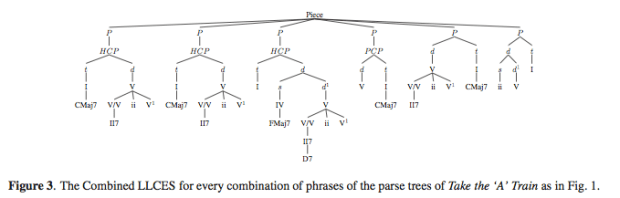

by Bas de Haas, Martin Rohrmeier, Remco Veltkamp and Frans Wiering

- Extract chord labels from audio and symbolic data (not the research focus)

- Not all info is in the data. Need a grammatical model of tonal harmony

- Conveying musical structure (slowing down at boundaries for example)

- Prosody ( stress, direction, grouping) – the heart of the matter

- Musical Affect (happy, sad, etc) – not easy, so ignores this one

This was a very good talk.

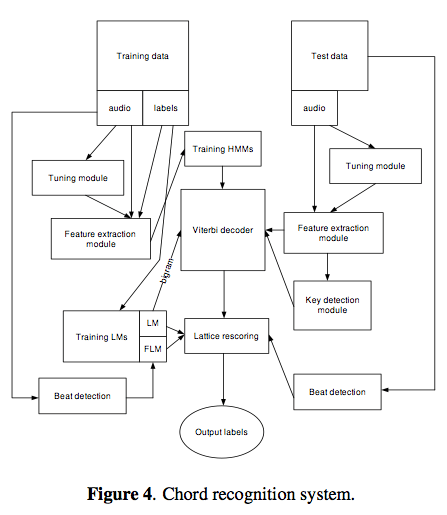

Maksim Khadkevich and Maurizio Omologo

Sam Ferguson and Densil Cabrera

Cynthia C.S. Liem and Alan Hanjalic

ISMIR Keynote – Wind instrument-playing humanoid robots

What’s not to love!?!? Robots and Music! This was a great talk.

Wind instrument-playing humanoid robots

Atsuo Takanishi

Some history of robots:

Wabot-2 – early music playing robot

Wabian-2 – walking robots

Emotional Robots

Kobian: Emotional humanoid robot

Voice Producing Robots

Music Performance Robots

(Compare)

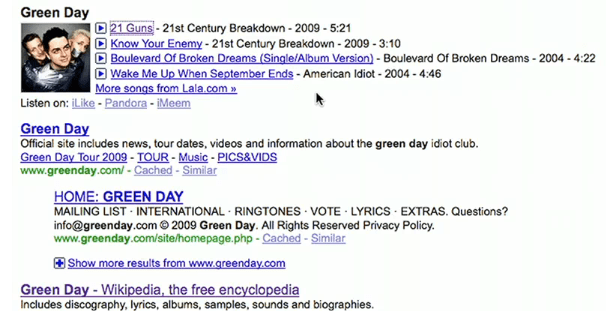

Google’s new music search

Posted in Music on October 28, 2009

The news wires are abuzz with Google’s new music search feature. The new Google feature will allow users to search for an artist, song, album or lyric and get a music result that will include album art and a ‘play’ button that will let you listen to the music. MySpace and Lala will be serving up the music and you’ll be able to play any song in full just once. The music results will also include links to Pandora, imeem and Rhapsody. Lyrics search is provided by Gracenote.

Here’s the video announcement:

It’s about time that Google starts to include the ability to listen to search results – this will help. It’s pretty cool, but I don’t think it changes the music discovery game too much. Search is not discovery.

Update: The Register is particularly unimpressed: “Trying to forcefeed punters a lousy service is a bad idea, amplified by the assumption that if Facebook and Google are the feeding tube, we’ll suck it up.”

The SQL Join is destroying music

Posted in events, Music, The Echo Nest on October 28, 2009

Brian Whitman, one of the founders of the Echo Nest, gave a provocative talk last week at Music and Bits. Some excerpts:

Useless MIR Problems:

- Genre Identification – “Countless PhDs on this useless task. Trying to teach a computer a marketing construct”

Hard but interesting MIR Problems:

- Finding the saddest song in the world

- Predicting Pitchfork and All Music Guide ratings

- Predicting the gender of a listener based upon their music taste

On Recommendation:

- “The best music experience is still very manual… I am still reading about music, not using a recommender.”

- “If we only used collaborative filtering to discover music, the popular artists would eat the unknowns alive.”

- “The SQL Join is destroying music”
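The collaborative-filtering quote above can be illustrated with a toy example. A raw co-occurrence count is roughly what a self-join over a listener/artist table computes, so this sketch counts which artists share listeners with a seed artist. The data, artist names and `recommend` function are made up for illustration; this is not Brian's code or The Echo Nest's method.

```python
from collections import Counter

# Hypothetical listening data: each set is one listener's artists.
listeners = [
    {"big_star", "indie_a"},
    {"big_star", "indie_b"},
    {"big_star", "indie_a", "indie_c"},
    {"big_star", "indie_c"},
    {"indie_a", "indie_b"},
]


def recommend(seed, listeners):
    """Rank artists by how often they share a listener with `seed`."""
    counts = Counter()
    for artists in listeners:
        if seed in artists:
            counts.update(artists - {seed})
    return counts.most_common()


recs = recommend("indie_a", listeners)
```

Because `big_star` appears in most listeners' collections, it tops the list for nearly any seed, which is the popularity effect the quote describes.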

Brian’s notes on the talk are on his blog. The slides are online here. Highly recommended: