Posts Tagged google

How do you spell ‘Britney Spears’?

Posted by Paul in code, data, Music, music information retrieval, research, The Echo Nest on July 28, 2011

I’ve been under the weather for the last couple of weeks, which has prevented me from doing most things, including blogging. Luckily, I had a blog post sitting in my drafts folder almost ready to go. I spent a bit of time today finishing it up, and so here it is. A look at the fascinating world of spelling correction for artist names.

In today’s digital music world, you will often look for music by typing an artist name into a search box of your favorite music app. However this becomes a problem if you don’t know how to spell the name of the artist you are looking for. This is probably not much of a problem if you are looking for U2, but it most definitely is a problem if you are looking for Röyksopp, Jamiroquai or Britney Spears. To help solve this problem, we can try to identify common misspellings for artists and use these misspellings to help steer you to the artists that you are looking for.

In today’s digital music world, you will often look for music by typing an artist name into a search box of your favorite music app. However this becomes a problem if you don’t know how to spell the name of the artist you are looking for. This is probably not much of a problem if you are looking for U2, but it most definitely is a problem if you are looking for Röyksopp, Jamiroquai or Britney Spears. To help solve this problem, we can try to identify common misspellings for artists and use these misspellings to help steer you to the artists that you are looking for.

A spelling corrector in 21 lines of code

A good place for us to start is a post by Peter Norvig (Director of Research at Google) called ‘How to write a spelling corrector‘ which presents a fully operational spelling corrector in 21 lines of Python. (It is a phenomenal bit of code, worth the time studying it). At the core of Peter’s algorithm is the concept of the edit distance which is a way to represent the similarity of two strings by calculating the number of operations (inserts, deletes, replacements and transpositions) needed to transform one string into the other. Peter cites literature that suggests that 80 to 95% of spelling errors are within an edit distance of 1 (meaning that most misspellings are just one insert, delete, replacement or transposition away from the correct word). Not being satisfied with that accuracy, Peter’s algorithm considers all words that are within an edit distance of 2 as candidates for his spelling corrector. For Peter’s small test case (he wrote his system on a plane so he didn’t have lots of data nearby), his corrector covered 98.9% of his test cases.

Spell checking Britney

A few years ago, the smart folks at Google posted a list of Britney Spears spelling corrections that shows nearly 600 variants on Ms. Spears name collected in three months of Google searches. Perusing the list, you’ll find all sorts of interesting variations such as ‘birtheny spears’ , ‘brinsley spears’ and ‘britain spears’. I suspect that some these queries (like ‘Brandi Spears’) may actually not be for the pop artist. One curiosity in the list is that although there are 600 variations on the spelling of ‘Britney’ there is exactly one way that ‘spears’ is spelled. There’s no ‘speers’ or ‘spheres’, or ‘britany’s beers’ on this list.

One thing I did notice about Google’s list of Britneys is that there are many variations that seem to be further away from the correct spelling than an edit distance of two at the core of Peter’s algorithm. This means that if you give these variants to Peter’s spelling corrector, it won’t find the proper spelling. Being an empiricist I tried it and found that of the 593 variants of ‘Britney Spears’, 200 were not within an edit distance of two of the proper spelling and would not be correctable. This is not too surprising. Names are traditionally hard to spell, there are many alternative spellings for the name ‘Britney’ that are real names, and many people searching for music artists for the first time may have only heard the name pronounced and have never seen it in its written form.

Making it better with an artist-oriented spell checker

A 33% miss rate for a popular artist’s name seems a bit high, so I thought I’d see if I could improve on this. I have one big advantage that Peter didn’t. I work for a music data company so I can be pretty confident that all the search queries that I see are going to be related to music. Restricting the possible vocabulary to just artist names makes things a whole lot easier. The algorithm couldn’t be simpler. Collect the names of the top 100K most popular artists. For each artist name query, find the artist name with the smallest edit distance to the query and return that name as the best candidate match. This algorithm will let us find the closest matching artist even if it is has an edit distance of more than 2 as we see in Peter’s algorithm. When I run this against the 593 Britney Spears misspellings, I only get one mismatch – ‘brandi spears’ is closer to the artist ‘burning spear’ than it is to ‘Britney Spears’. Considering the naive implementation, the algorithm is fairly fast (40 ms per query on my 2.5 year old laptop, in python).

Looking at spelling variations

With this artist-oriented spelling checker in hand, I decided to take a look at some real artist queries to see what interesting things I could find buried within. I gathered some artist name search queries from the Echo Nest API logs and looked for some interesting patterns (since I’m doing this at home over the weekend, I only looked at the most recent logs which consists of only about 2 million artist name queries).

Artists with most spelling variations

Not surprisingly, very popular artists are the most frequently misspelled. It seems that just about every permutation has been made in an attempt to spell these artists.

- Michael Jackson – Variations: michael jackson, micheal jackson, michel jackson, mickael jackson, mickal jackson, michael jacson, mihceal jackson, mickeljackson, michel jakson, micheal jaskcon, michal jackson, michael jackson by pbtone, mical jachson, micahle jackson, machael jackson, muickael jackson, mikael jackson, miechle jackson, mickel jackson, mickeal jackson, michkeal jackson, michele jakson, micheal jaskson, micheal jasckson, micheal jakson, micheal jackston, micheal jackson just beat, micheal jackson, michal jakson, michaeljackson, michael joseph jackson, michael jayston, michael jakson, michael jackson mania!, michael jackson and friends, michael jackaon, micael jackson, machel jackson, jichael mackson

- Justin Bieber – Variations: justin bieber, justin beiber, i just got bieber’ed by, justin biber, justin bieber baby, justin beber, justin bebbier, justin beaber, justien beiber, sjustin beiber, justinbieber, justin_bieber, justin. bieber, justin bierber, justin bieber<3 4 ever<3, justin bieber x mstrkrft, justin bieber x, justin bieber and selens gomaz, justin bieber and rascal flats, justin bibar, justin bever, justin beiber baby, justin beeber, justin bebber, justin bebar, justien berbier, justen bever, justebibar, jsustin bieber, jastin bieber, jastin beiber, jasten biber, jasten beber songs, gestin bieber, eiine mainie justin bieber, baby justin bieber,

- Red Hot Chili Peppers – Variations: red hot chilli peppers, the red hot chili peppers, red hot chilli pipers, red hot chilli pepers, red hot chili, red hot chilly peppers, red hot chili pepers, hot red chili pepers, red hot chilli peppears, redhotchillipeppers, redhotchilipeppers, redhotchilipepers, redhot chili peppers, redhot chili pepers, red not chili peppers, red hot chily papers, red hot chilli peppers greatest hits, red hot chilli pepper, red hot chilli peepers, red hot chilli pappers, red hot chili pepper, red hot chile peppers

- Mumford and Sons – Variations: mumford and sons, mumford and sons cave, mumford and son, munford and sons, mummford and sons, mumford son, momford and sons, modfod and sons, munfordandsons, munford and son, mumfrund and sons, mumfors and sons, mumford sons, mumford ans sons, mumford and sonns, mumford and songs, mumford and sona, mumford and, mumford &sons, mumfird and sons, mumfadeleord and sons

- Katy Perry – Even an artist with a seemingly very simple name like Katy Perry has numerous variations: katy perry, katie perry, kate perry, kathy perry, katy perry ft.kanye west, katty perry, katy perry i kissed a girl, peacock katy perry, katyperry, katey parey, kety perry, kety peliy, katy pwrry, katy perry-firework, katy perry x, katy perry, katy perris, katy parry, kati perry, kathy pery, katey perry, katey perey, katey peliy, kata perry, kaity perry

Some other most frequently misspelled artists:

- Britney Spears

- Linkin Park

- Arctic Monkeys

- Katy Perry

- Guns N’ Roses

- Nicki Minaj

- Muse

- Weezer

- U2

- Oasis

- Moby

- Flyleaf

- Seether

- byran adams – ryan adams

- Underworld – Uverworld

Where did my Google Music go?

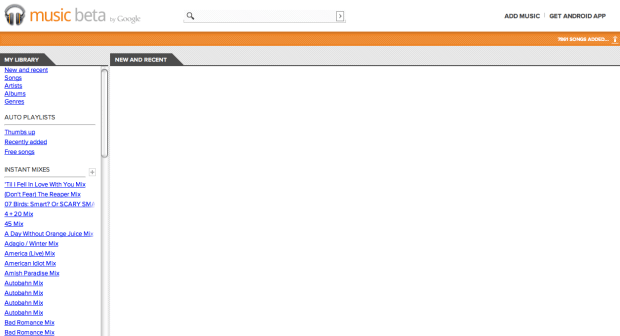

I just fired up my Google Music account this afternoon and this is what I found:

All 7,861 songs are gone. I hope they come back. Apparently, I’m not the only one this is happening to.

Update – all my music has returned sometime overnight.

Google Instant Mix and iTunes Genius fix their WTFs

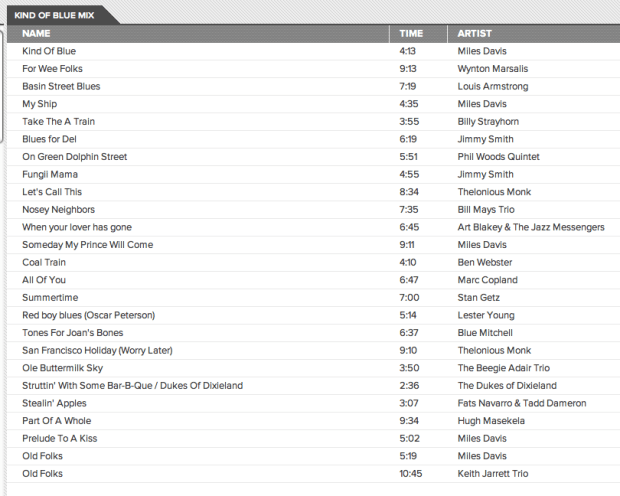

Last week I compared the playlisting capabilities of iTunes Genius, Google’s new Instant Mix and The Echo Nest’s Playlist API. I found that Google’s Instant Mix Playlist were filled with many WTF selections (Coldplay on a Miles Davis playlist) and iTunes Genius had problems generating playlists for any track by the Beatles. I rechecked some of the playlists today to see how they were doing. It looks like both services have received an upgrade since my last post. Here’s the new Google Instant Mix playlist based on a Miles Davis seed song:

All the big WTFs from last week’s test are gone – yay Google for fixing this so quickly. The only problem I see is the doubled ‘Old Folks’ song, but that’s not a WTF. However, I can’t give Google Instant Mix a clean slate yet. Google had a chance to study my particular collection (they asked, and I gave them my permission to do so), so I am sure that they paid particular attention to the big WTFs from last week. I’ll need to test again with a new collection and different seeds to see if their upgrade is a general one. Still, for the limited seeds that I tried, the WTFs seem to be gone.

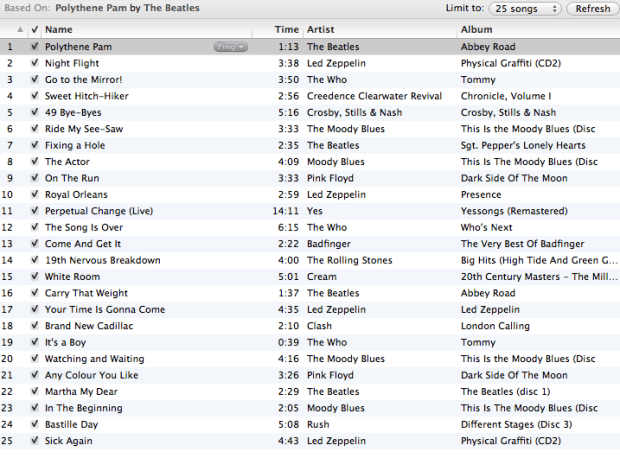

Similarly, iTunes seems to have had an upgrade. Last week, it couldn’t make any playlist from a Beatles’s song, but this week they can. Here’s a playlist created with iTunes Genius with Polythene Pam as a seed:

Genius creates a serviceable playlist, with no WTFs with the Beatles as a seed, so like Google they were able to clear up their WTFs that I noted from last weeks post. No clean slate for Apple though .. I have seen some comments about how Genius appears to have problems generating playlists for new tracks. More investigation is needed to understand if this is really a problem.

Given the traffic that last week’s post received, it is not surprising that these companies noticed the problems and dug in and fixed the problems quickly. I like to think that my post made playlisting just a little bit better for a few million people.

How good is Google’s Instant Mix?

Posted by Paul in Music, playlist, The Echo Nest on May 14, 2011

This week, Google launched the beta of its music locker service where you can upload all your music to the cloud and listen to it from anywhere. According to Techcrunch, Google’s Paul Joyce revealed that the Music Beta killer feature is ‘Instant Mix,’ Google’s version of Genius playlists, where you can select a song that you like and the music manager will create a playlist based on songs that sound similar. I wondered how good this ‘killer feature’ of Music Beta really was and so I decided to try to evaluate how well Instant Mix works to create playlists.

This week, Google launched the beta of its music locker service where you can upload all your music to the cloud and listen to it from anywhere. According to Techcrunch, Google’s Paul Joyce revealed that the Music Beta killer feature is ‘Instant Mix,’ Google’s version of Genius playlists, where you can select a song that you like and the music manager will create a playlist based on songs that sound similar. I wondered how good this ‘killer feature’ of Music Beta really was and so I decided to try to evaluate how well Instant Mix works to create playlists.

The Evaluation

Google’s Instant Mix, like many playlisting engines, creates a playlist of songs given a seed song. It tries to find songs that go well with the seed song. Unfortunately, there’s no solid objective measure to evaluate playlists. There’s no algorithm that we can use to say whether one playlist is better than another. A good playlist derived from a single seed will certainly have songs that sound similar to the seed, but there are many other aspects as well: the mix of the familiar and the new, surprise, emotional arc, song order, song transitions, and so on. If you are interested in the perils of playlist evaluation, check out this talk Dr. Ben Fields and I gave at ISMIR 2010: Finding a path through the jukebox. The Playlist tutorial. (Warning, it is a 300 slide deck). Adding to the difficulty in evaluating the Instant Mix is that since it generates playlists within an individual’s music collection, the universe of music that it can draw from is much smaller than a general playlisting engine such as we see with a system like Pandora. A playlist may appear to be poor because it is filled with songs that are poor matches to the seed, but in fact those songs actually may be the best matches within the individual’s music collection.

Evaluating playlists is hard. However, there is something that we can do that is fairly easy to give us an idea of how well a playlisting engine works compared to others. I call it the WTF test. It is really quite simple. You generate a playlist, and just count the number of head-scratchers in the list. If you look at a song in a playlist and say to yourself ‘How the heck did this song get in this playlist’ you bump the counter for the playlist. The higher the WTF count the worse the playlist. As a first order quality metric, I really like the WTF Test. It is easy to apply, and focuses on a critical aspect of playlist quality. If a playlist is filled with jarring transitions, leaving the listener with iPod whiplash as they are jerked through songs of vastly different styles, it is a bad playlist.

For this evaluation, I took my personal collection of music (about 7,800 tracks) and enrolled it into 3 systems; Google Music, iTunes and The Echo Nest. I then created a set of playlist using each system and counted the WTFs for each playlist. I picked seed songs based on my music taste (it is my collection of music so it seemed like a natural place to start).

The Systems

I compared three systems: iTunes Genius, Google Instant Mix, and The Echo Nest playlisting API. All of them are black box algorihms, but we do know a little bit about them:

- iTunes Genius – this system seems to be a collaborative filtering algorithm driven from purchase data acquired via the iTunes music store. It may use play, skip and ratings to steer the playlisting engine. More details about the system can be found in: Smarter than Genius? Human Evaluation of Music Recommender Systems. This is a one button system – there are no user-accessible controls that affect the playlisting algorithm.

- Google Instant Mix – there is no data published on how this system works. It appears to be a hybrid system that uses collaborative filtering data along with acoustic similarity data. Since Google Music does give attribution to Gracenote, there is a possibility that some of Gracenote’s data is used in generating playlists. This is a one button system. There are no user-accessible controls that affect the playlisting algorithm.

- The Echo Nest playlist engine – this is a hybrid system that uses cultural, collaborative filtering data and acoustic data to build the playlist. The cultural data is gleaned from a deep crawl of the web. The playlisting engine takes into account artist popularity, familiarity, cultural similarity, and acoustic similarity along with a number of other attributes There are a number of controls that can be set to control the playlists: variety, adventurousness, style, mood, energy. For this evaluation, the playlist engine was configured to create playlists with relatively low variety with songs by mostly mainstream artists. The configuration of the engine was not changed once the test was started.

The Collection

For this evaluation I’ve used my personal iTunes music collection of about 7,800 songs. I think it is a fairly typical music collection. It has music of a wide variety of styles. It contains music of my taste (70s progrock and other dad-core, indie and numetal), music from my kids (radio pop, musicals), some indie, jazz, and a whole bunch of Canadian music from my friend Steve. There’s also a bunch of podcasts as well. It has the usual set of metadata screwups that you see in real-life collections (3 different spellings of Björk for example). I’ve placed a listing of all the music in the collection at Paul’s Music Collection if you are interested in all of the details.

The Caveats

Although I’ve tried my best to be objective, I clearly have a vested interest in the outcome of this evaluation. I work for a company that has its own playlisting technology. I have friends that work for Google. I like Apple products. So feel free to be skeptical about my results. I will try to do a few things to make it clear that I did not fudge things. I’ll show screenshots of results from the 3 playlisting sources, as opposed to just listing songs. (I’m too lazy to try to fake screenshots). I’ll also give API command I used for the Echo Nest playlists so you can generate those results yourself. Still, I won’t blame the skeptics. I encourage anyone to try a similar A/B/C evaluation on their own collection so we can compare results.

The Trials

For each trial, I picked a seed song, generated a 25 song playlist using each system, and counted the WTFs in each list. I show the results as screenshots from each system and I mark each WTF that I see with a red dot.

Trial #1 – Miles Davis – Kind of Blue

I don’t have a whole lot of Jazz in my collection, so I thought this would be a good test to see if a playlister could find the Jazz amidst all the other stuff.

First up is iTunes Genius

This looks like an excellent mix. All jazz artists. The most WTF results are the Blood, Sweat and Tears tracks – which is Jazz-Rock fusion, or the Norah Jones tracks which are more coffee house, but neither of these tracks rise above the WTF level. Well done iTunes! WTF score: 0

Next up is The Echo Nest.

As with iTunes, the Echo Nest playlist has no WTFs, all hardcore jazz. I’d be pretty happy with this playlist, especially considering the limited amount of Jazz in my collection. I think this playlist may even be a bit better than the iTunes playlist. It is a bit more hardcore Jazz. If you are listening to Miles Davis, Norah Jones may not be for you. Well done Echo Nest. WTF score: 0

If you want to generate a similar playlist via our api use this API command:

http://developer.echonest.com/api/v4/playlist/static?api_key=3YDUQHGT9ZVUBFBR0&format=json &limit=true&song_id=SOAQMYC12A8C13A0A8 &type=song-radio&bucket=id%3ACAQHGXM12FDF53542C &variety=.12&artist_min_hotttnesss=.4

Next up is google:

I’ve marked the playlist with red dots on the songs that I consider to be WTF songs. There are 18(!) songs on this 25 song playlist that are not justifiable. There’s electronica, rock, folk, Victorian era brass band and Coldplay. Yes, that’s right, there’s Coldplay on a Miles Davis playlist. WTF score: 18

After Trial 1 Scores are: iTunes: 0 WTFs, The Echo Nest 0 WTFs, Google Music: 18 WTFs

Trial #2 – Lady Gaga – Bad Romance

First up is iTunes:

Next up: The Echo Nest

Next up, Google Instant Mix

Google’s Instant Mix for Lady Gaga’s Bad Romance seems filled with non sequitur. Tracks by Dave Brubeck (cool jazz), Maynard Ferguson (big band jazz), are mixed in with tracks by Ice Cube and They Might be Giants. The most appropriate track in the playlist is a 20 year old track by Madonna. I think I was pretty lenient in counting WTFs on this one. Even then, it scores pretty poorly. WTF Score: 13

After Trial 2 Scores are: iTunes: 2 WTFs, The Echo Nest 0 WTFs, Google Music: 31WTFs

Trial #3 – The Nice – Rondo

First up: iTunes:

Next up is The Nest:

Next up is Google Instant Mix:

I would not like to listen to this playlist. It has a number songs that are just too far out. ABBA, Simon & Garfunkel, are WTF enough, but this playlist takes WTF three steps further. First offense, including a song with the same title more than once. This playlist has two versions of ‘Side A-Popcorn’. That’s a no-no in playlisting (except for cover playlists). Next offense is the song ‘I think I love you’ by the Partridge family. This track was not in my collection. It was one of the free tracks that Google gave me when I signed up. 70s bubblegum pop doesn’t belong on this list. However,as bad as The Partridge family song is, it is not the worst track on the playlist. That award goes to FM 2.0: The future of Internet Radio’. Yep, Instant Mix decided that we should conclude a prog rock playlist with an hour long panel about the future of online music. That’s a big WTF. I can’t imagine what algorithm would have led to that choice. Google really deserves extra WTF points for these gaffes, but I’ll be kind. WTF Score: 11

After Trial 3 Scores are: iTunes: 2 WTFs, The Echo Nest 0 WTFs, Google Music: 42WTFs

Trial #4 – Kraftwerk – Autobahn

I don’t have too much electronica, but I like to listen to it, especially when I’m working. Let’s try a playlist based on the group that started it all.

First up, iTunes.

iTunes nails it here. Not a bad track. Perfect playlist for programming. Again, well done iTunes. WTF Score: 0

Next up, The Echo Nest

Another solid playlist, No WTFs. It is a bit more vocal heavy than the iTunes playlist. I think I prefer the iTunes version a bit more because of that. Still, nothing to complain about here: WTF Score: 0

Next Up Google

After listening to this playlist, I am starting to wonder if Google is just messing with us. They could do so much better by selecting songs at random within a top level genre than what they are doing now. This playlist only has 6 songs that can be considered OK, the rest are totally WTF. WTF Score: 18

After Trial 4 Scores are: iTunes: 2 WTFs, The Echo Nest 0 WTFs, Google Music: 60 WTFs

Trial #5 The Beatles – Polythene Pam

For the last trial I chose the song Polythene Pam by The Beatles. It is at the core of the amazing bit on side two of Abbey Road. The zenith of the Beatles music are (IMHO) the opening chords to this song. Lets see how everyone does:

First up: iTunes

iTunes gets a bit WTF here. They can’t offer any recommendations based upon this song. This is totally puzzling to me since The Beatles have been available in the iTunes store for quite a while now. I tried to generate playlists seeded with many different Beatles songs and was not able to generate one playlist. Totally WTF. I think that not being able to generate a playlist for any Beatles song as seed should be worth at least 10 WTF points. WTF Score: 10

Next Up: The Echo Nest

No worries with The Echo Nest playlist. Probably not the most creative playlist, but quite serviceable. WTF Score: 0

Next up Google

Instant Mix scores better on this playlist than it has on the other four. That’s not because I think they did a better job on this playlist, it is just that since the Beatles cover such a wide range of music styles, it is not hard to make a justification for just about any song. Still, I do like the variety in this playlist. There are just two WTFs on this playlist. WTF Score: 2.

After Trial 5 Scores are: iTunes: 12 WTFs, The Echo Nest 0 WTFs, Google Music: 62 WTFs

(lower scores are better)

Conclusions

I learned quite a bit during this evaluation. First of all, Apple Genius is actually quite good. The last time I took a close look at iTunes Genius was 3 years ago. It was generating pretty poor recommendations. Today, however, Genius is generating reliable recommendations for just about any track I could throw at it, with the notable exception of Beatles tracks.

I was also quite pleased to see how well The Echo Nest playlister performed. Our playlist engine is designed to work with extremely large collections (10million tracks) or with personal sized collections. It has lots of options to allow you to control all sorts of aspects of the playlisting. I was glad to see that even when operating in a very constrained situation of a single seed song, with no user feedback it performed well. I am certainly not an unbiased observer, so I hope that anyone who cares enough about this stuff will try to create their own playlists with The Echo Nest API and make their own judgements. The API docs are here: The Echo Nest Playlist API.

However, the biggest surprise of all in this evaluation is how poorly Google’s Instant Mix performed. Nearly half of all songs in Instant Mix playlists were head scratchers – songs that just didn’t belong in the playlist. These playlists were not usable. It is a bit of a puzzle as to why the playlists are so bad considering all of the smart people at Google. Google does say that this release is a Beta, so we can give them a little leeway here. And I certainly wouldn’t count Google out here. They are data kings, and once the data starts rolling from millions of users, you can bet that their playlists will improve over time, just like Apple’s did. Still, when Paul Joyce said that the Music Beta killer feature is ‘Instant Mix’, I wonder if perhaps what he meant to say was “the feature that kills Google Music is ‘Instant Mix’.”

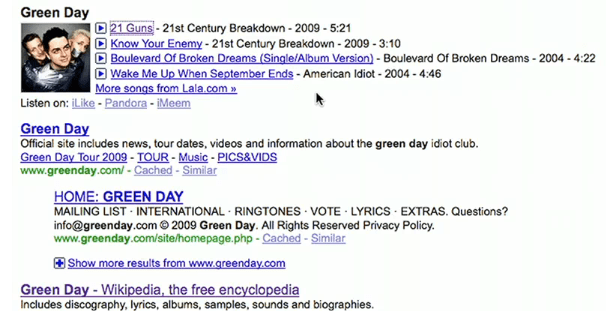

Google’s new music search

The news wires are abuzz with Google’s new music search feature. The new Google feature will allow users to search for an artist, song, album or lyric and get a music result that will include album art and a ‘play’ button that will let you listen to the music. MySpace and Lala will be serving up the music and you’ll be able to play any song in full just once. The music results will also include links to Pandora, imeem and Rhapsody. Lyrics search is provided by Gracenote.

Here’s the video announcement:

It’s about time that Google starts to include the ability to listen to search results – this will help. It’s pretty cool, but I don’t think it changes the music discovery game too much. Search is not discovery.

Update: The Register is particularly unimpressed: “Trying to forcefeed punters a lousy service is a bad idea, amplified by the assumption that if Facebook and Google are the feeding tube, we’ll suck it up.”

Magnatagatune – a new research data set for MIR

Posted by Paul in data, Music, music information retrieval, research, tags, The Echo Nest on April 1, 2009

Edith Law (of TagATune fame) and Olivier Gillet have put together one of the most complete MIR research datasets since uspop2002. The data (with the best name ever) is called magnatagatune. It contains:

- Human annotations collected by Edith Law’s TagATune game.

- The corresponding sound clips from magnatune.com, encoded in 16 kHz, 32kbps, mono mp3. (generously contributed by John Buckman, the founder of every MIR researcher’s favorite label Magnatune)

- A detailed analysis from The Echo Nest of the track’s structure and musical content, including rhythm, pitch and timbre.

- All the source code for generating the dataset distribution

Some detailed stats of the data calculated by Olivier are:

- clips: 25863

- source mp3: 5405

- albums: 446

- artists: 230

- unique tags: 188

- similarity triples: 533

- votes for the similarity judgments: 7650

This dataset is one stop shopping for all sorts of MIR related tasks including:

- Artist Identification

- Genre classification

- Mood Classification

- Instrument identification

- Music Similarity

- Autotagging

- Automatic playlist generation

As part of the dataset The Echo Nest is providing a detailed analysis of each of the 25,000+ clips. This analysis includes a description of all musical events, structures and global attributes, such as key, loudness, time signature, tempo, beats, sections, and harmony. This is the same information that is provided by our track level API that is described here: developer.echonest.com.

Note that Olivier and Edith mention me by name in their release announcement, but really I was just the go between. Tristan (one of the co-founders of The Echo Nest) did the analysis and The Echo Nest compute infrastructure got it done fast (our analysis of the 25,000 tracks took much less time than it did to download the audio).

I expect to see this dataset become one of the oft-cited datasets of MIR researchers.

Here’s the official announcement:

Edith Law, John Buckman, Paul Lamere and myself are proud to announce the release of the Magnatagatune dataset.

This dataset consists of ~25000 29s long music clips, each of them annotated with a combination of 188 tags. The annotations have been collected through Edith’s “TagATune” game (http://www.gwap.com/gwap/gamesPreview/tagatune/). The clips are excerpts of songs published by Magnatune.com – and John from Magnatune has approved the release of the audio clips for research purposes. For those of you who are not happy with the quality of the clips (mono, 16 kHz, 32kbps), we also provide scripts to fetch the mp3s and cut them to recreate the collection. Wait… there’s more! Paul Lamere from The Echo Nest has provided, for each of these songs, an “analysis” XML file containing timbre, rhythm and harmonic-content related features.

The dataset also contains a smaller set of annotations for music similarity: given a triple of songs (A, B, C), how many players have flagged the song A, B or C as most different from the others.

Everything is distributed freely under a Creative Commons Attribution – Noncommercial-Share Alike 3.0 license ; and is available here: http://tagatune.org/Datasets.html

This dataset is ever-growing, as more users play TagATune, more annotations will be collected, and new snapshots of the data will be released in the future. A new version of TagATune will indeed be up by next Monday (April 6). To make this dataset grow even faster, please go to http://www.gwap.com/gwap/gamesPreview/tagatune/ next Monday and start playing.

Enjoy!

The Magnatagatune team