10:30-12:30 Oral Session (OS7) – Harmonic & Melodic Similarity and Summarization

Session Chair: Emilia Gómez (Universitat Pompeu Fabra, Spain)

(OS7-1) A Measure of Melodic Similarity based on a Graph Representation of the Music Structure

Nicola Orio and Antonio Rodà

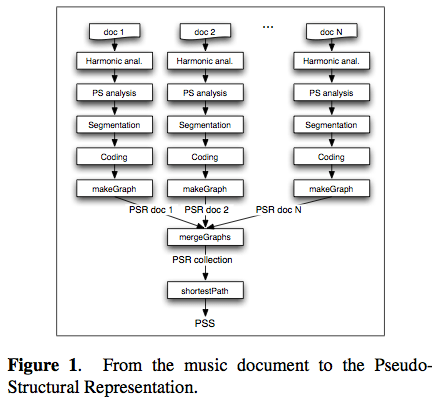

Abstract: Content-based music retrieval requires to define a similarity measure between music documents. In this paper, we propose a novel similarity measure between melodic content, as represented in symbolic notation, that takes into account musicological aspects on the structural function of the melodic elements. The approach is based on the representation of a collection of music scores with a graph structure, where terminal nodes directly describe the music content, internal nodes represent its incremental generalization, and arcs denote the relationships among them. The similarity between two melodies can be computed by analyzing the graph structure and finding the shortest path between the corresponding nodes inside the graph. Preliminary results in terms of music similarity are presented using a small test collection.
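To make the shortest-path idea concrete, here is a minimal sketch of my own, not the authors' implementation. It assumes an unweighted, undirected graph stored as an adjacency dict. The node names (`m1`, `g1`, and so on) and the toy edges are hypothetical: `m*` stand in for terminal nodes (melodies) and `g*` for internal nodes (generalizations).

```python
from collections import deque


def shortest_path_length(graph, start, goal):
    """Return the number of arcs on the shortest path, or None if unreachable."""
    if start == goal:
        return 0
    seen = {start}
    queue = deque([(start, 0)])
    while queue:
        node, dist = queue.popleft()
        for neighbour in graph.get(node, ()):
            if neighbour == goal:
                return dist + 1
            if neighbour not in seen:
                seen.add(neighbour)
                queue.append((neighbour, dist + 1))
    return None


# Hypothetical toy collection: three melodies and two levels of generalization.
edges = [("m1", "g1"), ("m2", "g1"), ("g1", "g2"), ("m3", "g2")]
graph = {}
for a, b in edges:
    graph.setdefault(a, set()).add(b)
    graph.setdefault(b, set()).add(a)

d12 = shortest_path_length(graph, "m1", "m2")  # m1 and m2 share the generalization g1
d13 = shortest_path_length(graph, "m1", "m3")  # m1 and m3 only meet at g2
```

In this toy graph, melodies that share a close generalization end up a short distance apart, which is the intuition behind using path length as a (dis)similarity score. The paper may weight arcs or treat levels differently; that is not covered here.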

Notes:

- high-level music dimensions are not reliably computed from audio

- musicologists are more interested in scores

- results with symbolic formats can be a reference for audio-based approaches

- melodic similarity is not a solved problem

- Overview of the approach:

(OS7-2) Modeling Harmonic Similarity Using a Generative Grammar of Tonal Harmony

by Bas de Haas, Martin Rohrmeier, Remco Veltkamp and Frans Wiering

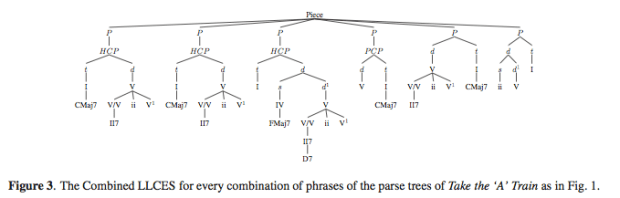

Abstract: In this paper we investigate a new approach to the similarity of tonal harmony. We create a fully functional remodeling of an earlier version of Rohrmeier’s grammar of harmony. With this grammar an automatic harmonic analysis of a sequence of symbolic chord labels is obtained in the form of a parse tree. The harmonic similarity is determined by finding and examining the largest labeled common embeddable subtree (LLCES) of two parse trees. For the calculation of the LLCES a new O(min(n, m)nm) time algorithm is presented, where n and m are the sizes of the trees. For the analysis of the LLCES we propose six distance measures that exploit several structural characteristics of the Combined LLCES. We demonstrate in a retrieval experiment that at least one of these new methods significantly outperforms a baseline string matching approach and thereby show that using additional musical knowledge from music cognitive and music theoretic models actually helps improving retrieval performance.

Notes: Harmonic similarity based on chord sequence similarities. Good for cover songs, plagiarism, improvised music. Use a generative model of tonal harmony and compare parse trees.

- Extract chord labels from audio and symbolic data (not the research focus)

- Not all info is in the data. Need a grammatical model of tonal harmony
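For contrast with the grammar-based method, here is a rough sketch of what a string-matching baseline over chord labels could look like. This is an assumption on my part: the abstract only says "a baseline string matching approach", so plain Levenshtein edit distance is swapped in as a stand-in, and the chord sequences are made up.

```python
def chord_edit_distance(a, b):
    """Levenshtein distance between two sequences of chord labels."""
    prev = list(range(len(b) + 1))  # distance from the empty prefix of a
    for i, chord_a in enumerate(a, start=1):
        curr = [i]
        for j, chord_b in enumerate(b, start=1):
            cost = 0 if chord_a == chord_b else 1
            curr.append(min(prev[j] + 1,          # delete chord_a
                            curr[j - 1] + 1,      # insert chord_b
                            prev[j - 1] + cost))  # match or substitute
        prev = curr
    return prev[-1]


# Hypothetical chord sequences, for illustration only.
song = ["C", "Am", "F", "G", "C"]
cover = ["C", "Am", "Dm", "G", "C"]   # one substituted chord
other = ["E", "A", "B7", "E"]

d_cover = chord_edit_distance(song, cover)
d_other = chord_edit_distance(song, other)
```

A baseline like this treats every substitution as equally costly, so swapping F for Dm (a common functional substitute) counts the same as swapping it for an unrelated chord. That is the kind of missing musical knowledge the grammatical model is meant to supply.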

(OS7-3) Symbolic and Structural Representation of Melodic Expression

Christopher Raphael

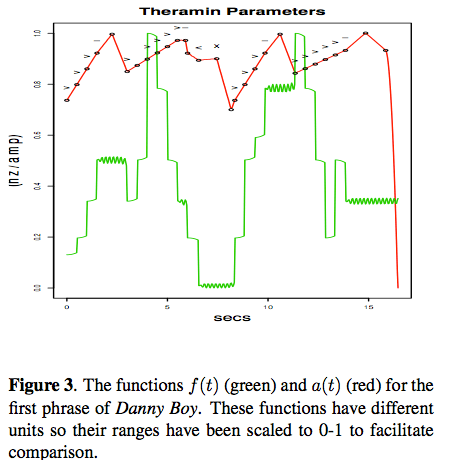

Abstract: A method for expressive melody synthesis is presented seeking to capture the structural and prosodic (stress, direction, and grouping) elements of musical interpretation. The interpretation of melody is represented through a hierarchical structural decomposition and a note-level prosodic annotation. An audio performance of the melody is constructed using the time-evolving frequency and intensity functions. A method is presented that transforms the expressive annotation into the frequency and intensity functions, thus giving the audio performance. In this framework, the problem of expressive rendering is cast as estimation of structural decomposition and the prosodic annotation. Examples are presented on a dataset of around 50 folk-like melodies, realized both from hand-marked and estimated annotations.

Notes: More interested in continuous instruments (theremin is the minimal possible instrument). He’s trying to represent and estimate interpretation itself rather than mapping score into performance decisions.

Taxonomy of expression

- Conveying musical structure (slowing down at boundaries for example)

- Prosody (stress, direction, grouping) – the heart of the matter

- Musical Affect (happy, sad, etc.) – not easy, so ignores this one

How to represent musical expression: passing tones, stress, receding.

This was a very good talk.

(OS7-4) Use of Hidden Markov Models and Factored Language Models for Automatic Chord Recognition

Maksim Khadkevich and Maurizio Omologo

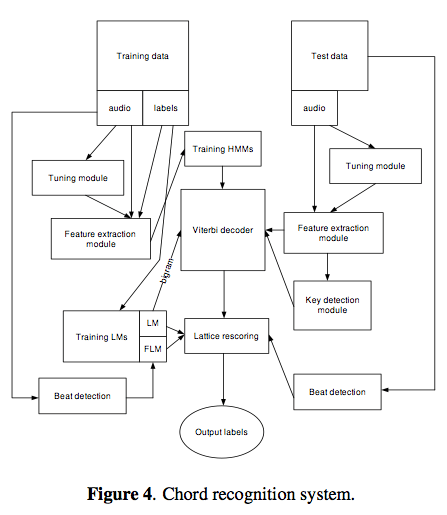

Abstract: This paper focuses on automatic extraction of acoustic chord sequences from a musical piece. Standard and factored language models are analyzed in terms of applicability to the chord recognition task. Pitch class profile vectors that represent harmonic information are extracted from the given audio signal. The resulting chord sequence is obtained by running a Viterbi decoder on trained hidden Markov models and subsequent lattice rescoring, applying the language model weight. We performed several experiments using the proposed technique. Results obtained on 175 manually-labeled songs provided an increase in accuracy of about 2%.
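The Viterbi decoding step can be sketched as below. This is a toy of my own, not the authors' system: it uses two chord states and discrete observation symbols (`c_like`, `g_like`) in place of real pitch class profile vectors, all probabilities are invented, and the lattice rescoring with a language model is left out entirely.

```python
import math


def viterbi(observations, states, log_start, log_trans, log_emit):
    """Return the most likely state sequence for a discrete-observation HMM."""
    # best[s] = log-probability of the best path so far that ends in state s
    best = {s: log_start[s] + log_emit[s][observations[0]] for s in states}
    backpointers = []
    for obs in observations[1:]:
        step, pointers = {}, {}
        for s in states:
            prev_state = max(states, key=lambda p: best[p] + log_trans[p][s])
            step[s] = best[prev_state] + log_trans[prev_state][s] + log_emit[s][obs]
            pointers[s] = prev_state
        best = step
        backpointers.append(pointers)
    # Trace back from the best final state.
    path = [max(states, key=lambda s: best[s])]
    for pointers in reversed(backpointers):
        path.append(pointers[path[-1]])
    path.reverse()
    return path


def logs(table):
    """Convert a nested dict of probabilities to log-probabilities."""
    return {k: {k2: math.log(v) for k2, v in row.items()} for k, row in table.items()}


# Made-up model: chords tend to persist, and observations are noisy.
states = ["C", "G"]
log_start = {"C": math.log(0.5), "G": math.log(0.5)}
log_trans = logs({"C": {"C": 0.9, "G": 0.1}, "G": {"C": 0.1, "G": 0.9}})
log_emit = logs({"C": {"c_like": 0.8, "g_like": 0.2},
                 "G": {"c_like": 0.2, "g_like": 0.8}})

frames = ["c_like", "c_like", "g_like", "c_like", "c_like",
          "g_like", "g_like", "g_like"]
decoded = viterbi(frames, states, log_start, log_trans, log_emit)
```

With these numbers the isolated `g_like` frame in the third position is smoothed over and stays labelled C, while the run of three `g_like` frames at the end is decoded as G. That smoothing effect is the main reason to decode with an HMM rather than label each frame independently.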

(OS7-5) Auditory Spectral Summarisation for Audio Signals with Musical Applications

Sam Ferguson and Densil Cabrera

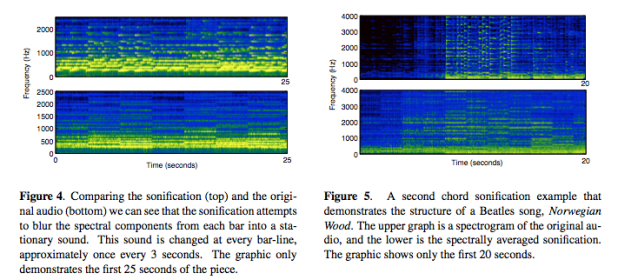

Abstract: Methods for spectral analysis of audio signals and their graphical display are widespread. However, assessing music and audio in the visual domain involves a number of challenges in the translation between auditory images into mental or symbolically represented concepts. This paper presents a spectral analysis method that exists entirely in the auditory domain, and results in an auditory presentation of a spectrum. It aims to strip a segment of audio signal of its temporal content, resulting in a quasi-stationary signal that possesses a similar spectrum to the original signal. The method is extended and applied for the purpose of music summarisation.

Notes: Statistical sonification: listening to a spectrum can be aided by turning the audio into a steady-state sound. The algorithm works in the time domain. (TJ should do this at the Echo Nest!)
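As a rough illustration of "same spectrum, no temporal content", here is a sketch using phase randomization in the frequency domain. This is explicitly not the authors' algorithm (theirs is described as a time-domain method); it is a generic technique swapped in to show the idea. The naive `dft` helper and the impulse test signal are my own assumptions, chosen so the example needs only the standard library.

```python
import cmath
import math
import random


def dft(x):
    """Naive discrete Fourier transform (fine for short illustrative signals)."""
    n = len(x)
    return [sum(x[t] * cmath.exp(-2j * math.pi * k * t / n) for t in range(n))
            for k in range(n)]


def randomize_phase(signal, rng):
    """Keep each bin's magnitude, replace its phase with a random one."""
    n = len(signal)
    spectrum = dft(signal)
    new = [0j] * n
    new[0] = spectrum[0]  # keep the DC bin as is
    for k in range(1, (n + 1) // 2):
        new[k] = cmath.rect(abs(spectrum[k]), rng.uniform(0.0, 2.0 * math.pi))
        new[n - k] = new[k].conjugate()  # mirror bin, so the output stays real
    if n % 2 == 0:
        new[n // 2] = spectrum[n // 2]  # keep the Nyquist bin as is
    # Inverse DFT; the imaginary part is only rounding error.
    return [sum(new[k] * cmath.exp(2j * math.pi * k * t / n) for k in range(n)).real / n
            for t in range(n)]


# A single click: all of its "temporal content" sits at one instant.
click = [0.0] * 32
click[5] = 1.0
smeared = randomize_phase(click, random.Random(0))

mags_in = [abs(c) for c in dft(click)]
mags_out = [abs(c) for c in dft(smeared)]
```

The click and the smeared signal have the same magnitude spectrum, but the energy of the smeared version is spread across the whole segment instead of sitting at one sample.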

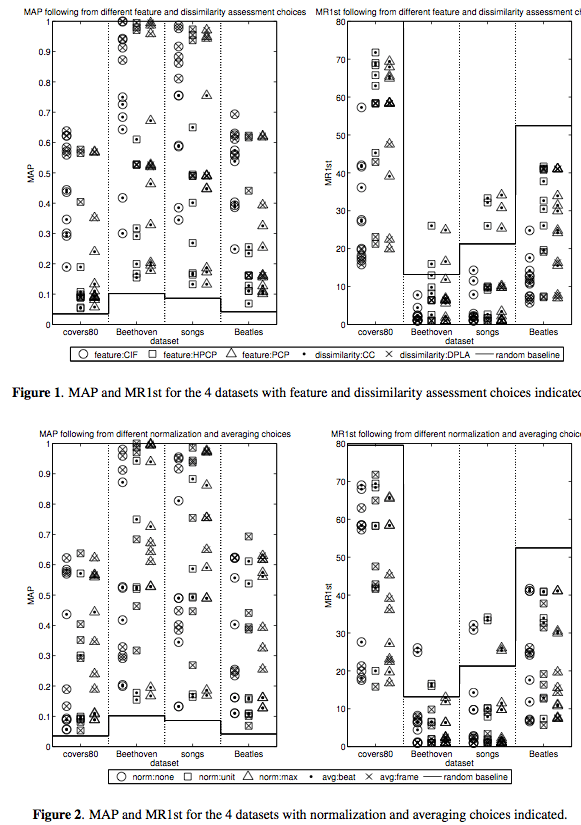

(OS7-6) Cover Song Retrieval: A Comparative Study of System Component Choices

Cynthia C.S. Liem and Alan Hanjalic

Abstract: The Cover Song Retrieval (CSR) problem has received considerable attention in the MIREX 2006-2008 evaluation sessions. While the reported performance figures provide a general idea about the strengths of the submitted systems, it is not clear what actually causes the reported performance of a certain system. In other words, the question arises whether some system component design choices are more critical for a system’s performance results than others. In order to obtain a better understanding of the performance of current CSR approaches and to give recommendations for future research in the field of CSR, we designed and performed a comparative study involving system component design approaches from the best-performing systems in MIREX 2006 and 2007. The datasets used for evaluation were carefully chosen to cover the broad spectrum of the cover song domain, while still providing designated test cases. While the choice of the dissimilarity assessment method was found to cause the largest CSR performance boost and very good retrieval results were obtained on classical opus retrieval cases, results obtained on a new test case, involving recordings originating from different microphone sets, point out new challenges in optimizing the feature representation step.

A very thorough, interesting talk.

#1 by jeremy on November 1, 2009 - 10:10 pm

Great series of writeups, Paul. Thank you. Sounds like a really good conference — sad to have missed it.