Building a music map

Posted by Paul in data, fun, java, Music, research, The Echo Nest, visualization, web services on May 31, 2009

I like maps, especially maps that show music spaces – in fact I like them so much I have one framed, hanging in my kitchen. I’d like to create a map for all of music. Like any good map, this map should work at multiple levels; it should help you understand the global structure of the music space, while allowing you to dive in and see fine detailed structure as well. Just as Google Maps can show you that Africa is south of Europe and moments later that Stark St. intersects with Reservoir St. in Nashua, NH, a good music map should be able to show you at a glance how Jazz, Blues and Rock relate to each other, while moments later letting you find an unknown 80s hair metal band that sounds similar to Bon Jovi.

My goal is to build a map of the artist space, one that allows you to explore the music space at a global level, to understand how different music styles relate, but that also allows you to zoom in and explore the finer structure of the artist space.

I’m going to base the music map on the artist similarity data collected from the Echo Nest artist similarity web service. This web service lets you get the 15 most similar artists for any artist. Using this web service I collected the artist similarity info for about 70K artists, along with each artist’s familiarity and hotness.

Some Explorations

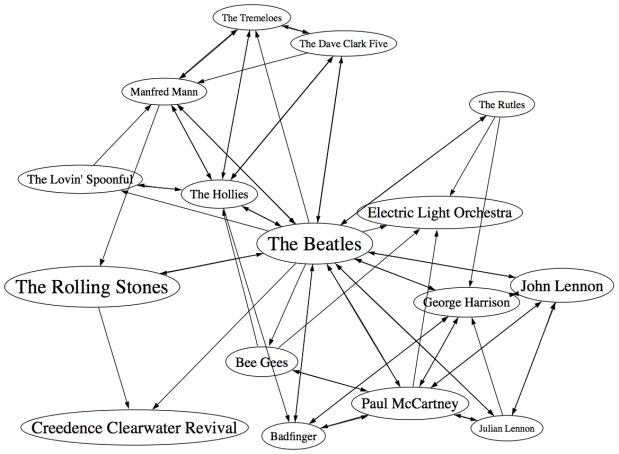

It would be silly to start trying to visualize 70K artists right away – the 250K artist-to-artist links would overwhelm just about any graph layout algorithm. The graph would look like this. So I started small, with just the near neighbors of The Beatles (Beatles-K1). For my first experiment, I graphed the nearest neighbors to The Beatles. This plot shows how the 15 near neighbors to The Beatles all connect to each other.

In the graph, artist names are sized proportional to the familiarity of the artist. The Beatles are bigger than The Rutles because they are more familiar. I think the graph is pretty interesting, showing how all of the similar artists of The Beatles relate to each other. However, the graph is also really scary because it shows 64 interconnections for these 16 artists. This graph shows just the outgoing links for The Beatles. If we include the incoming links to The Beatles (the artist similarity function is asymmetric, so outgoing similarities and incoming similarities are not the same), it becomes a real mess:

If you extend this graph one more level – to include the friends of the friends of The Beatles (Beatles-K2), the graph becomes unusable. Here’s a detail, click to see the whole mess. It is only 116 artists with 665 edges, but already you can see that it is not going to be usable.
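The Beatles-K1 and Beatles-K2 neighborhoods can be thought of as a breadth-first walk over the similarity lists, stopped after one or two hops. Here is a minimal sketch of that idea in Python. The `similar` dictionary and the function names are stand-ins invented for illustration (the real data comes from the web service, 15 artists per list), and the toy links below are made up.

```python
from collections import deque

def neighborhood(seed, similar, depth):
    """Return every artist reachable from seed in at most `depth` hops."""
    seen = {seed: 0}              # artist -> hop count from the seed
    queue = deque([seed])
    while queue:
        artist = queue.popleft()
        if seen[artist] == depth:
            continue              # do not expand past the requested level
        for other in similar.get(artist, []):
            if other not in seen:
                seen[other] = seen[artist] + 1
                queue.append(other)
    return seen

def edges_within(artists, similar):
    """Keep only the directed similarity links whose endpoints are both in the set."""
    return [(a, b) for a in artists for b in similar.get(a, []) if b in artists]

# Toy, made-up similarity lists (hypothetical data, not from the Echo Nest).
similar = {
    "The Beatles": ["The Rutles", "The Swinging Blue Jeans"],
    "The Rutles": ["The Beatles"],
    "The Swinging Blue Jeans": ["The Beatles", "The Kinks"],
    "The Kinks": ["The Swinging Blue Jeans"],
}
k1 = neighborhood("The Beatles", similar, 1)
k2 = neighborhood("The Beatles", similar, 2)
k1_edges = edges_within(k1, similar)
```

Because the links are directed, `edges_within` counts A to B and B to A separately, which is one reason the edge count climbs so quickly as the neighborhood grows.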

Eliminating the edges

Clearly the approach of drawing all of the artist connections is not going to scale beyond a few dozen artists. One approach is to just throw away all of the edges. Instead of showing a graph representation, use an embedding algorithm like MDS or t-SNE to position the artists in the space. These algorithms lay out items by attempting to minimize the energy in the layout. It’s as if all of the similar items are connected by invisible springs which will push all of the artists into positions that minimize the overall tension in the springs. The result should show that similar artists are near each other, and dissimilar artists are far away. Here’s a detail of an example for the friends of the friends of the Beatles plot. (Click on it to see the full plot)
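To make the spring picture concrete, here is a small relaxation loop in plain Python. It is neither MDS nor t-SNE proper, just a sketch of the shared intuition: every pair of items gets a spring whose rest length is the distance we would like between them, and the loop nudges positions until the springs settle. The function names, the step count, the rate and the target distances are all assumptions made up for illustration.

```python
import math

def distance(pos, a, b):
    """Euclidean distance between two placed items."""
    return math.hypot(pos[a][0] - pos[b][0], pos[a][1] - pos[b][1])

def spring_layout(target, steps=500, rate=0.05):
    """Relax springs whose rest lengths are the target distances.

    `target` maps (a, b) pairs to the distance we would like between them.
    """
    names = sorted({name for pair in target for name in pair})
    # Start evenly spaced on a unit circle so no two items coincide.
    pos = {
        name: [math.cos(2 * math.pi * i / len(names)),
               math.sin(2 * math.pi * i / len(names))]
        for i, name in enumerate(names)
    }
    for _ in range(steps):
        for (a, b), rest in target.items():
            dx = pos[b][0] - pos[a][0]
            dy = pos[b][1] - pos[a][1]
            length = math.hypot(dx, dy) or 1e-9
            # Positive when the spring is stretched, negative when compressed.
            pull = rate * (length - rest) / length
            pos[a][0] += pull * dx
            pos[a][1] += pull * dy
            pos[b][0] -= pull * dx
            pos[b][1] -= pull * dy
    return pos

# Made-up target distances: A and B are alike, C is unlike both.
target = {("A", "B"): 1.0, ("A", "C"): 3.0, ("B", "C"): 3.0}
layout = spring_layout(target)
```

In practice the target distances would have to be derived from the similarity data somehow, and that conversion is a design choice of its own that this sketch leaves open.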

I find this type of visualization to be quite unsatisfying. Without any edges in the graph I find it hard to see any structure. I think I would find this graph hard to use for exploration. (Although it is fun to see the clustering of bands like The Animals, The Turtles, The Byrds, The Kinks and The Monkees.)

Drawing some of the edges

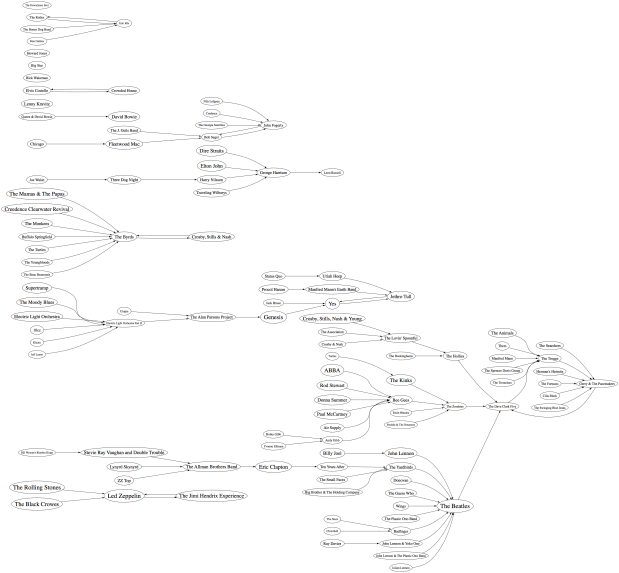

We can’t draw all of the edges, because the graph just gets too dense, but if we don’t draw any edges, the map loses too much structure, making it less useful for exploration. So let’s see if we can draw only some of the edges – this should bring back some of the structure, without overwhelming us with connections. The tricky question is “Which edges should I draw?”. The obvious choice would be to attach each artist to the artist that it is most similar to. When I apply this to the Beatles-K2 neighborhood we get something like this:

This clearly helps quite a bit. We no longer have the bowl of spaghetti, while we can still see some structure. We can even see some clustering that makes sense (Led Zeppelin is clustered with Jimi Hendrix and the Rolling Stones while Air Supply is closer to the Bee Gees). But there are some problems with this graph. First, it is not completely connected: there are 14 separate clusters varying from a size of 1 to a size of 57. This disconnection is not really acceptable. Second, there are a number of non-intuitive flows from familiar to less familiar artists. It just seems wrong that bands like the Moody Blues, Supertramp and ELO are connected to the rest of the music world via Electric Light Orchestra II (shudder).

To deal with the ELO II problem I tried a different strategy. Instead of attaching an artist to its most similar artist, I attach it to the most similar artist that also has the closest, but greater, familiarity. This should prevent us from attaching the Moody Blues to the world via ELO II, since ELO II is of much less familiarity than the Moody Blues. Here’s the plot:

Now we are getting somewhere. I like this graph quite a bit. It has a nice left to right flow from popular to less popular, we are not overwhelmed with edges, and ELO II is in its proper subservient place. The one problem with the graph is that it is still disjoint. We have 5 clusters of artists. There’s no way to get to ABBA from the Beatles even though we know that ABBA is a near neighbor to the Beatles. This is a direct product of how we chose the edges. Since we are only using some of the edges in the graph, there’s a chance that some subgraphs will be disjoint. When I look at a larger neighborhood (Beatles-K3), the graph becomes even more disjoint, with a hundred separate clusters. We want to be able to build a graph that is not disjoint at all, so we need a new way to select edges.
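The edge rule and the disjointness it produces are easy to reproduce on toy data. The sketch below is one possible reading of the rule: among an artist's similar artists that are more familiar than it is, pick the one whose familiarity is closest, breaking ties by similarity rank. The function names are invented for illustration and the familiarity numbers and similarity lists are made up, so this is an assumption about the method rather than a copy of it.

```python
def pick_parent(artist, similar, familiarity):
    """Link an artist to a more familiar similar artist, or return None."""
    own = familiarity[artist]
    candidates = [
        (familiarity[other] - own, rank, other)
        for rank, other in enumerate(similar.get(artist, []))
        if familiarity[other] > own
    ]
    if not candidates:
        return None               # nothing more familiar: this artist is a root
    _, _, parent = min(candidates)
    return (artist, parent)

def count_clusters(artists, edges):
    """Count connected components with a small union-find."""
    parent = {a: a for a in artists}

    def find(a):
        while parent[a] != a:
            parent[a] = parent[parent[a]]   # path halving
            a = parent[a]
        return a

    for a, b in edges:
        parent[find(a)] = find(b)
    return len({find(a) for a in artists})

# Made-up numbers, chosen only to show how a second cluster appears.
familiarity = {"The Beatles": 0.9, "ABBA": 0.8, "The Rutles": 0.4}
similar = {
    "The Beatles": ["The Rutles", "ABBA"],
    "ABBA": ["The Rutles"],
    "The Rutles": ["The Beatles"],
}
artists = list(familiarity)
edges = [e for e in (pick_parent(a, similar, familiarity) for a in artists) if e]
clusters = count_clusters(artists, edges)
```

In this toy data ABBA has no more familiar neighbor, so it gets no edge at all and ends up as a cluster of its own, even though The Beatles list it as similar.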

Minimum Spanning Tree

One approach to making sure that the entire graph is connected is to generate the minimum spanning tree for the graph. A spanning tree connects every node in a graph using as few edges as possible; the minimum spanning tree is the one whose edges have the lowest total weight. If we start with a connected graph, the minimum spanning tree is guaranteed to be connected as well. This will eliminate our disjoint clusters. For this next graph, I built the minimum spanning tree of the Beatles-K2 graph.

As predicted, we no longer have separate clusters within the graph. We can find a path between any two artists in the graph. This is a big win: we should be able to scale this approach up to an even larger number of artists without ever having to worry about disjoint clusters. The whole world of music is connected in a single graph. However, there’s something a bit unsatisfying about this graph. The Beatles are connected to only two other artists: John Lennon & The Plastic Ono Band and The Swinging Blue Jeans. I’ve never heard of the Swinging Blue Jeans. I’m sure they sound a lot like the Beatles, but I’m also sure that most Beatles fans would not tie the two bands together so closely. Our graph topology needs to be sensitive to this. One approach is to weight the edges of the graph differently. Instead of weighting them by similarity, the edges can be weighted by the difference in familiarity between two artists. The Beatles and Rolling Stones have nearly identical familiarities so the weight between them would be close to zero, while The Beatles and the Swinging Blue Jeans have very different familiarities, so the weight on the edge between them would be very high. Since the minimum spanning tree minimizes the overall weight of the edges in the graph, it will choose low weight edges before it chooses high weight edges. The result is that we will still end up with a single graph, with none of the disjoint clusters, but artists will be connected to other artists of similar familiarity when possible. Let’s try it out:

Now we see that popular bands are more likely to be connected to other popular bands, and the Beatles are no longer directly connected to “The Swinging Blue Jeans”. I’m pretty happy with this method of building the graph. We are not overwhelmed by edges, we don’t get a whole forest of disjoint clusters, and the connections between artists make sense.
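For readers who want to experiment, here is a compact sketch of the familiarity-weighted spanning tree using Kruskal's algorithm in plain Python. The graphs in this post were built with Jung, so this is an illustration of the idea and not the code that produced them. It treats similarity links as undirected, which is a simplification, and the familiarity values and links below are made up.

```python
def minimum_spanning_tree(nodes, weighted_edges):
    """Kruskal's algorithm. `weighted_edges` is a list of (weight, a, b)."""
    parent = {n: n for n in nodes}

    def find(n):
        while parent[n] != n:
            parent[n] = parent[parent[n]]   # path halving
            n = parent[n]
        return n

    tree = []
    for weight, a, b in sorted(weighted_edges):   # cheapest edges first
        root_a, root_b = find(a), find(b)
        if root_a != root_b:                      # skip edges that close a cycle
            parent[root_a] = root_b
            tree.append((a, b))
    return tree

# Made-up familiarity values and similarity links, for illustration only.
familiarity = {
    "The Beatles": 0.9,
    "The Rolling Stones": 0.89,
    "John Lennon & The Plastic Ono Band": 0.6,
    "The Swinging Blue Jeans": 0.3,
}
links = [
    ("The Beatles", "The Rolling Stones"),
    ("The Beatles", "The Swinging Blue Jeans"),
    ("The Beatles", "John Lennon & The Plastic Ono Band"),
    ("John Lennon & The Plastic Ono Band", "The Swinging Blue Jeans"),
    ("The Rolling Stones", "John Lennon & The Plastic Ono Band"),
]
# Weight each link by the difference in familiarity between its endpoints.
weighted = [(abs(familiarity[a] - familiarity[b]), a, b) for a, b in links]
tree = minimum_spanning_tree(familiarity, weighted)
```

With these numbers the expensive Beatles to Swinging Blue Jeans link is never needed, because the cheaper links already connect everything.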

Of course we can build the graph by starting from different artists. This gives us a deep view into that particular type of music. For instance, here’s a graph that starts from Miles Davis:

Here’s a near neighbor graph starting from Metallica:

And here’s one showing the near neighbors to Johann Sebastian Bach:

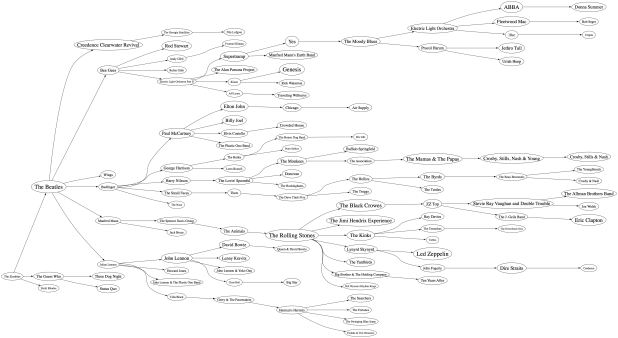

This graphing technique works pretty well, so let’s try a larger set of artists. Here I’m plotting the top 2,000 most popular artists. Now, unlike the Beatles neighborhood, this set of artists is not guaranteed to be connected, so we may have some disjoint clusters in the plot. That is expected and reasonable. The image of the resulting plot is rather large (about 16MB) so here’s a small detail; click on the image to see the whole thing. I’ve also created a PDF version which may be easier to browse through.

I’m pretty pleased with how these graphs have turned out. We’ve taken a very complex space and created a visualization that shows some of the higher level structure of the space (jazz artists are far away from the thrash artists) as well as some of the finer details – the female bubblegum pop artists are all near each other. The technique should scale up to even larger sets of artists. Memory and compute time become the limiting factors, not graph complexity. Still, the graphs aren’t perfect – seemingly inconsequential artists sometimes appear as gateways into whole sub-genres. A bit more work is needed to figure out a better ordering for nodes in the graph.

Some things I’d like to try, when I have a bit of spare time:

- Create graphs with 20K artists (needs lots of memory and CPU)

- Try to use top terms or tags of nearby artists to give labels to clusters of artists – so we can find the Baroque composers or the hair metal bands

- Color the nodes in a meaningful way

- Create dynamic versions of the graph to use them for music exploration. For instance, when you click on an artist you should be able to hear the artist and read what people are saying about them.

To create these graphs I used some pretty nifty tools:

- The Echo Nest developer web services – I used these to get the artist similarity, familiarity and hotness data. The artist similarity data that you get from the Echo Nest is really nice. Since it doesn’t rely directly on collaborative filtering approaches it avoids the problems I’ve seen with data from other sources of artist similarity. In particular, the Echo Nest similarity data is not plagued by hubs (for some music services, a popular band like Coldplay may have hundreds or thousands of near neighbors due to a popularity bias inherent in CF style recommendation). Note that I work at the Echo Nest. But don’t be fooled into thinking I like the Echo Nest artist similarity data because I work there. It really is the other way around. I decided to go and work at the Echo Nest because I like their data so much.

- Graphviz – a tool for rendering graphs

- Jung – a Java library for manipulating graphs
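As a rough idea of what the Graphviz step can look like, the sketch below writes a DOT file in which each artist's label is sized by familiarity, as in the plots above. The function names, the font size range and the assumption that familiarity falls between 0 and 1 are illustrative choices, not the settings used for the graphs in this post, and the sample values are made up.

```python
def quote(name):
    """Wrap a name in double quotes, escaping any quotes inside it."""
    return '"' + name.replace('"', '\\"') + '"'

def to_dot(edges, familiarity, min_size=8, max_size=40):
    """Build an undirected DOT graph with label size scaled by familiarity."""
    lines = ["graph music {", "  node [shape=plaintext];"]
    for artist, fam in sorted(familiarity.items()):
        size = min_size + (max_size - min_size) * fam
        lines.append("  %s [fontsize=%.0f];" % (quote(artist), size))
    for a, b in edges:
        lines.append("  %s -- %s;" % (quote(a), quote(b)))
    lines.append("}")
    return "\n".join(lines)

# Made-up familiarity values for illustration.
familiarity = {"The Beatles": 1.0, "The Rutles": 0.5}
dot = to_dot([("The Beatles", "The Rutles")], familiarity)
```

Saving the string to a file such as `music.dot` and running `dot -Tpng music.dot -o music.png` renders it; other Graphviz layout programs, such as `neato`, accept the same file.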

If you have any ideas about graphing artists, or if you’d like to see a neighborhood of a particular artist, please let me know.

Beat Rotation Experiments

Posted by Paul in Music, remix, research, The Echo Nest on May 29, 2009

Doug Repetto, researcher at Columbia University (and founder of dorkbot), has been taking the Echo Nest Remix API for a spin. Doug is interested in how beat displacement and re-ordering affects the perception of different kinds of music. To kick off his research, he’s created some really interesting beat rotation experiments. Here are a couple of examples.

Rich Skaggs & Friends playing Bill Monroe, “Big Mon”, rotated so that the beats are in order 2 3 4 1 2 3 4 1 2 3 4 1 2 3 4 1:

The same song rotated so that the beats are in order 1 3 4 2 1 3 4 2 1 3 4 2 1 3 4 2:

This time rotated so that the beats are in order 1 3 4 2 1 2 3 4 1 3 4 2 1 2 3 4:

All 3 versions are musically interesting and sound different. I’m amazed at how music that sounds so complex can be manipulated so simply to give such interesting results. Doug has lots more examples of his experiments: rotational energy and centripetal force. If you are interested in computational remixology, it is worth checking out.
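The reorderings themselves are simple list manipulations. The sketch below shows one way to express them in plain Python. It is not Doug's code: it assumes the song is in 4/4, that the beat list starts on a downbeat, and the function name is made up. Integers stand in for the beats here.

```python
def reorder_beats(beats, patterns, beats_per_bar=4):
    """Reorder beats bar by bar.

    `patterns` is a list of 1-based orderings that is cycled through,
    one ordering per bar.
    """
    out = []
    bars = [beats[i:i + beats_per_bar]
            for i in range(0, len(beats), beats_per_bar)]
    for n, bar in enumerate(bars):
        pattern = patterns[n % len(patterns)]
        if len(bar) < beats_per_bar:
            out.extend(bar)       # leave an incomplete final bar untouched
        else:
            out.extend(bar[p - 1] for p in pattern)
    return out

beats = list(range(1, 9))         # two bars: beats 1-4 and 5-8
rotated = reorder_beats(beats, [[2, 3, 4, 1]])
alternating = reorder_beats(beats, [[1, 3, 4, 2], [1, 2, 3, 4]])
```

With real audio, the list would hold beat objects from an analysis instead of integers, and the reordered list would then be stitched back into a single piece of audio.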

I may never use iTunes again

On the Spotify blog there’s a video demo of Spotify running on Android (the Google mobile OS). This is a demo of work-in-progress, but already it shows that just as Spotify is pushing the bounds on the desktop, they are going to push the bounds on mobile devices. The demo shows that you get the full Spotify experience on your device. You can listen to just about any song by any artist. No waiting for music to load, it just starts playing right away. All your Spotify playlists are available on your device. You don’t have to do that music shuffle game that you play with the iPod – where you have to decide on Sunday what songs you will want to listen to on Tuesday.

I think the killer feature in the demo is offline syncing. You can make any playlist available for listening even when you are offline. When you mark a playlist for offline sync, the tracks in the playlist are downloaded to your device allowing you to listen to them in those places that have no Internet connection (such as a plane, the subway or Vermont). The demo also shows how Spotify keeps all your playlists magically in sync. Add a song to one of your Spotify playlists while sitting at your computer and the corresponding playlist on your device is instantly updated. Totally cool. I do worry that the record labels may balk at the offline sync feature. Spotify may be pushing the bounds further than the labels want to go, by letting us listen to any music at any time, whether at home, in the office or mobile.

Much of my daily music listening is now through the Spotify desktop client. The folks at Spotify continue to add music at a phenomenal rate (100K new tracks in the last week). The only reason I ever fire up iTunes now is to synchronize music to my iPhone. It is no secret that Spotify is also working on an iPhone version of their mobile app. I can’t wait to get a hold of it. When that happens, I may never use iTunes again.

Check out the demo:

Artist similarity, familiarity and hotness

Posted by Paul in Music, recommendation, The Echo Nest, visualization, web services on May 25, 2009

The Echo Nest developer web services offer a number of interesting pieces of data about an artist, including similar artists, artist familiarity and artist hotness. Familiarity is an indication of how well known the artist is, while hotness (which we spell as the zoolanderish ‘hotttnesss’) is an indication of how much buzz the artist is getting right now. Top familiar artists are bands like Led Zeppelin, Coldplay, and The Beatles, while top ‘hottt’ artists are artists like Katy Perry, The Boy Least Likely To, and Mastodon.

I was interested in understanding how familiarity, hotness and similarity interact with each other, so I spent my Memorial Day morning creating a couple of plots to help me explore this. First, I was interested in learning how the familiarity of an artist relates to the familiarity of that artist’s similar artists. When you get the similar artists for an artist, is there any relationship between the familiarity of these similar artists and the seed artist? Since ‘similar artists’ are often used for music discovery, it seems to me that on average, the similar artists should be less familiar than the seed artist. If you like the very familiar Beatles, I may recommend that you listen to ‘Bon Iver’, but if you like the less familiar ‘Bon Iver’ I wouldn’t recommend ‘The Beatles’. I assume that you already know about them. To look at this, I plotted the average familiarity for the top 15 most similar artists for each artist along with the seed artist’s familiarity. Here’s the plot:

In this plot, I’ve taken the top 18,000 most familiar artists and ordered them by familiarity. The red line is the familiarity of the seed artist, and the green cloud shows the average familiarity of the similar artists. In the plot we can see that there’s a correlation between artist familiarity and the average familiarity of similar artists. We can also see that similar artists tend to be less familiar than the seed artist. This is exactly the behavior I was hoping to see. Our similar artist function yields similar artists that, in general, have an average familiarity that is less than that of the seed artist.

This plot can help us QA our artist similarity function. If we see that the average familiarity for similar artists deviates from the standard curve, there may be a problem with that particular artist. For instance, T-Pain has a familiarity of 0.869, while the average familiarity of T-Pain’s similar artists is 0.340. This is quite a bit lower than we’d expect – so there may be something wrong with our data for T-Pain. We can look at the similars for T-Pain and fix the problem.
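A check like this is straightforward to automate. The sketch below flags any artist whose familiarity exceeds the average familiarity of its similar artists by more than a fixed gap. The fixed threshold is a simplification of "deviates from the standard curve", and the function names, the threshold value and every number other than T-Pain's two published figures are made up for illustration.

```python
def average_similar_familiarity(artist, similar, familiarity):
    """Mean familiarity of an artist's similar artists."""
    scores = [familiarity[s] for s in similar[artist]]
    return sum(scores) / len(scores)

def flag_outliers(similar, familiarity, max_gap=0.4):
    """Return (artist, gap) pairs where the seed is far more familiar than its similars."""
    flagged = []
    for artist, others in similar.items():
        if not others:
            continue              # no similar artists, nothing to compare
        average = average_similar_familiarity(artist, similar, familiarity)
        gap = familiarity[artist] - average
        if gap > max_gap:
            flagged.append((artist, round(gap, 3)))
    return flagged

# T-Pain's 0.869 comes from the post; the placeholder artists are hypothetical
# and chosen so that their average is 0.340.
familiarity = {
    "T-Pain": 0.869, "similar_a": 0.30, "similar_b": 0.38,
    "seed_x": 0.60, "similar_c": 0.50, "similar_d": 0.50,
}
similar = {
    "T-Pain": ["similar_a", "similar_b"],
    "seed_x": ["similar_c", "similar_d"],
}
suspects = flag_outliers(similar, familiarity)
```

A more faithful version would compare each artist against the typical gap for artists of comparable familiarity, since the plot shows the expected difference changing along the curve.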

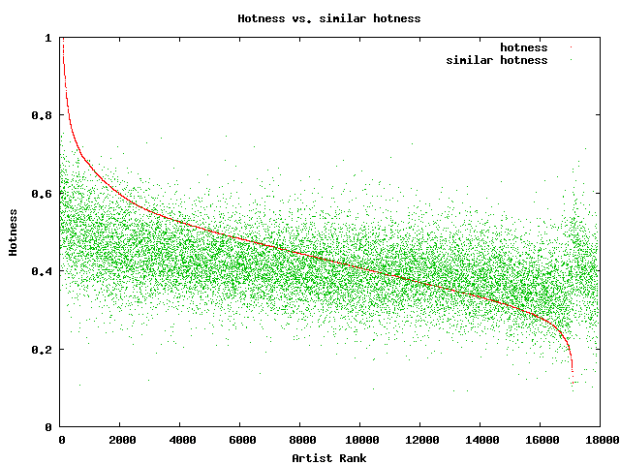

For hotness, the desired behavior is less clear. If a listener starting from a medium hot artist is looking for new music, it is unclear whether or not they’d like a hotter or colder artist. To see what we actually do, I looked at how the average hotness for similar artists compare to the hotness of the seed artist. Here’s the plot:

In this plot, the red curve shows the hotness of the top 18,000 most familiar artists. It is interesting to see the shape of the curve: there are very few ultra-hot artists (artists with a hotness above 0.8) and very few familiar, ice cold artists (with a hotness of less than 0.2). The average hotness of the similar artists seems to be somewhat correlated with the hotness of the seed artist, but markedly less than with the familiarity curve. For hotness, if your seed artist is hot, you are likely to get less hot similar artists, while if the seed artist is not hot, you are likely to get hotter artists. That seems like reasonable behavior to me.

Well, there you have it. Some Monday morning explorations of familiarity, similarity and hotness. Why should you care? If you are building a music recommender, familiarity and hotness are really interesting pieces of data to have access to. There’s a subtle game a recommender has to play: it has to give a certain number of familiar recommendations to gain trust, while also giving a certain number of novel recommendations in order to enable music discovery.

Spotify + Echo Nest == w00t!

Posted by Paul in Music, The Echo Nest, web services on May 19, 2009

Yesterday, at the SanFran MusicTech Summit, I gave a sneak preview that showed how Spotify is tapping into the Echo Nest platform to help their listeners explore for and discover new music. I must say that I am pretty excited about this. Anyone who has read this blog and its previous incarnation as ‘Duke Listens!’ knows that I am a long time enthusiast of Spotify (both the application and the team). I first blogged about Spotify way back in January of 2007 while they were still in stealth mode. I blogged about the Spotify haircuts, and their serious demeanor:

I blogged about the Spotify application when it was released to private beta: Woah – Spotify is pretty cool, and continued to blog about them every time they added another cool feature.

I’ve been a daily user of Spotify for 18 months now. It is one of my favorite ways to listen to music on my computer. It gives me access to just about any song that I’d like to hear (with a few notable exceptions – still no Beatles for instance).

It is clear to anyone who uses Spotify for a few hours that having access to millions and millions of songs can be a bit daunting. With so many artists and songs to choose from, it can be hard to decide what to listen to – Barry Schwartz calls this the Paradox of Choice – he says too many options can be confusing and can create anxiety in a consumer. The folks at Spotify understand this. From the start they’ve been building tools to help make it easier for listeners to find music. For instance, they allow you to easily share playlists with your friends. I can create a music inbox playlist that any Spotify user can add music to. If I give the URL to my friends (or to my blog readers) they can add music that they think I should listen to.

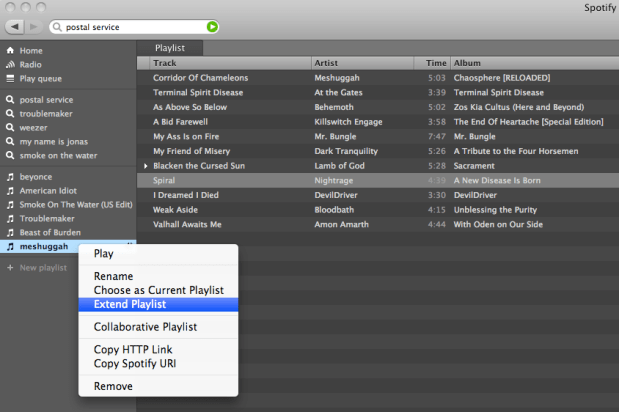

Now with the Spotify / Echo Nest connection, Spotify is going one step further in helping their listeners deal with the paradox of choice. They are providing tools to make it easier for people to explore for and discover new music. The first way that Spotify is tapping in to the Echo Nest platform is very simple, and intuitive. Right click on a playlist, and select ‘Extend Playlist’. When you do that, the playlist will automatically be extended with songs that fit in well with songs that are already in the playlist. Here’s an example:

So how is this different from any other music recommender? Well, there are a number of things going on here. First of all, most music recommenders rely on collaborative filtering (a.k.a. the wisdom of the crowds) to recommend music. This type of music recommendation works great for popular and familiar artist recommendations … if you like the Beatles, you may indeed like the Rolling Stones. But Collaborative Filtering (CF) based recommendations don’t work well when trying to recommend music at the track level. The data is often just too sparse to make recommendations. The wisdom of the crowds model fails when there is no crowd. When one is dealing with a Spotify-sized music collection of many millions of songs, there just isn’t enough user data to give effective recommendations for all of the tracks. The result is that popular tracks get recommended quite often, while less well known music is ignored. To deal with this problem many CF-based recommenders will rely on artist similarity and then select tracks at random from the set of similar artists. This approach doesn’t always work so well, especially if you are trying to make playlists with the recommender. For example, you may want a playlist of acoustic power ballads by hair metal bands of the 80s. You could seed the playlist with a song like Mötley Crüe’s Home Sweet Home, and expect to get similar power ballads, but instead you’d find your playlist populated with standard glam metal fare, with only a random chance that you’d have other acoustic power ballads. There are a boatload of other issues with wisdom of the crowds recommendations – I’ve written about them previously. Suffice it to say that it is a challenge to get a CF-based recommender to give you good track-level recommendations.

The Echo Nest platform takes a different approach to track-level recommendation. Here’s what we do:

- Read and understand what people are saying about music – we crawl every corner of the web and read every news article, blog post, music review and web page for every artist, album and track. We apply statistical and natural language processing to extract meaning from all of these words. This gives us a broad and deep understanding of the global online conversation about music

- Listen to all music – we apply signal processing and machine learning algorithms to audio to extract a number of perceptual features about music. For every song, we learn a wide variety of attributes about the song including the timbre, song structure, tempo, time signature, key, loudness and so on. We know, for instance, where every drum beat falls in Kashmir, and where the guitar solo starts in Starship Trooper.

- We combine this understanding of what people are saying about music and our understanding of what the music sounds like to build a model that can relate the two – to give us a better way of modeling a listener’s reaction to music. There’s some pretty hardcore science and math here. If you are interested in the gory details, I suggest that you read Brian’s Thesis: Learning the meaning of music.

What this all means is that with the Echo Nest platform, if you want to make a playlist of acoustic hair metal power ballads, we’ll be able to do that – we know who the hair metal bands are, and we know what a power ballad sounds like. And since we don’t rely on the wisdom of the crowds for recommendation we can avoid some of the nasty problems that collaborative filtering can lead to. I think that when people get a chance to play with the ‘Extend Playlist’ feature they’ll be happy with the listening experience.

It was great fun giving the Spotify demo at the SanFran MusicTech Summit. Even though Spotify is not available here in the U.S., the buzz that is occurring in Europe around Spotify is leaking across the ocean. When I announced that Spotify would be using the Echo Nest, there was an audible gasp from the audience. Some people were seeing Spotify for the first time, but everyone knew about it. It was great to be able to show Spotify using the Echo Nest. This demo was just a sneak preview. I expect there will be lots more interesting things to come. Stay tuned.

SanFran Music Tech summit

Posted by Paul in recommendation, The Echo Nest, web services on May 13, 2009

This weekend I’ll be heading out to San Francisco to attend the SanFran MusicTech Summit. The summit is a gathering of musicians, suits, lawyers, and techies with a focus on the convergence of music, business, technology and the law. There’s quite a set of music tech luminaries that will be in attendance, and the schedule of panels looks fantastic.

I’ll be moderating a panel on Music Recommendation Services. There are some really interesting folks on the panel: Stephen White from Gracenote, Alex Lascos from BMAT, James Miao from the Sixty One and Michael Papish from Media Unbound. I’ve been on a number of panels in the last few years. Some have been really good, some have been total train wrecks. The train wrecks occur when (1) panelists have an opportunity to show powerpoint slides, (2) a business-oriented panelist decides that the panel is just another sales call, or (3) the moderator loses control and the panel veers down a rat hole of irrelevance. As moderator, I’ll try to make sure the panel doesn’t suck, but already I can tell from our email exchanges that this crew will be relevant, interesting and funny. I think the panel will be worth attending.

We already know some of the things that we want to talk about in the panel:

- Does anyone really have a problem finding new music? Is this a problem that needs to be solved?

- What makes a good music recommendation?

- What’s better – a human or a machine recommender?

- Problems in high stakes evaluations

And some things that we definitely do not want to talk about:

- Business models

- Music industry crisis

If you are attending the summit, I hope you’ll attend the panel.

The Echo Nest remix 1.0 is released!

Posted by Paul in code, fun, Music, remix, The Echo Nest, web services on May 12, 2009

Version 1.0 of the Echo Nest remix has been released. Echo Nest Remix is an open source SDK for Python that lets you write programs that manipulate music. For example, here’s a Python function that will take all the beats of a song and reverse their order:

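    import echonest.audio as audio

    def reverse(inputFilename, outputFilename):
        # Load the file along with its Echo Nest analysis
        audioFile = audio.LocalAudioFile(inputFilename)
        # The analysis lists every detected beat, in order
        chunks = audioFile.analysis.beats
        chunks.reverse()
        # Stitch the beats back together in their new order and save
        reversedAudio = audio.getpieces(audioFile, chunks)
        reversedAudio.encode(outputFilename)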

When you apply this to a song by The Beatles you get something that sounds like this:

which is surprisingly recognizable, musical – and yet different from the original.

Quite a few web apps have been written that use remix. One of my favorites is DonkDJ, which will ‘put a donk‘ on any song. Here’s an example: Hung Up by Madonna (with a Donk on it):

This is my jam lets you create mini-mixes to share with people.

And where would the web be without the ability to add more cowbell to any song?

There’s lots of good documentation already for remix. Adam Lindsay has created a most excellent overview and tutorial for remix. There’s API documentation and there’s documentation for the underlying Echo Nest web services that perform the audio analysis. And of course, the source is available too.

So, if you are looking for that fun summer coding project, or if you need an excuse to learn Python, or perhaps you are a budding computational remixologist, download remix, grab an API key from the Echo Nest and start writing some remix code.

Here’s one more example of the fun stuff you can do with remix. Guess the song, and guess the manipulation:

Social Tags and Music Information Retrieval

Posted by Paul in Music, music information retrieval, research, tags on May 11, 2009

It is paper writing season with the ISMIR submission deadline just four days away. In the last few days a couple of researchers have asked me for a copy of the article I wrote for the Journal of New Music Research on social tags. My copyright agreement with the JNMR lets me post a pre-press version of the article – so here’s a version that is close to what appeared in the journal.

Social Tagging and Music Information Retrieval

Abstract

Social tags are free text labels that are applied to items such as artists, albums and songs. Captured in these tags is a great deal of information that is highly relevant to Music Information Retrieval (MIR) researchers including information about genre, mood, instrumentation, and quality. Unfortunately there is also a great deal of irrelevant information and noise in the tags.

Imperfect as they may be, social tags are a source of human-generated contextual knowledge about music that may become an essential part of the solution to many MIR problems. In this article, we describe the state of the art in commercial and research social tagging systems for music. We describe how tags are collected and used in current systems. We explore some of the issues that are encountered when using tags, and we suggest possible areas of exploration for future research.

Here’s the reference:

Paul Lamere. Social tagging and music information retrieval. Journal of New Music Research, 37(2):101–114.

Last.fm’s new player

Posted by Paul in Music, recommendation, tags on May 6, 2009

Last.fm pushed out a new web-based music player that has some nifty new features including an artist slideshow, multi-tag radio and multi-artist radio. It is pretty nice.

I like the new artist slide show (it is very much like Snapp Radio), but they seem to run out of unique artist images rather quickly – and what’s with the grid? It looks like I am looking at the artists through a screen window.

I really like the multi-tag radio, but it is not 100% clear to me whether it is finding music that has been tagged with all the tags or whether it just alternates between the tags. Hopefully it is the former. Update: It is the former.
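The difference between the two behaviors is easy to show with a toy example. This is just a sketch of the two strategies, not how Last.fm implements its radio: the tag data, the track names and both function names are invented for illustration.

```python
# Hypothetical tag data: tag -> tracks that carry that tag
tracks_by_tag = {
    "shoegaze": ["track A", "track B", "track C"],
    "female vocalists": ["track B", "track C", "track D"],
}


def tagged_with_all(tags, tracks_by_tag):
    """Tracks that carry every one of the given tags (the 'former')."""
    sets = [set(tracks_by_tag.get(tag, [])) for tag in tags]
    if not sets:
        return []
    return sorted(set.intersection(*sets))


def alternate_between(tags, tracks_by_tag, count):
    """Take turns between the tags, one track at a time (the 'latter').

    Assumes every tag has at least one track.
    """
    playlist = []
    for i in range(count):
        tag = tags[i % len(tags)]
        tracks = tracks_by_tag[tag]
        playlist.append(tracks[(i // len(tags)) % len(tracks)])
    return playlist


tags = ["shoegaze", "female vocalists"]
print(tagged_with_all(tags, tracks_by_tag))
# prints ['track B', 'track C']
print(alternate_between(tags, tracks_by_tag, 4))
# prints ['track A', 'track B', 'track B', 'track C']
```

With the first strategy every track matches both tags; with the second, "track A" gets played even though nobody tagged it "female vocalists".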

It is nice to see Multi-tag radio come out of the playground and into the main Last.fm player. It is a great way to get a much more fine-tuned listening experience. I do worry that Last.fm is de-emphasizing tags though. They only show a couple of tags in the player and it is hard to tell whether these are artist, album or track tags. Last.fm’s biggest treasure trove is their tag data, so they should be very careful to avoid any interface tweaks that may reduce the number of tags they collect.

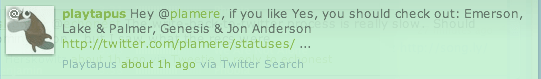

#recsplease – the Blip.fm Recommender bot

Posted by Paul in Music, recommendation, The Echo Nest, web services on May 5, 2009

Jason has put together a mashup (ah, that term seems so old and dated now) that combines twitter, blip.fm, and the Echo Nest. When you Blip a song, just add the tag #recsplease to the twitter blip and you’ll get a reply with some artists that you might like to listen to.

This is similar to recomme, developed by Adam Lindsay, but recomme has been down for a few weeks, so clearly there was a twitter-music-recommendation gap that needed to be filled.

Check out Jason’s Blip.fm/twitter recommender bot.