The Echo Nest developer web services offer a number of interesting pieces of data about an artist, including similar artists, artist familiarity and artist hotness. Familiarity is an indication of how well known the artist is, while hotness (which we spell as the Zoolanderish ‘hotttnesss’) is an indication of how much buzz the artist is getting right now. Top familiar artists are bands like Led Zeppelin, Coldplay, and The Beatles, while top ‘hottt’ artists are artists like Katy Perry, The Boy Least Likely To, and Mastodon.

I was interested in understanding how familiarity, hotness and similarity interact with each other, so I spent my Memorial Day morning creating a couple of plots to help me explore this. First, I was interested in learning how the familiarity of an artist relates to the familiarity of that artist’s similar artists. When you get the similar artists for an artist, is there any relationship between the familiarity of these similar artists and the seed artist? Since ‘similar artists’ are often used for music discovery, it seems to me that on average, the similar artists should be less familiar than the seed artist. If you like the very familiar Beatles, I may recommend that you listen to ‘Bon Iver’, but if you like the less familiar ‘Bon Iver’ I wouldn’t recommend ‘The Beatles’, since I assume that you already know about them. To look at this, I plotted the average familiarity for the top 15 most similar artists for each artist along with the seed artist’s familiarity. Here’s the plot:

In this plot, I’ve taken the top 18,000 most familiar artists and ordered them by familiarity. The red line is the familiarity of the seed artist, and the green cloud shows the average familiarity of the similar artists. In the plot we can see that there’s a correlation between artist familiarity and the average familiarity of similar artists. We can also see that similar artists tend to be less familiar than the seed artist. This is exactly the behavior I was hoping to see. Our similar artist function yields similar artists that, in general, have an average familiarity that is less than that of the seed artist.
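The averaging step behind this plot can be sketched roughly as follows. This is a minimal illustration, not the code used to make the plot: the function name, the dictionary layout, and the toy values are all assumptions, and the artist names are placeholders rather than real Echo Nest data.

```python
from statistics import mean

def average_similar_familiarity(seed, similars, familiarity, top_n=15):
    """Return the mean familiarity of the seed's top_n similar artists.

    `similars` maps an artist to a list of similar artists, most similar first.
    `familiarity` maps an artist to a score between 0 and 1.
    Returns None when no similar artist has a known familiarity.
    (Both data structures are hypothetical, chosen for illustration.)
    """
    scores = [
        familiarity[name]
        for name in similars.get(seed, [])[:top_n]
        if name in familiarity
    ]
    return mean(scores) if scores else None

# Placeholder data, invented for this example.
familiarity = {"seed": 0.95, "similar_a": 0.80, "similar_b": 0.70}
similars = {"seed": ["similar_a", "similar_b"]}

avg = average_similar_familiarity("seed", similars, familiarity)
print(f"seed={familiarity['seed']:.3f} similar-average={avg:.3f}")
```

Plotting each seed's familiarity against this average, for all seeds sorted by familiarity, would give a red line and green cloud of the kind described above.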

This plot can help us QA our artist similarity function. If the average familiarity of an artist’s similar artists deviates from the standard curve, there may be a problem with that particular artist. For instance, T-Pain has a familiarity of 0.869, while the average familiarity of T-Pain’s similar artists is 0.340. This is quite a bit lower than we’d expect, so there may be something wrong with our data for T-Pain. We can look at the similar artists for T-Pain and fix the problem.
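One simple way to automate this kind of check is sketched below. It is an assumption on my part, not our actual QA process: it measures the gap between each seed’s familiarity and its similar-artist average, then flags artists whose gap is far from the median gap. The function name and the `tolerance` value are hypothetical, and only the T-Pain figures come from the text above; the other rows are placeholders.

```python
from statistics import median

def flag_outliers(points, tolerance=0.2):
    """Flag artists whose familiarity gap is unusually far from the typical gap.

    `points` maps artist -> (seed_familiarity, avg_similar_familiarity).
    An artist is flagged when its gap differs from the median gap by more
    than `tolerance` (an arbitrary threshold chosen for illustration).
    """
    gaps = {name: seed - avg for name, (seed, avg) in points.items()}
    typical = median(gaps.values())
    return sorted(name for name, gap in gaps.items() if abs(gap - typical) > tolerance)

points = {
    "T-Pain": (0.869, 0.340),     # values quoted in the text
    "artist_a": (0.90, 0.75),     # placeholder rows, invented
    "artist_b": (0.80, 0.68),
    "artist_c": (0.70, 0.60),
    "artist_d": (0.60, 0.52),
}

print(flag_outliers(points))
```

Using the median rather than the mean keeps a few badly wrong artists from shifting the baseline they are being compared against.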

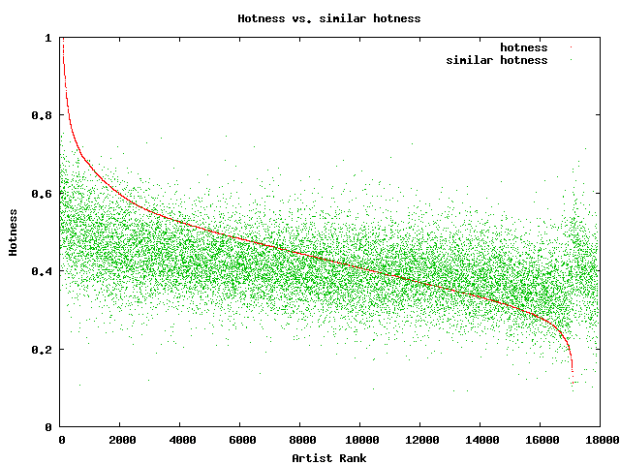

For hotness, the desired behavior is less clear. If a listener starting from a medium hot artist is looking for new music, it is unclear whether they’d prefer a hotter or a colder artist. To see what we actually do, I looked at how the average hotness for similar artists compares to the hotness of the seed artist. Here’s the plot:

In this plot, the red curve shows the hotness of the top 18,000 most familiar artists. The shape of the curve is interesting: there are very few ultra-hot artists (artists with a hotness above 0.8) and very few familiar, ice cold artists (with a hotness of less than 0.2). The average hotness of the similar artists seems to be somewhat correlated with the hotness of the seed artist, but markedly less so than in the familiarity plot. For hotness, if your seed artist is hot, you are likely to get less hot similar artists, while if the seed artist is not hot, you are likely to get hotter artists. That seems like reasonable behavior to me.
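I only eyeballed the plots, but the ‘somewhat correlated’ impression could be put into a number with a Pearson correlation coefficient, computed once for familiarity and once for hotness. The sketch below is a plain-Python version of that standard formula; the function name and the sample values are made up for illustration and are not measurements from our data.

```python
from math import sqrt

def pearson(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two sequences of equal length, at least 2 items")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for a constant sequence")
    return cov / sqrt(var_x * var_y)

# Invented sample: seed hotness vs. average hotness of similar artists.
seed_hotness = [0.2, 0.4, 0.6, 0.8]
similar_hotness = [0.35, 0.40, 0.50, 0.55]
print(round(pearson(seed_hotness, similar_hotness), 3))
```

A value near 1 would mean the similar-artist average tracks the seed closely, so comparing the familiarity and hotness coefficients would show how much weaker the hotness relationship really is.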

Well, there you have it: some Monday morning explorations of familiarity, similarity and hotness. Why should you care? If you are building a music recommender, familiarity and hotness are really interesting pieces of data to have access to. There’s a subtle game a recommender has to play: it has to give a certain number of familiar recommendations to gain trust, while also giving a certain number of novel recommendations to enable music discovery.

#1 by lotb on May 25, 2009 - 4:13 pm

Interesting. What do you think is the best method of determining artist similarity?

#2 by plamere on May 26, 2009 - 7:17 am

lotb:

Best way to determine artist similarity? Not an easy question to answer. There are a number of ways. (1) Self-identification, where the artist says who they sound like. This is not always done and is not consistent, and artists may position themselves closer to very popular artists in order to get some reflected popularity. (2) Expert identification, where a music expert creates a list of similar artists. This is what All Music Guide does. It can give you excellent results, but it suffers from a scaling problem: it is hard to do this consistently for a million artists. (3) Collaborative filtering: people who listened to X also listened to Y. This generates very good similarities for popular and mid-tail artists. It has problems with long tail artists (aka the cold start problem), has problems with feedback loops (i.e. the rich get richer) and can be easily manipulated by hackers. (4) Web mining: reading news, reviews, blogs, playlists etc. and applying statistical and natural language processing to the text to generate weighted descriptive terms for each artist, then determining similarity based upon how these terms overlap between artists. This approach gives good similarity, perhaps even deeper into the long tail than collaborative filtering, and is less subject to the feedback and hacking problems of CF. It is harder to get right, though, because there are tricky web mining issues. For example, the band “The The” is difficult to deal with via this approach. (5) Content-based: applying signal processing and machine learning to the audio to build an audio-based music similarity function. This approach is immune to the cold start, hacking and feedback loop problems but is much harder to implement, and the results are not always relevant. The best approach is a hybrid that combines all of these approaches into a single similarity function.
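To make the term-overlap and hybrid ideas a little more concrete, here is a rough sketch. It assumes cosine similarity as the overlap measure and a weighted average as the way to combine methods; neither is stated above as what any real system does, and the function names, terms, weights and scores are all invented for illustration.

```python
from math import sqrt

def cosine_similarity(terms_a, terms_b):
    """Cosine similarity between two {term: weight} dictionaries."""
    shared = set(terms_a) & set(terms_b)
    dot = sum(terms_a[t] * terms_b[t] for t in shared)
    norm_a = sqrt(sum(w * w for w in terms_a.values()))
    norm_b = sqrt(sum(w * w for w in terms_b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an artist with no terms is similar to nothing
    return dot / (norm_a * norm_b)

def hybrid_similarity(scores, weights):
    """Weighted average of per-method similarity scores.

    `scores` maps a method name to a similarity in [0, 1];
    `weights` maps the same method names to non-negative weights.
    """
    total = sum(weights[m] for m in scores)
    if total == 0:
        return 0.0
    return sum(scores[m] * weights[m] for m in scores) / total

# Invented weighted terms for two placeholder artists.
artist_x = {"indie": 0.9, "folk": 0.7, "acoustic": 0.4}
artist_y = {"indie": 0.8, "folk": 0.5, "electronic": 0.3}

web_score = cosine_similarity(artist_x, artist_y)
combined = hybrid_similarity(
    {"web": web_score, "cf": 0.6},   # the "cf" score is a made-up stand-in
    {"web": 1.0, "cf": 2.0},
)
print(round(web_score, 3), round(combined, 3))
```

Giving a method a lower weight where it is known to be weak, such as collaborative filtering in the long tail, is one way a hybrid could play the approaches off against each other.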