Archive for category recommendation

Finding soundtracks on spotify, mog and rdio

Posted by Paul in Music, recommendation on July 11, 2011

Ethan Kaplan over at hypebot had a problem with how hard it is to find soundtracks by John Williams on music services like Spotify and Rdio. Here’s what he said:

Try going to Spotify and browsing movie soundtracks. I’ll wait.

Try searching for John Williams. He is not a guitarist, but that is what comes up mixed in with all of the soundtrack work he has done.

And this is not something unique to Spotify, but also endemic to Rdio and Mog. Mog at least has a page of curated soundtracks, but its just as hard to find them “in the wild” as it is on Spotify. The same applies to Rdio.

Well, of course, if you search for John Williams you’ll get music by both the movie composer and the guitarist. That is only natural, because you may really want the music by the guitarist and not the music by the composer. Let’s see what happens if you go one step further than Ethan did and search for “john williams soundtracks”. Here are the results on Spotify:

Not surprisingly, there are hundreds of matches of John Williams and soundtracks. Similar results with Rdio:

Lots of John Williams soundtrack results. Rdio even offers human curated playlists filled with soundtracks. What could be better? Likewise, if you just search for soundtracks there are lots of hits:

So I don’t buy Ethan’s premise that it is hard to find soundtracks or music by the movie composer John Williams. However, Ethan’s point still stands: finding new music on current generation music services really sucks. The next generation music services need to do much better to help people explore and discover new music. Music exploration should be fun and yet we are doomed to try to explore and discover music using a tool that looks like an accountant’s spreadsheet.

Reidentification of artists and genres in the KDD cup data

Posted by Paul in data, recommendation, research on June 21, 2011

Back in February I wrote a post about the KDD Cup (an annual Data Mining and Knowledge Discovery competition), asking whether this year’s cup was really music recommendation since all the data identifying the music had been anonymized. The post received a number of really interesting comments about the nature of recommendation and whether context and content were really necessary for music recommendation, or whether user behavior was all you really needed. A few commenters suggested that it might be possible to de-anonymize the data using a constraint propagation technique.

Many voiced an opinion that such de-anonymizing of the data to expose user listening habits would indeed be unethical. Malcolm Slaney, the researcher at Yahoo! who prepared the dataset, offered the plea:

If you do de-anonymize the data please don’t tell anybody. We’ll NEVER be able to release data again.

As far as I know, no one has de-anonymized the KDD Cup dataset; however, researcher Matthew J. H. Rattigan of The University of Massachusetts at Amherst has done the next best thing. He has published a paper called Reidentification of artists and genres in the KDD cup data that shows that by analyzing the relational structures within the dataset it is possible to identify the artists, albums, tracks and genres that are used in the anonymized dataset. Here’s an excerpt from the paper that gives an intuitive description of the approach:

For example, consider Artist 197656 from the Track 1 data. This artist has eight albums described by different combinations of ten genres. Each album is associated with several tracks, with track counts ranging from 1 to 69. We make the assumption that these albums and tracks were sampled without replacement from the discography of some real artist on the Yahoo! Music website. Furthermore, we assume that the connections between genres and albums are not sampled; that is, if an album in the KDD Cup dataset is attached to three genres, its real-world counterpart has exactly three genres (or “Categories”, as they are known on the Yahoo! Music site).

Under the above assumptions, we can compare the unlabeled KDD Cup artist with real-world Yahoo! Music artists in order to find a suitable match. The band Fischer Z, for example, is an unsuitable match, as their online discography only contains seven albums. An artist such as Meatloaf certainly has enough albums (56) to be a match, but none of those albums contain more than 31 tracks. The entry for Elvis Presley contains 109 albums, 17 of which boast 69 or more tracks; however, there is no consistent assignment of genres that satisfies our assumptions. The band Tool, however, is compatible with Artist 197656. The Tool discography contains 19 albums containing between 0 and 69 tracks. These albums are described by exactly 10 genres, which can be assigned to the unlabeled KDD Cup genres in a consistent manner. Furthermore, the match is unique: of the 134k artists in our labeled dataset, Tool is the only suitable match for Artist 197656.

Of course it is impossible for Matthew to evaluate his results directly, but he did create a number of synthetic, anonymized datasets drawn from Yahoo and was able to demonstrate very high accuracy for the top artists and a 62% overall accuracy.
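To make the intuition in the excerpt a bit more concrete, here is a minimal sketch of the compatibility test it describes. This is not the paper's algorithm: it only checks album counts, track counts and the number of genres per album, and it skips the harder step of finding a consistent assignment of genre labels. The function names, the `(track_count, genre_count)` album representation and the toy catalog are all made up for illustration.

```python
from collections import defaultdict


def compatible(anon_albums, real_albums):
    """Return True if every anonymized album can be paired with a distinct
    real album that has the same number of genres and at least as many tracks.

    Each album is a (track_count, genre_count) pair. Tracks are assumed to be
    sampled without replacement; album-genre links are assumed unsampled.
    """
    anon_by_genres = defaultdict(list)
    real_by_genres = defaultdict(list)
    for tracks, genres in anon_albums:
        anon_by_genres[genres].append(tracks)
    for tracks, genres in real_albums:
        real_by_genres[genres].append(tracks)

    for genres, anon_tracks in anon_by_genres.items():
        real_tracks = sorted(real_by_genres.get(genres, []), reverse=True)
        anon_tracks = sorted(anon_tracks, reverse=True)
        # Not enough real albums with this genre count.
        if len(real_tracks) < len(anon_tracks):
            return False
        # The largest sampled album must fit in the largest real album,
        # the second largest in the second largest, and so on.
        if any(r < a for a, r in zip(anon_tracks, real_tracks)):
            return False
    return True


def candidates(anon_albums, catalog):
    """Names of all labeled artists whose discography is compatible."""
    return [name for name, albums in catalog.items()
            if compatible(anon_albums, albums)]


# Toy data, invented for this example (not taken from the KDD Cup or Yahoo!).
anon_artist = [(69, 3), (12, 2), (1, 1)]
catalog = {
    "too_few_albums": [(10, 3), (12, 2)],
    "albums_too_short": [(31, 3), (20, 2), (5, 1)],
    "plausible_match": [(69, 3), (15, 2), (9, 1), (11, 4)],
}
print(candidates(anon_artist, catalog))
```

When only one name survives the filter, as in this toy catalog, the anonymized artist has effectively been re-identified by structure alone.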

The motivation for this type of work is not to turn the KDD cup dataset into something that music recommendation researchers could use, but instead is to get a better understanding of data privacy issues. By understanding how large datasets can be de-anonymized, it will be easier for researchers in the future to create datasets that won’t easily yield their hidden secrets. The paper is an interesting read – so since you are done doing all of your reviews for RecSys and ISMIR, go ahead and give it a read: https://www.cs.umass.edu/publication/docs/2011/UM-CS-2011-021.pdf. Thanks to @ocelma for the tip.

9 reasons why Google and Apple should be worried

Posted by Paul in Music, recommendation on April 4, 2011

For the last year we’ve heard rumors of how both Apple and Google were getting close to releasing music locker services that allow music listeners to upload their music collection to the cloud giving them the ability to listen to their music everywhere. So it was a big surprise when the first major Internet player to launch a music locker service wasn’t Google or Apple, but instead was Amazon. Last week, with little fanfare, Amazon released its Amazon Cloud Drive, a cloud-based music locker that includes the Amazon Cloud Player allowing people to listen to their music anywhere. Amazon’s entry into the music locker is a big deal and should be particularly worrisome for Google and Apple. Amazon brings some special sauce to the music locker world that will make them a formidable competitor:

- Amazon can keep a secret – For the last year, we’ve heard much about the rumored Google and Apple locker services, but not a peep about the Amazon service. The first time people heard about the Amazon Locker service was when Amazon announced it on its front page. It says a lot about a large organization that can launch a major new product without rumors circulating in the industry.

- Amazon isn’t afraid to say “F*ck You” to the labels. While Apple and Google are negotiating licensing rights for the locker service, Amazon just went ahead and released their locker without any special music license. Amazon Director of Music Craig Pape told Billboard.biz “We don’t believe we need licenses to store the customers’ files. We look at it the same way as if someone bought an external hard drive and copy files on there for backup.”

- Amazon knows how to do the ‘cloud thing’ – Amazon has been leading the pack in cloud computing for years. They know how to build reliable, cost-effective cloud-based solutions, and they’ve been doing it longer than anyone. Thousands of applications have been deployed in the Amazon cloud, from big corporations to successful startups like Dropbox. Compare that to Apple’s track record with MobileMe. Of course Google knows how to do this stuff too, but they haven’t been immune to problems.

- Amazon knows about discovery – Amazon’s focus on discovery makes them a much better online bookstore than any other bookstore. They use all sorts of ways to connect a reader with a book: collaborative filtering, book reviews, customer lists, content search, best seller lists, special deals. These techniques help get their readers deep into the long tail of books. Discovery is in Amazon’s genes. Contrast that to how YouTube helps you find videos, or how well Apple’s Genius helps you find music. Amazon currently provides no discovery tools with the Amazon Cloud Music Player, but you can bet that they will be adding these features soon.

- Amazon understands the importance of metadata – Amazon has always placed a premium on collecting high quality metadata about their media. That’s why they bought IMDB, and created SoundUnwound. That’s why when I uploaded 700 albums to the Amazon cloud, Amazon found album art and metadata for every single one of them. Compare that to iTunes which after nearly 10 years, still can’t seem to find album art for 90% of my music collection.

- Amazon does APIs – this is what I’m most excited about. Imagine if and when Amazon releases the Amazon Cloud Music API that lets a developer build applications around the content stored in a music locker. This will open the door for a myriad of applications, from music visualizers, playlisting engines, event recommenders, and taste sharing, on our phones, on our set top boxes, on our computers. Amazon has led the way in making everything they do available via APIs. When they release the Amazon Cloud Music API, I think we’ll see a new level of creativity around music exploration, discovery, organization and listening.

- Amazon has done this before – The Kindle platform has already allowed you to do for books what the Amazon music locker does for music. You can buy content in the Amazon store, keep it in your locker and consume it on any device. This is not new tech for Amazon, they’ve been doing this for years already.

- Amazon has lots of customers – Last month Steve said he thought that Apple had more customer accounts than Amazon. Of course that was just a guess and Steve is not impartial. Amazon doesn’t say how many customer accounts they have, but we know it’s a lot. Amazon is clever in how they use the Music Locker to promote music purchases. Music you purchase from Amazon is stored for free in your locker, and when you buy an album your locker storage gets upgraded to 20GB for free.

- Amazon seems to care – Google has accidentally built the largest music destination on the Internet, but try to use YouTube as a place to go and find music and you are faced with the challenge of separating the good music from the many covers, remixes, parodies and just plain crap that seem to fill the channel. iTunes has gone from a pretty good way to play music to becoming something that I only use to sync new content to my phone. It is bloated, slow and painful to use. In the ten years that Apple has been king of the digital music hill they’ve done little to help improve the music listening experience. Apple has moved on to video and Apps. Music is just another feature. Contrast that with what Amazon has done with the Kindle – they’ve made a device that arguably improves the reading experience. They chose eInk over color display, they keep the non-reading features to a minimum, they give a reader great discovery tools like the ability to sample the first few chapters of any book. I’m hopeful that Amazon will apply their same sense of care for books to the world of music.

Amazon’s music locker is not perfect by any means. There’s no iPhone app, the storage is too expensive, and there are no discovery or automatic playlisting features in the player. But what they’ve built is solid and usable. I’m also not bullish on music lockers. I’d rather pay $10 a month to listen to any of 5 million tracks than buy tracks at a dollar each. But I’m glad to see Amazon position itself so aggressively in this space. The competition between Google, Apple and Amazon will lead to a better music experience for us all.

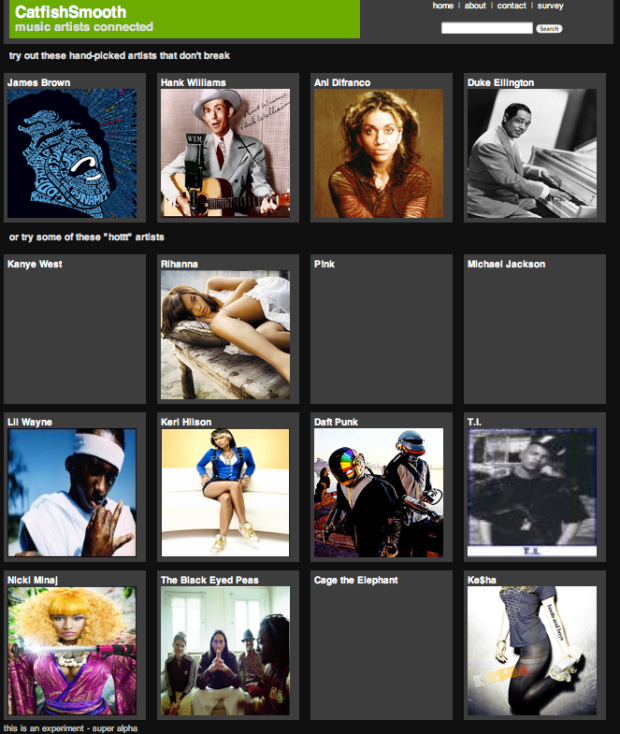

catfish smooth

Posted by Paul in data, Music, recommendation, research on January 20, 2011

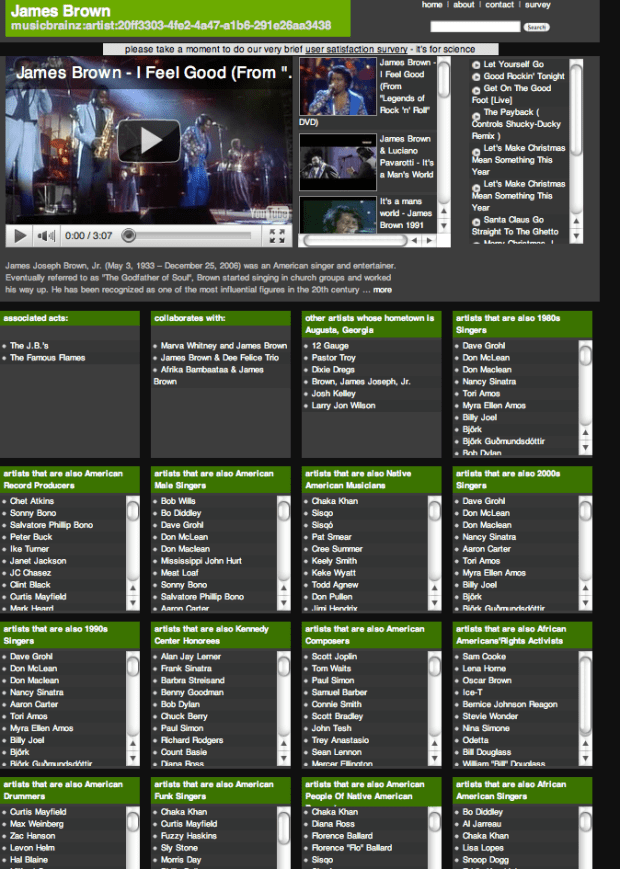

Kurt Jacobson is a recent addition to the staff here at The Echo Nest. Kurt has built a music exploration site called catfish smooth that allows you to explore the connections between artists. Kurt describes it as: all about connections between music artists. In a sense, it is a music artist recommendation system but more. For each artist, you will see the type of “similar artist” recommendations to which you are accustomed – we use last.fm and The Echo Nest to get these. But you will also see some other inter-artist connections catfish has discovered from the web of linked data. These include things like “artists that are also English Male Singers” or “artists that are also Converts To Islam” or “artists that are also People From St.Louis, Missouri”. And, hopefully, you’ll get some media for each artist so you can have a listen.

It’s a really interesting way to explore the music space, allowing you to stumble upon new artists based on a wide range of parameters.

For example take a look at the many categories and connections catfish smooth exposes for James Brown.

Kurt is currently conducting a usability survey for catfish smooth, so take a minute to kick the tires and then help Kurt finish his PhD and take the survey.

The Echo Nest gets Personal

Posted by Paul in code, Music, playlist, recommendation, remix, The Echo Nest, web services on October 15, 2010

Here at the Echo Nest we just added a new feature to our APIs called Personal Catalogs. This feature lets you make all of the Echo Nest features work in your own world of music. With Personal Catalogs (PCs) you can define application or user specific catalogs (in terms of artists or songs) and then use these catalogs to drive the behavior of other Echo Nest APIs. PCs open the door to all sorts of custom apps built on the Echo Nest platform. Here are some examples:

Create better genius-style playlists – With PCs I can create a catalog that contains all of the songs in my iTunes collection. I can then use this catalog with the Echo Nest Playlist API to generate interesting playlists based upon my own personal collection. I can create a playlist of my favorite, most danceable songs for a party, or I can create a playlist of slow, low energy, jazz songs for late night reading music.

Create hyper-targeted recommendations – With PCs I can make a catalog of artists and then use the artist/similar APIs to generate recommendations within this catalog. For instance, I could create an artist catalog of all the bands that are playing this weekend in Boston and then create a Music Hack Day recommender that tells each visitor to Boston what bands they should see in Boston based upon their musical tastes.

Get info on lots of stuff – people often ask questions about their whole music collection. Like, ‘what are all the songs that I have that are at 113 BPM?‘, or ‘what are the softest songs?’ Previously, to answer these sorts of questions, you’d have to query our APIs one song at a time – a rather tedious and potentially lengthy operation (if you had, say, 10K tracks). With PCs, you can make a single catalog for all of your tracks and then make bulk queries against this catalog. Once you’ve created the catalog, it is very quick to read back all the tempos in your collection.

Represent your music taste – since a Personal Catalog can contain info such as playcounts, skips, and ratings for all of the artists and songs in your collection, it can serve as an excellent proxy to your music taste. Current and soon to be released APIs will use personal catalogs as a representation of your taste to give you personalized results. Playlisting, artist similarity, music recommendations all personalized based on your listening history.

These examples just scratch the surface. We hope to see lots of novel applications of Personal Catalogs. Check out the APIs, and start writing some code.
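To give a feel for the "whole collection" questions mentioned above, here is a small sketch that answers them against a catalog that has already been read back into memory. It does not call the Echo Nest API. The list-of-dicts layout, the field names (`title`, `tempo`, `loudness`) and the sample songs are assumptions made up for this example.

```python
def songs_at_tempo(catalog, bpm, tolerance=0.5):
    """Titles of all songs whose tempo is within `tolerance` of `bpm`."""
    return [song["title"] for song in catalog
            if abs(song["tempo"] - bpm) <= tolerance]


def softest_songs(catalog, n=3):
    """Titles of the `n` quietest songs (lowest loudness in dB first)."""
    ranked = sorted(catalog, key=lambda song: song["loudness"])
    return [song["title"] for song in ranked[:n]]


# Hypothetical catalog contents, invented for illustration.
my_catalog = [
    {"title": "Song A", "tempo": 113.0, "loudness": -7.5},
    {"title": "Song B", "tempo": 98.2, "loudness": -21.0},
    {"title": "Song C", "tempo": 113.4, "loudness": -12.3},
    {"title": "Song D", "tempo": 140.0, "loudness": -4.1},
]

print(songs_at_tempo(my_catalog, 113))
print(softest_songs(my_catalog, n=2))
```

The point is that once the per-song attributes for a whole catalog are in hand, questions like these are a single pass over the data rather than one API call per song.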

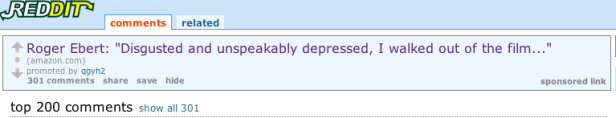

Using bad reviews to sell stuff

Posted by Paul in recommendation on October 5, 2010

Here’s a ‘sponsored link’ purchased by Amazon on the popular social news site Reddit. The text of the ad is an excerpt from Roger Ebert’s scathing review of the movie Caligula (the review opens with “Caligula is sickening, utterly worthless, shameful trash” and it goes downhill from there).

I found it a bit curious to see Amazon using such a horrendous review in an ad, but those folks at Amazon are clever. The ad has over 300 comments by Reddit readers meaning that many thousands have probably clicked on the ad to see which movie Ebert was talking about. Hundreds of comments, thousands of visitors all from a 10 word excerpt of a scathing review of the movie. Not too shabby.

Update – the commenters point out that the sponsored link is not purchased by Amazon but by Reddit user qgyh2 who makes money via Amazon’s affiliate program. As Dan says – “he picks headlines that are likely to encourage people to click on the link and then he makes money from whatever they buy while they are at Amazon.” So, qgyh2 is the clever one (but Amazon gets cleverage points for encouraging this kind of stuff via their affiliate program).

Update 2 – flx points out that qgyh2 actually works for Reddit. Here’s more info – ‘He’s helping us experiment with new ways of supporting the site. We weren’t really ready to announce this one yet, or even decide if it’s going to be a permanent fixture. When we do, there will be a blog post about it.’

The next music tastemakers – the computer programmers

Posted by Paul in recommendation on July 29, 2010

There’s an interesting piece in the New Yorker about the future of listening. The article focuses on Pandora and MOG and the challenges of making the online listening experience. Author Sasha Frere-Jones concludes with this:

While using these services, I kept thinking about an early-eighties drum machine called the Roland TR-808, which has seduced generations of musicians with its heavy kick-drum sound and the oddly human swing of its clock. Whoever programmed this box had more impact on dance music than the hundreds of better-known musicians who used the device. Similarly, the anonymous programmers who write the algorithms that control the series of songs in these streaming services may end up having a huge effect on the way that people think of musical narrative—what follows what, and who sounds best with whom. Sometimes we will be the d.j.s, and sometimes the machines will be, and we may be surprised by which we prefer.

Read the article:

The Playlist Survey

Playlists have long been a big part of the music experience. But making a good playlist is not always easy. We can spend lots of time crafting the perfect mix, but more often than not, in this iPod age, we are likely to toss on a pre-made playlist (such as an album), have the computer generate a playlist (with something like iTunes Genius) or (more likely) we’ll just hit the shuffle button and listen to songs at random. I pine for the old days when Radio DJs would play well-crafted sets – mixes of old favorites and the newest, undiscovered tracks – connected in interesting ways. These professionally created playlists magnified the listening experience. The whole was indeed greater than the sum of its parts.

The tradition of the old-style Radio DJ continues on Internet Radio sites like Radio Paradise. RP founder/DJ Bill Goldsmith says of Radio Paradise: “Our specialty is taking a diverse assortment of songs and making them flow together in a way that makes sense harmonically, rhythmically, and lyrically — an art that, to us, is the very essence of radio.” Anyone who has listened to Radio Paradise will come to appreciate the immense value that a professionally curated playlist brings to the listening experience.

I wish I could put Bill Goldsmith in my iPod and have him craft personalized playlists for me – playlists that make sense harmonically, rhythmically and lyrically, and that are customized to my music taste, mood and context. That, of course, will never happen. Instead I’m going to rely on computer algorithms to generate my playlists. But how good are computer generated playlists? Can a computer really generate playlists as good as Bill Goldsmith, with his decades of knowledge about good music and his understanding of how to fit songs together?

To help answer this question, I’ve created a Playlist Survey that will collect information about the quality of playlists generated by a human expert, a computer algorithm and a random number generator. The survey presents a set of playlists, and the subject rates each playlist in terms of its quality and also tries to guess whether the playlist was created by a human expert, a computer algorithm or was generated at random.

Bill Goldsmith and Radio Paradise have graciously contributed 18 months of historical playlist data from Radio Paradise to serve as the expert playlist data. That’s nearly 50,000 playlists and a quarter million song plays spread over nearly 7,000 different tracks.

The Playlist Survey also serves as a Radio DJ Turing test. Can a computer algorithm (or a random number generator for that matter) create playlists that people will think are created by a living and breathing music expert? What will it mean, for instance, if we learn that people really can’t tell the difference between expert playlists and shuffle play?
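Here is a rough sketch of how responses to a survey like this could be tallied. It is not the actual survey code: the `(true_source, guessed_source, rating)` record layout, the source labels and the sample responses are all assumptions invented for illustration.

```python
from collections import defaultdict


def summarize(responses):
    """Per true source, report how often subjects guessed the source
    correctly and the mean quality rating they gave."""
    totals = defaultdict(lambda: {"n": 0, "correct": 0, "rating_sum": 0})
    for true_source, guessed_source, rating in responses:
        entry = totals[true_source]
        entry["n"] += 1
        entry["correct"] += int(guessed_source == true_source)
        entry["rating_sum"] += rating
    return {
        source: {
            "accuracy": entry["correct"] / entry["n"],
            "mean_rating": entry["rating_sum"] / entry["n"],
        }
        for source, entry in totals.items()
    }


# Made-up responses: (who really made the playlist, subject's guess, 1-5 rating)
sample_responses = [
    ("human", "human", 5),
    ("human", "algorithm", 4),
    ("random", "human", 3),
    ("random", "random", 1),
]

for source, stats in summarize(sample_responses).items():
    print(source, stats)
```

With three possible sources, a subject guessing blindly would be right about a third of the time, so an accuracy close to that for expert playlists would suggest people can't tell the expert from the shuffle button.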

Ben Fields and I will offer the results of this Playlist Survey when we present Finding a path through the Jukebox – The Playlist Tutorial – at ISMIR 2010 in Utrecht in August. I’ll also follow up with detailed posts about the results here in this blog after the conference. I invite all of my readers to spend 10 to 15 minutes to take The Playlist Survey. Your efforts will help researchers better understand what makes a good playlist.

Take the Playlist Survey

Is Music Recommendation Broken? How can we fix it?

Posted by Paul in events, Music, recommendation, research on June 1, 2010

Save the date: 26th September 2010 for The Workshop on Music Recommendation and Discovery being held in conjunction with ACM RecSys in Barcelona, Spain. At this workshop, members of the Recommender System, Music Information Retrieval, User Modeling, Music Cognition, and Music Psychology communities can meet, exchange ideas and collaborate.

Topics of interest

Topics of interest for Womrad 2010 include:

- Music recommendation algorithms

- Theoretical aspects of music recommender systems

- User modeling in music recommender systems

- Similarity Measures, and how to combine them

- Novel paradigms of music recommender systems

- Social tagging in music recommendation and discovery

- Social networks in music recommender systems

- Novelty, familiarity and serendipity in music recommendation and discovery

- Exploration and discovery in large music collections

- Evaluation of music recommender systems

- Evaluation of different sources of data/APIs for music recommendation and exploration

- Context-aware, mobile, and geolocation in music recommendation and discovery

- Case studies of music recommender system implementations

- User studies

- Innovative music recommendation applications

- Interfaces for music recommendation and discovery systems

- Scalability issues and solutions

- Semantic Web, Linking Open Data and Open Web Services for music recommendation and discovery

More info: Womrad 2010 Call for papers

Spying on how we read

Posted by Paul in data, Music, recommendation on March 26, 2010

I’ve been reading all my books lately using Kindle for iPhone. It is a great way to read – and having a library of books in my pocket at all times means I’m never without a book. One feature of the Kindle software is called Whispersync. It keeps track of where you are in a book so that if you switch devices (from an iPhone to a Kindle or an iPad or desktop), you can pick up exactly where you left off. Kindle also stores any bookmarks, notes, highlights, or similar markings you make in the cloud so they can be shared across devices. Whispersync is a useful feature for readers, but it is also a goldmine of data for Amazon. With Whispersync data from millions of Kindle readers Amazon can learn not just what we are reading but how we are reading. In brick-and-mortar bookstore days, the only thing a bookseller, author or publisher could really know about a book was how many copies it sold. But now with Whispersync Amazon can learn all sorts of things about how we are reading. With the insights that they gain from this data, they will, no doubt, find better ways to help people find the books they like to read.

I hope Amazon aggregates their Whispersync data and gives us some Last.fm-style charts about how people are reading. Some charts I’d like to see:

- Most Abandoned – the books and/or authors that are most frequently left unfinished. What book is the most abandoned book of all time? (My money is on ‘A Brief History of Time’) A related metric – for any particular book where is it most frequently abandoned? (I’ve heard of dozens of people who never got past ‘The Council of Elrond’ chapter in LOTR).

- Pageturner – the top books ordered by average number of words read per reading session. Does the average Harry Potter fan read more of the book in one sitting than the average Twilight fan?

- Burning the midnight oil – books that keep people up late at night.

- Read Speed – which books/authors/genres have the lowest words-per-minute average reading rate? Do readers of Glenn Beck read faster or slower than readers of Jon Stewart?

- Most Re-read – which books are read over and over again? A related metric – which are the most re-read passages? Is it when Frodo claims the ring, or when Bella almost gets hit by a car?

- Mystery cheats – which books have their last chapter read before other chapters.

- Valuable reference – which books are not read in order, but are visited very frequently? (I’ve not read my Python in a nutshell book from cover to cover, but I visit it almost every day).

- Biggest Slogs – the books that take the longest to read.

- Back to the start – Books that are most frequently re-read immediately after they are finished.

- Page shufflers – books that most often send their readers to the glossary, dictionary, map or the elaborate family tree. (xkcd offers some insights)

- Trophy Books – books that are most frequently purchased, but never actually read.

- Dishonest rater – books that are most frequently rated highly by readers who never actually finished reading the book

- Most efficient language – the average time to read books by language. Do native Italians read ‘Il nome della rosa‘ faster than native English speakers can read ‘The name of the rose‘?

- Most attempts – which books are restarted most frequently? (It took me 4 attempts to get through Cryptonomicon, but when I did I really enjoyed it).

- A turn for the worse – which books are most frequently abandoned in the last third of the book? These are the books that go bad.

- Never at night – books that are read less in the dark than others.

- Entertainment value – the books with the lowest overall cost per hour of reading (including all re-reads)
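Most of these charts boil down to simple aggregations over reading-position data. As a sketch, here is how the "Most Abandoned" chart could be computed, assuming (and this is purely my guess at the shape of the data, not anything Amazon has published) one record per reader and book holding the furthest point reached as a fraction of the book. The 0.95 "finished" threshold and the sample records are also made up, and the sketch ignores the fact that some readers are simply still mid-book.

```python
from collections import defaultdict


def most_abandoned(progress, finished_at=0.95):
    """Rank books by the share of readers who started but did not finish.

    `progress` is an iterable of (reader, book, furthest_fraction_read).
    Returns a list of (book, abandonment_rate), highest rate first.
    """
    started = defaultdict(int)
    abandoned = defaultdict(int)
    for _reader, book, furthest in progress:
        started[book] += 1
        if furthest < finished_at:
            abandoned[book] += 1
    rates = {book: abandoned[book] / started[book] for book in started}
    return sorted(rates.items(), key=lambda item: item[1], reverse=True)


# Invented sample data for illustration only.
sample_progress = [
    ("reader1", "Book A", 0.10),
    ("reader2", "Book A", 0.30),
    ("reader3", "Book A", 1.00),
    ("reader1", "Book B", 1.00),
    ("reader2", "Book B", 1.00),
]

for book, rate in most_abandoned(sample_progress):
    print(f"{book}: {rate:.0%} abandoned")
```

Swap the abandonment test for words per session, time of day or re-read counts and the same few lines produce the Pageturner, midnight oil and Most Re-read charts.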

Whispersync is to books as the audioscrobbler is to music. It is an implicit way to track what you are really paying attention to. The data from Whispersync will give us new insights into how people really read books. A chart that shows that the most abandoned author is James Patterson may steer readers away from Patterson and toward books by better authors. I’d rather not turn to the New York Times Best Seller list to decide what to read. I want to see the Amazon Most Frequently Finished book list instead.