What’s Hot? Estimating Country Specific Artist Popularity

I am at ISMIR this week, blogging sessions and papers that I find interesting.

What’s Hot? Estimating Country Specific Artist Popularity

Markus Schedl, Tim Pohle, Noam Koenigstein, Peter Knees

Traditional charts are not perfect: they are not available in all countries, have biases (sales vs. plays), and don’t incorporate non-sales channels like P2P. There is also inhomogeneity between countries.

Approach: Look at different channels: Google, Twitter, shared folders in Gnutella, Last.fm

- Google: queried “led zeppelin” + “france”, but applied a popularity filter to reduce the effect of overall popularity

- Twitter – geolocated major cities of the world using Freebase. Used the Twitter APIs with the #nowplaying hashtag along with the geolocation API to search for plays in a particular country

- P2P shared folders – Gnutella network – gathered a million Gnutella IP addresses, gathered the metadata for the shared folders at each address, used IP2Location to resolve each address to a geographic location

- Last.fm – retrieve the top 400 listeners in each country. For these top 400 listeners, retrieve the top-played artists.

Evaluation: Retrieve Last.fm’s most popular artists. Use top-n rank overlap for scoring. Compared the 4 different sources. Each approach was prone to certain distortions and biases. In the future they hope to combine these sources into a hybrid system that takes the best attributes of all approaches.
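To make the scoring idea concrete, here is a minimal sketch of one common way to compute a top-n overlap between two ranked artist lists. The talk did not spell out the exact formula, so the definition below (shared artists in the two top-n lists, divided by n) is an assumption, as are the function name and the placeholder rankings.

```python
def top_n_overlap(candidate, reference, n):
    """Share of the reference top-n that also appears in the candidate top-n.

    Assumed definition: |top_n(candidate) & top_n(reference)| / n.
    """
    if n <= 0:
        raise ValueError("n must be positive")
    shared = set(candidate[:n]) & set(reference[:n])
    return len(shared) / n


# Hypothetical rankings for one country (placeholder names, not real data).
reference_chart = ["artist_a", "artist_b", "artist_c", "artist_d"]
twitter_chart = ["artist_b", "artist_a", "artist_e", "artist_c"]

for n in (2, 4):
    print(n, top_n_overlap(twitter_chart, reference_chart, n))
```

Note that this version ignores the order of artists inside the top n; a rank-sensitive measure would need a different formula.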

ISMIR Day zero in Utrecht

Posted by Paul in events, music information retrieval on August 10, 2010

We’ve just finished Day 0 of ISMIR (the yearly conference of the International Society of Music Information Retrieval) being held in Utrecht. It is a lovely city, and I’ve been enjoying walks along the many canals in the comfortably cool weather.

The zeroth day of ISMIR is the tutorial day. Ben Fields and I presented our playlisting tutorial. It was well attended, with lots of good questions at the end. The three-hour presentation seemed to fly by. Here’s Ben making last minute edits just before the presentation.

Finding a path through the Jukebox: The Playlist Tutorial

Posted by Paul in events, music information retrieval, playlist, research, The Echo Nest on August 6, 2010

Ben Fields and I have just put the finishing touches on our playlisting tutorial for ISMIR. Everything you could want to know about playlists. As one of the founders of a well known music intelligence company once said: Take the fun out of music and read Paul’s slides …

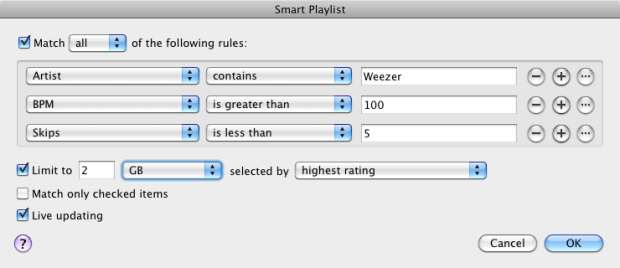

Do you use Smart Playlists?

iTunes Smart Playlists allow for very flexible creation of dynamic playlists based on a whole boat-load of parameters. But I wonder how often people use this feature. Is it too complicated? Let’s find out. I’ve created a poll that will take you about 20 seconds to complete. Go to iTunes, count up how many smart playlists you have. You can tell which playlists are smart playlists because they have the little gear icon:

Don’t count the pre-fab smart playlists that come with iTunes (like 90’s music, Recently Added, My Top Rated, etc.). Once you’ve counted up your playlists, take the poll:

The next music tastemakers – the computer programmers

Posted by Paul in recommendation on July 29, 2010

There’s an interesting piece in the New Yorker about the future of listening. The article focuses on Pandora and MOG and the challenges of making the online listening experience. Author Sasha Frere-Jones concludes with this:

While using these services, I kept thinking about an early-eighties drum machine called the Roland TR-808, which has seduced generations of musicians with its heavy kick-drum sound and the oddly human swing of its clock. Whoever programmed this box had more impact on dance music than the hundreds of better-known musicians who used the device. Similarly, the anonymous programmers who write the algorithms that control the series of songs in these streaming services may end up having a huge effect on the way that people think of musical narrative—what follows what, and who sounds best with whom. Sometimes we will be the d.j.s, and sometimes the machines will be, and we may be surprised by which we prefer.

Read the article:

Help researchers understand earworms

Posted by Paul in Music, music information retrieval, research on July 29, 2010

Researchers at Goldsmiths, University of London, in a collaboration with the BBC 6 and the British Academy, are conducting research to find out about the music in people’s heads, sometimes called ’musical imagery’. They want to know what songs are the most common, whether people like it or don’t, what triggers it, and if some people have music in their head all the time, etc.

To help researchers understand this phenomenon, take part in a questionnaire (and you could win £150 too). I took the survey; it took about 10 minutes. They do ask some rather personal questions that seem related to one’s tendency towards compulsive behavior. (Yes, I do sometimes count the stairs that I’m walking up.)

It looks to be an interesting research project. More details about it are here: The Earwormery.com

Visual Music

Posted by Paul in code, data, events, fun, Music, The Echo Nest, visualization on July 28, 2010

The week-long Visual Music Collaborative Workshop held at the Eyebeam just finished up. This was an invite-only event where participants did a deep dive into sound analysis techniques, OpenGL programming, and interfacing with mobile control devices.

Here’s one project built during the week that uses The Echo Nest analysis output:

(Via Aaron Meyers)

Novelty playlist ordering

We’ve been building a new playlisting engine here at the Echo Nest. The engine is really neat – it lets you apply a whole range of very flexible constraints and orderings to make all sorts of playlists that would be a challenge for even the most savvy DJ. Playlists like 15 songs with a tempo between 120 and 130 BPM ordered by how danceable they are by very popular female artists that sound similar to Lady Gaga, that live near London, but never ever include tracks by The Spice Girls.

I was playing with the engine this weekend, writing some rules to make novelty playlists to test the limits of the engine. I started with rules typical for a similar-artist playlist: 15 songs long, filled with songs by artists similar to a seed artist (in this case Weezer), the first and last song must be by the seed artist, and no two consecutive songs can be by the same artist. Simple enough, but then I added two more rules to turn this into a novelty playlist that would be very hard for a human to make. See if you can guess what the two rules are. I think one of the rules is pretty obvious, but the second is a bit more subtle. Post your guesses in the comments.

0 Tripping Down the Freeway - Weezer

1 Yer All I've Got Tonight - The Smashing Pumpkins

2 The Most Beautiful Things - Jimmy Eat World

3 Someday You Will Be Loved - Death Cab For Cutie

4 Don't Make Me Prove It - Veruca Salt

5 The Sacred And Profane - Smashing Pumpkins, The

6 Everything Is Alright - Motion City Soundtrack

7 The Ego's Last Stand - The Flaming Lips

8 Don't Believe A Word - Third Eye Blind

9 Don's Gone Columbia - Teenage Fanclub

10 Alone + Easy Target - Foo Fighters

11 The Houses Of Roofs - Biffy Clyro

12 Santa Has a Mullet - Nerf Herder

13 Turtleneck Coverup - Ozma

14 Perfect Situation - Weezer
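The baseline rules stated above (15 songs, first and last by the seed artist, no two consecutive songs by the same artist) are simple enough to check with a few lines of code. This is only an illustrative sketch, not the Echo Nest engine or its API; the function name `check_rules` is hypothetical, and it deliberately says nothing about the two novelty rules.

```python
def check_rules(artists, seed_artist, length=15):
    """Return a list of violated baseline rules; an empty list means all pass."""
    problems = []
    if len(artists) != length:
        problems.append(f"expected {length} songs, got {len(artists)}")
    if artists and artists[0] != seed_artist:
        problems.append("first song is not by the seed artist")
    if artists and artists[-1] != seed_artist:
        problems.append("last song is not by the seed artist")
    # Compare each artist with the one that follows it.
    for current, following in zip(artists, artists[1:]):
        if current == following:
            problems.append(f"consecutive songs by {current}")
    return problems


# Artist column of the Weezer playlist above, in order.
weezer_playlist = [
    "Weezer", "The Smashing Pumpkins", "Jimmy Eat World",
    "Death Cab For Cutie", "Veruca Salt", "Smashing Pumpkins, The",
    "Motion City Soundtrack", "The Flaming Lips", "Third Eye Blind",
    "Teenage Fanclub", "Foo Fighters", "Biffy Clyro", "Nerf Herder",
    "Ozma", "Weezer",
]

print(check_rules(weezer_playlist, "Weezer"))
```

The comparison is a plain string match, so "The Smashing Pumpkins" and "Smashing Pumpkins, The" count as different artists here; a real system would need to normalize names first.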

Here’s another playlist – with a different set of two novelty rules, with a seed artist of Led Zeppelin. Again, if you can guess the rules, post a comment.

0 El Niño - Jethro Tull

1 Cheater - Uriah Heep

2 Hot Dog - Led Zeppelin

3 One Thing - Lynyrd Skynyrd

4 Nightmare - Black Sabbath

5 Ezy Ryder - The Jimi Hendrix Experience

6 Soulshine - Govt Mule

7 The Gypsy - Deep Purple

8 I'll Wait - Van Halen

9 Slow Down - Ozzy Osbourne

10 Civil War - Guns N' Roses

11 One Rainy Wish - Jimi Hendrix

12 Overture (Live) - Grand Funk Railroad

13 Larger Than Life - Gov'T Mule

The Music App Summit

Posted by Paul in events, Music, startup, The Echo Nest on July 23, 2010

Billboard has long been known for tracking the hottest artists, albums and songs. Now they are moving into new territory – Music Apps. In October Billboard is hosting a Music App Summit – a day focused on the world of mobile music apps. The summit will focus on new companies and technologies that are now building the next generation of music applications for mobile devices. The summit has some awesome speakers and panelists lined up from a cross section of domains (technology, business and music) like Ge Wang, Ted Cohen, Dave Kusek, Brian Zisk and The Echo Nest’s CEO Jim Lucchese.

At the core of the summit are Billboard’s first ever Music App Awards. Billboard is giving awards to the best apps in a number of categories:

- Best Artist-based App: Apps created specifically for an individual artist

- Best Music Streaming App: Apps that allow users to stream, download or otherwise enjoy music, such as Internet radio or on-demand.

- Best Music Engagement App: Apps that let users engage in music in various ways, such as music games, music ID services, etc.

- Best Music Creation App: App that lets users make their own music.

- Best Branded App: App that best incorporates a sponsor with music capabilities to promote both the sponsor’s message and highlight the music

- Best Touring App: App created in conjunction with a specific tour or festival

Judges for the apps include Eliot Van Buskirk of Wired, Ian Rogers of Top Spin and Grammy Award winner MC Hammer.

Winning developers receive some modest prizes, but the real award is getting to demo your app to the attendees of the summit. The movers and shakers of the music industry will be there looking for that killer music app, and the winner in each of the app categories will get to show their stuff. If you have a mobile music app, consider submitting it to the Music App Awards. The submission deadline is July 30.

Keith Moon meets Animal

Another vafromb.py masterpiece from joshmillard.