Learning Tags that Vary Within a Song

Michael I. Mandel, Douglas Eck and Yoshua Bengio

Abstract: This paper examines the relationship between human-generated tags describing different parts of the same song. These tags were collected using Amazon’s Mechanical Turk service. We find that the agreement between different people’s tags decreases as the distance between the parts of a song that they heard increases. To model these tags and these relationships, we describe a conditional restricted Boltzmann machine. Using this model to fill in tags that should probably be present given a context of other tags, we train automatic tag classifiers (autotaggers) that outperform those trained on the original data.

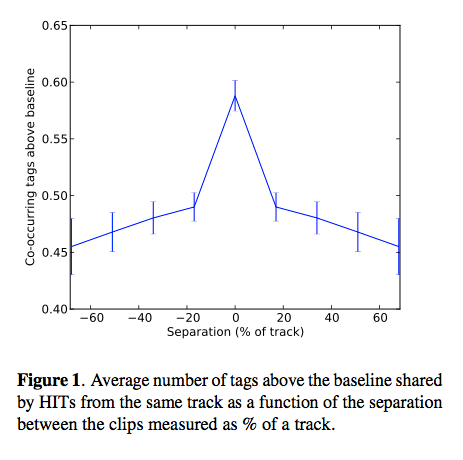

Michael used Amazon's Mechanical Turk to collect tags for music clips, paying workers about a penny per tag. About 11% of the tags turned out to be spam. He then examined tag co-occurrence data, which showed interesting patterns, especially in relation to the distance between clips within a single track: agreement between tags decreased as clips got farther apart.
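To make the distance analysis concrete, here is a minimal Python sketch that measures tag agreement between pairs of clips from the same track and averages it by clip distance. The Jaccard similarity measure, the `agreement_by_distance` helper, and the toy tags are all assumptions for illustration, not the measure or data used in the paper.

```python
from collections import defaultdict
from itertools import combinations


def jaccard(a, b):
    """Jaccard similarity of two tag sets (an assumed agreement measure)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def agreement_by_distance(track_clips):
    """Mean tag agreement between clip pairs of the same track, keyed by distance.

    track_clips maps a track id to a list of (clip_index, set_of_tags);
    distance is the absolute difference between clip indices (hypothetical layout).
    """
    totals = defaultdict(float)
    counts = defaultdict(int)
    for clips in track_clips.values():
        # Only clips from the same track are compared with each other.
        for (i, tags_i), (j, tags_j) in combinations(clips, 2):
            d = abs(i - j)
            totals[d] += jaccard(tags_i, tags_j)
            counts[d] += 1
    return {d: totals[d] / counts[d] for d in sorted(totals)}


# Toy, invented tags for four consecutive clips of one track.
toy = {
    "track1": [
        (0, {"rock", "guitar", "male"}),
        (1, {"rock", "guitar"}),
        (2, {"rock", "drums"}),
        (3, {"piano", "quiet"}),
    ]
}
result = agreement_by_distance(toy)
print(result)
```

In this toy track, mean agreement falls from about 0.33 at distance 1 to 0.125 at distance 2 and 0 at distance 3. That mirrors only the direction of the finding; the numbers themselves carry no meaning.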

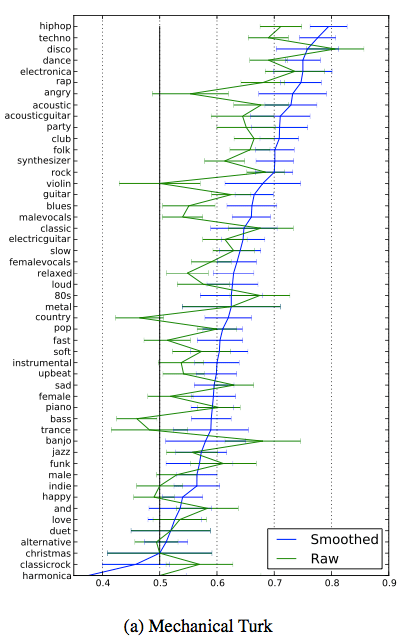

The results show that smoothing the data with a Boltzmann machine tends to give better accuracy when tag data is sparse.
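One way to picture the smoothing step is a conditional RBM in which a context vector of other tags shifts the visible and hidden biases, trained with one-step contrastive divergence (CD-1). The sketch below is a generic CRBM written with NumPy under that assumption. The `ConditionalRBM` class, the choice of context (tags from another clip of the same track), the CD-1 training rule, the hyperparameters, and the toy data are all hypothetical and not taken from the paper.

```python
import numpy as np


def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))


class ConditionalRBM:
    """Generic binary CRBM sketch: context x adds dynamic biases x @ A and x @ B."""

    def __init__(self, n_tags, n_context, n_hidden, seed=0):
        self.rng = np.random.default_rng(seed)
        self.W = 0.01 * self.rng.standard_normal((n_tags, n_hidden))  # tag-hidden weights
        self.A = np.zeros((n_context, n_tags))    # context -> tag biases
        self.B = np.zeros((n_context, n_hidden))  # context -> hidden biases
        self.b = np.zeros(n_tags)
        self.c = np.zeros(n_hidden)

    def _hidden_probs(self, v, x):
        return sigmoid(v @ self.W + self.c + x @ self.B)

    def _visible_probs(self, h, x):
        return sigmoid(h @ self.W.T + self.b + x @ self.A)

    def cd1_step(self, v0, x, lr=0.1):
        """One CD-1 update on a batch of tag vectors v0 with contexts x."""
        n = v0.shape[0]
        h0 = self._hidden_probs(v0, x)
        h0_sample = (self.rng.random(h0.shape) < h0).astype(float)
        v1 = self._visible_probs(h0_sample, x)  # mean-field reconstruction
        h1 = self._hidden_probs(v1, x)
        self.W += lr * (v0.T @ h0 - v1.T @ h1) / n
        self.b += lr * (v0 - v1).mean(axis=0)
        self.c += lr * (h0 - h1).mean(axis=0)
        self.A += lr * (x.T @ (v0 - v1)) / n
        self.B += lr * (x.T @ (h0 - h1)) / n
        return float(np.mean((v0 - v1) ** 2))

    def smooth(self, v, x):
        """Per-tag probabilities after one up-down pass given observed tags v."""
        return self._visible_probs(self._hidden_probs(v, x), x)


# Toy data (invented): tags 0=rock, 1=guitar, 2=piano, 3=quiet.
rock = np.array([1, 1, 0, 0], dtype=float)
piano = np.array([0, 0, 1, 1], dtype=float)
V = np.vstack([rock] * 50 + [piano] * 50)
X = V.copy()  # hypothetical context: tags from another clip of the same track

model = ConditionalRBM(n_tags=4, n_context=4, n_hidden=3)
for _ in range(500):
    model.cd1_step(V, X)

# A sparsely tagged clip: only "rock" observed, while the context says rock/guitar.
probs = model.smooth(np.array([[1, 0, 0, 0]], dtype=float),
                     np.array([[1, 1, 0, 0]], dtype=float))
print(probs.round(3))
```

The returned values are per-tag probabilities. In this toy example, thresholding them (0.5 is an arbitrary choice) would fill in "guitar", a tag the context suggests but the sparse annotation omitted.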
