Sparse Multi-label Linear Embedding Within Nonnegative Tensor Factorization Applied to Music Tagging

Yannis Panagakis, Constantine Kotropoulos and Gonzalo R. Arce

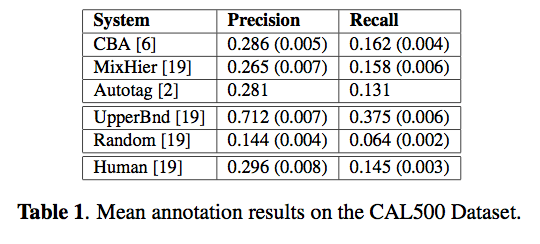

Abstract: A novel framework for music tagging is proposed. First, each music recording is represented by bio-inspired auditory temporal modulations. Then, a multilinear subspace learning algorithm based on sparse label coding is developed to effectively harness the multi-label information for dimensionality reduction. The proposed algorithm is referred to as Sparse Multi-label Linear Embedding Non-negative Tensor Factorization, whose convergence to a stationary point is guaranteed. Finally, a recently proposed method is employed to propagate the multiple labels of training auditory temporal modulations to auditory temporal modulations extracted from a test music recording by means of the sparse l1 reconstruction coefficients. The overall framework, that is described here, outperforms both humans and state-of-the-art computer audition systems in the music tagging task, when applied to the CAL500 dataset.

This paper gets the ‘Title that rolls off the tongue best’ award. I don’t understand all of the math for this one, but some notes: the wavelet-based features they use seem to be good at discriminating at the genre level. The authors compare their system to Doug Turnbull’s MixHier and to the system that Thierry, Doug, Francois and I built at Sun Labs (Autotagger: A model for predicting social tags from acoustic features on Large Music Databases).

#1 by Matt Hoffman on August 18, 2010 - 1:18 pm

Going over this (dense, but actually pretty interesting) paper, I noticed a little problem with their evaluation setup. The version of per-word precision they’re using ignores words that they don’t use, rather than using the prior frequency of that word like Turnbull et al., you, and I did. In my experiments, doing likewise would have given a bump in per-word precision of about .05.

If you assume they get a similar bump from that, their per-word precision is probably something more like .321 than the .371 they report, which is still a meaningful improvement over previous results.
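To make the difference between the two conventions concrete, here is a minimal sketch in Python. It is not the paper's evaluation code; the function name `per_word_precision`, the `never_predicted` switch, and the toy data are all made up for illustration. It assumes binary song-by-word matrices and treats a word as "not used" when the system never assigns it to any song.

```python
import numpy as np

def per_word_precision(truth, pred, never_predicted="ignore"):
    """Mean per-word precision over a vocabulary.

    truth, pred: boolean arrays of shape (n_songs, n_words).
    never_predicted: how to score words the system never outputs
      "ignore" - leave them out of the average
      "prior"  - score them with the word's empirical frequency
    (Both option names are hypothetical, chosen for this sketch.)
    """
    truth = np.asarray(truth, dtype=bool)
    pred = np.asarray(pred, dtype=bool)

    n_pred = pred.sum(axis=0)               # songs tagged with each word
    n_correct = (truth & pred).sum(axis=0)  # of those, how many were right
    prior = truth.mean(axis=0)              # ground-truth frequency of each word
    used = n_pred > 0                       # words the system outputs at least once

    precision = np.full(truth.shape[1], np.nan)
    precision[used] = n_correct[used] / n_pred[used]

    if never_predicted == "ignore":
        return precision[used].mean()
    if never_predicted == "prior":
        precision[~used] = prior[~used]
        return precision.mean()
    raise ValueError("never_predicted must be 'ignore' or 'prior'")

# Toy data: 4 songs, 3 words. The system never outputs the third word.
truth = np.array([[1, 1, 0],
                  [1, 0, 0],
                  [0, 1, 0],
                  [1, 0, 1]], dtype=bool)
pred = np.array([[1, 1, 0],
                 [1, 0, 0],
                 [1, 0, 0],
                 [0, 1, 0]], dtype=bool)

p_ignore = per_word_precision(truth, pred, "ignore")
p_prior = per_word_precision(truth, pred, "prior")
print(round(p_ignore, 3), round(p_prior, 3))
```

In this toy case the unused word is rare, so scoring it by its prior pulls the average down, and the "ignore" variant reports the higher number. The size of the gap on real data depends on how many words go unused and how rare they are.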