Paul

I'm the Director of Developer Community at The Echo Nest, a research-focused music intelligence startup that provides music information services to developers and partners through a data mining and machine listening platform. I am especially interested in hybrid music recommenders and using visualizations to aid music discovery.

Looking for the Slow Build

This is the second in a series of posts exploring the Million Song Dataset.

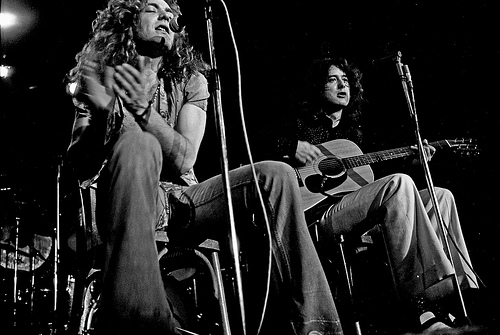

Every few months you’ll see a query like this on Reddit – someone is looking for songs that slowly build in intensity. It’s an interesting music query since it is primarily focused on what the music sounds like. Since we’ve analyzed the audio of millions and millions of tracks here at The Echo Nest, we should be able to automate this type of query. One would expect that Slow Build songs will have a steady increase in volume over the course of a song, so let’s look at the loudness data for a few Slow Build songs to confirm this intuition. First, here’s the canonical slow builder, Stairway to Heaven:

The green line is the raw loudness data, the blue line is a smoothed version of the data. Clearly we see a rise in the volume over the course of the song. Let’s look at another classic Slow Build – The Hall Of the Mountain King – again our intuition is confirmed:

Looking at a non-Slow Build song like Katy Perry’s California Gurls we see that the loudness curve is quite flat by comparison:

Of course there are other aspects beyond loudness that a musician may use to build a song to a climax – tempo, timbre and harmony are all useful, but to keep things simple I’m going to focus only on loudness.

Looking at these plots it is easy to see which songs have a Slow Build. To algorithmically identify songs that have a slow build, we can use a technique similar to the one I described in The Stairway Detector. It is a simple algorithm that compares the average loudness of the first half of the song to the average loudness of the second half of the song. Songs with the biggest increase in average loudness rank the highest. For example, take a look at a loudness plot for Stairway to Heaven. You can see that there is a distinct rise in loudness from the first half to the second half of the song (the horizontal dashed lines show the average loudness for the first and second half of the song). Calculating the ramp factor, we see that Stairway to Heaven scores an 11.36, meaning that there is an increase in average loudness of 11.36 decibels between the first and the second half of the song.
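As a rough sketch of the comparison described above (not the code behind the Stairway Detector itself), the ramp factor can be computed from a list of loudness segments. The `(start, duration, loudness)` tuple layout and the function name `ramp_factor` are assumptions made for illustration only.

```python
def ramp_factor(segments, track_duration):
    """Average loudness of the second half minus that of the first half.

    segments: list of (start, duration, loudness_db) tuples (assumed layout).
    Each segment's loudness is weighted by the time it spends in each half.
    """
    half = track_duration / 2.0
    first = second = 0.0
    for start, duration, loudness in segments:
        end = start + duration
        # Portion of this segment that falls before the midpoint.
        in_first = max(0.0, min(end, half) - start)
        in_second = duration - in_first
        first += loudness * in_first
        second += loudness * in_second
    return second / half - first / half


# A toy four-second "song": quiet for two seconds, then 10 dB louder.
toy = [(0.0, 1.0, -30.0), (1.0, 1.0, -30.0), (2.0, 1.0, -20.0), (3.0, 1.0, -20.0)]
print(ramp_factor(toy, 4.0))
```

Weighting by time rather than counting segments keeps a burst of many short segments from dominating the average.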

This algorithm has some flaws – for instance it will give very high scores to ‘hidden track’ songs. Artists will sometimes ‘hide’ a track at the end of a CD by padding the beginning of the track with a few minutes of silence. For example, this track by ‘Fudge Tunnel’ has about five minutes of silence before the band comes in.

Clearly this song isn’t a Slow Build; our simple algorithm is fooled. To fix this we need to introduce a measure of how straight the ramp is. One way to measure the straightness of a line is to calculate the Pearson correlation for the loudness data as a function of time. XY data with a correlation that approaches one (or negative one) lies, by definition, close to a straight line. This nifty Wikipedia visualization shows the correlation for various datasets:
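To make the straightness idea concrete, here is a minimal sketch that computes the Pearson correlation by hand and compares a steady build with a hidden-track shape. The loudness values are made up for illustration, and `pearson` is a hypothetical helper rather than the function used in the actual job.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


times = list(range(10))

# A steady build: loudness rises by the same amount every step.
steady = [-30.0 + 2.0 * t for t in times]

# A "hidden track": silence for most of the song, then the band comes in.
hidden = [-60.0] * 7 + [-10.0] * 3

print(pearson(times, steady))  # very close to 1.0
print(pearson(times, hidden))  # lower than the steady build
```

Note that a step shape still correlates positively with time, so the correlation works best alongside the ramp factor rather than as a filter on its own.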

We can combine the correlation with our ramp factors to generate an overall score that takes into account the ramp of the song as well as the straightness of the ramp. The overall score serves as our Slow Build detector. Songs with a high score are Slow Build songs. I suspect that there are better algorithms for this so if you are a math-oriented reader who is cringing at my naivete please set me and my algorithm straight.

Armed with our Slow Build Detector, I built a little web app that lets you explore for Slow Build songs. The app – Looking For The Slow Build – looks like this:

The application lets you type in the name of your favorite song, gives you a plot of the loudness over the course of the song, and calculates the overall Slow Build score along with the ramp and correlation. If you find a song with an exceptionally high Slow Build score it will be added to the gallery. I challenge you to get at least one song in the gallery.

You may find that some songs that you think should get a high Slow Build score don’t score as high as you would expect. For instance, take the song Hoppipolla by Sigur Ros. It seems to have a good build, but it scores low:

It has an early build, but after a minute it has reached its zenith. The ending is symmetrical with the beginning, with a minute of fade. This explains the low score.

Another song that builds but has a low score is Weezer’s The Angel and the One.

This song has a 4 minute power ballad build – but fails to qualify as a slow build because the last 2 minutes of the song are nearly silent.

Finding Slow Build songs in the Million Song Dataset

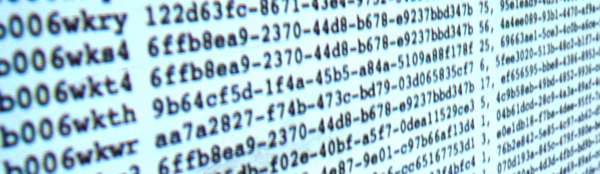

Now that we have an algorithm that finds Slow Build songs, let’s apply it to the Million Song Dataset. I can create a simple MapReduce job in Python that will go through all of the million tracks and calculate the Slow Build score for each of them to help us find the songs with the biggest Slow Build. I’m using the same framework that I described in the post “How to Process a Million Songs in 20 minutes“. I use the S3 hosted version of the Million Song Dataset and process it via Amazon’s Elastic MapReduce using mrjob – a Python MapReduce library. Here’s the mapper that does almost all of the work; the full code is on GitHub in cramp.py:

    def mapper(self, _, line):
        """ The mapper loads a track and yields its ramp factor """
        t = track.load_track(line)
        if t and t['duration'] > 60 and len(t['segments']) > 20:
            segments = t['segments']
            half_track = t['duration'] / 2
            first_half = 0
            second_half = 0
            first_count = 0
            second_count = 0
            xdata = []
            ydata = []
            for seg in segments:
                # loudness weighted by how long the segment lasts
                seg_loudness = seg['loudness_max'] * seg['duration']
                if seg['start'] + seg['duration'] <= half_track:
                    first_half += seg_loudness
                    first_count += 1
                elif seg['start'] < half_track and seg['start'] + seg['duration'] > half_track:
                    # this is the nasty segment that spans the song midpoint.
                    # apportion the loudness appropriately
                    first_seg_loudness = seg['loudness_max'] * (half_track - seg['start'])
                    first_half += first_seg_loudness
                    first_count += 1
                    second_seg_loudness = seg['loudness_max'] * (seg['duration'] - (half_track - seg['start']))
                    second_half += second_seg_loudness
                    second_count += 1
                else:
                    second_half += seg_loudness
                    second_count += 1
                xdata.append(seg['start'])
                ydata.append(seg['loudness_max'])
            correlation = pearsonr(xdata, ydata)
            ramp_factor = second_half / half_track - first_half / half_track
            if YIELD_ALL or (ramp_factor > 10 and correlation > .5):
                yield (t['artist_name'], t['title'], t['track_id'], correlation), ramp_factor

This code takes less than a half hour to run on 50 small EC2 instances and finds a bucketload of Slow Build songs. I’ve created a page of plots of the top 500 or so Slow Build songs found by this job. There are all sorts of hidden gems in there. Go check it out:

Looking for the Slow Build in the Million Song Dataset

The page has 500 plots all linked to Spotify so you can listen to any song that strikes your fancy. Here are some of my favorite discoveries:

Respighi’s The Pines of the Appian Way

I remember playing this in the orchestra back in high school. It really is sublime. Click the plot to listen in Spotify.

Maria Friedman’s Play The Song Again

So very theatrical

Mandy Patinkin’s Rock-A-Bye Your Baby With A Dixie Melody

Another song that seems to be right off of Broadway – it has an awesome slow build.

- The Million Song Dataset – deep data about a million songs

- The Stairway Index – my first look at this stuff about 2 years ago

- How to process a million songs in 20 minutes – a blog post about how to process the MSD with mrjob and Elastic Map Reduce

- Looking for the Slow Build – a simple web app that calculates the Slow Build score and loudness plot for just about any song

- cramp.py – the MapReduce code for calculating Slow Build scores for the MSD

- Looking for the Slow Build in the Million Song Dataset – 500 loudness plots of the top Slow Builders

- Top Slow Build songs in the Million Song Dataset – the top 6K songs with a Slow Build score of 10 and above

- A Spotify collaborative playlist with a bunch of Slow Build songs in it. Feel free to add more.

How to process a million songs in 20 minutes

The recently released Million Song Dataset (MSD), a collaborative project between The Echo Nest and Columbia’s LabROSA, is a fantastic resource for music researchers. It contains detailed acoustic and contextual data for a million songs. However, getting started with the dataset can be a bit daunting. First of all, the dataset is huge (around 300 GB), which is more than most people want to download. Second, it is such a big dataset that processing it in a traditional fashion, one track at a time, is going to take a long time. Even if you can process a track in 100 milliseconds, it is still going to take over a day to process all of the tracks in the dataset. Luckily there are some techniques such as Map/Reduce that make processing big data scalable over multiple CPUs. In this post I shall describe how we can use Amazon’s Elastic MapReduce to easily process the Million Song Dataset.

The Problem

For this first experiment in processing the million song data set I want to do something fairly simple and yet still interesting. One easy calculation is to determine each song’s density – where the density is defined as the average number of notes or atomic sounds (called segments) per second in a song. To calculate the density we just divide the number of segments in a song by the song’s duration. The set of segments for a track is already calculated in the MSD. An onset detector is used to identify atomic units of sound such as individual notes, chords, drum sounds, etc. Each segment represents a rich and complex and usually short polyphonic sound. In the above graph the audio signal (in blue) is divided into about 18 segments (marked by the red lines). The resulting segments vary in duration. We should expect that high density songs will have lots of activity (as an Emperor once said “too many notes”), while low density songs won’t have very much going on. For this experiment I’ll calculate the density of all 1 million songs and find the most dense and the least dense songs.

MapReduce

A traditional approach to processing a set of tracks would be to iterate through each track, process the track, and report the result. This approach, although simple, will not scale very well as the number of tracks or the complexity of the per track calculation increases. Luckily, a number of scalable programming models have emerged in the last decade to make tackling this type of problem more tractable. One such approach is MapReduce.

MapReduce is a programming model developed by researchers at Google for processing and generating large data sets. With MapReduce you specify a map function that processes a key/value pair to generate a set of intermediate key/value pairs, and a reduce function that merges all intermediate values associated with the same intermediate key. There are a number of implementations of MapReduce including the popular open sourced Hadoop and Amazon’s Elastic MapReduce.

There’s a nifty MapReduce Python library developed by the folks at Yelp called mrjob. With mrjob you can write a MapReduce task in Python and run it as a standalone app while you test and debug it. When your mrjob is ready, you can then launch it on a Hadoop cluster (if you have one), or run the job on 10s or even 100s of CPUs using Amazon’s Elastic MapReduce. Writing an mrjob MapReduce task couldn’t be easier. Here’s the classic word counter example written with mrjob:

    from mrjob.job import MRJob

    class MRWordCounter(MRJob):
        def mapper(self, key, line):
            for word in line.split():
                yield word, 1

        def reducer(self, word, occurrences):
            yield word, sum(occurrences)

    if __name__ == '__main__':
        MRWordCounter.run()

The input is presented to the mapper function, one line at a time. The mapper breaks the line into a set of words and emits a word count of 1 for each word that it finds. The reducer is called with a list of the emitted counts for each word; it sums up the counts and emits the total.
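To see what happens between the mapper and the reducer, here is a minimal single-process sketch of the same word count in plain Python. It is not how mrjob or Hadoop is implemented; `run_local` is a hypothetical helper that only illustrates the map, group-by-key, and reduce stages.

```python
from collections import defaultdict


def mapper(line):
    """Emit a (word, 1) pair for every word on the line."""
    for word in line.split():
        yield word, 1


def reducer(word, occurrences):
    """Sum the counts that were emitted for one word."""
    yield word, sum(occurrences)


def run_local(lines):
    """Run map, group-by-key, and reduce in a single process."""
    grouped = defaultdict(list)
    for line in lines:
        for key, value in mapper(line):
            grouped[key].append(value)  # the "shuffle" step
    results = {}
    for key in sorted(grouped):
        for out_key, out_value in reducer(key, grouped[key]):
            results[out_key] = out_value
    return results


counts = run_local(["the cat sat", "the cat ran"])
print(counts)
```

Because each mapper call sees only one line and each reducer call sees only one key, the work can be split across machines without changing either function.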

When you run your job in standalone mode, it runs in a single thread, but when you run it on Hadoop or Amazon (which you can do by adding a few command-line switches), the job is spread out over all of the available CPUs.

MapReduce job to calculate density

We can calculate the density of each track with this very simple mrjob – in fact, we don’t even need a reducer step:

    from mrjob.job import MRJob
    import track  # track.py, the MSD line parser described below

    class MRDensity(MRJob):
        """ A map-reduce job that calculates the density """

        def mapper(self, _, line):
            """ The mapper loads a track and yields its density """
            t = track.load_track(line)
            if t:
                if t['tempo'] > 0:
                    density = len(t['segments']) / t['duration']
                    yield (t['artist_name'], t['title'], t['song_id']), density

    if __name__ == '__main__':
        MRDensity.run()

(see the full code on github)

The mapper loads a line and parses it into a track dictionary (more on this in a bit), and if we have a good track that has a tempo then we calculate the density by dividing the number of segments by the song’s duration.

Parsing the Million Song Dataset

We want to be able to process the MSD with code running on Amazon’s Elastic MapReduce. Since the easiest way to get data to Elastic MapReduce is via Amazon’s Simple Storage Service (S3), we’ve loaded the entire MSD into a single S3 bucket at http://tbmmsd.s3.amazonaws.com/. (The ‘tbm’ stands for Thierry Bertin-Mahieux, the man behind the MSD). This bucket contains around 300 files each with data on about 3,000 tracks. Each file is formatted with one track per line following the format described in the MSD field list. You can see a small subset of this data for just 20 tracks in this file on github: tiny.dat. I’ve written track.py that will parse this track data and return a dictionary containing all the data.

You are welcome to use this S3 version of the MSD for your Elastic MapReduce experiments. But note that we are making the S3 bucket containing the MSD available as an experiment. If you run your MapReduce jobs in the “US Standard Region” of Amazon, it should cost us little or no money to make this S3 data available. If you want to download the MSD, please don’t download it from the S3 bucket, instead go to one of the other sources of MSD data such as Infochimps. We’ll keep the S3 MSD data live as long as people don’t abuse it.

Running the Density MapReduce job

You can run the density MapReduce job on a local file to make sure that it works:

% python density.py tiny.dat

This creates output like this:

    ["Planet P Project", "Pink World", "SOIAZJW12AB01853F1"] 3.3800521773317689
    ["Gleave", "Come With Me", "SOKBZHG12A81C21426"] 7.0173630509232234
    ["Chokebore", "Popular Modern Themes", "SOGVJUR12A8C13485C"] 2.7012807851495166
    ["Casual", "I Didn't Mean To", "SOMZWCG12A8C13C480"] 4.4351713380683542
    ["Minni the Moocher", "Rosi_ das M\u00e4dchen aus dem Chat", "SODFMEL12AC4689D8C"] 3.7249476012698159
    ["Rated R", "Keepin It Real (Skit)", "SOMJBYD12A6D4F8557"] 4.1905674943168156
    ["F.L.Y. (Fast Life Yungstaz)", "Bands", "SOYKDDB12AB017EA7A"] 4.2953929132587785

Each ‘yield’ from the mapper is represented by a single line in the output, showing the track ID info and the calculated density.
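Output in this shape is easy to read back for further analysis. The following is a small sketch, assuming each line holds a JSON-encoded key followed by whitespace and a numeric density, as in the sample above; `parse_line` and `rank_by_density` are hypothetical helpers and not part of mrjob.

```python
import json


def parse_line(line):
    """Split one output line into (key, density).

    Assumes the density is the last whitespace-separated token and that
    the key before it is JSON-encoded, as in the sample output.
    """
    key_text, value_text = line.rsplit(None, 1)
    return json.loads(key_text), float(value_text)


def rank_by_density(lines):
    """Return (density, artist, title) tuples, most dense first."""
    rows = []
    for line in lines:
        if not line.strip():
            continue  # skip blank lines
        (artist, title, _song_id), density = parse_line(line)
        rows.append((density, artist, title))
    return sorted(rows, reverse=True)


sample = [
    '["Planet P Project", "Pink World", "SOIAZJW12AB01853F1"] 3.3800521773317689',
    '["Gleave", "Come With Me", "SOKBZHG12A81C21426"] 7.0173630509232234',
    '["Chokebore", "Popular Modern Themes", "SOGVJUR12A8C13485C"] 2.7012807851495166',
]
ranked = rank_by_density(sample)
print(ranked[0][1], ranked[-1][1])
```

The same function can be pointed at the lines of a full output file to pull out the most and least dense tracks.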

Running on Amazon’s Elastic MapReduce

When you are ready to run the job on a million songs, you can run it on Elastic MapReduce. First you will need to set up your AWS account. To get set up for Elastic MapReduce, follow these steps:

- create an Amazon Web Services account: <http://aws.amazon.com/>

- sign up for Elastic MapReduce: <http://aws.amazon.com/elasticmapreduce/>

- Get your access and secret keys (go to <http://aws.amazon.com/account/> and click on “Security Credentials”)

- Set the environment variables $AWS_ACCESS_KEY_ID and $AWS_SECRET_ACCESS_KEY accordingly for mrjob.

Once you’ve set things up, you can run your job on Amazon using the entire MSD as input by adding a few command switches like so:

% python density.py --num-ec2-instances 100 --python-archive t.tar.gz -r emr 's3://tbmmsd/*.tsv.*' > out.dat

The ‘-r emr’ says to run the job on Elastic MapReduce, and the ‘--num-ec2-instances 100’ says to run the job on 100 small EC2 instances. A small instance currently costs about ten cents an hour, billed in one-hour increments, so this job will cost about $10 to run if it finishes in less than an hour; in fact, it takes about 20 minutes. If you run it on only 10 instances it will cost 1 or 2 dollars. Note that the t.tar.gz file simply contains any supporting Python code needed to run the job. In this case it contains the file track.py. See the mrjob docs for all the details on running your job on EC2.
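The cost arithmetic can be sketched in a few lines. This assumes the roughly ten cents per small instance per hour quoted above and whole-hour billing; `emr_cost` is a hypothetical helper, it covers only that per-instance figure, and current prices will differ.

```python
import math


def emr_cost(instances, minutes, hourly_rate=0.10):
    """Estimated cost in dollars, billing each instance in whole hours.

    hourly_rate defaults to the approximate 2011 small-instance price
    mentioned in the text (an assumption, not a current quote).
    """
    billed_hours = math.ceil(minutes / 60.0)
    return instances * billed_hours * hourly_rate


print(emr_cost(100, 20))  # 100 instances, finishing within the first hour
print(emr_cost(10, 90))   # 10 instances, spilling into a second hour
```

Because billing rounds up to the hour, a 20 minute run on 100 instances costs the same as a 59 minute run, which is why fewer instances can be cheaper even though the job takes longer.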

The Results

The output of this job is a million calculated densities, one for each track in the MSD. We can sort this data to find the most and least dense tracks in the dataset. Here are some high density examples:

Ichigo Ichie by Ryuji Takeuchi has a density of 9.2 segments/second

Ichigo Ichie by Ryuji Takeuchi

129 by Strojovna 07 has a density of 9.2 segments/second

129 by Strojovna 07

The Feeding Circle by Makaton has a density of 9.1 segments/second

The Feeding Circle by Makaton

These pass the audio test: they are indeed high density tracks. Now let’s look at some of the lowest density tracks.

Deviation by Biosphere with a density of 0.014 segments per second

Deviation by Biosphere

The Wire IV by Alvin Lucier with a density of 0.014 segments per second

The Wire IV by Alvin Lucier

improvisiation_122904b by Richard Chartier with a density of .02 segments per second

improvisation by Richard Chartier

Wrapping up

The ‘density’ MapReduce task is about as simple a task for processing the MSD as you’ll find. Consider this the ‘hello, world’ of the MSD. Over the next few weeks, I’ll be creating some more complex and hopefully interesting tasks that show some of the knowledge about music that can be gleaned from the MSD.

(Thanks to Thierry Bertin-Mahieux for his work in creating the MSD and setting up the S3 buckets. Thanks to 7Digital for providing the audio samples)

How do you discover music?

Posted in Music, recommendation, research on August 18, 2011

I’m interested in learning more about how people are discovering new music. I hope that you will spend 2 mins and take this 3 question poll. I’ll publish the results in a few weeks.

Loudest songs in the world

Posted in fun, Music, The Echo Nest on August 17, 2011

Lots of ink has been spilled about the Loudness war and how modern recordings keep getting louder as a cheap method of grabbing a listener’s attention. We know that, in general, music is getting louder. But what are the loudest songs? We can use The Echo Nest API to answer this question. Since the Echo Nest has analyzed millions and millions of songs, we can make a simple API query that will return the set of loudest songs known to man. (For the hardcore geeks, here’s the API query that I used). Note that I’ve restricted the results to those in the 7Digital-US catalog in order to guarantee that I’ll have a 30 second preview for each song.

So without further ado, here are the loudest songs:

Topping and Core by Grimalkin555

The song Topping and Core by Grimalkin555 has a whopping loudness of 4.428 dB.

Modifications by Micron

The song Modifications by Micron has a loudness of 4.318 dB.

Hey You Fuxxx! by Kylie Minoise

The song Hey You Fuxxx! by Kylie Minoise has a loudness of 4.231 dB.

Here’s a little taste of Kylie Minoise live (you may want to turn down your volume)

War Memorial Exit by Noma

The song War Memorial Exit by Noma has a loudness of 4.166 dB.

Hello Dirty 10 by Massimo

The song Hello Dirty 10 by Massimo with a loudness of 4.121 dB.

These songs are pretty niche, so I thought it might be interesting to look at the loudest songs culled from the most popular songs. Here’s the query to do that. The loudest popular song is:

Welcome to the Jungle by Guns 'N Roses

The loudest popular song is Welcome to the Jungle by Guns ‘N Roses with a loudness of -1.931 dB.

You may be wondering how a loudness value can be greater than 0 dB. Loudness is a complex measurement that is both a function of time and frequency. Unlike traditional loudness measures, The Echo Nest analysis models loudness via a human model of listening, instead of directly mapping loudness from the recorded signal. For instance, with a traditional dB model a simple sinusoidal function would be measured as having the same exact “amplitude” (in dB) whether at 3 kHz or 12 kHz. But with The Echo Nest model, the loudness is lower at 12 kHz than it is at 3 kHz because you actually perceive those signals differently.

Thanks to the always awesome 7Digital for providing album art and 30 second previews in this post.

25 SXSW Music Panels worth voting for

Posted in events, Music, The Echo Nest on August 16, 2011

Yesterday, SXSW opened up the 2012 Panel Picker allowing you to vote up (or down) your favorite panels. The SXSW organizers will use the voting info to help whittle the nearly 3,600 proposals down to 500. I took a tour through the list of music related panel proposals and selected a few that I think are worth voting for. Talks in green are on my “can’t miss this talk” list. Note that I work with or have collaborated with many of the speakers on my list, so my list can not be construed as objective in any way.

There are many recurring themes. Turntable.fm is everywhere. Everyone wants to talk about the role of the curator in this new world of algorithmic music recommendations. And Spotify is not to be found anywhere!

I’ve broken my list down into a few categories:

Social Music – there must be twenty panels related to social music. (Eleven(!) have something to do with Turntable.fm) My favorites are:

- Social Music Strategies: Viral & the Power of Free – with folks from MOG, Turntable, Sirius XM, Facebook and Fred Wilson. I’m not a big fan of big panels (by the time you get done with the introductions, it is time for Q&A), but this panel seems stacked with people with an interesting perspective on the social music scene. I’m particularly interested in hearing the different perspectives from Turntable vs. Sirius XM.

- Can Social Music Save the Music Industry? – Rdio, Turntable, Gartner, Rootmusic, Songkick – Another good lineup of speakers (Turntable.fm is everywhere at SXSW this year) exploring social music. Curiously, there’s no Spotify here (or as far as I can tell on any talks at SXSW).

- Turntable.fm the Future of Music is Social – Turntable.fm – This is the turntable.fm story.

- Reinventing Tribal Music in the land of Earbuds – AT&T – this talk explores how music consumption changes with new social services and the technical/sociological issues that arise when people are once again free to choose and listen to music together.

Man vs. Machine – what is the role of the human curator in this age of algorithmic recommendation and music. Curiously, there are at least 5 panel proposals on this topic.

- Music Discovery:Man Vs. Machine – MOG, KCRW, Turntable.fm, Heather Browne

- Music/Radio Content: Tastemakers vs. Automation – Slacker

- Editor vs. Algorithm in the Music Discovery Space – SPIN, Hype Machine, Echo Nest, 7Digital

- Curation in the age of mechanical recommendations – Matt Ogle / Echo Nest – This is my pick for the Man vs. Machine talk. Matt is *the* man when it comes to understanding what is going on in the world of music listening experience.

- Crowding out the Experts – Social Taste Formation – Last.fm, Via, Rolling Stone – Is social media reducing the importance of reviewers and traditional cultural gatekeepers? Are Yelp, Twitter, Last.fm and other platforms creating a new class of tastemakers?

Music Discovery – A half dozen panels on music recommendation and discovery. Favs include:

- YouKnowYouWantIt: Recommendation Engines that Rock – Netflix, Pandora, Match.com – this panel is filled with recommendation rock stars

- The Dark Art of Digital Music Recommendations – Rovi – Michael Papish of Rovi promises to dive under the hood of music recommendation.

- No Points for Style: Genre vs. Music Networks – SceneMachine – Any talk proposal with statements like “Genre uses a 19th-century tool — a Darwinian tree — to solve a 21st-century problem. And unlike evolutionary science, it’s subjective. By the time a genre branch has been labeled (viz. “grunge”), the scene it describes is as dead as Australopithecus.” is worth checking out.

Mobile Music – Is that a million songs in your pocket or are you just glad to see me?

- Music Everywhere: Are we there yet? – Soundcloud, Songkick, Jawbone – Have we arrived at the proverbial celestial jukebox? What are the challenges?

Big Data – exploring big data sets to learn about music

- Data Mining Music – Paul Lamere – Shameless self promotion. What can we learn if we have really deep data about millions of songs?

- The Wisdom of Thieves: Meaning in P2P Behavior – Ben Fields – Don’t miss Ben’s talk about what we can learn about music (and other media) from mining P2P behavior. This talk is on my must see list.

- Big data for Everyman: Help liberate the data serf – Splunk – webifying and exploiting big data

Echo Nesty panels – proposals from folks from the nest. Of course, I recommend all of these fine talks.

- Active Listening – Tristan Jehan – Tristan takes a look at how the music experience is changing now that the listener can take much more active control of the listening experience. There’s no one who understands music analysis and understanding better than Tristan.

- Data Mining Music – Paul Lamere – This is my awesome talk about extracting info from big data sets like the Million Song Dataset. If you are a regular reader of this blog, you’ll know that I’ll be looking at things like click track detectors, passion indexes, loudness wars and so on.

- What’s a music fan worth? – Jim Lucchese – Echo Nest CEO takes a look at the economics of music, from iOS apps to musicians. Jim knows this stuff better than anyone.

- Music Apps Gone Wild – Eliot Van Buskirk – Eliot takes a tour of the most advanced, wackiest music apps that exist — or are on their way to existing.

- Curation in the age of mechanical recommendations – Matt Ogle – Matt is a phenomenal speaker and thinker in the music space. His take on the role of the curator in this world of algorithms is at the top of my SXSW panel list.

- Editor vs. Algorithm in the Music Discovery Space – SPIN, Hype Machine, Echo Nest (Jim Lucchese), 7Digital

- Defining Music Discovery through Listening – Echo Nest (Tristan Jehan), Hunted Media – This session will examine “true” music discovery through listening and how technology is the facilitator.

Miscellaneous topics

- Designing Future Music Experiences – Rdio, Turntable, Mary Fagot – A look at the user experience for next generation music apps.

- Music at the App Store: Lessons from Eno and Björk – Are albums as apps gimmicks or do they provide real value?

- Participatory Culture: The Discourse of Pitchfork – An analysis of ten years of music writing to extract themes.

Data Mining Music – a SXSW 2012 Panel Proposal

Posted in data, events, Music, music information retrieval, The Echo Nest on August 15, 2011

I’ve submitted a proposal for a SXSW 2012 panel called Data Mining Music. The PanelPicker page for the talk is here: Data Mining Music. If you feel so inclined feel free to comment and/or vote for the talk. I promise to fill the talk with all sorts of fun info that you can extract from datasets like the Million Song Dataset.

Here’s the abstract:

Data mining is the process of extracting patterns and knowledge from large data sets. It has already helped revolutionize fields as diverse as advertising and medicine. In this talk we dive into mega-scale music data such as the Million Song Dataset (a recently released, freely-available collection of detailed audio features and metadata for a million contemporary popular music tracks) to help us get a better understanding of the music and the artists that perform the music.

We explore how we can use music data mining for tasks such as automatic genre detection, song similarity for music recommendation, and data visualization for music exploration and discovery. We use these techniques to try to answer questions about music such as: Which drummers use click tracks to help set the tempo? or Is music really faster and louder than it used to be? Finally, we look at techniques and challenges in processing these extremely large datasets.

Questions answered:

- What large music datasets are available for data mining?

- What insights about music can we gain from mining acoustic music data?

- What can we learn from mining music listener behavior data?

- Who is a better drummer: Buddy Rich or Neil Peart?

- What are some of the challenges in processing these extremely large datasets?

Flickr photo CC by tristanf

Amazon strikes back

Posted in Music on August 10, 2011

Last month we saw how Amazon had to change its Kindle iOS app to comply with Apple’s TOS. Amazon eliminated the ‘Kindle Store’ button, making it harder for Kindle readers to purchase books. Today, Amazon has fought back by releasing the Amazon Kindle Cloud Reader – a pure HTML5 web application for reading books. The cloud reader lets you do anything that the native Kindle app does, including offline reading. And, since HTML5 apps are not subject to Apple’s TOS, the Kindle Cloud Reader brings back integration with the Kindle Store.

This may ultimately become the most viable route for music subscription services as well. Instead of creating native iOS apps, music services may look to create rich web apps instead. HTML5 is certainly capable enough, and soon audio support and local caching will be mature enough to support even the most sophisticated music listening app. MOG has already converted their main application to HTML5. I suspect more will follow suit. As HTML5 improves, we may see an exodus away from iOS. The more you tighten your grip, Apple, the more applications will slip through your fingers.

How are music services responding to Apple’s new TOS?

Posted in Music, recommendation on August 4, 2011

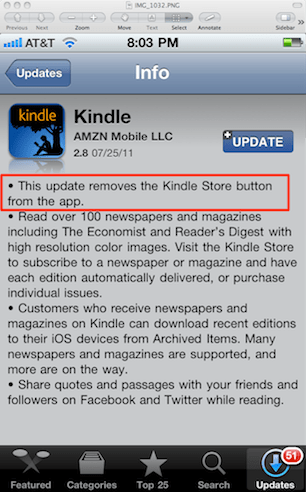

There’s been quite a bit of turmoil around how iOS developers can sell products and subscriptions within their iOS application. Apple says, essentially, if you sell stuff within your app you have to give Apple a 30% cut and you can’t try to pass costs onto the customer by charging more for items purchased within an app. The cost for an item must be the same whether it was purchased through the app or through some other means. Update: In June, MacRumors reported that Apple updated its TOS so that content providers are now also free to charge whatever price they wish for content purchased outside of an app. Apple also says that you can no longer have a button or a link in your app to a website where a user can purchase content without giving Apple their 30% cut.

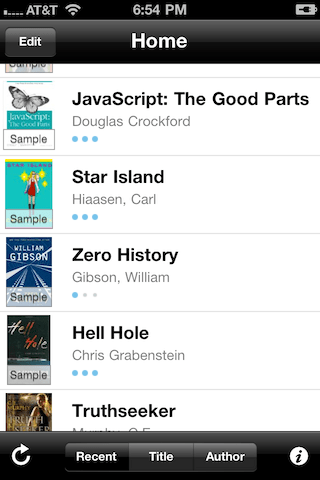

For most media industries, there is not enough left of the profit pie to allow Apple to take 30% of it. This has left most media companies in a quandary over how to continue to give their users a good experience without bankrupting their company. Many folks looked toward Amazon to see how they would react. Amazon’s Kindle reader is used by millions of iPad and iPhone readers to purchase and read digital books. Amazon’s solution was simple. Last week they issued an update to their Kindle Reader iOS app that removed the Kindle Store button. This means that users of the Kindle iOS app can no longer launch a book shopping session from within the Kindle app. Here’s the update:

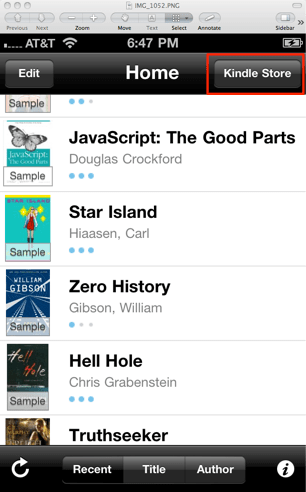

Before the update, the Kindle app looked like this, with a very visible Kindle Store button that would take you to the Kindle web store, where you could buy Kindle books:

After the update, the Kindle App looks like this. The Kindle Store button is gone.

What are music services doing?

I was curious to see how various music subscription services were dealing with the same issue. I fired up the apps, checked for updates and this is what I found.

Spotify

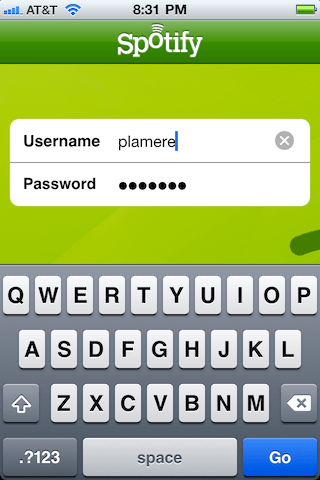

Spotify updated their app to get rid of any in-app purchases or subscription links just like the Kindle. You can only listen to Spotify mobile if you already have a Spotify mobile account.

When you log in to Spotify there is no option to register an account. Spotify just assumes that you have already registered and are ready to log in and start using the app:

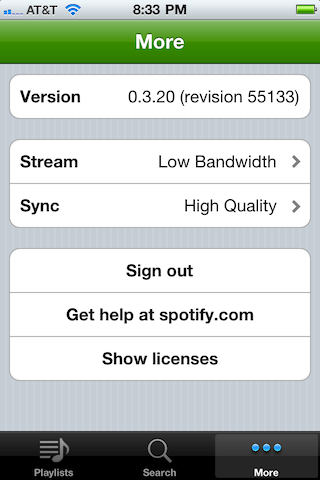

Curiously, there is a ‘Get help at Spotify.com’ button on the More page of the app. This will open a web browser and bring you to the Spotify Help page, which puts you two clicks away from a ‘subscribe’ button. This must cut pretty close to Apple’s rules about links to web sites.

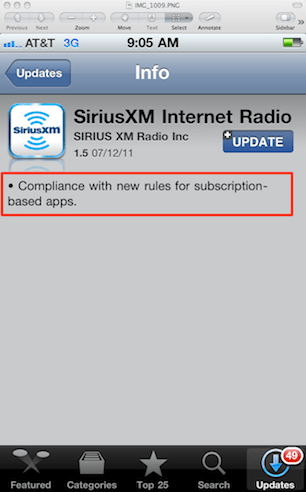

SiriusXM

As with Spotify, SiriusXM removed any links back to their site. Only people that already have a SiriusXM account can use the SiriusXM app.

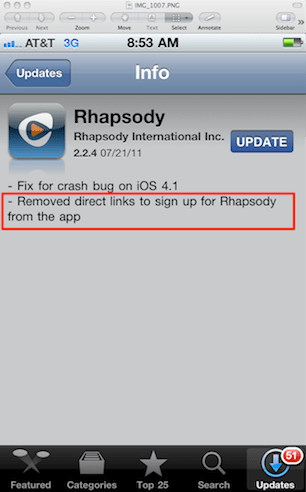

Rhapsody

Same story for Rhapsody: there’s no way to get a subscription for Rhapsody within the Rhapsody application.

MOG

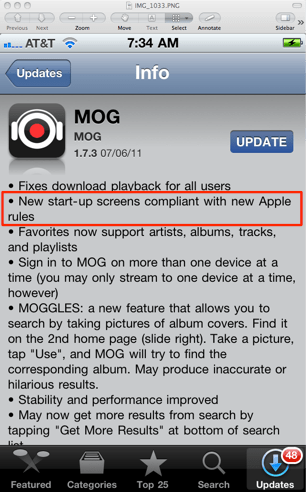

MOG issued an update in July that removed links to the MOG subscription portal.

Napster

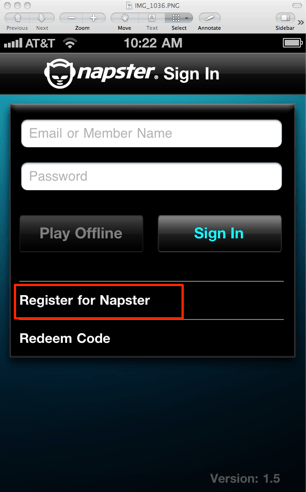

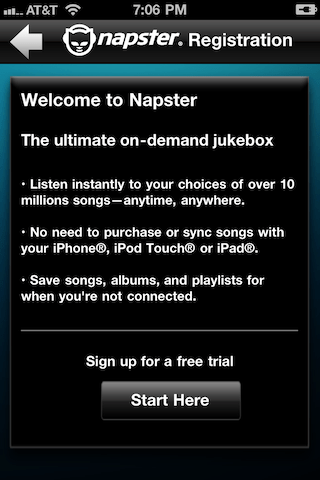

Interestingly enough, the very latest version of Napster happily allows you to register for Napster through the application. On the Sign In page there is a prominent Register for Napster button.

Pressing the button brings you to a Registration page where you can sign up for a 7-day free trial.

I wonder what happens if a 7-day free trial user converts to a paying subscriber. Does Apple get 30% or is Napster hoping that no one notices?

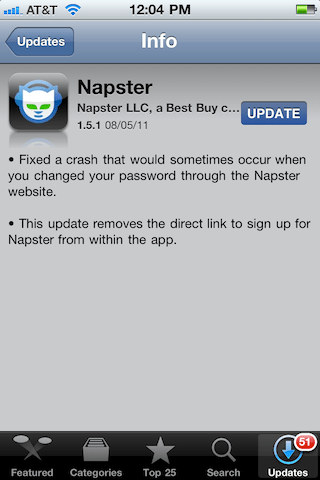

Update – A Napster update was released one day after this post was published that eliminates the direct signup link:

Slacker

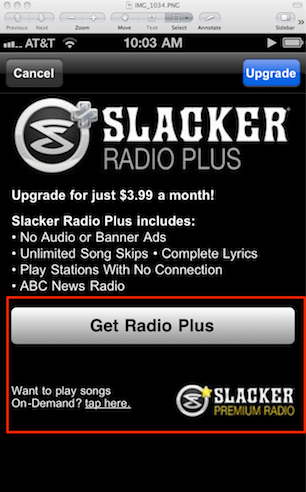

Slacker’s $3.99 a month Radio Plus product is included as a prominent upgrade in the Slacker app. If you hit the upgrade button you will get a form to fill out with all of your credit card info so they can start charging you the 4 bucks. The question is whether or not Apple is getting $1.20 of that 4 bucks.

Pandora

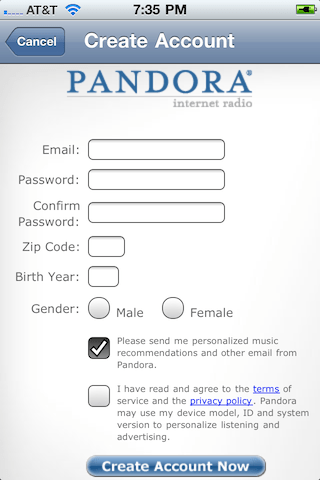

With Pandora you can create a free account through the mobile app, but there is no mention of a premium account, nor are there any links to Pandora.com as far as I can tell.

Last.fm

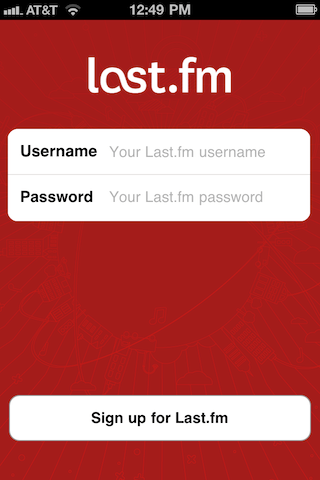

Just like Pandora, the Last.fm app will let you sign up for a non-premium account via the app and makes no mention or attempt to upsell you to a paid account:

Rdio

Rdio takes a similar approach to Pandora and Last.fm. It allows users to sign up for a 7-day free trial account via the app. It makes no mention of, and has no links to, a premium subscription page. It is not clear to me what happens at the end of the trial period, whether they will prompt you to visit Rdio, or if they just say “Your free trial is over, thanks for listening”.

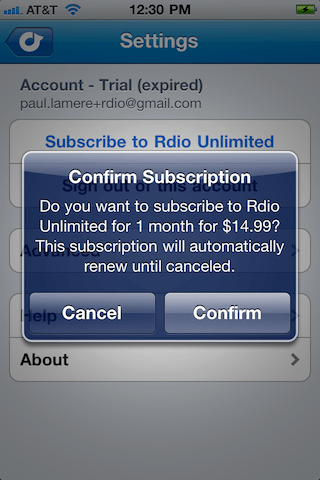

Update – It is a moving target out there. Rdio issued an update yesterday that now allows you to purchase a monthly subscription in the app. With the new version you can now click on the ‘Subscribe to Rdio Unlimited’ button. When you do, you receive this confirmation dialog:

This allows you to purchase the Rdio subscription for $14.99, which just happens to be 33% more than an Rdio Unlimited subscription would cost if purchased directly from the web. Rdio is taking advantage of Apple’s recent relaxation of the rules and seeing how in-app subscription purchases stack up against cheaper out-of-app purchases. There’s a good LA Times article, ‘Rdio attempts to survive Apple’s subscription tax’, that describes Rdio’s approach to dealing with this issue.

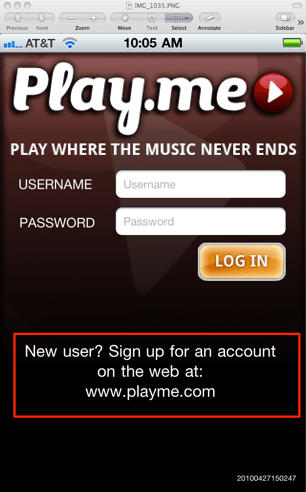

Playme

The latest version of Playme doesn’t have a button or link that brings you to the Playme subscription page. It does, however, display http://www.playme.com prominently on the sign in page so you can type the URL directly into your browser. I guess technically the words http://www.playme.com are not a link if you can’t click or tap it to go there.

Grooveshark

Grooveshark has never been timid about walking up to the line and stepping across it. The only way to get Grooveshark on an iOS device is to jailbreak your device. With a jailbroken version, Grooveshark doesn’t need to pay anyone for anything.

Conclusion

Apple has always been a company that prides itself on encouraging an excellent user experience. However, when Apple had to weigh a good user experience against potentially making 30% of every music subscription, they decided to screw over the user and go for the pot of money. The reality, however, is that no music streaming company will ever be able to afford to give Apple a 30% cut. The result is that these apps have to work around Apple’s rules, leading to a poor user experience and no money for Apple. Hopefully, by the end of the year, Apple will look at the bottom line and realize that they’ve made no extra money from the 30% rule, and instead have encouraged the creation of a big streaming pile of music apps that make the user jump through all sorts of unnecessary hoops for no good reason. Note, however, that the story isn’t over. Rdio is experimenting with in-app subscription purchases. If they are successful at this, in a few months’ time perhaps we’ll see Spotify, MOG, Rhapsody and the others try the same thing.

How do you spell ‘Britney Spears’?

Posted in code, data, Music, music information retrieval, research, The Echo Nest on July 28, 2011

I’ve been under the weather for the last couple of weeks, which has prevented me from doing most things, including blogging. Luckily, I had a blog post sitting in my drafts folder almost ready to go. I spent a bit of time today finishing it up, and so here it is. A look at the fascinating world of spelling correction for artist names.

In today’s digital music world, you will often look for music by typing an artist name into a search box of your favorite music app. However this becomes a problem if you don’t know how to spell the name of the artist you are looking for. This is probably not much of a problem if you are looking for U2, but it most definitely is a problem if you are looking for Röyksopp, Jamiroquai or Britney Spears. To help solve this problem, we can try to identify common misspellings for artists and use these misspellings to help steer you to the artists that you are looking for.

A spelling corrector in 21 lines of code

A good place for us to start is a post by Peter Norvig (Director of Research at Google) called ‘How to write a spelling corrector’, which presents a fully operational spelling corrector in 21 lines of Python. (It is a phenomenal bit of code, worth the time it takes to study.) At the core of Peter’s algorithm is the concept of the edit distance, which is a way to represent the similarity of two strings by calculating the number of operations (inserts, deletes, replacements and transpositions) needed to transform one string into the other. Peter cites literature that suggests that 80 to 95% of spelling errors are within an edit distance of 1 (meaning that most misspellings are just one insert, delete, replacement or transposition away from the correct word). Not being satisfied with that accuracy, Peter’s algorithm considers all words that are within an edit distance of 2 as candidates for his spelling corrector. For Peter’s small test case (he wrote his system on a plane so he didn’t have lots of data nearby), his corrector covered 98.9% of his test cases.
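
To make the edit distance concrete, here is a minimal sketch of one common way to compute it with dynamic programming (the "optimal string alignment" variant, which counts adjacent transpositions as a single edit). This is not Peter's code, and the function name `edit_distance` is just an illustrative choice.

```python
def edit_distance(a: str, b: str) -> int:
    """Count the inserts, deletes, replacements and adjacent
    transpositions needed to turn a into b (optimal string alignment)."""
    rows, cols = len(a) + 1, len(b) + 1
    # d[i][j] = distance between the first i chars of a and the first j chars of b
    d = [[0] * cols for _ in range(rows)]
    for i in range(rows):
        d[i][0] = i  # delete all i characters
    for j in range(cols):
        d[0][j] = j  # insert all j characters
    for i in range(1, rows):
        for j in range(1, cols):
            cost = 0 if a[i - 1] == b[j - 1] else 1
            d[i][j] = min(
                d[i - 1][j] + 1,         # delete
                d[i][j - 1] + 1,         # insert
                d[i - 1][j - 1] + cost,  # replace (or match)
            )
            # adjacent transposition, e.g. "nt" <-> "tn"
            if i > 1 and j > 1 and a[i - 1] == b[j - 2] and a[i - 2] == b[j - 1]:
                d[i][j] = min(d[i][j], d[i - 2][j - 2] + 1)
    return d[-1][-1]


print(edit_distance("britny", "britney"))    # a single missing letter
print(edit_distance("brintey", "britney"))   # two adjacent letters swapped
print(edit_distance("birtheny", "britney"))  # further away than two edits
```

The first two misspellings are each one edit away, so a corrector limited to an edit distance of 2 would reach them, while the third is not reachable within that limit.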

Spell checking Britney

A few years ago, the smart folks at Google posted a list of Britney Spears spelling corrections that shows nearly 600 variants on Ms. Spears’ name collected in three months of Google searches. Perusing the list, you’ll find all sorts of interesting variations such as ‘birtheny spears’, ‘brinsley spears’ and ‘britain spears’. I suspect that some of these queries (like ‘Brandi Spears’) may actually not be for the pop artist. One curiosity in the list is that although there are 600 variations on the spelling of ‘Britney’ there is exactly one way that ‘spears’ is spelled. There’s no ‘speers’ or ‘spheres’, or ‘britany’s beers’ on this list.

One thing I did notice about Google’s list of Britneys is that there are many variations that seem to be further away from the correct spelling than an edit distance of two at the core of Peter’s algorithm. This means that if you give these variants to Peter’s spelling corrector, it won’t find the proper spelling. Being an empiricist I tried it and found that of the 593 variants of ‘Britney Spears’, 200 were not within an edit distance of two of the proper spelling and would not be correctable. This is not too surprising. Names are traditionally hard to spell, there are many alternative spellings for the name ‘Britney’ that are real names, and many people searching for music artists for the first time may have only heard the name pronounced and have never seen it in its written form.
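
The kind of check described above can be sketched roughly as follows. The `edits1` function is modeled on the candidate-generation idea in Peter's post (every string one edit away), with a space added to the alphabet so that two-word names work; `within_two_edits` and the sample list are illustrative assumptions, not the code that produced the 200-out-of-593 figure. The first sample, 'britny spears', is a made-up near miss; the other two come from the variants quoted earlier.

```python
import string

# Lowercase letters plus space, so multi-word artist names can be edited.
ALPHABET = string.ascii_lowercase + " "


def edits1(word):
    """All strings one insert, delete, replacement or transposition away."""
    splits = [(word[:i], word[i:]) for i in range(len(word) + 1)]
    deletes = [l + r[1:] for l, r in splits if r]
    transposes = [l + r[1] + r[0] + r[2:] for l, r in splits if len(r) > 1]
    replaces = [l + c + r[1:] for l, r in splits if r for c in ALPHABET]
    inserts = [l + c + r for l, r in splits for c in ALPHABET]
    return set(deletes + transposes + replaces + inserts)


def within_two_edits(query, target):
    """True if target can be reached from query in at most two edits."""
    if query == target:
        return True
    first = edits1(query)
    return target in first or any(target in edits1(e) for e in first)


TARGET = "britney spears"
samples = ["britny spears", "birtheny spears", "britain spears"]
results = {q: within_two_edits(q, TARGET) for q in samples}
for query, reachable in results.items():
    print(query, "->", "correctable" if reachable else "out of reach")
```

Any variant reported as out of reach here is one that a corrector limited to two edits could never map back to the proper spelling, no matter how good its word frequencies are.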

Making it better with an artist-oriented spell checker

A 33% miss rate for a popular artist’s name seems a bit high, so I thought I’d see if I could improve on this. I have one big advantage that Peter didn’t. I work for a music data company so I can be pretty confident that all the search queries that I see are going to be related to music. Restricting the possible vocabulary to just artist names makes things a whole lot easier. The algorithm couldn’t be simpler. Collect the names of the top 100K most popular artists. For each artist name query, find the artist name with the smallest edit distance to the query and return that name as the best candidate match. This algorithm will let us find the closest matching artist even if it has an edit distance of more than 2, the limit in Peter’s algorithm. When I run this against the 593 Britney Spears misspellings, I only get one mismatch – ‘brandi spears’ is closer to the artist ‘burning spear’ than it is to ‘Britney Spears’. Considering the naive implementation, the algorithm is fairly fast (40 ms per query on my 2.5-year-old laptop, in Python).
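
A minimal sketch of this nearest-name lookup might look like the following. The five-name `ARTISTS` list is a tiny hypothetical stand-in for the top 100K artists, and `edit_distance` and `closest_artist` are illustrative names rather than the actual implementation, whose exact distance function and name normalization are not shown in this post.

```python
def edit_distance(a, b):
    """Edit distance allowing inserts, deletes, replacements and
    adjacent transpositions, keeping only the last three rows."""
    prev2, prev = None, list(range(len(b) + 1))
    for i in range(1, len(a) + 1):
        cur = [i] + [0] * len(b)
        for j in range(1, len(b) + 1):
            cost = a[i - 1] != b[j - 1]
            cur[j] = min(prev[j] + 1, cur[j - 1] + 1, prev[j - 1] + cost)
            if i > 1 and j > 1 and a[i - 1] == b[j - 2] and a[i - 2] == b[j - 1]:
                cur[j] = min(cur[j], prev2[j - 2] + 1)
        prev2, prev = prev, cur
    return prev[len(b)]


# Hypothetical stand-in for the list of the most popular artist names.
ARTISTS = [
    "britney spears",
    "burning spear",
    "katy perry",
    "michael jackson",
    "justin bieber",
]


def closest_artist(query, artists=ARTISTS):
    """Return the artist name with the smallest edit distance to the query."""
    query = query.lower().strip()
    return min(artists, key=lambda name: edit_distance(query, name))


for q in ["birtheny spears", "katie perry", "Micheal Jackson"]:
    print(q, "->", closest_artist(q))
```

Because the lookup always returns the nearest name rather than giving up beyond two edits, a variant like 'birtheny spears' still resolves to the right artist. The linear scan over every name is the naive part; it is what makes the per-query cost grow with the size of the artist list.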

Looking at spelling variations

With this artist-oriented spelling checker in hand, I decided to take a look at some real artist queries to see what interesting things I could find buried within. I gathered some artist name search queries from the Echo Nest API logs and looked for some interesting patterns (since I’m doing this at home over the weekend, I only looked at the most recent logs, which consist of only about 2 million artist name queries).

Artists with most spelling variations

Not surprisingly, very popular artists are the most frequently misspelled. It seems that just about every permutation has been made in an attempt to spell these artists.

- Michael Jackson – Variations: michael jackson, micheal jackson, michel jackson, mickael jackson, mickal jackson, michael jacson, mihceal jackson, mickeljackson, michel jakson, micheal jaskcon, michal jackson, michael jackson by pbtone, mical jachson, micahle jackson, machael jackson, muickael jackson, mikael jackson, miechle jackson, mickel jackson, mickeal jackson, michkeal jackson, michele jakson, micheal jaskson, micheal jasckson, micheal jakson, micheal jackston, micheal jackson just beat, micheal jackson, michal jakson, michaeljackson, michael joseph jackson, michael jayston, michael jakson, michael jackson mania!, michael jackson and friends, michael jackaon, micael jackson, machel jackson, jichael mackson

- Justin Bieber – Variations: justin bieber, justin beiber, i just got bieber’ed by, justin biber, justin bieber baby, justin beber, justin bebbier, justin beaber, justien beiber, sjustin beiber, justinbieber, justin_bieber, justin. bieber, justin bierber, justin bieber<3 4 ever<3, justin bieber x mstrkrft, justin bieber x, justin bieber and selens gomaz, justin bieber and rascal flats, justin bibar, justin bever, justin beiber baby, justin beeber, justin bebber, justin bebar, justien berbier, justen bever, justebibar, jsustin bieber, jastin bieber, jastin beiber, jasten biber, jasten beber songs, gestin bieber, eiine mainie justin bieber, baby justin bieber,

- Red Hot Chili Peppers – Variations: red hot chilli peppers, the red hot chili peppers, red hot chilli pipers, red hot chilli pepers, red hot chili, red hot chilly peppers, red hot chili pepers, hot red chili pepers, red hot chilli peppears, redhotchillipeppers, redhotchilipeppers, redhotchilipepers, redhot chili peppers, redhot chili pepers, red not chili peppers, red hot chily papers, red hot chilli peppers greatest hits, red hot chilli pepper, red hot chilli peepers, red hot chilli pappers, red hot chili pepper, red hot chile peppers

- Mumford and Sons – Variations: mumford and sons, mumford and sons cave, mumford and son, munford and sons, mummford and sons, mumford son, momford and sons, modfod and sons, munfordandsons, munford and son, mumfrund and sons, mumfors and sons, mumford sons, mumford ans sons, mumford and sonns, mumford and songs, mumford and sona, mumford and, mumford &sons, mumfird and sons, mumfadeleord and sons

- Katy Perry – Even an artist with a seemingly very simple name like Katy Perry has numerous variations: katy perry, katie perry, kate perry, kathy perry, katy perry ft.kanye west, katty perry, katy perry i kissed a girl, peacock katy perry, katyperry, katey parey, kety perry, kety peliy, katy pwrry, katy perry-firework, katy perry x, katy perry, katy perris, katy parry, kati perry, kathy pery, katey perry, katey perey, katey peliy, kata perry, kaity perry
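
Lists like the ones above can be produced by mapping each logged query to its best-matching artist and collecting the distinct spellings per artist. The sketch below shows the idea on a handful of queries taken from those lists. To keep it short it swaps in `difflib.get_close_matches` from the Python standard library as the matcher, which ranks by a similarity ratio rather than the edit distance used in this post; the `ARTISTS` and `QUERIES` lists and the 0.6 cutoff are illustrative assumptions.

```python
from collections import defaultdict
from difflib import get_close_matches

# Tiny hypothetical stand-ins for the artist list and the query log.
ARTISTS = ["michael jackson", "justin bieber", "katy perry"]
QUERIES = [
    "micheal jackson", "justin beiber", "katie perry", "michael jakson",
    "justin biber", "kate perry", "micheal jackson",
]


def group_variations(queries, artists):
    """Map each artist to the set of distinct query spellings that match it."""
    groups = defaultdict(set)
    for q in queries:
        # Stand-in matcher: best name by similarity ratio, not edit distance.
        match = get_close_matches(q.lower().strip(), artists, n=1, cutoff=0.6)
        if match:
            groups[match[0]].add(q)
    return groups


groups = group_variations(QUERIES, ARTISTS)
# Artists with the most distinct spellings first.
for artist, variants in sorted(groups.items(), key=lambda kv: -len(kv[1])):
    print(artist, len(variants), sorted(variants))
```

Using a set per artist means a misspelling that shows up many times in the log still counts as one variation, which is what a "most spelling variations" ranking needs.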

Some other most frequently misspelled artists:

- Britney Spears

- Linkin Park

- Arctic Monkeys

- Katy Perry

- Guns N’ Roses

- Nicki Minaj

- Muse

- Weezer

- U2

- Oasis

- Moby

- Flyleaf

- Seether

- byran adams – ryan adams

- Underworld – Uverworld