Posts Tagged ismir09

ISMIR Oral Session 5 – Tags


Session Chair: Paul Lamere

Since I’m chairing this session, I can’t take notes, so instead I offer the abstracts.

TAG INTEGRATED MULTI-LABEL MUSIC STYLE CLASSIFICATION WITH HYPERGRAPH

Fei Wang, Xin Wang, Bo Shao, Tao Li, Mitsunori Ogihara

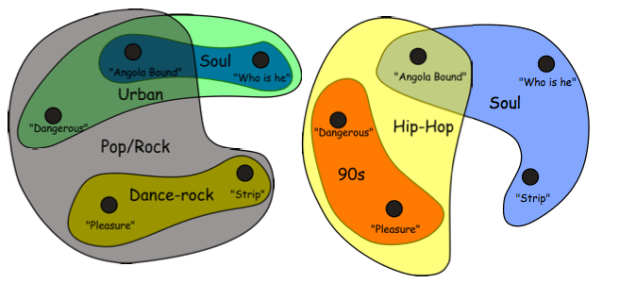

Abstract: Automatic music style classification is an important but challenging problem in music information retrieval. It has a number of applications, such as indexing of and searching in musical databases. Traditional music style classification approaches usually assume that each piece of music has a unique style, and they use the music contents to construct a classifier that assigns each piece to its unique style. However, in reality, a piece may match more than one style, or even several different styles. Also, in this modern Web 2.0 era, it is easy to get hold of additional, indirect information (e.g., music tags) about music. This paper proposes a multi-label music style classification approach, called Hypergraph integrated Support Vector Machine (HiSVM), which can integrate both music contents and music tags for automatic music style classification. Experimental results based on a real-world data set are presented to demonstrate the effectiveness of the method.
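The abstract doesn't say how the tags enter the hypergraph. One common construction, and purely my assumption here, treats each tag as a hyperedge joining every song that carries it, then uses the normalized hypergraph Laplacian of Zhou, Huang and Schölkopf (2006) as a smoothness term. The sketch below builds that Laplacian in plain Python; the function name and toy data are hypothetical, and it is not claimed to be HiSVM's exact formulation.

```python
import math


def hypergraph_laplacian(songs, song_tags, edge_weight=None):
    """Normalized hypergraph Laplacian I - Dv^-1/2 H W De^-1 H^T Dv^-1/2.

    Hypothetical helper: each tag is treated as one hyperedge (an assumption).
    songs: ordered list of song ids (the vertices).
    song_tags: dict song id -> set of tags.
    edge_weight: optional dict tag -> weight w(e); defaults to 1.0.
    """
    tags = sorted({t for s in songs for t in song_tags.get(s, ())})
    w = {t: (edge_weight or {}).get(t, 1.0) for t in tags}
    n = len(songs)
    # Incidence matrix: H[i][j] = 1 if song i carries tag j.
    H = [[1.0 if t in song_tags.get(s, ()) else 0.0 for t in tags] for s in songs]
    # Edge degree: how many songs each hyperedge (tag) contains.
    d_e = [sum(H[i][j] for i in range(n)) for j in range(len(tags))]
    # Vertex degree: weighted number of hyperedges containing each song.
    d_v = [sum(w[t] * H[i][j] for j, t in enumerate(tags)) for i in range(n)]
    L = [[0.0] * n for _ in range(n)]
    for a in range(n):
        for b in range(n):
            theta = sum(w[t] * H[a][j] * H[b][j] / d_e[j] for j, t in enumerate(tags))
            if d_v[a] > 0 and d_v[b] > 0:
                theta /= math.sqrt(d_v[a] * d_v[b])
            L[a][b] = (1.0 if a == b else 0.0) - theta
    return L


songs = ["s1", "s2", "s3"]
song_tags = {"s1": {"jazz", "mellow"}, "s2": {"jazz"}, "s3": {"rock"}}
L = hypergraph_laplacian(songs, song_tags)
print(round(L[0][0], 4), round(L[0][1], 4))  # 0.25 -0.3536
```

A quadratic penalty such as f^T L f is small when songs that share tags get similar style scores f; the abstract does not say how HiSVM combines a term like this with the SVM objective.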

EASY AS CBA: A SIMPLE PROBABILISTIC MODEL FOR TAGGING MUSIC

Matthew D. Hoffman, David M. Blei, Perry R. Cook

Abstract: Many songs in large music databases are not labeled with semantic tags that could help users sort out the songs they want to listen to from those they do not. If the words that apply to a song can be predicted from audio, then those predictions can be used to automatically annotate a song with tags, allowing users to see at a glance what qualities characterize it. Automatic tag prediction can also drive retrieval by allowing users to search for the songs most strongly characterized by a particular word. We present a probabilistic model that learns to predict the probability that a word applies to a song from audio. Our model is simple to implement, fast to train, predicts tags for new songs quickly, and achieves state-of-the-art performance on annotation and retrieval tasks.
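The abstract doesn't give the model's form. Going by the name (Codeword Bernoulli Average), I'm assuming each song is summarized as a histogram of vector-quantized audio "codewords", each codeword k has a per-tag Bernoulli parameter beta[k][w], and a song's tag probability is the codeword-frequency-weighted average of those parameters. Check that against the paper. The sketch covers prediction only, with made-up beta values (the model fits them from data), and shows both uses the abstract names: annotation and retrieval.

```python
def tag_probabilities(codeword_counts, beta):
    """Assumed CBA-style prediction: p(w) = sum_k (n_k / N) * beta[k][w].

    codeword_counts: counts n_k of each codeword k in one song.
    beta: beta[k][w] = probability that tag w applies given codeword k.
    """
    total = sum(codeword_counts)
    num_tags = len(beta[0])
    if total == 0:
        return [0.0] * num_tags
    return [sum(n * beta[k][w] for k, n in enumerate(codeword_counts)) / total
            for w in range(num_tags)]


def annotate(song_counts, beta, vocab, top_n=2):
    """Annotation: the top_n most probable tags for each song."""
    result = {}
    for song, counts in song_counts.items():
        probs = tag_probabilities(counts, beta)
        ranked = sorted(range(len(vocab)), key=lambda w: probs[w], reverse=True)
        result[song] = [vocab[w] for w in ranked[:top_n]]
    return result


def retrieve(song_counts, beta, vocab, word):
    """Retrieval: songs sorted by predicted probability for a single word."""
    w = vocab.index(word)
    scores = {s: tag_probabilities(c, beta)[w] for s, c in song_counts.items()}
    return sorted(scores, key=scores.get, reverse=True)


# Toy data (invented): two codewords, three tags, two songs.
vocab = ["guitar", "piano", "calm"]
beta = [[0.9, 0.1, 0.2],   # codeword 0: guitar-like frames
        [0.1, 0.8, 0.7]]   # codeword 1: piano-like frames
song_counts = {"a": [8, 2], "b": [1, 9]}
print(annotate(song_counts, beta, vocab))          # {'a': ['guitar', 'calm'], 'b': ['piano', 'calm']}
print(retrieve(song_counts, beta, vocab, "piano"))  # ['b', 'a']
```

Prediction is a single weighted average per tag, which fits the abstract's claim that the model tags new songs quickly.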

USING ARTIST SIMILARITY TO PROPAGATE SEMANTIC INFORMATION

Joon Hee Kim, Brian Tomasik, Douglas Turnbull

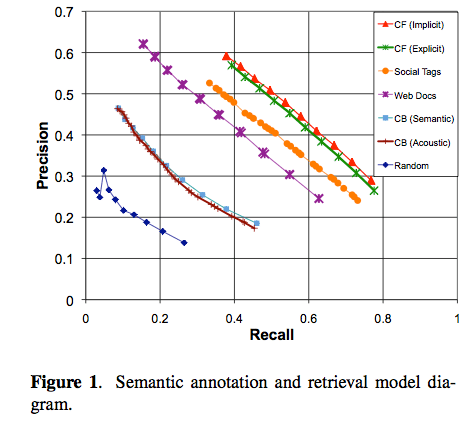

Abstract: Tags are useful text-based labels that encode semantic information about music (instrumentation, genres, emotions, geographic origins). While there are a number of ways to collect and generate tags, there is generally a data sparsity problem in which very few songs and artists have been accurately annotated with a sufficiently large set of relevant tags. We explore the idea of tag propagation to help alleviate the data sparsity problem. Tag propagation, originally proposed by Sordo et al., involves annotating a novel artist with tags that have been frequently associated with other similar artists. In this paper, we explore four approaches for computing artist similarity based on different sources of music information (user preference data, social tags, web documents, and audio content). We compare these approaches in terms of their ability to accurately propagate three different types of tags (genres, acoustic descriptors, social tags). We find that the approach based on collaborative filtering performs best. This is somewhat surprising considering that it is the only approach that is not explicitly based on notions of semantic similarity. We also find that tag propagation based on content-based music analysis results in relatively poor performance.
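As the abstract describes it, tag propagation is essentially a nearest-neighbor vote: find the artists most similar to the new one and give it the tags that show up often among them. Here's a minimal sketch assuming a precomputed similarity function (it could come from any of the four sources the paper compares), a neighborhood size k and a minimum vote fraction. The function name, parameters and toy data are mine, not the paper's.

```python
from collections import Counter


def propagate_tags(new_artist, artist_tags, similarity, k=3, min_fraction=0.5):
    """Annotate new_artist with tags that are frequent among its k most similar artists.

    artist_tags: dict artist -> set of tags for already-annotated artists.
    similarity: function (a, b) -> float, higher means more similar (assumed given).
    min_fraction: keep a tag if at least this fraction of the neighbors carry it.
    """
    candidates = [a for a in artist_tags if a != new_artist]
    neighbors = sorted(candidates, key=lambda a: similarity(new_artist, a), reverse=True)[:k]
    votes = Counter(tag for a in neighbors for tag in artist_tags[a])
    return {tag for tag, count in votes.items() if count / len(neighbors) >= min_fraction}


# Toy similarity scores, e.g. from collaborative filtering (invented numbers).
scores = {("new", "a"): 0.9, ("new", "b"): 0.8, ("new", "c"): 0.7, ("new", "d"): 0.1}


def similarity(x, y):
    return scores.get((x, y), 0.0)


artist_tags = {
    "a": {"rock", "guitar"},
    "b": {"rock", "female vocals"},
    "c": {"rock", "guitar", "punk"},
    "d": {"jazz"},
}
print(sorted(propagate_tags("new", artist_tags, similarity)))  # ['guitar', 'rock']
```

Swapping the similarity function is all it takes to compare similarity sources, which is the comparison the paper runs.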

MUSIC MOOD REPRESENTATIONS FROM SOCIAL TAGS


Cyril Laurier, Mohamed Sordo, Joan Serra, Perfecto Herrera

Abstract: This paper presents findings about mood representations. We aim to analyze how people tag music by mood, to create representations based on this data, and to study the agreement between experts and a large community. For this purpose, we create a semantic mood space from last.fm tags using Latent Semantic Analysis. With an unsupervised clustering approach, we derive from this space an ideal categorical representation. We compare our community-based semantic space with expert representations from Hevner and the clusters from the MIREX Audio Mood Classification task. Using dimensionality reduction with a Self-Organizing Map, we obtain a 2D representation that we compare with the dimensional model from Russell. We also present a tree diagram of the mood tags obtained with a hierarchical clustering approach. All these results show a consistency between the community and the experts, as well as some limitations of current expert models. This study demonstrates the particular relevance of the basic emotions model, with four mood clusters that can be summarized as: happy, sad, angry and tender. This outcome can help to create better ground truth and to provide more realistic mood classification algorithms. Furthermore, this method can be applied to other types of representations to build better computational models.
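The paper builds its mood space with LSA before clustering. The sketch below skips the LSA step for brevity (a plain simplification, not the paper's pipeline) and shows only the hierarchical-clustering idea: each mood tag is represented by how often it was applied to each track, tags are compared with cosine similarity, and clusters are merged by average linkage until the requested number remains. The track counts are invented for illustration.

```python
import math
from itertools import combinations


def cosine(u, v):
    """Cosine similarity between two equal-length count vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


def cluster_tags(tag_vectors, num_clusters):
    """Average-linkage agglomerative clustering of tags on cosine similarity.

    tag_vectors: dict tag -> list of per-track counts (same track order for every tag).
    Returns a sorted list of clusters, each a sorted list of tags.
    """
    def linkage(pair):
        a, b = pair
        sims = [cosine(tag_vectors[x], tag_vectors[y]) for x in a for y in b]
        return sum(sims) / len(sims)

    clusters = [[t] for t in sorted(tag_vectors)]
    while len(clusters) > max(num_clusters, 1):
        # Merge the pair of clusters with the highest average pairwise similarity.
        a, b = max(combinations(clusters, 2), key=linkage)
        clusters.remove(a)
        clusters.remove(b)
        clusters.append(sorted(a + b))
    return sorted(clusters)


# Invented tag-by-track counts over six tracks.
tag_vectors = {
    "happy":      [3, 2, 0, 0, 0, 0],
    "cheerful":   [2, 3, 0, 0, 0, 0],
    "sad":        [0, 0, 3, 2, 0, 0],
    "melancholy": [0, 0, 2, 3, 0, 0],
    "angry":      [0, 0, 0, 0, 3, 1],
    "aggressive": [0, 0, 0, 0, 1, 3],
}
print(cluster_tags(tag_vectors, 3))
# [['aggressive', 'angry'], ['cheerful', 'happy'], ['melancholy', 'sad']]
```

Recording the merge order instead of stopping at a fixed count would give the kind of tree diagram the abstract mentions.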

EVALUATION OF ALGORITHMS USING GAMES: THE CASE OF MUSIC TAGGING

Edith Law, Kris West, Michael Mandel, Mert Bay, J. Stephen Downie

Abstract: Search by keyword is an extremely popular method for retrieving music. To support this, novel algorithms that automatically tag music are being developed. The conventional way to evaluate audio tagging algorithms is to compute measures of agreement between the output and the ground truth set. In this work, we introduce a new method for evaluating audio tagging algorithms on a large scale by collecting set-level judgments from players of a human computation game called TagATune. We present the design and preliminary results of an experiment comparing five algorithms using this new evaluation metric, and contrast the results with those obtained by applying several conventional agreement-based evaluation metrics.
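For contrast with the game-based approach, a typical conventional agreement measure is per-tag precision, recall and F-measure of an algorithm's tags against the ground truth. The abstract doesn't list which agreement metrics the paper uses, so this particular choice, along with the function name and toy clips below, is an assumption.

```python
def tag_agreement(predicted, truth, tag):
    """Precision, recall and F-measure for one tag over a set of clips.

    predicted, truth: dict clip id -> set of tags (algorithm output / ground truth).
    """
    clips = set(predicted) | set(truth)
    has_pred = {c for c in clips if tag in predicted.get(c, set())}
    has_true = {c for c in clips if tag in truth.get(c, set())}
    tp = len(has_pred & has_true)   # tagged by both
    fp = len(has_pred - has_true)   # tagged by the algorithm only
    fn = len(has_true - has_pred)   # missed by the algorithm
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f


predicted = {"c1": {"guitar", "drums"}, "c2": {"guitar"}, "c3": {"piano"}}
truth = {"c1": {"guitar"}, "c2": {"piano"}, "c3": {"guitar", "piano"}}
print(tag_agreement(predicted, truth, "guitar"))  # (0.5, 0.5, 0.5)
```

Metrics like these need a fixed ground-truth tag set per clip, which is the dependency the TagATune-based evaluation is meant to sidestep by asking players for judgments instead.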