Paul

I'm the Director of Developer Community at The Echo Nest, a research-focused music intelligence startup that provides music information services to developers and partners through a data mining and machine listening platform. I am especially interested in hybrid music recommenders and using visualizations to aid music discovery.

We are the Earth Destroyers

Posted in Music, The Echo Nest, web services on September 5, 2010

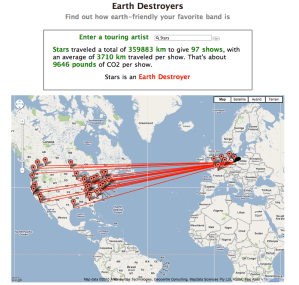

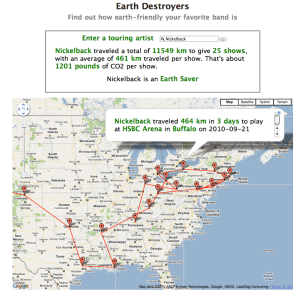

For my London Music Hackday hack I built a web app called ‘Earth Destroyers’. Give Earth Destroyers a band name and it will show you how eco-friendly the band’s touring schedule is. Earth Destroyers calculates the total distance traveled from the first gig to the last along with the average distance between shows. If an artist has an average inter-show distance of greater than a 1,000 km I consider it an ‘Earth Destroyer’. The app also shows you a Google map so you can see just how inefficient the tour is. To build the app I used event data from Bandsintown.

Check out Earth Destroyers

Turning music into silly putty

Posted in remix, The Echo Nest, video on September 5, 2010

I gave a talk last week at Last.fm about The Echo Nest Remix. Klaas has posted it on Vimeo. Here it is:

Is that a million songs in your pocket, or are you just glad to see me?

Posted in Music, playlist, research, The Echo Nest, web services on September 2, 2010

Yesterday, Steve Jobs reminded us that it was less than 10 years ago that Apple announced the first iPod, which could put a thousand songs in your pocket. With the emergence of cloud-based music services like Spotify and Rhapsody, we can now have a virtually endless supply of music in our pocket. The ‘bottomless iPod’ will have as big an effect on how we listen to music as the original iPod had back in 2001. But with millions of songs to choose from, we will need help finding music that we want to hear. Shuffle play won’t work when we have a million songs to choose from. We will need new tools that help us manage our listening experience. I’m convinced that one of these tools will be intelligent automatic playlisting.

This weekend at the Music Hack Day London, The Echo Nest is releasing the first version of our new Playlisting API. The Playlisting API lets developers construct playlists based on a flexible set of artist/song selection and sorting rules. The Echo Nest has deep data about millions of artists and songs. We know how popular Lady Gaga is, we know the tempo of every one of her songs, we know other artists that sound similar to her, we know where she’s from, and we know what words people use to describe her music (‘dance pop’, ‘club’, ‘party music’, ‘female’, ‘diva’). With the Playlisting API we can use this data to select music and arrange it in all sorts of flexible ways – from very simple Pandora-style radio playlists of similar-sounding songs to elaborate playlists drawing on a wide range of parameters. Here are some examples of the types of playlists you can construct with the API:

- Similar artist radio – generate a playlist of songs by similar artists

- Jogging playlist – generate a playlist of 80s power pop with a tempo between 120 and 130 BPM, but never ever play Bon Jovi

- London Music Hack Day Playlist – generate a playlist of electronic and techno music by unknown artists near London, order the tracks by tempo from slow to fast

- Tomorrow’s top 40 – play the hottest songs by pop artists with low familiarity that are starting to get hottt

- Heavy Metal Radio – a DMCA-compliant radio stream of nothing but heavy metal
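To make the selection-and-sorting idea concrete, here is a toy, in-memory version of the jogging playlist from the list above. It is not the Playlisting API: the `Song` record, the `build_playlist` function and the tiny catalog (placeholder titles, made-up tempos) are all hypothetical, and a real service would apply rules like these to its own artist and song data.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Song:
    # Hypothetical record; the real service's data model is not described in the post.
    artist: str
    title: str
    style: str
    tempo: float  # beats per minute


def build_playlist(catalog, styles=None, min_tempo=None, max_tempo=None,
                   banned_artists=(), sort_by_tempo=False):
    """Keep songs that pass every selection rule, then optionally sort slow to fast."""
    banned = {a.lower() for a in banned_artists}
    picked = []
    for song in catalog:
        if styles is not None and song.style not in styles:
            continue
        if min_tempo is not None and song.tempo < min_tempo:
            continue
        if max_tempo is not None and song.tempo > max_tempo:
            continue
        if song.artist.lower() in banned:   # "never ever play Bon Jovi"
            continue
        picked.append(song)
    if sort_by_tempo:
        picked.sort(key=lambda s: s.tempo)
    return picked


# Toy catalog: placeholder titles and invented tempos, for illustration only.
catalog = [
    Song("Band A", "Track 1", "80s power pop", 124),
    Song("Bon Jovi", "Track 2", "80s power pop", 126),
    Song("Band B", "Track 3", "80s power pop", 140),
    Song("Band C", "Track 4", "80s power pop", 121),
    Song("Band D", "Track 5", "techno", 128),
]

jogging = build_playlist(catalog, styles={"80s power pop"}, min_tempo=120,
                         max_tempo=130, banned_artists=["Bon Jovi"], sort_by_tempo=True)
```

Here `jogging` holds Track 4 (121 BPM) followed by Track 1 (124 BPM): the Bon Jovi song, the 140 BPM song and the techno song are all filtered out. Keeping each rule as an independent filter is what lets the same function cover the other examples, such as sorting a style-filtered list by tempo.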

We also provide a dynamic playlisting API that allows the creation of playlists that adapt to the listener’s skipping and rating behavior.
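The post doesn’t say how the dynamic API adapts, so the following is only a toy illustration of the idea under my own assumptions: each skip lowers a per-artist score by one, each 1–5 star rating shifts it by (stars - 3), and the next song is the highest-scoring one still in the queue. `DynamicPlaylist` and its methods are hypothetical names, not The Echo Nest’s interface.

```python
class DynamicPlaylist:
    """Toy adaptive playlist: skips push an artist down, good ratings pull it up.

    The scoring rule is a hypothetical illustration, not The Echo Nest's algorithm.
    """

    def __init__(self, candidates):
        # candidates: (artist, title) pairs in their initial order
        self.queue = list(candidates)
        self.artist_score = {}

    def _score(self, song):
        return self.artist_score.get(song[0], 0.0)

    def next_song(self):
        if not self.queue:
            return None
        # max() returns the first maximal item, so ties keep the original order.
        best = max(self.queue, key=self._score)
        self.queue.remove(best)
        return best

    def skip(self, song):
        self.artist_score[song[0]] = self._score(song) - 1.0

    def rate(self, song, stars):
        # stars in 1..5; 3 is treated as neutral
        self.artist_score[song[0]] = self._score(song) + (stars - 3)


pl = DynamicPlaylist([("A", "a1"), ("B", "b1"), ("A", "a2"), ("B", "b2")])
first = pl.next_song()    # ("A", "a1")
pl.skip(first)            # artist A drops to -1
second = pl.next_song()   # ("B", "b1")
pl.rate(second, 5)        # artist B rises to +2
third = pl.next_song()    # ("B", "b2")
```

After the skip and the five-star rating, the remaining A song sinks to the end of the playlist.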

I’m about to jump on a plane for Music Hack Day London, where we will be demonstrating this new API and some cool apps that have already been built on it. I’m hoping to see a few apps emerge from this Music Hack Day that use the new API. More info about the APIs and how you can use them to do all sorts of fun things will be forthcoming. If you’re motivated, dive into the APIs right now.

Cool music panels at SXSW 2011

I was going to write a post describing all of the cool-looking music-oriented panels that have been proposed for SXSW 2011, but debcha at zed equals zee beat me to it. Be sure to read Deb’s SXSWi 2011 panel proposals in music and tech post. Some of the panels I’m looking forward to most are:

Digital Music Smackdown: The Best Digital Music Service – In what is expected to be a heated and fiercely competitive discussion, C-level and VP-level executives from four digital music companies (MOG, Spotify, Pandora and Rhapsody) battle it out over the title of “Best Digital Music Service.” This could be fun if it really is a smackdown, but I suspect that the execs will be very polite and complimentary of each other’s services, leading to a boring panel. I hope I’m wrong. Also, where’s Last.fm? They should be on the panel too.

We Built this App on RocknRoll: Style Matters – For an inherently auditory medium, music is ingrained with style. From 12″ artwork and niche mp3 blogs to the latest design on your sweatshirt or skate deck, music has always been analogous with visual culture. So what happens when you overlay this complex fabric of cultural values and personal identities on what is already a thorny process: building and launching a music app? – Hannah of Last.fm and Anthony of Hype Machine talk about the design of music apps. These two know their stuff. Should be really interesting.

Music & Metadata: Do Songs Remain the Same? – Metadata may be an afterthought when it comes to most people’s digital music collections, but when it comes to finding, buying, selling, rating, sharing, or describing music, little matters more. Metadata defines how we interact with and talk about music, from discrete bits like titles, styles, artists and genres to its broader context and history. Metadata builds communities and industries, from the local fan base to the online social network. Its value is immense. But who owns it? This panel is on my Must See list.

Expressing yourself musically with Mobile Technology – This panel features Ge Wang, founder/CTO of Smule, talking about creating music on mobile devices. Ge is an awesome speaker and gives a great demo. Don’t miss this one.

Music APIs – A Choreographed Dance with Devices? – This panel discussion focuses on real-world examples beyond the fundamentals or technical aspects of an API. Attend this panel and review success stories from the pros that demonstrate how an API brings content, software, and hardware together. Looks like a good Music APIs 101 for biz types.

I would be remiss if I didn’t pimp my own panels. Be sure to consider (and maybe even comment on or vote for) these panels:

Love, Music & APIs – Consider this the Music Hack Day panel. Dave Haynes (SoundCloud) and I will talk about the impact that music APIs are having on the world of music and how programmers will soon be the new music gatekeepers.

Finding Music With Pictures: Data Visualization for Discovery – In this panel I’ll look at how visualizations can help people explore the music space and discover new, interesting music that they will like. We will look at a wide range of visualizations, from hand-drawn artist maps to highly interactive, immersive 3D environments.

The folks at SXSW are looking for input on these panels to help decide what makes it onto the schedule, so if any of these strike your fancy, head on over to the panel descriptions and add your comments.

Upbeat and Quirky, With a Bit of a Build: Interpretive Repertoires in Creative Music Search

Posted in events, Music, music information retrieval, research on August 13, 2010

Charlie Inskip, Andy MacFarlane and Pauline Rafferty

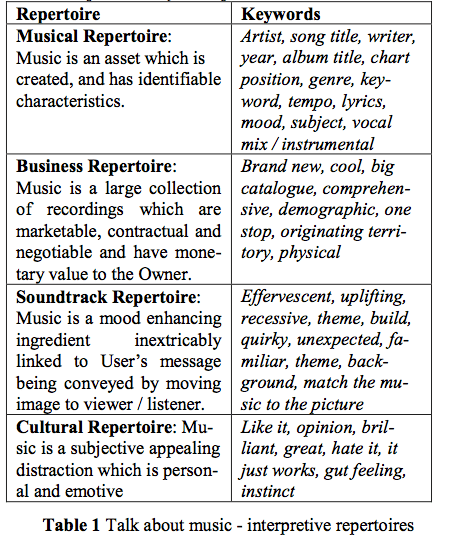

ABSTRACT – Pre-existing commercial music is widely used to accompany moving images in films, TV commercials and computer games. This process is known as music synchronisation. Professionals are employed by rights holders and film makers to perform creative music searches on large catalogues to find appropriate pieces of music for synchronisation. This paper discusses a Discourse Analysis of thirty interview texts related to the process. Coded examples are presented and discussed. Four interpretive repertoires are identified: the Musical Repertoire, the Soundtrack Repertoire, the Business Repertoire and the Cultural Repertoire. These ways of talking about music are adopted by all of the community regardless of their interest as Music Owner or Music User.

Music is shown to have multi-variate and sometimes conflicting meanings within this community which are dynamic and negotiated. This is related to a theoretical feedback model of communication and meaning making which proposes that Owners and Users employ their own and shared ways of talking and thinking about music and its context to determine musical meaning. The value to the music information retrieval community is to inform system design from a user information needs perspective.

What Makes Beat Tracking Difficult? A Case Study on Chopin Mazurkas

Posted in events, ismir, music information retrieval, research on August 13, 2010

Peter Grosche, Meinard Müller and Craig Stuart Sapp

ABSTRACT – The automated extraction of tempo and beat information from music recordings is a challenging task. Especially in the case of expressive performances, current beat tracking approaches still have significant problems accurately capturing local tempo deviations and beat positions. In this paper, we introduce a novel evaluation framework for detecting critical passages in a piece of music that are prone to tracking errors. Our idea is to look for consistencies in the beat tracking results over multiple performances of the same underlying piece. As another contribution, we further classify the critical passages by specifying musical properties of certain beats that frequently evoke tracking errors. Finally, considering three conceptually different beat tracking procedures, we conduct a case study on the basis of a challenging test set that consists of a variety of piano performances of Chopin Mazurkas. Our experimental results not only make the limitations of state-of-the-art beat trackers explicit but also deepen the understanding of the underlying music material.
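The core idea of the evaluation framework, looking for beats that trip up a tracker consistently across many performances of the same piece, can be sketched as follows. This is a simplification under stated assumptions, not the authors’ method: beat k is assumed to refer to the same score position in every performance, a tracked beat counts as correct if it falls within ±70 ms of the annotated beat (a tolerance commonly used in beat-tracking evaluation), and `critical_beats` and its parameters are hypothetical.

```python
def critical_beats(tracked, reference, tolerance=0.07, min_fraction=0.5):
    """Flag beat indices where a tracker fails in many performances.

    tracked, reference: one list of beat times (seconds) per performance.
    Assumes every reference list has the same length and that beat k in each
    performance corresponds to the same beat of the score.
    A reference beat is an error when no tracked beat lies within `tolerance`
    seconds of it. Returns indices whose error rate across performances is at
    least `min_fraction`.
    """
    n_beats = len(reference[0])
    errors = [0] * n_beats
    for est, ref in zip(tracked, reference):
        for k, t in enumerate(ref):
            if not any(abs(e - t) <= tolerance for e in est):
                errors[k] += 1
    n_perf = len(reference)
    return [k for k, count in enumerate(errors) if count / n_perf >= min_fraction]


# Two toy performances of a four-beat passage (times invented for illustration).
reference = [[0.0, 0.5, 1.0, 1.5], [0.0, 0.6, 1.2, 1.8]]
tracked = [[0.0, 0.5, 1.2, 1.5], [0.01, 0.6, 1.0, 1.8]]
flagged = critical_beats(tracked, reference)
```

In this toy data the tracker misses beat 2 in both performances, so `flagged` is `[2]`: a passage that fails everywhere points at the music rather than at one unusual recording.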

An Audio Processing Library for MIR Application Development in Flash

Posted in events, ismir, music information retrieval, research on August 13, 2010

Jeffrey Scott, Raymond Migneco, Brandon Morton, Christian M. Hahn, Paul Diefenbach and Youngmoo E. Kim

The Audio Processing Library for Flash (ALF) affords music-IR researchers the opportunity to generate rich, interactive, real-time music-IR driven applications. The various levels of complexity and control, as well as the capability to execute analysis and synthesis simultaneously, provide a means to generate unique programs that integrate content-based retrieval of audio features. We have demonstrated the versatility and usefulness of ALF through the variety of applications described in this paper. As interest in music-driven applications intensifies, it is our goal to enable the community of developers and researchers in music-IR and related fields to generate interactive web-based media.

Music21: A Toolkit for Computer-Aided Musicology and Symbolic Music Data

Posted in events, ismir, Music, music information retrieval, research on August 13, 2010

Michael Scott Cuthbert and Christopher Ariza

ABSTRACT – Music21 is an object-oriented toolkit for analyzing, searching, and transforming music in symbolic (score-based) forms. The modular approach of the project allows musicians and researchers to write simple scripts rapidly and reuse them in other projects. The toolkit aims to provide powerful software tools integrated with sophisticated musical knowledge to both musicians with little programming experience (especially musicologists) and to programmers with only modest music theory skills.

Music21 looks to be a pretty neat toolkit for analyzing and manipulating symbolic music. It’s like Echo Nest Remix for MIDI. The music21 blog has lots more info, and you can get the toolkit here: music21.

State of the Art Report: Audio-Based Music Structure Analysis

Posted in events, ismir, music information retrieval, research on August 13, 2010

Jouni Paulus, Meinard Müller and Anssi Klapuri

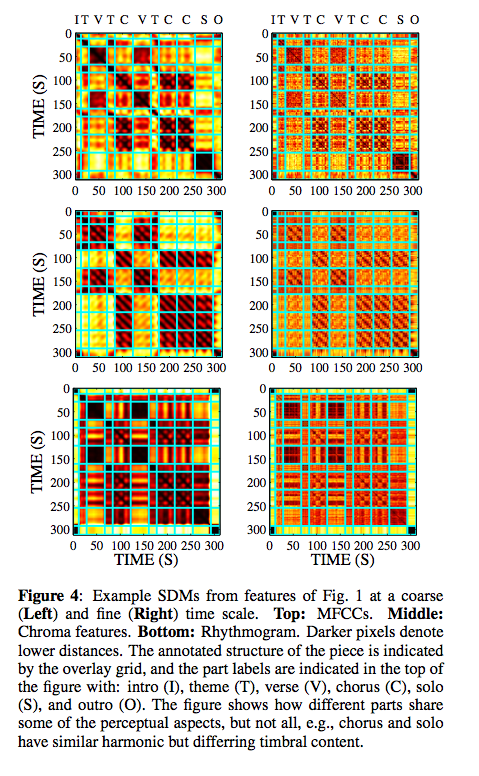

ABSTRACT – Humans tend to organize perceived information into hierarchies and structures, a principle that also applies to music. Even musically untrained listeners unconsciously analyze and segment music with regard to various musical aspects, for example, identifying recurrent themes or detecting temporal boundaries between contrasting musical parts. This paper gives an overview of state-of-the-art methods for computational music structure analysis, where the general goal is to divide an audio recording into temporal segments corresponding to musical parts and to group these segments into musically meaningful categories. There are many different criteria for segmenting and structuring music audio. In particular, one can identify three conceptually different approaches, which we refer to as repetition-based, novelty-based, and homogeneity-based approaches. Furthermore, one has to account for different musical dimensions such as melody, harmony, rhythm, and timbre. In our state-of-the-art report, we address these different issues in the context of music structure analysis, while discussing and categorizing the most relevant and recent articles in this field.
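As an illustration of the novelty-based family the report describes, here is a minimal sketch in the style of the well-known checkerboard-kernel approach: build a cosine self-similarity matrix from per-frame feature vectors, slide a checkerboard kernel along its diagonal, and treat peaks in the resulting novelty curve as candidate segment boundaries. It is not taken from the report; the unweighted kernel (real systems usually taper it with a Gaussian), the feature format and the function names are assumptions.

```python
import math


def cosine(u, v):
    """Cosine similarity; returns 0.0 if either vector is all zeros."""
    nu = math.sqrt(sum(x * x for x in u))
    nv = math.sqrt(sum(x * x for x in v))
    if nu == 0 or nv == 0:
        return 0.0
    return sum(a * b for a, b in zip(u, v)) / (nu * nv)


def novelty_curve(features, half_width=2):
    """Checkerboard-kernel novelty along the diagonal of a self-similarity matrix.

    features: one feature vector per frame. half_width must be >= 1.
    Frames too close to either edge for the full kernel get novelty 0.0.
    """
    n = len(features)
    S = [[cosine(features[i], features[j]) for j in range(n)] for i in range(n)]
    L = half_width
    novelty = [0.0] * n
    for i in range(L, n - L + 1):
        total = 0.0
        for m in range(-L, L):
            for k in range(-L, L):
                # +1 within "before" or within "after", -1 across the candidate boundary
                sign = 1.0 if (m < 0) == (k < 0) else -1.0
                total += sign * S[i + m][i + k]
        novelty[i] = total
    return novelty


def boundaries(novelty, threshold=0.0):
    """Strict local maxima above threshold, read as candidate segment starts."""
    return [i for i in range(1, len(novelty) - 1)
            if novelty[i] > threshold
            and novelty[i] > novelty[i - 1]
            and novelty[i] > novelty[i + 1]]


# Toy input: four frames of one "texture" followed by four of another.
frames = [[1.0, 0.0]] * 4 + [[0.0, 1.0]] * 4
curve = novelty_curve(frames)
segments_start = boundaries(curve)
```

For this toy input the curve peaks at frame 4, where the second part begins, so `segments_start` is `[4]`. Repetition-based and homogeneity-based methods would work from the same self-similarity matrix but look for repeated diagonal stripes or uniform blocks instead.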

This presentation is an overview of the music structure analysis problem and the methods proposed for solving it. The methods have been divided into three categories: novelty-based approaches, homogeneity-based approaches, and repetition-based approaches. Comparing the different methods has been problematic because of their differing goals, but current evaluations suggest that none of the approaches is clearly superior at this time, and that there is still room for considerable improvement.

The ISMIR business meeting

Posted in events, ismir, music information retrieval, research on August 12, 2010

Notes from the ISMIR business meeting – this is a meeting with the board of ISMIR.

Officers

- President: J. Stephen Downie, University of Illinois at Urbana-Champaign, USA

- Treasurer: George Tzanetakis, University of Victoria, Canada

- Secretary: Jin Ha Lee, University of Illinois at Urbana-Champaign, USA

- President-elect: Tim Crawford, Goldsmiths College, University of London, UK

- Member-at-large: Doug Eck, University of Montreal, Canada

- Member-at-large: Masataka Goto, National Institute of Advanced Industrial Science and Technology, Japan

- Member-at-large: Meinard Mueller, Max-Planck-Institut für Informatik, Germany

Stephen reviewed the roles of the various officers and duties of the various committees. He reminded us that one does not need to be on the board to serve on a subcommittee.

Publication Issues

- website redesign

- Other communities hardly know about ISMIR. We want to help other communities become aware of our research. One way is to build more links to them, for example by joining committees in other communities.

Hosting Issue – will formalize documentation, location planning, site selection.

Name change? There was a nifty debate around the meaning of ISMIR. There was a proposal to change it to ‘International Society for Music Informatics Research’. Given Doug’s comments about YouTube this morning, I recommend that we change the name to ‘International Society for Movie Informatics Research’.

Review Process: Good discussion about the review process – we want paper bidding and double-blind reviews. Double-blind review helps avoid gender bias:

Doug snuck in the secret word ‘youtube’ too, just for those hanging out on IRC.