Paul

I'm the Director of Developer Community at The Echo Nest, a research-focused music intelligence startup that provides music information services to developers and partners through a data mining and machine listening platform. I am especially interested in hybrid music recommenders and using visualizations to aid music discovery.

Top SXSW Music Panels for music exploration, discovery and interaction

Posted in events on August 22, 2013

SXSW 2014 PanelPicker has opened up. I took a tour through the SXSW Music panel proposals to highlight the ones that are of most interest to me … typically technical panels about music discovery and interaction. Here’s the best of the bunch. You’ll notice a number of Echo Nest oriented proposals. I’m really not shilling, I genuinely think these are really interesting talks (well, maybe I’m shilling for my talk).

I’ve previously highlighted the best of the bunch for SXSW Interactive.

A Genre Map for Discovering the World of Music

All the music ever made (approximately) is a click or two away. Your favorite music in the world is probably something you’ve never even heard of yet. But which click leads to it?

Most music “discovery” tools are only designed to discover the most familiar thing you don’t already know. Do you like the Dave Matthews Band? You might like O.A.R.! Want to know what your friends are listening to? They’re listening to Daft Punk, because they don’t know any more than you. Want to know what’s hot? It’s yet another Imagine Dragons song that actually came out in 2012. What we NEED are tools for discovery through exploration, not dictation.

This talk will provide a manic music-discovery demonstration-expedition, showcasing how discovery through exploration (The Echo Nest Discovery list & the genre mapping experiment, Every Noise at Once) in the new streaming world is not an opportunity to pay different people to dictate your taste, but rather a journey, unearthing new music JUST FOR YOU.

The Predictive Power of Music

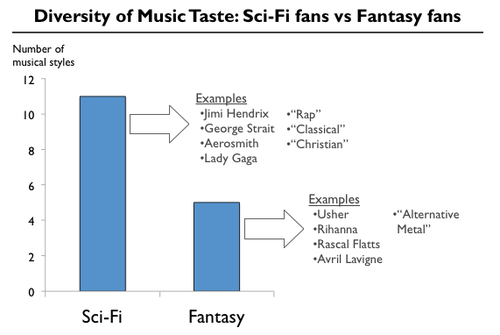

Music taste is extremely personal and an important part of defining and communicating who we are.

Musical Identity, understanding who you are as a music fan and what that says about you, has always been a powerful indicator of other things about you. Broadcast radio’s formats (Urban, Hot A/C, Pop, and so on) are based on the premise that a certain type of music attracts a certain type of person. However, the broadcast version of Musical Identity is a blunt instrument, grouping millions of people into about 12 audience segments. Now that music has become a two-way conversation online, Musical Identity can become considerably more precise, powerful, and predictive.

In this talk, we’ll look at why music is one of the strongest predictors and how music preference can be used to make predictions about your taste in other forms of entertainment (books, movies, games, etc).

Your Friends Have Bad Taste: Fixing Social Music

Music is the most social form of entertainment consumption, but online music has failed to deliver truly social & connected music experiences. Social media updates telling you your aunt listened to Hall and Oates don’t deliver on the promise of social music. As access-based, streaming music becomes more mainstream, the current failure & huge potential of social music is becoming clearer. A variety of app developers & online music services are working to create experiences that use music to connect friends & use friends to connect you with new music you’ll love. This talk will uncover how to make social music a reality.

Anyone Can Be a DJ: New Active Listening on Mobile

The mobile phone has become the de facto device for accessing music. According to a recent report, the average person uses their phone as a music player 13 times per day. With over 30 million songs available, any time, any place, listening is shifting from a passive to a personalized and interactive experience for a highly engaged audience.

New data-powered music players on sensor-packed devices are becoming smarter, and could enable listeners to feel more like creators (e.g. Instagram) by dynamically adapting music to its context (e.g. running, commuting, partying, playing). A truly personalized pocket DJ will bring music listening, discovery, and sharing to an entirely new level.

In this talk, we’ll look at how data-enhanced content and smarter mobile players will change the consumer experience into a more active, more connected, and more engaged listening experience.

Human vs. Machine: The Music Curation Formula

Recreating human recommendations in the digital sphere at scale is a problem we’re actively solving across verticals, but no one quite has the perfect formula. The vertical where this issue is especially ubiquitous is music. Where we currently stand is solving the integration of human data with machine data and algorithms to generate personalized recommendations that mirror the nuances of human curation. This formula is the holy grail.

Algorithmic, Curated & Social Music Discovery

As the Internet has made millions of tracks available for instant listening, digital music and streaming companies have focused on music recommendations and discovery. Approaches have included using algorithms to present music tailored to listeners’ tastes, using the social graph to find music, and presenting curated & editorial content. This panel will discuss the methods, successes and drawbacks of each of these approaches. We will also discuss the possibility of combining all three approaches to present listeners with a better music discovery experience, with on-the-ground stories of the lessons from building a Discover experience at Spotify.

Beyond the Play Button – The Future of Listening (This is my talk)

Rolling in the Deep (labelled) by Adele

35 years after the first Sony Walkman shipped, today’s music player still has essentially the same set of controls as that original portable music player. Even though today’s music player might have a million times more music than the cassette player, the interface to all of that music has changed very little.

In this talk we’ll explore new ways that a music listener can interact with their music. First we will explore the near future where your music player knows so much about you, your music taste and your current context that it plays the right music for you all the time. No UI is needed.

Next, we’ll explore a future where music listening is no longer a passive experience. Instead of just pressing the play button and passively listening you will be able to jump in and interact with the music. Make your favorite song last forever, add your favorite drummer to that Adele track or unleash your inner Skrillex and take total control of your favorite track.

5 Years of Music Hack Day

Started in 2009 by Dave Haynes and James Darling, Music Hack Day has become the gold standard of music technology events. Having grown to a worldwide, monthly event that has seen over 3500 music hacks created in over 20 cities, the event is still going great guns. But what impact has this event had on the music industry and its connection with technology? This talk looks back at the first 5 years of Music Hack Day, from its origins to becoming something more important and difficult to control than its ‘adhocracy’ beginnings. Have these events really impacted the industry in a positive way, or have the last 5 years simply seen a maturing attitude towards technology’s place in the music industry? We’ll look at the successes, the hacks that blew people’s minds and what influence so many events with such a passionate audience have had on changing the relationship between music and tech.

The SXSW organizers pay attention when they see a panel that gets lots of votes, so head on over and make your opinion be known.

Top SXSWi panels for music discovery and interaction

Posted in events on August 19, 2013

SXSW 2014 PanelPicker has opened up. I took a tour through the SXSW Interactive talk proposals to highlight the ones that are of most interest to me … typically technical panels about music discovery and interaction. Here’s the best of the bunch. Tomorrow, I’ll take a tour through the SXSW Music proposals.

Algorithmic Music Discovery at Spotify

Spotify crunches hundreds of billions of streams to analyze users’ music taste and provide music recommendations for its users. We will discuss how the algorithms work, how they fit in within the products, what the problems are and where we think music discovery is going. The talk will be quite technical with a focus on the concepts and methods, mainly how we use large scale machine learning, but we will also cover some aspects of music discovery from a user perspective that greatly influenced the design decisions.

Delivering Music Recommendations to Millions

At its heart, presenting personalized data and experiences for users is simple. But transferring, delivering and serving this data at high scale can become quite challenging.

In this session, we will speak about the scalability lessons we learned building Spotify’s Discover system. This system generates terabytes of music recommendations that need to be delivered to tens of millions of users every day. We will focus on the problems encountered when big data needs to be replicated across the globe to power interactive media applications, and share strategies for coping with data at this scale.

Are Machines the DJ’s of Digital Music?

When it comes to music curation, has our technology exceeded our humanity? Fancy algorithms have done wonders for online dating. Can they match you with your new favorite music? Hear music editors from Rhapsody, Google Music, Sony Music and Echonest debate their changing role in curation and music discovery for streaming music services. Whether tuning into the perfect summer dance playlist or easily browsing recommended artists, finding and listening to music is the result of very intentional decisions made by editorial teams and algorithms. Are we sophisticated enough to no longer need the human touch on our music services? Or is that all that separates us from the machines?

Your Friends Have Bad Taste: Fixing Social Music

Music is the most social form of entertainment consumption, but online music has failed to deliver truly social & connected music experiences. Social media updates telling you your aunt listened to Hall and Oates don’t deliver on the promise of social music. As access-based, streaming music becomes more mainstream, the current failure & huge potential of social music is becoming clearer. A variety of app developers & online music services are working to create experiences that use music to connect friends & use friends to connect you with new music you’ll love. This talk will uncover how to make social music a reality, including:

- Musical Identity (MI) – who we are as music fans and how understanding MI is unlocking social music apps

- If my friend uses Spotify & I use Rdio, can we still be friends? ID resolution & social sharing challenges

- Discovery issue: finding like-minded fans & relevant expert music curators

- A look at who’s actually building the future of social music

‘Man vs. Machine’ Is Dead, Long Live Man+Machine

A human on a bicycle is the most efficient land-traveller on planet Earth. Likewise, the most efficient advanced, accurate, helpful, and enjoyable music recommendation systems combine man and machine. This dual-pronged approach puts powerful, data-driven tools in the hands of thinking, feeling experts and end users. In other words, the debate over whether human experts or machines are better at recommending music is over. The answer is “both” — a hybrid between creative technology and creative curators. This panel will provide specific examples of this approach that are already taking place, while looking to the future to see where it’s all headed.

Are Recommendation Engines Killing Discovery?

Are recommendation engines – like Yelp, Google, and Spotify – ruining the way we experience life? “Absolutely,” says Ned Lampert. The average person looks at their phone 150 times a day, and the majority of content they’re looking at is filtered through a network of friends, likes, and assumptions. Life is becoming prescriptive, opinions are increasingly polarized, and curiosity is being stifled. Recommendation engines leave no room for the unexpected. Craig Key says, “absolutely not.” The Web now has infinitely more data points than we did pre-Google. Not only is there more content, but there’s more data about you and me: our social graph, Netflix history (if you’re brave), our Tweets, and yes, our Spotify activity. Data is the new currency in digital experiences. While content remains king, it will be companies that can use data to sort and display that content in a meaningful way that will win. This session will explore these dueling perspectives.

Genre-Bending: Rise of Digital Eclecticism

The explosion in popularity of streaming music services has started to change the way we listen. But even beyond those always-on devices with unlimited access to millions of songs that we listen to on our morning commutes, while wending our way through paperwork at our desks or on our evening jogs, there is an even more fundamental change going on. Unlimited access has unhinged musical taste to the point where eclecticism and tastemaking trump identifying with a scene. Listeners are becoming more adventurous, experiencing many more types of music than ever before. And artists are right there with them, blending styles and genres in ways that would be unimaginable even a decade ago. In his role as VP Product-Content Jon Maples has a front row seat to how music-listening behavior has evolved. He’ll share findings from a recent ethnographic study that reveals intimate details on how people live their musical lives.

Put It In Your Mouth: Startups as Tastemakers

Your life has been changed, at least once, by a startup in the last year. Don’t argue; it’s true. Think about it – how do you listen to music? How do you choose what movie to watch? How do you shop, track your fitness or share memories? Whoever you are, whatever your preferences, emerging technology has crept into your life and changed the way you do things on a daily basis. This group of innovators and tastemakers will take a highly entertaining look at how the apps, devices and online services in our lives are enhancing and molding our culture in fundamental ways. Be warned – a dance party might break out and your movie queue might expand exponentially.

And here’s a bit of self promotion … my proposed panel is all about new interfaces for music.

Beyond the Play Button – The Future of Listening

35 years after the first Sony Walkman shipped, today’s music player still has essentially the same set of controls as that original portable music player. Even though today’s music player might have a million times more music than the cassette player, the interface to all of that music has changed very little. In this talk we’ll explore new ways that a music listener can interact with their music. First we will explore the near future where your music player knows so much about you, your music taste and your current context that it plays the right music for you all the time. No UI is needed. Next, we’ll explore a future where music listening is no longer a passive experience. Instead of just pressing the play button and passively listening you will be able to jump in and interact with the music. Make your favorite song last forever, add your favorite drummer to that Adele track or unleash your inner Skrillex and take total control of your favorite track.

The SXSW organizers pay attention when they see a panel that gets lots of votes, so head on over and make your opinion be known.

Beyond the Play Button – My SXSW Proposal

It is SXSW Panel Picker season. I’ve submitted a talk to both SXSW Interactive and SXSW Music. The talk is called ‘Beyond the Play Button – the Future of Listening’ – the goal of the talk is to explore new interfaces for music listening, discovery and interaction. I’ll show a bunch of my hacks and some nifty stuff I’ve been building in the lab. Here’s the illustrated abstract:

35 years after the first Sony Walkman shipped, today’s music player still has essentially the same set of controls as that original portable music player. Even though today’s music player might have a million times more music than the cassette player, the interface to all of that music has changed very little.

In this talk we’ll explore new ways that a music listener can interact with their music. First we will explore the near future where your music player knows so much about you, your music taste and your current context that it plays the right music for you all the time. No UI is needed.

Next, we’ll explore a future where music listening is no longer a passive experience. Instead of just pressing the play button and passively listening you will be able to jump in and interact with the music. Make your favorite song last forever, add your favorite drummer to that Adele track or unleash your inner Skrillex and take total control of your favorite track.

One Minute Radio

Posted in Music, playlist, The Echo Nest on August 16, 2013

If you’ve got a short attention span when it comes to new music, you may be interested in One Minute Radio. One Minute Radio is a Pandora-style radio app with the twist that it only ever plays songs that are less than a minute long. Select a genre and you’ll get a playlist of very short songs.

Now I can’t testify that you’ll always get a great sounding playlist – you’ll hear intros, false starts and novelty songs throughout, but it is certainly interesting. And some genres are chock full of good short songs, like punk, speed metal, thrash metal and, surprisingly, even classical.

OMR was inspired by a conversation with Glenn about the best default for song duration filters in our playlisting API. Check out One Minute Radio. The source is on github too.

The Saddest Stylophone – my #wowhack2 hack

Posted in hacking, Music, The Echo Nest on August 15, 2013

Last week, I ventured to Gothenburg, Sweden to participate in the Way Out West Hack 2 – a music-oriented hackathon associated with the Way Out West Music Festival.

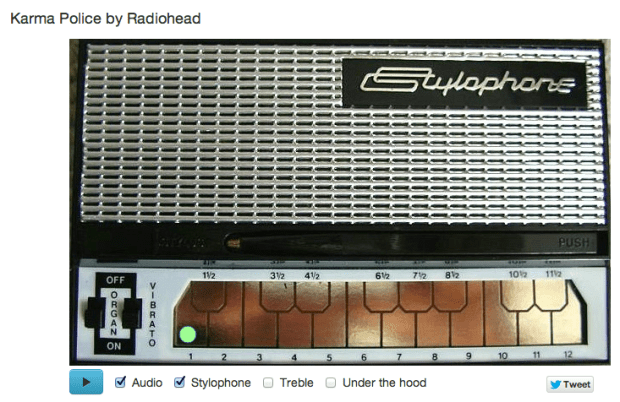

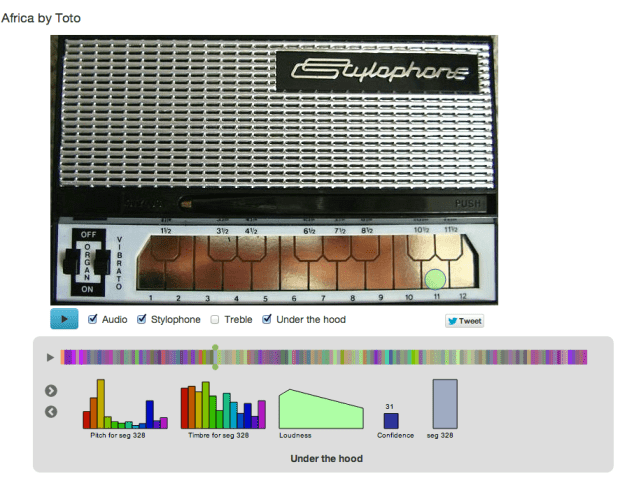

I was, of course, representing and supporting The Echo Nest API during the hack, but I also put together my own Echo Nest-based hack: The Saddest Stylophone. The hack creates an auto accompaniment for just about any song played on the Stylophone – an analog synthesizer toy created in the 60s that you play with a stylus.

Two hacking pivots on the way …

The road to the Saddest Stylophone was by no means a straight line. In fact, when I arrived at #wowhack2 I had in mind a very different hack – but after the first hour at the hackathon it became clear that the WIFI at the event was going to be sketchy at best, and it was going to be very slow going for any hack (including the hack I had planned) that was going to need zippy access to the web, and so after an hour I shelved that idea for another hack day.

The next idea was to see if I could use the Echo Nest analysis data to convert any song to an 8-bit chiptune version. This is not new ground, Brian McFee had a go at this back at the 2012 MIT Music Hack Day. I thought it would be interesting to try a different approach and use an off-the-shelf 8bit software synth and the Echo Nest pitch data.

My intention was to use a JavaScript sound engine called jsfx to generate the audio. It seemed like a pretty straightforward way to create authentic 8bit sounds. In small doses jsfx worked great, but when I started to create sequences of overlapping sounds my browser would crash. Every time. After spending a few hours trying to figure out a way to get jsfx to work reliably, I had to abandon jsfx. It just wasn’t designed to generate lots of short overlapping and simultaneous sounds, and so I spent some time looking for another synthesizer. I finally settled on timbre.js.

Timbre.js seemed like a fully featured synth. Anyone with a Csound background would be comfortable with creating sounds with Timbre.js. It did not take long before I was generating tones that were tracking the melody and chord changes of a song. My plan was to create a set of tone generators, and dynamically control the dynamics envelope based upon the Echo Nest segment data. This is when I hit my next roadblock.

The timbre.js docs are pretty good, but I just couldn’t find out how to dynamically adjust parameters such as the ADSR table. I’m sure there’s a way to do it, but when there are only 12 hours left in a 24 hour hackathon, the two hours spent looking through JS library source seemed like forever, and I began to think that I’d not figure out how to get fine grained control over the synth. I was pretty happy with how well I was able to track a song and play along with it, but without ADSR control or even simple control over dynamics the output sounded pretty crappy. In fact I hadn’t heard anything that sounded so bad since I heard @skattyadz play a tune on his Stylophone at the Midem Music Hack Day earlier this year.

That thought turned out to be the best observation I had during the hackathon. I could hide all of my troubles trying to get a good sounding output by declaring that my hack was a Stylophone simulator. Just like a Stylophone, my app would not be capable of playing multiple tones at once, it would not have complex changes in dynamics, it would only have a one and a half octave range, it would not even have a pleasing tone. All I’d need to do would be to convincingly track a melody or harmonic line in a song and I’d be successful. And so, after my third pivot, I finally had a hack that I felt I’d be able to finish in time for the demo session and not embarrass myself. I was quite pleased with the results.

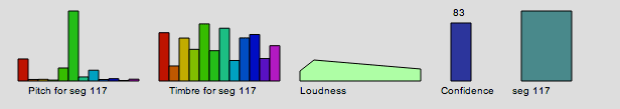

How does it work?

The Sad Stylophone takes advantage of the Echo Nest detailed analysis. The analysis provides detailed information about a song. It includes information about where all the bars and beats are, and includes a very detailed map of the segments of a song. Segments are typically small, somewhat homogenous audio snippets in a song, corresponding to musical events (like a strummed chord on the guitar or a brass hit from the band).

A single segment contains detailed information on the pitch, timbre, and loudness. For pitch it contains a vector of 12 floating point values that correspond to the amount of energy at each of the notes in the 12-note Western scale. Here’s a graphic representation of a single segment:

This graphic shows the pitch vector, the timbre vector, the loudness, confidence and duration of a segment.
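To make the pitch vector concrete, here is a minimal sketch of a segment and of picking its strongest pitch bin. The `Segment` class, its field names and the assumption that the 12 bins run from C up to B are mine, for illustration only; this is not the hack's actual code.

```python
from dataclasses import dataclass
from typing import List, Tuple

# Assumption: bin 0 is C and bin 11 is B.
NOTE_NAMES = ["C", "C#", "D", "D#", "E", "F", "F#", "G", "G#", "A", "A#", "B"]


@dataclass
class Segment:
    """Hypothetical container for the per-segment data used by the hack."""
    start: float          # seconds from the start of the track
    duration: float       # seconds
    confidence: float     # 0.0 to 1.0
    pitches: List[float]  # 12 energy values, one per note of the scale


def dominant_pitch(segment: Segment) -> Tuple[int, str]:
    """Return the index and name of the bin with the most energy."""
    index = max(range(12), key=lambda i: segment.pitches[i])
    return index, NOTE_NAMES[index]


example = Segment(
    start=12.5,
    duration=0.25,
    confidence=0.8,
    pitches=[0.1, 0.05, 0.2, 0.1, 0.3, 0.1, 0.05, 0.4, 0.1, 1.0, 0.2, 0.1],
)
print(dominant_pitch(example))
```

A segment whose energy is spread evenly across the bins has no clear winner, which is why a predominance check is worth running before trusting the result.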

The Saddest Stylophone only uses the pitch, duration and confidence data from each segment. First, it filters segments to combine short, low confidence segments with higher confidence segments. Next it filters out segments that don’t have a predominant frequency component in the pitch vector. Then for each surviving segment, it picks the strongest of the 12 pitch bins and maps that pitch to a note on the Stylophone.

Since the Stylophone supports an octave and a half (20 notes), we need to map 12 notes onto 20 notes. We do this by unfolding the 12 bins, reducing inter-note jumps to less than half an octave when possible. For example, if between segment one and segment two we would jump 8 notes higher, we check to see if it would be possible to jump 4 notes lower instead (which would be an octave lower than segment two) while still remaining within the Stylophone range. If so, we replace the upward long jump with the downward, shorter jump. The result of this is a list of notes and timings mapped onto the 20 notes of the Stylophone. We then map the note onto the proper frequency and key position – the rest is just playing the note via timbre.js at the proper time in sync with the original audio track and animating the stylus using Raphael.
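The unfolding step can be sketched roughly as follows. This is an illustrative reconstruction, not the code from the hack: the function names, the choice of A as the lowest key (`lowest_pc=9`), the 110 Hz base frequency and the rule of starting on the lowest matching key are all assumptions.

```python
from typing import List


def unfold_to_keys(pitch_classes: List[int], lowest_pc: int = 9,
                   num_keys: int = 20) -> List[int]:
    """Map pitch classes (0-11) onto key positions (0 to num_keys - 1).

    Each pitch class can land on one or two keys, an octave apart.
    For every note we pick the key closest to the previous key, which
    turns long jumps into shorter ones whenever the keyboard range allows.
    """
    keys: List[int] = []
    for pc in pitch_classes:
        offset = (pc - lowest_pc) % 12
        # Every key that sounds this pitch class within the keyboard range.
        candidates = list(range(offset, num_keys, 12))
        if not keys:
            choice = candidates[0]  # assumption: start on the lowest match
        else:
            prev = keys[-1]
            # Smallest jump wins; ties go to the lower key.
            choice = min(candidates, key=lambda k: (abs(k - prev), k))
        keys.append(choice)
    return keys


def key_frequency(key: int, lowest_hz: float = 110.0) -> float:
    """Equal-tempered frequency of a key, given an assumed lowest key."""
    return lowest_hz * 2 ** (key / 12)


melody = [9, 0, 4]  # A, C, E as pitch classes
for key in unfold_to_keys(melody):
    print(key, round(key_frequency(key), 1))
```

With the lowest key set to pitch class 0, the sequence `[6, 2]` reproduces the example above: the second note could sit 8 keys higher (key 14), but key 2 is only 4 keys lower and still on the keyboard, so the shorter downward jump is chosen.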

I’ve upgraded the app to include an Under the hood selection that, when clicked, opens up a visualization that shows the detailed info for a segment, so you can follow along and see how each segment is mapped onto a note. You can interact with the visualization, stepping through the segments, and auditioning and visualizing them.

That’s the story of the Saddest Stylophone – it was not the hack I thought I was going to make when I got to #wowhack2 – but I was pleased with the result. When The Sad Stylophone plays well, it really can make any song sound sadder and more pathetic. It’s a win. I’m not the only one – wired.co.uk listed it as one of the five best hacks at the hackathon.

Give it a try at Saddest Stylophone.

Cyborg Karaoke Party

Posted in Music on August 12, 2013

Another innovative hack built at the Toronto Music Hack Day is the Cyborg Karaoke Party developed by Cameron Gorrie, George Cheng, Kyle Barnhart, Dmitry Arkhipov and Marc Palermo. This hack combines timestamped lyrics from Lyricfind with Rdio Karaoke tracks and a speech synthesizer to give you automatic robot karaoke. It’s a neat idea. Perfect music to put on for your Roomba before you leave for work for the day.

Maestro

Posted in Music on August 12, 2013

Maestro is a hack built at Music Hack Day Toronto. It allows you to ‘conduct’ your music by waving your iPhone around like a conductor waves their baton. You can speed up and slow down your music at will. Here’s the demo:

[youtube http://www.youtube.com/watch?v=Q8NYaKTJZR0#t=0m46s]

The hack was created by Wen-Hao Lue and Peter Sobot.

FRANKENMASHER 2000

Posted in Music on August 12, 2013

Brian McFee brought the heavy lifting to the Toronto Music Hack Day. His goal – to see what it would sound like if Billie Holiday sang for Black Sabbath or if Kenny G and The Jesus Lizard formed a supergroup. To do this he built the FRANKENMASHER 2000. This program uses some heavy math to separate the vocals from one song and the instrumentation of another to combine them into what he calls a ‘horrible abomination of sonic torture’. Here are some examples:

Brian has a blog post that describes a bit of the math involved and has more examples. If you are into MIR, Brian’s the guy to keep an eye on. He’s doing interesting things.

Remixes on Soundcloud

Posted in Music on August 12, 2013

This cool hack created at the Toronto Music Hack Day by Devin Sevilla from Rdio looks at what you are currently playing in Rdio (using the Rdio ‘now playing’ API) and then finds all the remixes of that song that have been posted to Soundcloud. It is a fantastic idea and works great. I really had no idea how many remixes are posted to Soundcloud. For instance, check out this orchestral version of Skrillex’s First of the Year.

A really cool hack. Check out Remixes on Soundcloud.

The Music Radiator

Posted in Music on August 12, 2013

I didn’t make it to the Toronto Music Hack Day, but I’ve heard great things about the event. One hack, built by Ned Lovely, is getting lots of attention. It is called Music Radiator. It gives you spot-on genre playlists with a very slick user interface. Pick one of hundreds of genres and just let the music flow. If you give a song a ‘thumbs up’ it will be added to your Rdio collection, give it a ‘thumbs down’ and you’ll never hear it again (well, at least never again on The Music Radiator). Ned has a great sense of design, and the music flows well. I may use this app as my primary way to listen to music when on the web. Check it out.