Session Chair: Juan Pablo Bello

SCALABILITY, GENERALITY AND TEMPORAL ASPECTS IN AUTOMATIC RECOGNITION OF PREDOMINANT MUSICAL INSTRUMENTS IN POLYPHONIC MUSIC

By Ferdinand Fuhrmann, Martín Haro, Perfecto Herrera

- Automatic recognition of musical instruments

- Polyphonic music

- Predominant instruments

Research Questions

- scale existing methods to highly polyphonic music

- generalize with respect to the instruments used

- model temporal information for recognition

Goals:

- Unified framework

- Pitched and unpitched …

- (more goals, but I couldn’t keep up)

Neat presentation of a survey of related work, plotted on simple vs. complex.

Ferdinand was going too fast for me (or perhaps jetlag was kicking in), so I include the conclusion from his paper here to summarize the work:

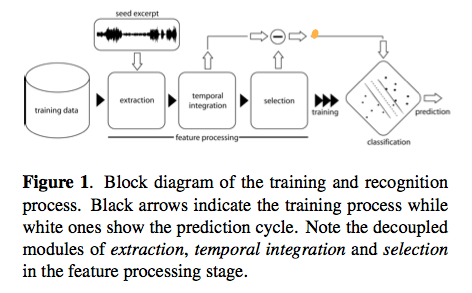

Conclusions: In this paper we addressed three open gaps in automatic recognition of instruments from polyphonic audio. First we showed that by providing extensive, well designed datasets, statistical models are scalable to commercially available polyphonic music. Second, to account for instrument generality, we presented a consistent methodology for the recognition of 11 pitched and 3 percussive instruments in the main western genres classical, jazz and pop/rock. Finally, we examined the importance and modeling accuracy of temporal characteristics in combination with statistical models. Thereby we showed that modelling the temporal behaviour of raw audio features improves recognition performance, even though a detailed modelling is not possible. Results showed an average classification accuracy of 63% and 78% for the pitched and percussive recognition task, respectively. Although no complete system was presented, the developed algorithms could be easily incorporated into a robust recognition tool, able to index unseen data or label query songs according to the instrumentation.

MUSICAL INSTRUMENT RECOGNITION IN POLYPHONIC AUDIO USING SOURCE-FILTER MODEL FOR SOUND SEPARATION

by Toni Heittola, Anssi Klapuri and Tuomas Virtanen

Quick summary: A novel approach to musical instrument recognition in polyphonic audio signals by using a source-filter model and an augmented non-negative matrix factorization algorithm for sound separation. The mixture signal is decomposed into a sum of spectral bases modeled as a product of excitations and filters. The excitations are restricted to harmonic spectra and their fundamental frequencies are estimated in advance using a multipitch estimator, whereas the filters are restricted to have smooth frequency responses by modeling them as a sum of elementary functions on the Mel-frequency scale. The pitch and timbre information are used in organizing individual notes into sound sources. The method is evaluated with polyphonic signals, randomly generated from 19 instrument classes.
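To make the decomposition idea concrete, here is a minimal sketch of plain non-negative matrix factorization with the standard multiplicative updates for Euclidean error. This is not the augmented source-filter algorithm from the paper: there are no harmonic excitations, no smooth Mel-scale filters, and no multipitch estimator. The function name `nmf` and its parameters are my own, chosen for illustration, and the input is assumed to be a non-negative magnitude spectrogram.

```python
import numpy as np

def nmf(V, rank, n_iter=200, eps=1e-9, seed=0):
    """Factor a non-negative matrix V (freq x frames) as W @ H.

    W holds `rank` spectral bases (columns), H holds their gains over time.
    Plain multiplicative updates; illustrative only, not the paper's method.
    """
    rng = np.random.default_rng(seed)
    n_freq, n_frames = V.shape
    # Random non-negative initialisation
    W = rng.random((n_freq, rank)) + eps
    H = rng.random((rank, n_frames)) + eps
    for _ in range(n_iter):
        # Update gains, then bases; eps guards against division by zero
        H *= (W.T @ V) / (W.T @ W @ H + eps)
        W *= (V @ H.T) / (W @ H @ H.T + eps)
    return W, H

# Usage on a synthetic "spectrogram" built from 3 hidden bases
rng = np.random.default_rng(1)
V = rng.random((20, 3)) @ rng.random((3, 30))
W, H = nmf(V, rank=3)
print("relative error:", np.linalg.norm(V - W @ H) / np.linalg.norm(V))
```

In the paper's model, as I understand the summary, each column of W would instead be constrained to be an excitation multiplied by a filter, which is what keeps the number of free parameters manageable.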

The approach separates the mixture into its individual sources. This typically uses non-negative matrix factorization. Problem: each pitch needs its own basis function, leading to many functions. The system overview:

The examples are very interesting: www.cs.tut.fi/~heittolt/ismir09

HARMONICALLY INFORMED MULTI-PITCH TRACKING

by Zhiyao Duan, Jinyu Han and Bryan Pardo

A novel system for multi-pitch tracking, i.e., estimating the pitch trajectory of each monophonic source in a mixture of harmonic sounds. Current systems are not robust: because they use only local time-frequency information, they tend to generate only short pitch trajectories. This system has two stages: multi-pitch estimation and pitch trajectory formation. In the first stage, they model spectral peaks and non-peak regions to estimate the pitches and polyphony in each single frame. In the second stage, pitch trajectories are clustered subject to constraints: global timbre consistency and local time-frequency locality.
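To illustrate why purely local linking produces only short trajectories, here is a toy sketch of the kind of baseline the talk was contrasting against: per-frame pitch estimates are greedily chained to the nearest pitch in the next frame. This is a stand-in I wrote for illustration, not the authors' constrained clustering, and it ignores timbre entirely. The name `link_pitches`, the `max_jump` threshold, and the use of MIDI note numbers are all my assumptions.

```python
def link_pitches(frames, max_jump=0.5):
    """Greedily chain per-frame pitch estimates into trajectories.

    frames: list of lists of pitch estimates (MIDI note numbers), one list per frame.
    Returns a list of trajectories, each a list of (frame_index, pitch) pairs.
    Hypothetical baseline using only local frequency proximity.
    """
    finished = []
    active = []  # trajectories that were extended in the previous frame
    for t, pitches in enumerate(frames):
        unused = list(pitches)
        next_active = []
        for traj in active:
            last = traj[-1][1]
            # Nearest pitch in this frame that no other trajectory has taken
            best = min(unused, key=lambda p: abs(p - last), default=None)
            if best is not None and abs(best - last) <= max_jump:
                traj.append((t, best))
                unused.remove(best)
                next_active.append(traj)
            else:
                # No close continuation: the trajectory ends here
                finished.append(traj)
        # Any pitch left over starts a new trajectory
        next_active.extend([(t, p)] for p in unused)
        active = next_active
    return finished + active

# Usage: one missed detection in frame 2 is enough to end the upper voice
frames = [[60.0, 67.0], [60.1, 67.1], [60.2], [72.0]]
for traj in link_pitches(frames):
    print(traj)
```

A single dropped frame breaks a trajectory here, which is the fragility that a global constraint such as timbre consistency is meant to address.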

Here’s the system overview: